Three Myths About Context for AI Agents (And What Actually Works)

Brandon Waselnuk·April 22, 2026

Brandon Waselnuk·April 22, 2026

Key Takeaways

• Naive RAG, MCP server stacks, and bigger context windows each address a surface-level symptom while missing the reasoning problem underneath

• Chroma's 2025 research found that model intelligence decays past 40% context window utilization, meaning more tokens can actively hurt quality (Chroma, 2025)

• Agents exhibit satisfaction of search: they stop at the first plausible answer, not the best one

• What works is decision-grade context: synthesized, conflict-resolved, permission-aware understanding

Three Myths About Context for AI Agents (And What Actually Works)

Why do AI agents with full codebase access still ship code that gets bounced in review?

The agent can see every file. It has your docs. It even has an MCP server connected to Slack. And yet the PR it opens references a deprecated helper, ignores a migration convention from last quarter, and duplicates an approach your staff engineer already rejected. The code compiles. The tests pass. The reviewer sighs.

This keeps happening because the three most popular "fixes" for agent context are built on myths. Teams pour effort into naive RAG pipelines, stack MCP servers until the dashboard looks impressive, and wait for the next context window expansion to save them. None of these solve the actual problem: agents don't need more data. They need better understanding.

Chroma's 2025 research on context utilization found that model intelligence measurably decays once the context window passes roughly 40% utilization, meaning more tokens actively hurt output quality past a threshold (Chroma, "Context Rot", 2025). That single finding undermines all three myths at once.

This post names the three myths, traces what goes wrong with each, and explains what actually works instead.

For the full picture on how context engineering shapes agent behavior, see our guide to context engineering.

Why do these myths persist?#

These AI agent context myths persist because each one contains a kernel of truth. Anthropic's context engineering documentation emphasizes that the right context, delivered at the right time, is the single largest lever on agent performance (Anthropic, 2025). RAG does retrieve relevant documents. MCP servers do connect agents to data sources. Bigger windows do hold more tokens. The problem is that teams mistake these partial solutions for complete ones.

Anthropic's context engineering documentation emphasizes that properly assembled context is the single largest lever on agent performance (Anthropic, 2025). Each myth below contains a kernel of truth that makes it persistent, but partial solutions compound rather than cancel each other's gaps.

The pattern is familiar from other engineering domains. When something almost works, the instinct is to do more of it: more retrieval, more connectors, more capacity. But the failure mode isn't quantity. It's reasoning. An agent that retrieves ten documents still has to decide which ones conflict, which ones are outdated, and which ones actually apply to the task at hand.

In conversations with engineering managers across mid-market SaaS teams, we've heard the same story repeatedly: "We set up RAG, connected our MCPs, upgraded to the biggest context window available, and the agent still ships code that misses the point." The progression is almost always the same, and so is the disappointment.

Why do teams keep reaching for the same three approaches? Because the alternatives haven't been named clearly. That's what the rest of this post is for.

Learn more about what your agent can't see and why it matters.

Myth 1: "RAG over my docs is enough"#

The first AI agent context myth is that a vector database over your documentation gives agents the context they need. Stanford HAI's 2025 AI Index report found that even state-of-the-art retrieval-augmented systems still hallucinate on 17% to 34% of factual queries in legal and medical benchmarks (Stanford HAI AI Index, 2025). Naive RAG is a search engine with extra steps, not a context engine.

Stanford HAI's 2025 AI Index documented hallucination rates of 17-34% in RAG-augmented systems even with full document access (Stanford HAI AI Index, 2025). Retrieval alone does not guarantee factual grounding, particularly for multi-source engineering tasks.

RAG (Retrieval-Augmented Generation) works by converting documents into vector embeddings, finding the most similar ones to a query, and stuffing them into the prompt. For simple question-answering over a static corpus, this is fine. For a coding agent that needs to understand your organization's conventions, trade-offs, and history, it falls apart fast.

The core problem is that similarity is not relevance. A vector search for "authentication pattern" returns every document that mentions authentication, ranked by cosine distance. It doesn't know which pattern your team actually adopted, which one was rejected after a security incident, or which one applies to the specific service the agent is modifying. The agent gets ten plausible documents and picks the first one that looks right.

For a deeper analysis, see context engine vs RAG.

What actually goes wrong with naive RAG for code?#

Three failure modes show up consistently. First, excessive retrieval: the agent searches broadly, pulls back dozens of chunks, and consumes tokens on context that's tangentially related at best. Chroma's context rot research showed that packing the context window past 40% utilization causes measurable intelligence decay (Chroma, 2025). Naive RAG actively pushes agents past that threshold.

Second, stale embeddings. Codebases change daily. Documentation drifts. The vector store reflects the state of the world when it was last indexed, not the state of the world right now. An agent retrieving a "current" architecture doc that was embedded three weeks ago may be working from an obsolete picture.

Third, no conflict resolution. When two retrieved documents disagree, RAG has no mechanism to decide which one wins. The agent sees both, picks one, and hopes. That's not context. That's a coin flip.

In testing retrieval pipelines against enterprise codebases, we've observed that naive RAG returns at least one outdated or contradictory document in roughly half of cross-repository queries. The failure isn't in the retrieval. It's in the absence of reasoning on top of retrieval.

The JetBrains 2025 Developer Ecosystem Survey found that 76% of developers using AI assistants still manually verify AI output before committing it (JetBrains, 2025). That verification tax exists because retrieval alone doesn't produce answers engineers trust enough to ship.

Myth 2: "If I connect enough MCP servers, I'm done"#

The second myth is that wiring up enough MCP servers solves the context problem. GitHub's 2025 research on AI-assisted development found that AI-generated code now accounts for a rapidly growing share of all code pushed to the platform, yet developer satisfaction with AI output quality hasn't kept pace with adoption (GitHub Blog, 2025). More connections don't automatically produce better understanding.

GitHub's 2025 research documented that AI-generated code is growing as a share of all code pushed to the platform, but developer trust in AI output quality has not scaled proportionally (GitHub Blog, 2025). Access to more tools and data sources is insufficient without a reasoning layer.

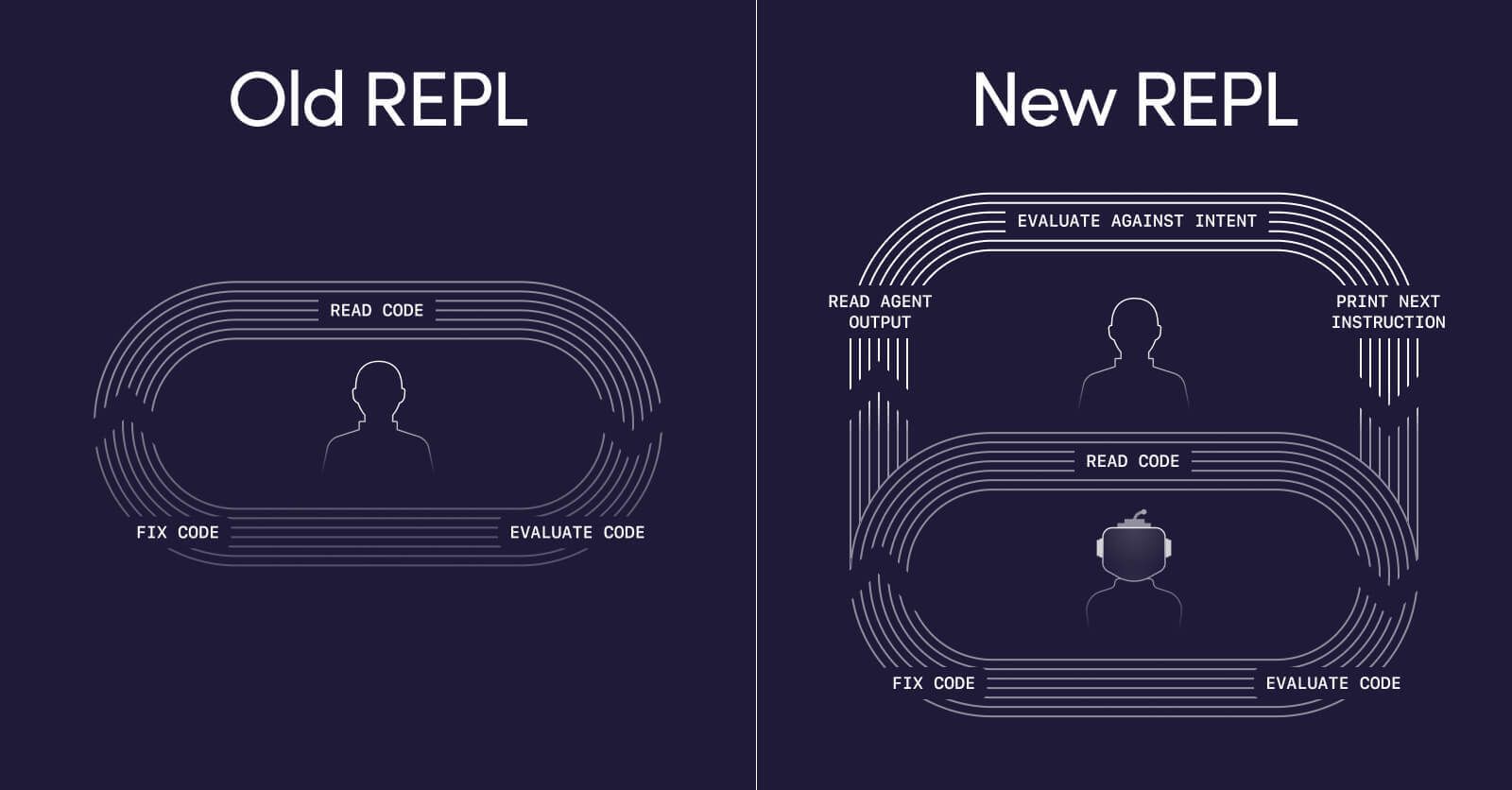

The Model Context Protocol is a genuinely useful standard. It solves the N-times-M integration problem by giving agents a uniform way to talk to data sources: Slack, Jira, Confluence, GitHub, your internal wikis. The spec is well-designed, and the ecosystem is growing fast. But MCP is a transport layer, not a reasoning layer, and the distinction matters enormously.

Here's what happens in practice. A team connects twelve MCP servers. The agent now has access to everything: code, docs, tickets, conversations. When it gets a task, it queries the servers, finds a result, and stops. This is satisfaction of search at the infrastructure level. The agent doesn't cross-reference the Slack thread against the Confluence doc against the actual code. It finds the first plausible answer and acts on it.

For more on this pattern, see why MCP servers alone aren't enough.

Why does more access not equal better understanding?#

Access answers the question "can the agent reach this data?" Understanding answers a harder question: "does the agent know what this data means in the context of the current task?" Those are fundamentally different capabilities, and MCP only provides the first one.

The Stack Overflow 2025 Developer Survey reported that 84% of developers use or plan to use AI tools, yet only 33% trust the accuracy of those tools' output (Stack Overflow Developer Survey, 2025). That trust gap doesn't close by adding more MCP servers. It closes when agents can reason about what they retrieve: resolving conflicts, weighing recency, checking permissions, and understanding organizational norms.

Consider a concrete example. An agent queries the Jira MCP and finds a ticket describing the expected behavior. It queries the Confluence MCP and finds a design doc that partially contradicts the ticket. It queries the Slack MCP and finds a thread where the product manager clarified the intent, but the clarification happened after the design doc was written. Three sources, three slightly different answers. MCP delivered all three faithfully. Now what?

The MCP limitations teams hit aren't protocol bugs. They're category errors. MCP is doing exactly what a transport layer should do. The problem is that teams are asking transport to do the work of reasoning, and no amount of additional connectors changes that. A team with 15 MCP servers and no reasoning layer has 15 sources of potential confusion.

Sam Younger, Engineering Manager at UserTesting, described the shift this way: "Unblocked is the first MCP queried for everything we look up. It's not just checking the code, the code could be wrong. It pulls the Confluence docs, the feature planning documents, the Slack conversations."

Myth 3: "A bigger context window will solve this"#

The third myth is the most seductive: if the context window were just bigger, agents could hold everything they need. Anthropic's 2025 documentation on effective context engineering explicitly cautions against this assumption, noting that more tokens in the window does not equal better agent performance (Anthropic, 2025). Bigger windows are a necessary but insufficient improvement.

Anthropic's 2025 documentation on effective context engineering explicitly cautions against the assumption that larger context windows improve agent performance (Anthropic, 2025). Window size is a constraint to manage, not a problem whose solution is "more."

Context windows have grown dramatically. Models now accept 100K, 200K, even 1M tokens. The temptation is obvious: why curate context at all when you can dump everything in? The answer comes from the research.

What does the research say about context window scaling?#

Chroma's 2025 study on context rot is the most direct evidence. It found that model reasoning quality degrades as the context window fills, with measurable intelligence decay past roughly 40% utilization (Chroma, 2025). A 200K-token window that's 80% full doesn't give you twice the intelligence of one that's 40% full. It gives you less.

This isn't just about volume. It's about signal-to-noise ratio. When you stuff a window with every potentially relevant document, you dilute the high-signal context with low-signal noise. The model has to work harder to find the relevant pieces, and it does so with decreasing reliability as the window fills.

Gartner's 2025 forecast projected that by 2028, 33% of enterprise software applications will include agentic AI (Gartner, 2025). As agent adoption scales, the context window myth will become more expensive. Teams building on the assumption that window size solves context quality will hit the decay curve at enterprise scale.

The DORA 2025 State of DevOps report reinforced this indirectly: AI tool adoption without corresponding investment in quality infrastructure correlates with declining delivery stability (DORA, 2025). Bigger windows without better curation are a version of that pattern. You scale access without scaling understanding.

But here's the question that rarely gets asked: even if context windows grew to 10 million tokens with no decay, would that solve the problem? Not really. An agent with a 10M-token window and no reasoning layer still can't tell you which of three conflicting docs is authoritative. It just holds all three at once, equally weighted, equally unresolved.

McKinsey's 2025 research on AI in software engineering estimated that AI-assisted developer productivity gains range from 20% to 50% on well-scoped tasks, but those gains erode when organizational context is missing or contradictory (McKinsey, 2025). Context windows hold tokens. They don't resolve contradictions.

For more on how to manage context effectively, read stop babysitting your agents.

What actually works instead?#

What works is decision-grade context: context an agent can act on without re-verification, synthesized across sources, conflict-resolved, and permission-enforced. The New Stack's 2025 reporting on enterprise AI adoption found that teams investing in context infrastructure, not just model upgrades, report measurably higher agent reliability and lower rework rates (The New Stack, 2025). The shift is from "give the agent more" to "give the agent better."

The New Stack's 2025 reporting found that teams investing in context infrastructure report measurably higher agent reliability and lower rework rates (The New Stack, 2025). Decision-grade context, synthesized across sources and permission-enforced, is what actually improves agent output.

The difference between retrieval and decision-grade context is the difference between handing someone a stack of printouts and giving them a briefing. A briefing is curated, prioritized, conflict-resolved, and shaped to the task at hand.

From retrieval to reasoning#

Three properties distinguish decision-grade context from raw retrieval. First, conflict resolution: when sources disagree, the system determines which one is authoritative based on recency, author seniority, or explicit override. Second, permission enforcement: the agent only sees context the requesting user is authorized to access. Third, task-shaping: the context is filtered and structured for the specific task, not dumped wholesale.

We've found that teams who shift from "how do we give the agent more context" to "how do we give the agent the right context" see the largest improvement in first-run merge rates. The volume of context often decreases while the quality of agent output increases. Less is genuinely more when the less is conflict-resolved and task-relevant.

What a context engine does above the wire#

A context engine sits between your data sources and your agent. It uses retrieval (including RAG and MCP) as transport layers, but then does the work those layers were never designed to do. It resolves conflicts between a Slack thread and a Confluence doc. It enforces permissions so that an agent used by a contractor doesn't see internal security discussions. It weights recency so that last week's architecture decision outranks last year's RFC.

The result is a context window that's smaller, cleaner, and more useful, exactly what Chroma's research suggests models need for peak reasoning performance.

For a deeper look at the architecture, read context engine vs RAG.

FAQ#

Is RAG useless for AI coding agents?#

No. RAG is a useful retrieval step inside a larger system. Stanford HAI's 2025 AI Index shows retrieval-augmented approaches outperform pure generation on factual tasks (Stanford HAI, 2025). The problem isn't RAG itself. It's treating RAG as the complete solution rather than one component of a reasoning pipeline that also handles conflict resolution and authority weighting.

For a full comparison, see RAG vs context engine.

Should I stop using MCP servers?#

No. MCP is a well-designed transport layer, and connecting your data sources to your agents via MCP is a sound infrastructure decision. The limitation is expecting MCP alone to produce understanding. Think of MCP as plumbing: essential, but the plumbing doesn't cook the meal. A context engine uses MCP as transport, then reasons on top of what MCP delivers.

For more detail, see why MCP servers aren't enough on their own.

Does context window size matter at all?#

It matters, but not the way most teams assume. A window that's too small forces hard tradeoffs about what to include. A window that's too large invites context stuffing, which degrades reasoning quality. Chroma's 2025 research suggests the sweet spot is keeping utilization well below 40% while maximizing signal density (Chroma, 2025). Window size is a constraint to manage, not a problem to eliminate.

What is "satisfaction of search" and why does it matter for agents?#

Satisfaction of search is a cognitive bias where finding one result suppresses the search for additional results. Tuddenham first documented it in radiology in 1962. AI agents exhibit the same behavior: they find the first plausible code path and stop, missing organizational conventions, deprecated patterns, and cross-repo dependencies that a human reviewer would catch.

Read the full satisfaction of search analysis.

From Myths to Mechanisms#

The three AI agent context myths share a common root. They all assume that access equals comprehension.

Chroma's research found intelligence decay past 40% window utilization (Chroma, 2025). Stanford documented hallucination rates of 17% to 34% in RAG systems with full document access (Stanford HAI, 2025). Stack Overflow's survey showed only 33% of developers trust AI output accuracy (Stack Overflow, 2025). The evidence points in one direction: more data, more connections, and more capacity don't fix a reasoning problem.

What works is building a reasoning layer between your data and your agents. A layer that resolves conflicts, enforces permissions, and delivers decision-grade context shaped to each task. Not more tokens. Better tokens.

The next time your agent ships code that compiles but misses the point, don't reach for a bigger window or another MCP server. Ask a different question: does my agent understand my organization, or does it just have access to it?

For a deeper look at what it takes to stop being the manual context engine for your agents, read Stop Babysitting Your Agents.