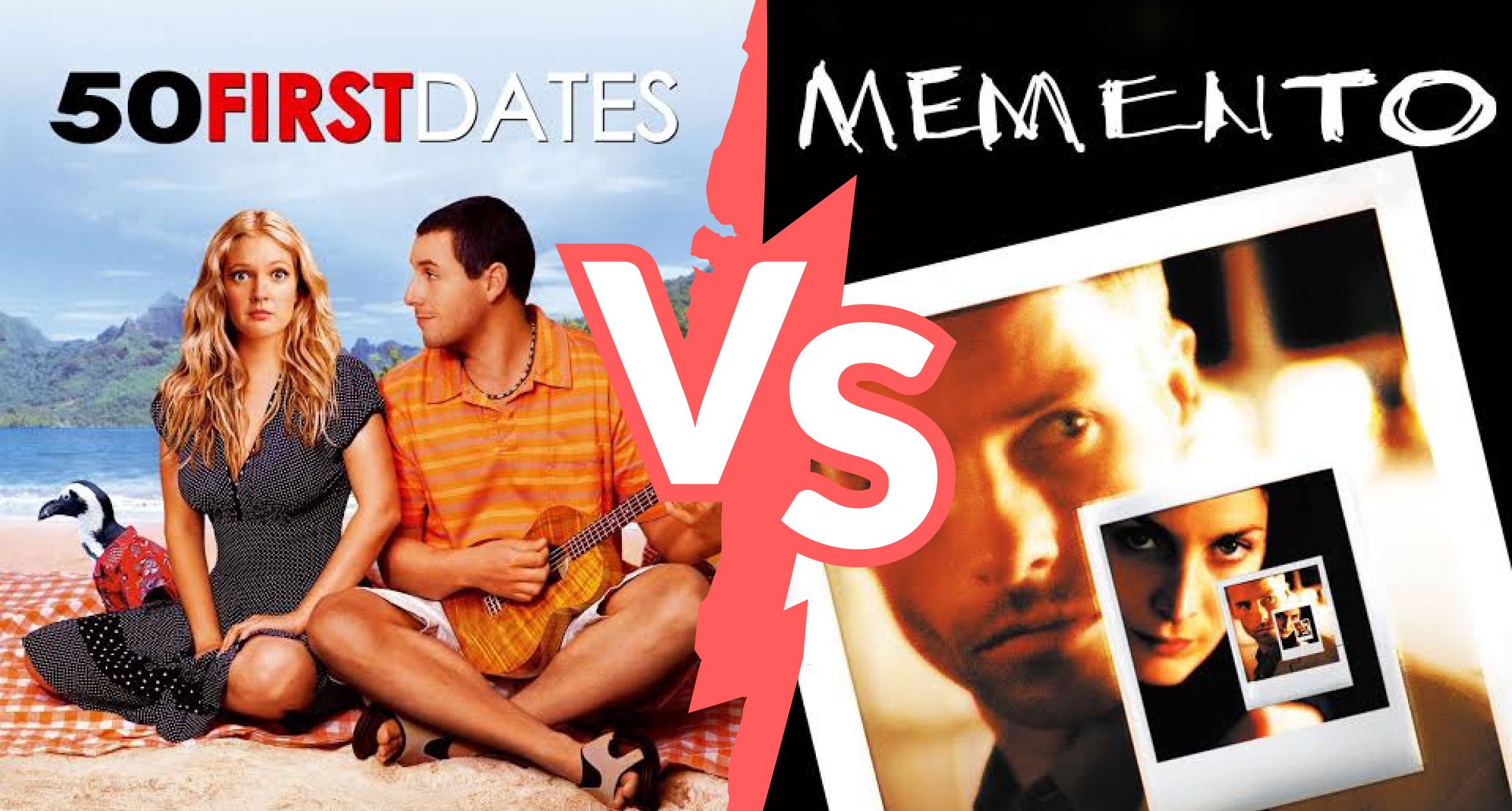

Your Agents Aren't in 50 First Dates. They're in Memento.

Dennis Pilarinos·April 17, 2026

Steve Yegge used “50 First Dates” as an analogy for AI agents: every session starts fresh, so you have to re-establish context just to get useful work done.

That analogy, however, assumes that “the system” around “the agent” can reliably reconstruct what matters.

In 50 First Dates, Drew Barrymore’s character loses her memory every night. Adam Sandler’s character responds by building a system around that constraint: a daily video, a carefully controlled environment, and people who understand the situation and reinforce the same story. Each morning starts from zero, but the reset is managed. The context is curated and consistent enough that she can be brought back up to speed.

That’s the optimistic version of how agents work. They forget, but we can brief them back into usefulness.

What I’ve seen in practice looks much closer to the movie Memento.

Guy Pearce’s character can’t form new memories, so he leaves himself clues: Polaroids, notes, tattoos. Unfortunately, those clues are incomplete and sometimes misleading, and he has no reliable way to tell which ones he can trust. He’s constantly reconstructing reality from fragments, and getting it wrong in ways that compound over time.

That’s much closer to how agents behave inside real systems.

They aren’t waking up to a clean, well-structured briefing. They’re pulling from code, logs, tickets, and Slack threads, each with partial context and missing rationale, and trying to infer how things are supposed to work. The failure mode isn’t just forgetting; it’s confidently stitching together the wrong picture.

That difference matters because it changes how you think about the solution.

If you believe the “50 First Dates” version, you focus on improving the briefing: better prompts, more integrations, more data access. And that does help, to a point.

But if the reality is closer to “Memento,” the problem isn’t that the agent forgot. It’s that the underlying context it’s trying to reconstruct was never assembled in a coherent way to begin with. More data just means more fragments.

The shift is from helping the agent reconstruct context to making sure that context exists in a form that can actually guide it.

That’s where a context engine fits. Not as memory, and not just as retrieval, but as a way to surface the decisions, constraints, and conventions that already exist across an organization so the agent isn’t left guessing.
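To make that distinction concrete, here is a minimal sketch of what "surfacing" could look like, as opposed to handing the agent more raw fragments. Everything in it is an assumption made for illustration: the `ContextRecord` shape, the path-prefix scoping, the `surface` and `brief` helpers, and the sample records are all hypothetical, and none of it describes how any particular context engine is built.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextRecord:
    """One piece of organizational context, stored with its rationale (hypothetical shape)."""
    kind: str       # "decision", "constraint", or "convention"
    scope: str      # path prefix the record applies to (an assumed scoping scheme)
    statement: str  # what the organization settled on
    rationale: str  # why it was settled, the part raw fragments usually lack
    source: str     # where it was established


def surface(records, path):
    """Return the records whose scope covers the path the agent is about to touch."""
    return [r for r in records if path.startswith(r.scope)]


def brief(records, path):
    """Render the surfaced records as a short briefing the agent can read."""
    return "\n".join(
        f"[{r.kind}] {r.statement} (why: {r.rationale}; source: {r.source})"
        for r in surface(records, path)
    )


# Illustrative records only; the contents are made up for this example.
RECORDS = [
    ContextRecord("decision", "billing/",
                  "Retries go through the job queue, not inline loops",
                  "inline retries caused duplicate charges",
                  "hypothetical design review"),
    ContextRecord("convention", "billing/api/",
                  "Handlers return typed errors",
                  "clients branch on error codes",
                  "hypothetical team guideline"),
    ContextRecord("constraint", "search/",
                  "Index rebuilds run off-peak only",
                  "rebuilds saturate the cluster",
                  "hypothetical incident review"),
]

if __name__ == "__main__":
    print(brief(RECORDS, "billing/api/refunds.py"))
```

The point of the sketch is the data shape, not the lookup. Each record carries a statement and the reason behind it, so the agent receives a settled decision instead of a Polaroid it has to interpret.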

Once you see it that way, the goal isn’t to make agents better at starting from zero. It’s to stop putting them in that position in the first place.