The short version:

• You are doing the unscalable work of being the context engine for your AI agents.

• Three common fixes (RAG, MCP stacks, bigger context windows) help at the margins and fail at the reasoning layer.

• Agents fail through "satisfaction of search", stopping at the first plausible answer.

• Mergeable AI code on the first run requires decision-grade context, not more tokens.

• In the Stack Overflow Developer Survey 2025, only 33% of developers trust AI tool accuracy (Stack Overflow, 2025). That is the gap to close.

It's 11:07 pm. You're re-prompting Claude Code for the fourth time because it keeps overwriting the same schema migration you already told it to leave alone. You paste the ticket again. You paste the Slack thread where staging broke last quarter. The agent nods, edits the file, and introduces a variant of the same mistake with a different function name.

You close the laptop for a minute and realize the pattern: you are the context engine. Not the agent. You.

That's the job most of us are actually doing when we "use AI to code." We curate. We paste. We re-prompt. We translate the organization into tokens the model can chew on. And if you want to stop babysitting AI agents, the fix isn't a smarter prompt or a bigger model. It's admitting that the missing piece is institutional context for coding agents, and that humans cannot scale as the delivery mechanism for it.

This is a pillar post about why that pattern breaks, which common fixes don't work, and what decision-grade context actually looks like when you want mergeable AI code on the first run.

For a deeper look at feeding agents the right tokens, read our context engineering guide.

Why are you spending all day fixing your AI's code?#

In the Stack Overflow Developer Survey 2025, 84% of developers said they use or plan to use AI tools, yet only 33% said they trust the accuracy of those tools (Stack Overflow Developer Survey, 2025). That trust gap is the babysitting gap. Engineers keep supervising because the output is not reliably mergeable without human rework.

Because you are the context engine. To stop babysitting AI agents, you first have to name the work you're doing for them. Every session spawns an agent that knows code but not your organization: your patterns, your trade-offs, the deprecated function still alive for a reason. You feed it context by hand. With one agent, that's annoying. With three agents running in parallel on background tasks, it collapses.

In our conversations with staff engineers across mid-market SaaS teams, the same phrase keeps surfacing: "I spend more time prepping the agent than reviewing its output." Review burden scales worse than linearly once a single reviewer oversees multiple parallel agents, because each PR arrives with its own context gaps to reconstruct.

The bottleneck isn't the model, it's you#

The model is fast. You are not. When the agent stops to ask "which auth pattern should I follow?", the clock starts on human latency: your Slack, your memory, your willingness to dig into a Notion doc you half-remember writing. That is the bottleneck. Bigger models make the asking faster, not the answering. McKinsey's 2025 research on AI in software engineering found that developer productivity gains from AI tools average 20% to 50% on well-scoped tasks, but those gains erode sharply when organizational context is missing (McKinsey Technology Trends Outlook 2025, 2025).

Why one agent feels fine and three agents break you#

With a single agent, babysitting feels like pair programming with a fast but forgetful junior. You tolerate it. The real breakage shows up the moment you try to run two or three agents in parallel on background tasks. Each one needs its own prompt prep. Each one interrupts with clarifying questions. You context-switch between them and lose the thread of your own work.

We've watched teams try to "just run more agents" to get more throughput and end up with less output than a single engineer working unassisted, because the reviewer becomes a serial bottleneck on parallel garbage. The math of parallelism requires agent autonomy. Without it, more agents means more queue depth on the human.
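The queueing argument can be made concrete with a toy model. This is a minimal sketch, not a measurement: the function name, the single-reviewer assumption, and every number passed in are hypothetical.

```python
def reviewer_backlog_hours(
    agents: int,
    prs_per_agent_per_day: int,
    review_hours_per_pr: float,
    reviewer_hours_per_day: float,
) -> float:
    """Hours of review work left over after one day with a single reviewer."""
    demand = agents * prs_per_agent_per_day * review_hours_per_pr
    return max(0.0, demand - reviewer_hours_per_day)


# Illustrative numbers only: 4 PRs per agent per day, 1 hour of review and
# rework per PR, 6 reviewer hours available per day.
for agents in (1, 3):
    backlog = reviewer_backlog_hours(agents, 4, 1.0, 6.0)
    print(f"{agents} agent(s): {backlog} hours of backlog per day")
```

Under these made-up inputs, one agent leaves no backlog and three agents leave six hours of unreviewed work every day. With reviewer hours fixed, the input that has to shrink is `review_hours_per_pr`, which is where agent autonomy comes in.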

Related: what your coding agent cannot see about your organization.

What does "babysitting" actually mean?#

GitHub's 2025 research reported that AI-generated code now accounts for a growing share of all code pushed to the platform, with Copilot-assisted developers completing tasks measurably faster in controlled studies (GitHub Blog, 2025). More agent-generated code means more supervision surface. Babysitting is the tax that scales with that surface.

Babysitting names a specific set of behaviors, and if you want to stop babysitting AI agents in practice, you have to see each one clearly. Pre-loading comes first: you curate the prompt with docs, tickets, and schemas before the agent even starts. Supervising is next: you watch the agent work and course-correct mid-task. Rework-reviewing is the tail: you spend review cycles not on code quality but on "the agent didn't know X, let me fix it."

If you're doing any of the three, the agent isn't autonomous. It's a fast intern with amnesia.

Pre-loading: the prep tax#

Pre-loading looks productive. You grab the Jira ticket, paste the Slack thread, screenshot the Figma, maybe drop in the schema. It takes 10 minutes. Multiply that across 20 tasks a week and you've spent more than three hours, close to half a working day, as a human ETL pipeline. That time doesn't show up in any sprint metric, which is part of why it persists. And the prep tax will only grow as agent adoption scales: Gartner projects that by 2028, 33% of enterprise software applications will include agentic AI, and that by 2029 agentic AI will autonomously resolve 80% of common customer service issues without human intervention (Gartner, 2025).
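The prep-tax arithmetic is easy to rerun with your own numbers. A minimal sketch, using the 10 minutes and 20 tasks from the text as defaults; the 8-hour day is an added assumption.

```python
def weekly_prep_hours(minutes_per_task: int = 10, tasks_per_week: int = 20) -> float:
    """Hours per week spent pre-loading context by hand."""
    return minutes_per_task * tasks_per_week / 60


hours = weekly_prep_hours()
print(f"{hours:.1f} hours per week, {hours / 8:.0%} of an 8-hour day")
```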

Supervising: the interrupt tax#

Supervising is the subtler cost. You read every line the agent writes in case it goes off the rails. Your focus breaks every 90 seconds. The DORA 2025 State of DevOps report found that AI tool adoption without corresponding investment in quality signals and developer experience can reduce delivery stability, because the supervision overhead cancels the speedup (DORA, 2025).

Rework-reviewing: the repair tax#

Rework-reviewing is when the PR compiles, the tests pass, and the code still misses the point. You're not reviewing quality. You're retroactively installing context.

The signal that you're in rework-review mode is the comment "this doesn't match how we do X." That sentence is a context miss, not a code quality issue, and no amount of stricter linting will catch it. The pattern lives in convention, not syntax.

The invisible cost on your calendar#

None of these three taxes show up cleanly in sprint metrics. Pre-loading looks like "prep time." Supervising looks like "focus work." Rework-reviewing looks like "code review." The work is real, measurable, and currently invisible to the people making tooling decisions. That's why teams keep buying faster models to solve what is fundamentally a context synthesis problem.

Why do agents keep making the same mistake?#

Anthropic's engineering team documented that effective context engineering requires curating the minimum high-signal tokens for the task, and that model behavior degrades as irrelevant context accumulates (Anthropic: Effective Context Engineering, 2025). Agents repeat mistakes because the context that would prevent them isn't reliably in the window.

They start from zero on every task. That's the root cause behind every plea to stop babysitting AI agents: the agent has no memory of your org. A new engineer eventually internalizes patterns through code review, team chat, and osmosis. Agents don't osmose. They inherit whatever you paste into the prompt, and nothing else. Stanford HAI's 2025 AI Index reported that while AI code generation tools have improved substantially on benchmarks, they still frequently violate project-specific conventions and architectural patterns not captured in test suites (Stanford HAI AI Index 2025, 2025). The tests pass, but the code still doesn't fit.

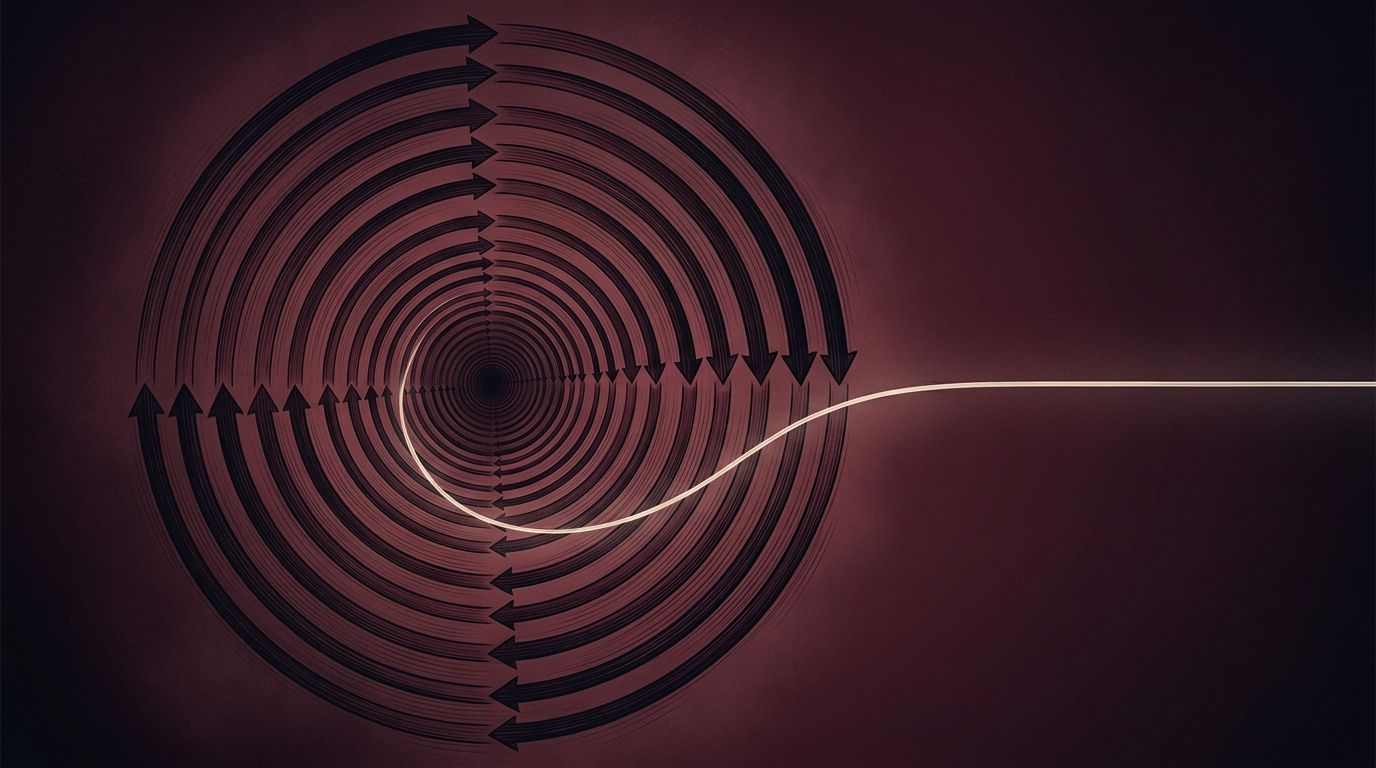

This is the AI agent doom loop: same mistake, different variation, every session. The pattern that would prevent it lives in a Slack thread from six months ago, a Linear comment, or the unwritten "we don't do that anymore" convention the senior engineers hold in their heads.

Why does my AI agent keep making mistakes? Because the agent starts every task without your team's conventions, past decisions, or architectural context. It generates plausible code that passes tests but violates organizational patterns. The fix is feeding synthesized, decision-grade context into the prompt before the agent writes a single line.

Why "just add it to CLAUDE.md" doesn't end the loop#

A rules file captures the patterns you remember to write down. It cannot capture conflicts, permissions, or the reasoning behind a decision. It's a snapshot that goes stale the moment a PR merges. Teams that stop at CLAUDE.md eventually build a second doom loop: the rules file itself becomes a source of outdated guidance that agents follow confidently into the wrong answer.

The deeper issue is that rules are static and organizations are not. Your auth pattern shifted when you migrated off the legacy provider. Your "don't touch this table" convention was overturned in a Slack decision three sprints ago. A rules file doesn't know. A real context engine does, because it reads from the sources that change when decisions change.

The shape of a mistake that keeps repeating#

Watch an agent do the same kind of task twice on two different tickets. It will make the same class of error in both, with different symptoms. That repetition is diagnostic. It tells you which context is missing and where it lives in your organization. The mistake pattern is a map of your context debt.

See also: why a context layer is not a context engine.

Why don't RAG, MCPs, or bigger context windows fix this?#

Chroma's "Context Rot" research found that model performance on retrieval and reasoning tasks degrades substantially as context length grows, with notable drops past roughly 40% of the available window on many frontier models (Chroma Research: Context Rot, 2025). More tokens is not more intelligence.

Three common fixes are usually offered, each with its own failure mode. Each one helps at the margins. None of them, on its own, lets you stop babysitting AI agents in a sustained way, because none of them solves the reasoning problem.

Myth 1: RAG over your docs will do it#

Retrieval-Augmented Generation returns plausibly relevant documents. It cannot resolve conflicts between them. When your Notion says one thing, your ADR says another, and the code does a third thing, RAG hands the agent all three and hopes for the best. The agent picks one, usually the first, and ships it. This is satisfaction of search: the agent stops hunting the moment it finds a plausible answer, whether or not the answer is correct.
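A small sketch of the difference between stopping at the first hit and noticing that the hits disagree. The `Retrieved` type, both function names, and the sample claims are hypothetical, and the sketch assumes each document has already been reduced to a normalized claim, which is the hard part in practice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Retrieved:
    source: str  # where the document came from, e.g. "notion"
    claim: str   # the answer this document gives to the question


def first_plausible(results: list[Retrieved]) -> str:
    """Satisfaction of search: take the top hit and stop looking."""
    return results[0].claim


def distinct_claims(results: list[Retrieved]) -> dict[str, list[str]]:
    """Group sources by the claim they make so disagreement becomes visible."""
    by_claim: dict[str, list[str]] = {}
    for result in results:
        by_claim.setdefault(result.claim, []).append(result.source)
    return by_claim


results = [
    Retrieved("notion", "session cookies"),
    Retrieved("adr", "JWT with refresh tokens"),
    Retrieved("code", "OAuth via gateway"),
]

print(first_plausible(results))
print(len(distinct_claims(results)), "competing answers")
```

More than one key in `distinct_claims` means something has to arbitrate before the agent sees any of it. Retrieval alone never reaches that step.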

Myth 2: Stack more MCP servers#

MCP servers grant access. Access is not understanding. Hooking up Jira, Confluence, Slack, Linear, and GitHub to your agent gives it more places to look, not better decisions about what to trust. A Slack message from 2023 and a Confluence page from last week are not equally authoritative, and raw MCP access doesn't know the difference.
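One way to picture "not equally authoritative" is a score that combines source type with age. This is a sketch under loud assumptions: the weights, the 180-day half-life, and the `trust_score` function are invented for illustration and are not part of MCP or any product.

```python
from datetime import date

# Hypothetical authority weights per source type; a real team would tune these.
AUTHORITY = {"code": 1.0, "adr": 0.9, "confluence": 0.6, "slack": 0.3}


def trust_score(source: str, last_updated: date, today: date, half_life_days: int = 180) -> float:
    """Authority weight, halved for every `half_life_days` of age."""
    age_days = (today - last_updated).days
    freshness = 0.5 ** (age_days / half_life_days)
    return AUTHORITY.get(source, 0.1) * freshness


today = date(2025, 6, 1)
old_slack = trust_score("slack", date(2023, 6, 1), today)
fresh_confluence = trust_score("confluence", date(2025, 5, 25), today)
print(fresh_confluence > old_slack)
```

A fixed formula like this is still only ranking. It cannot know that a decision was overturned last sprint, which is why the text treats arbitration as reasoning work.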

Myth 3: A 1M-token context window#

Bigger windows mean more data, not more coherence. The Chroma research is unambiguous: past roughly 40% context fill, most frontier models show measurable degradation in retrieval accuracy and reasoning (Chroma Research: Context Rot, 2025). Stuffing the window is how you get confidently wrong output at scale.
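If you take the rough 40% figure at face value, it turns into a budget rather than a target. A minimal sketch, assuming snippets arrive ranked best-first with a token count attached; the threshold default and both function names are illustrative.

```python
def max_context_tokens(window_tokens: int, max_fill_percent: int = 40) -> int:
    """Token budget for context, as an integer share of the window."""
    return window_tokens * max_fill_percent // 100


def trim_to_budget(ranked_snippets: list[tuple[str, int]], window_tokens: int) -> list[str]:
    """Keep best-first snippets, stopping at the first one that would exceed the budget."""
    budget = max_context_tokens(window_tokens)
    kept: list[str] = []
    used = 0
    for text, tokens in ranked_snippets:
        if used + tokens > budget:
            break
        kept.append(text)
        used += tokens
    return kept


print(max_context_tokens(1_000_000))
print(trim_to_budget([("auth ADR", 300), ("old thread", 200), ("schema", 50)], 1000))
```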

For a detailed breakdown, see context engine vs RAG and when retrieval alone fails.

What's actually missing?#

In our customer conversations, engineers using agents without synthesized organizational context estimate they are 20 to 30 percent less productive than when that context is present (Unblocked customer interviews, 2026). The gap isn't tokens. It's synthesis.

Decision-grade context. The phrase does heavier lifting than it sounds: context that is synthesized across sources, conflict-resolved at the reasoning layer, and permission-aware before the agent ever sees it. It is the difference between "here are 17 Notion pages that mention auth" and "here's how auth actually works in this codebase right now, and here's what changed last quarter."

Tushar Kawsar, a Software Engineer at UserTesting, put it plainly:

"My workflow is: here's the Jira ticket, here's the Confluence doc, here are the Slack threads, now build me a plan. Unblocked pulls all of that together so the agent starts with the full picture. Without it, I'd estimate I'm 20 to 30 percent less productive."

That 20 to 30 percent is the babysitting tax made visible. No model upgrade closes it, because the gap lives in synthesis work that happens before the prompt. The agent doesn't need a bigger brain. It needs a team that has already done the archaeology.

From retrieval to reasoning#

Retrieval finds documents. Reasoning decides which one to trust, how to reconcile them, and what to hand the agent. That's the layer most teams still do by hand. The New Stack's 2025 coverage of AI-assisted development documents how leading engineering organizations combine multiple retrieval signals with learned ranking to surface authoritative answers from large, noisy corpora (The New Stack, 2025). The same principle applies here: synthesis beats raw retrieval. When a real context engine synthesizes across every system your team uses, agents start with the full picture, not just the code.

What "decision-grade" means in practice#

Decision-grade context has three properties. It's current, meaning it reflects the state of the world at task time, not at indexing time. It's reconciled, meaning conflicting sources have been arbitrated, not concatenated. And it's scoped, meaning the agent gets what it needs for this task rather than everything anyone ever wrote about the topic. Strip any one of those and the agent drifts back into satisfaction of search.
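The three properties can be read as a checklist on whatever bundle the agent receives. A minimal sketch: `ContextBundle`, its fields, and the 15-minute freshness threshold are assumptions made up for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class ContextBundle:
    fetched_at: datetime                                   # when the sources were last read
    unresolved_conflicts: list[str] = field(default_factory=list)
    off_task_items: int = 0                                # items unrelated to this task


def missing_properties(
    bundle: ContextBundle,
    task_started_at: datetime,
    max_age: timedelta = timedelta(minutes=15),
) -> list[str]:
    """Return which of the three properties the bundle fails, if any."""
    missing = []
    if task_started_at - bundle.fetched_at > max_age:
        missing.append("current")
    if bundle.unresolved_conflicts:
        missing.append("reconciled")
    if bundle.off_task_items > 0:
        missing.append("scoped")
    return missing


now = datetime(2025, 1, 1, 12, 0)
fresh = ContextBundle(fetched_at=now - timedelta(minutes=5))
stale = ContextBundle(
    fetched_at=now - timedelta(days=30),
    unresolved_conflicts=["auth"],
    off_task_items=3,
)
print(missing_properties(fresh, now))
print(missing_properties(stale, now))
```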

See decision-grade context in action inside a real engineering workflow.

What does a real context engine do for agents?#

Anthropic's engineering write-up on context engineering frames the job as "finding the smallest possible set of high-signal tokens that maximize the likelihood of some desired outcome" (Anthropic, 2025). That is synthesis work, not retrieval work, and it is what a real context engine automates.

Four operations agents can't do for themselves.

- Ingest across code and organizational sources. Repos, Jira, Confluence, Slack, Notion, incident history, ADRs. All of it, not just the code.

- Resolve conflicts between them. When docs disagree with code, something has to arbitrate. An engine that reasons, not just retrieves, can flag the conflict instead of picking a side at random.

- Enforce permissions. Not every engineer should see every channel. Neither should every agent. Permissions are part of the context, not a wrapper around it.

- Deliver context shaped to the task. The same question from a security engineer and a junior backend dev needs different framing. Task-shaping is the last mile.

The result is an agent that can act on the first run instead of asking for clarification or shipping a confidently wrong PR.
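The four operations above can be sketched as a tiny in-memory pipeline. Everything here is hypothetical: the `Fact` type, the sample data, and especially the "newest claim wins" rule, which is a crude stand-in for real arbitration. It is not how any particular product works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    topic: str
    claim: str
    source: str
    updated: str           # ISO date, so plain string comparison orders by time
    visible_to: frozenset  # roles allowed to see this fact


def ingest(*sources):
    """1. Ingest: flatten facts from every connected source into one list."""
    return [fact for source in sources for fact in source]


def resolve(facts):
    """2. Resolve: keep the newest claim per topic and list topics that disagreed."""
    winners, claims = {}, {}
    for fact in facts:
        claims.setdefault(fact.topic, set()).add(fact.claim)
        if fact.topic not in winners or fact.updated > winners[fact.topic].updated:
            winners[fact.topic] = fact
    conflicts = sorted(topic for topic, seen in claims.items() if len(seen) > 1)
    return winners, conflicts


def permit(winners, role):
    """3. Enforce permissions: drop anything this role may not see."""
    return {topic: fact for topic, fact in winners.items() if role in fact.visible_to}


def shape(winners, task_topics):
    """4. Shape: return only the topics this task needs, with provenance."""
    return [
        f"{fact.topic}: {fact.claim} (per {fact.source}, {fact.updated})"
        for topic, fact in sorted(winners.items())
        if topic in task_topics
    ]


everyone = frozenset({"backend", "security"})
code = [Fact("auth", "OAuth via gateway", "code", "2025-03-01", everyone)]
docs = [
    Fact("auth", "session cookies", "notion", "2023-06-10", everyone),
    Fact("pentest", "findings under embargo", "slack", "2025-04-02", frozenset({"security"})),
]

winners, conflicts = resolve(ingest(code, docs))
context = shape(permit(winners, "backend"), {"auth", "pentest"})
print(conflicts)
print(context)
```

For the `backend` role, the run flags `auth` as a conflicted topic, hands over the newer claim with its provenance, and withholds the `pentest` item entirely. The steps follow the order of the list, which has a side effect: if the newest claim on a topic is not visible to a role, that role gets nothing for the topic. A stricter design might filter by permission before resolving.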

Why this is an engine, not a layer#

A layer sits between the agent and the data. An engine does work on that data: joining, reconciling, filtering, and shaping it before the agent ever sees it. The distinction matters because most "context" products are layers. They pipe sources in and hope the model figures it out. An engine does the figuring out first, so the model's reasoning budget goes to the task, not to data triage.

Learn more: how a context engine actually works and why it matters now.

Frequently asked questions#

Forrester's 2025 research on AI-augmented software delivery found that engineering teams with structured knowledge management practices ship higher-quality AI-assisted code, because context depth determines output quality more than model capability (Forrester, 2025). Surface-level answers from agents fail for the same reason: they lack the depth of organizational experience.

Can't I just write better prompts?#

Better prompts help. But if the agent doesn't have the context to follow the prompt correctly, better prompts produce confidently wrong output faster. Prompt engineering is a tactic. Context engineering is the system underneath it. The Stack Overflow Developer Survey 2025 found only 33% of developers trust AI tool accuracy (Stack Overflow, 2025), and that number doesn't move with prompt tweaks.

Will bigger models solve this?#

No. Bigger models reason better over the context they're given. They do not manufacture context that isn't there. A frontier model with zero organizational context will still miss your auth pattern, because the pattern isn't in its training data. Chroma's context rot research shows reasoning quality actually drops as you stuff more raw tokens in (Chroma, 2025).

Is this just a problem for legacy codebases?#

It's worst in legacy codebases because more context is implicit. It applies everywhere humans carry knowledge that isn't in the code. Two-year-old startups have the same problem at smaller scale: three Slack channels and a Notion page hold the "why" behind half the code.

What about parallel agents, does the same advice apply?#

Yes, and the stakes go up. Babysitting one agent is annoying. Babysitting five simultaneously is impossible. Autonomy becomes a prerequisite, not a nice-to-have. The review-per-PR cost doesn't drop just because you spawned more agents.

Where should I start?#

Pick one concrete failure mode. A task your agent repeatedly gets wrong. Figure out what context would have prevented it, then put that context behind a reasoning layer, not just a retrieval layer. Build outward from the first fix.

Ready to go deeper? Read what is a context engine and how it changes agent workflows.

How do you move from babysitting to autonomy?#

Industry coverage of AI coding tooling through 2025, including Gergely Orosz's ongoing reporting at The Pragmatic Engineer, consistently points to context infrastructure as the differentiator between teams getting real traction and teams stuck on model upgrades (The Pragmatic Engineer, 2025). Autonomy is earned through context, not bought through licenses.

How to make AI agents autonomous: Audit your last 20 agent-assisted PRs for context misses, trace each failure to the source that holds the preventing knowledge, then put that knowledge behind a reasoning layer that synthesizes across sources at task time. Autonomy is built through context infrastructure, not prompt tricks.

Three steps, in order. This is the practical sequence we recommend to teams that want to stop babysitting AI agents without ripping out their existing stack.

Step 1: Audit where your agents actually fail#

Pattern-match the failures, not the capabilities. Pull your last 20 agent-assisted PRs. Note every comment that says "the agent didn't know X" or "this breaks our convention on Y." That list is your context debt.
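A first pass at the audit can be a script over exported review comments. This is a sketch, assuming you already have the comments as plain strings keyed by PR; the phrase list is a starting point and the PR keys are placeholders.

```python
import re
from collections import Counter

# Phrases that tend to signal a context miss rather than a code quality issue.
CONTEXT_MISS = re.compile(
    r"didn't know|did not know|doesn't match how we|breaks our convention|we don't do that anymore",
    re.IGNORECASE,
)


def context_debt(comments_by_pr: dict[str, list[str]]) -> Counter:
    """Count context-miss comments per PR, skipping PRs with none."""
    debt: Counter = Counter()
    for pr, comments in comments_by_pr.items():
        hits = sum(1 for comment in comments if CONTEXT_MISS.search(comment))
        if hits:
            debt[pr] = hits
    return debt


comments = {
    "pr-a": ["The agent didn't know we migrated auth providers.", "nit: rename this variable"],
    "pr-b": ["LGTM"],
    "pr-c": ["This breaks our convention on error handling.", "This doesn't match how we do retries."],
}
print(context_debt(comments).most_common())
```

Keyword matching will miss paraphrases, so treat the counts as a shortlist for manual reading, not a final tally.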

Step 2: Trace what context would have prevented each failure#

For each failure, name the source that holds the preventing context. A Slack thread? An ADR? A prior PR? If the context exists but wasn't in the window, the problem is delivery. If it doesn't exist anywhere, the problem is that the knowledge is trapped in someone's head and needs to be externalized.
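The triage rule in this step fits in a few lines. A minimal sketch with hypothetical names; the third outcome, where the context was present and the agent still got it wrong, is an added assumption that the text does not spell out.

```python
from typing import Optional


def diagnose(source: Optional[str], was_in_window: bool) -> str:
    """Classify a failure by where the preventing context was."""
    if source is None:
        return "externalize"  # the knowledge only lives in someone's head
    if not was_in_window:
        return "delivery"     # it exists somewhere but never reached the agent
    return "reasoning"        # assumption: it was present and was ignored or misread


print(diagnose("slack thread", was_in_window=False))
print(diagnose(None, was_in_window=False))
```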

Step 3: Put that context behind a reasoning layer#

Most teams stop at step 2 and shove everything into CLAUDE.md. That works for a week. Then the file grows, conflicts with itself, and becomes its own source of hallucinated guidance.

Arthur Rodolfo, a Software Engineer at Clio, described the pattern that actually works:

"I built a step called 'enrich' that runs before any code gets written. The agent asks Unblocked for everything, the ticket, the Slack context, what's been done in related repos, and then it starts implementing. It's especially powerful for cross-repository work where you'd otherwise have to do all that archaeology yourself."

The enrich step is the reasoning layer made concrete. It's the agent pulling decision-grade context at task time, not a human preloading a prompt. That's the handoff from babysitting to autonomy.
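To make the shape of an enrich step concrete, here is a hedged sketch. It is not the implementation described in the quote and it does not use the Unblocked API: `enrich`, `run_task`, the fetcher callables, and the ticket ID are all hypothetical stand-ins.

```python
from typing import Callable

Fetcher = Callable[[str], list[str]]
Implementer = Callable[[str, dict[str, list[str]]], str]


def enrich(ticket_id: str, fetchers: dict[str, Fetcher]) -> dict[str, list[str]]:
    """Gather context for a ticket from every configured source."""
    return {name: fetch(ticket_id) for name, fetch in fetchers.items()}


def run_task(ticket_id: str, fetchers: dict[str, Fetcher], implement: Implementer) -> str:
    """Always enrich first, then hand the ticket and its context to the implementer."""
    context = enrich(ticket_id, fetchers)
    return implement(ticket_id, context)


# Stub fetchers and a stub implementer standing in for real integrations.
fetchers = {
    "ticket": lambda tid: [f"description of {tid}"],
    "chat": lambda tid: [f"thread mentioning {tid}"],
}
plan = run_task(
    "TICKET-1",
    fetchers,
    lambda tid, ctx: f"plan for {tid} using {sorted(ctx)}",
)
print(plan)
```

The design point is the ordering inside `run_task`: the implementer never runs without context, and no human has to remember to paste it.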

For the full framework, see our context engineering guide for engineering teams.

How do you measure whether it's working?#

The DORA 2025 State of DevOps report emphasized that AI tool adoption without corresponding investment in internal quality signals can reduce delivery stability, and recommended teams measure throughput and rework explicitly (DORA, 2025). The measurement discipline matters as much as the tool choice.

How to get mergeable code from AI agents on the first run: Provide the agent with current, conflict-resolved, permission-aware context shaped to the specific task. Teams that do this report review cycles dropping from 3-5 round-trips to 1-2, with median task time falling from hours to minutes (Unblocked, 2026).

Three metrics. All decrease when context quality improves.

| Metric | Babysitting baseline | With decision-grade context |
| --- | --- | --- |
| Time-to-mergeable-code per agent PR | Hours, with rework | Minutes, first-run merge-ready |
| Review cycles per task | 3 to 5 round-trips | 1 to 2 |
| Tokens consumed per completed task | High, wasteful refills | Lower, task-shaped |

Across teams we've observed adopting a synthesized context layer, the median time for a class of cross-repo tasks dropped from roughly 2.5 hours to 25 minutes, while review cycles compressed from an average of 3 to 1 (Unblocked customer observations, 2026). The drop is not the model. The drop is the agent starting with the full picture.

What not to measure#

Don't measure lines of code shipped by agents. It's the wrong proxy. An agent that ships twice the lines with the same rework rate is making your reviewers' lives worse, not better. Measure mergeable output, not output.

Also be careful with raw "agent usage" numbers. High usage can mean agents are genuinely doing work, or it can mean engineers are retrying the same failed task five times. The trend you want is usage going up while review cycles go down. Those two curves moving in opposite directions is the real signal that the babysitting era is ending on your team.
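The "two curves" check is simple to automate once you log both series. A minimal sketch, assuming weekly agent-task counts and weekly median review cycles are already collected; the function names and sample numbers are illustrative, and comparing only the first and last week is a deliberate simplification.

```python
from statistics import median


def median_review_cycles(cycles_per_pr: list[int]) -> float:
    """Median number of review round-trips across a set of PRs."""
    return median(cycles_per_pr)


def babysitting_is_ending(weekly_usage: list[int], weekly_median_cycles: list[float]) -> bool:
    """True when usage rose and review cycles fell between the first and last week."""
    usage_up = weekly_usage[-1] > weekly_usage[0]
    cycles_down = weekly_median_cycles[-1] < weekly_median_cycles[0]
    return usage_up and cycles_down


print(median_review_cycles([3, 4, 5, 3, 3]))
print(babysitting_is_ending([40, 55, 70], [3, 2, 1]))
print(babysitting_is_ending([40, 55, 70], [3, 3, 4]))
```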

A note on parallel and background agents#

The metrics above get harder to game once you move to parallel or background agents. A reviewer can manually absorb a little rework on one agent's PR. They cannot absorb it on five PRs landing at once. This is why teams that get serious about autonomy tend to front-load their investment in context quality. The parallel workload exposes the weakness of the human-as-context-engine model faster than any benchmark.


From Babysitting to Autonomy#

The 2025 State of DevOps (DORA) research program reinforced that teams investing in internal developer platforms and knowledge infrastructure consistently outperform teams focused solely on tool adoption (DORA, 2025). Context infrastructure is the version of that investment for the agent era.

The babysitting pattern reflects an architectural gap, not a personal failing. We gave agents access to tools and called it a day, then acted surprised when they kept asking us to be their memory.

The path out is straightforward to describe and non-trivial to execute. Stop trying to be the context engine yourself. The next model release will not fix a problem that does not live in the model. Treat organizational context, the why behind the code, as a first-class input the agent fetches at task time from a system that reasons across sources, instead of a rules file that rots.

If you want to stop babysitting AI agents and get mergeable AI code on the first run, invest in the layer that synthesizes across every system your team uses, so agents start with the full picture, not just the code. That is what a real context engine does, and it is why teams like Clio, UserTesting, and Tradeshift built their agent workflows on Unblocked as the institutional context for coding agents.

Pick one failure mode this week. Trace the missing context. Put it behind a layer that reasons. Watch the review cycles compress.

That's the handoff. That's autonomy.
