Bottom line: Context engineering is distinct from prompt engineering and from RAG. It focuses on sourcing, retrieving, and ranking institutional knowledge so that coding agents stop repeating work the team has already done, debated, or rejected, and reviewers stop bouncing AI-generated PRs for the same three reasons every sprint.

Most AI coding tools still ship code that gets bounced in review. The autocomplete looks fluent, the diff compiles, and then a senior engineer sends it back because it duplicates a deprecated helper, violates a convention the team agreed on six months ago in Slack, or reinvents a service another squad already owns. The model did not fail at generation. It failed at context. Closing that gap is what this discipline exists to do: curating, retrieving, ranking, and refreshing the institutional knowledge a coding agent needs to produce work a human reviewer will actually accept. It pulls the WHY behind a codebase out of PRs, tickets, docs, and chat, and puts it in front of the model before it writes a line. It is the layer of institutional context for coding agents that most teams are still missing, and it is the reason their AI tools keep generating plausible-looking code that their engineers keep rejecting.

Why does context engineering exist as a discipline?#

Context engineering emerged because bigger context windows alone do not improve AI code quality. DORA's State of DevOps 2024 research, as reported by The New Stack, found that AI adoption rose while AI use correlated with lower team-level delivery throughput and stability. The missing input is curated institutional knowledge, not more tokens.

Why more tokens stopped being the answer#

For a long time, "give the model more tokens" was treated as the answer: a bigger window would eventually swallow the repo. That assumption has not aged well. Engineering orgs that measured the output found the same pattern: raw context is not the same as relevant context, and neither amounts to the full picture. Industry data points the same way. DORA's State of DevOps 2024 research, as summarized by The New Stack, found that AI adoption among developers rose sharply while that year's team-level data showed AI use correlating with decreases in software delivery throughput and stability. Generating more code, faster, is not the same as shipping more value, and generating it with a wider window does not close the gap on its own.

The missing ingredient is WHY, not WHAT#

The missing ingredient is almost always context: why a module exists, which past approach was rejected, which incident shaped the current retry logic, which team owns the downstream service. Code search tells an agent what the codebase looks like today. It cannot tell the agent why that shape was chosen, what was tried first, or which decisions still bind the team. Without that layer, an agent will happily rebuild something the team already killed, because nothing in the repo says "do not do this." Institutional memory lives in PRs, chat, and tickets, not in the files.

The shift from prompt engineering to context work#

Prompt engineering is about the instructions you send a model. This discipline is about the evidence you send with those instructions. Prompting optimizes how you ask; context work optimizes what the model knows before it answers. As coding agents take on multi-step work (open a branch, read related tickets, draft a PR), the prompt itself becomes the smallest part of the payload. Everything else is retrieved code, linked PR threads, architecture notes, and past decisions. Teams that treat those inputs as a first-class engineering surface ship reviewable code. Teams that rely on prompt cleverness alone keep bouncing PRs.

What changed in 2025-2026 that made this a distinct role#

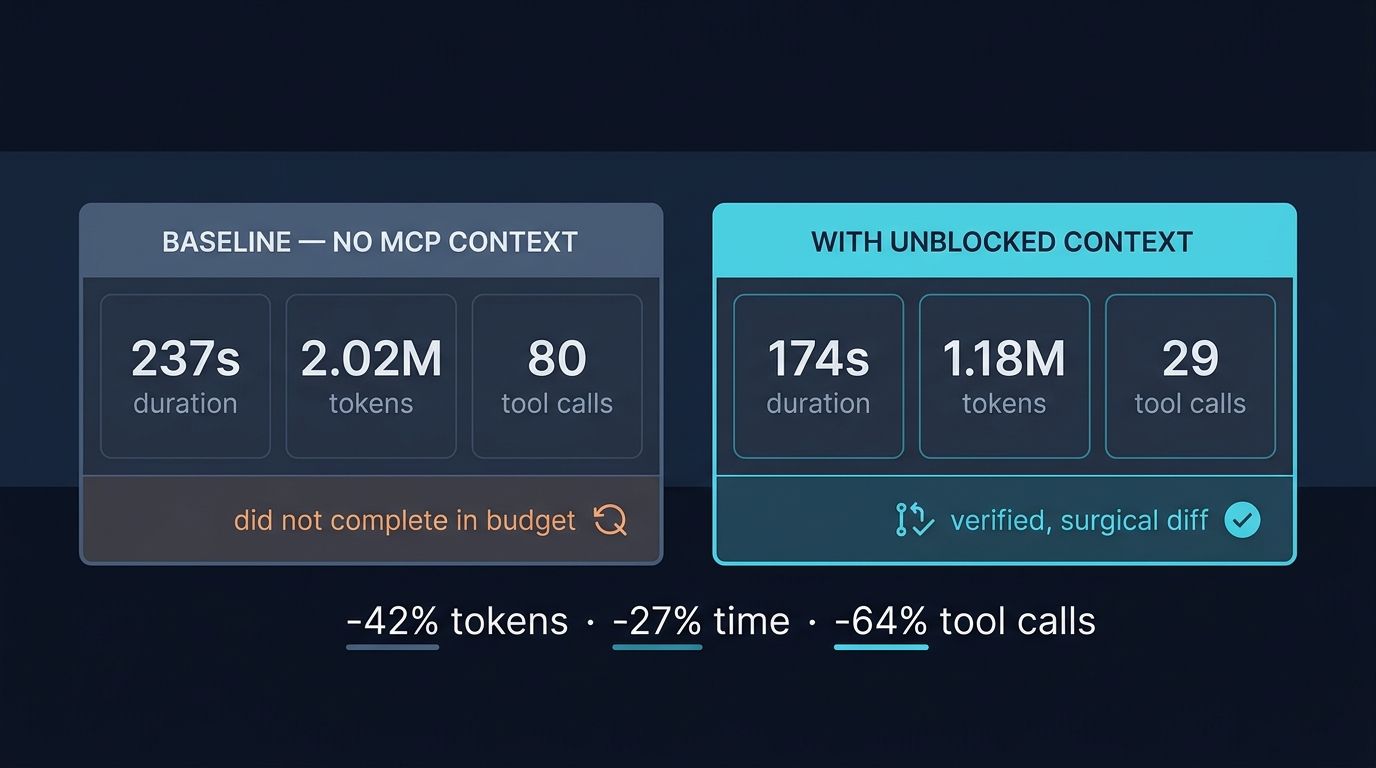

Three forces turned context work into its own discipline. First, coding agents moved from single-file suggestions to repo-aware, multi-tool work, which exposed every gap in what the agent could see. Second, the Model Context Protocol (MCP) gave every tool a common socket to attach to an agent, so "what sources are connected" became a design decision rather than a consequence of vendor lock-in. Third, engineering leaders started measuring AI output in review-acceptance rate and change-failure rate, not keystrokes saved. Once the metric shifted, the bottleneck stopped being the model and started being the pipeline feeding it.

What are the four pillars of context engineering?#

The practice rests on four pillars: source curation, retrieval strategy, ranking and relevance, and feedback loops. Skip one and the rest degrade fast. This framework appears across vendor architectures and internal platform teams as the working decomposition of what a coding agent actually needs to read before it writes.

That holds whether you run the pipeline in-house or adopt a platform.

Pillar 1: Source curation#

Decide which systems count as authoritative. Most engineering orgs have knowledge scattered across PRs, issue trackers, docs wikis, chat, incident write-ups, and code comments. Curation means choosing which of those an agent can read, at what fidelity, and which it should ignore. A draft Notion page from 2022 is not the same signal as a merged PR from last week. Without curation, agents over-index on noisy or outdated material and quietly resurrect patterns the team has already moved past. Source curation is the quiet first win: prune aggressively, label authority, and the downstream retrieval gets easier before you tune a single ranker.
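One way to make "prune aggressively, label authority" concrete is a small source registry that every retrieval step has to pass through. The sketch below is illustrative only: the `Source` fields, the 0-to-1 authority scale, the cutoff date, and the example source names are all assumptions, not part of any particular product.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Source:
    name: str
    kind: str           # e.g. "merged_pr", "draft_doc", "chat" (hypothetical labels)
    authority: float    # assumed scale: 0.0 (ignore) to 1.0 (authoritative)
    last_updated: date


def curate(sources, min_authority=0.5, stale_before=date(2024, 1, 1)):
    """Keep only sources that are authoritative enough and not stale."""
    return [
        s for s in sources
        if s.authority >= min_authority and s.last_updated >= stale_before
    ]


# Hypothetical inventory for illustration.
SOURCES = [
    Source("payments-repo merged PRs", "merged_pr", 1.0, date(2025, 6, 2)),
    Source("2022 Notion draft", "draft_doc", 0.2, date(2022, 3, 14)),
    Source("#platform-decisions channel", "chat", 0.7, date(2025, 5, 20)),
]

kept = curate(SOURCES)
print([s.name for s in kept])
```

With these made-up values the 2022 draft is dropped on both counts (low authority, stale), while the merged PRs and the decisions channel remain readable by the agent.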

Pillar 2: Retrieval strategy#

Once sources are curated, retrieval is how the right slice reaches the model at the right moment. Naive vector search over everything is the common failure mode. Good retrieval blends semantic search, keyword search, graph traversal across linked entities (PR to issue to Slack thread to deploy), and recency weighting. It also knows when not to retrieve: when the current file and prompt are enough, piling on more material would push the model toward a worse answer. The strongest retrieval setups treat "return nothing" as a legitimate answer when no source clears the relevance bar.
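A minimal sketch of that blend, assuming each candidate already carries a semantic score and a keyword score in the range 0 to 1. The weights, the 180-day half-life, the relevance bar, and the candidate IDs are hypothetical tuning choices. Graph traversal is left out to keep the example short.

```python
def recency_weight(age_days, half_life_days=180.0):
    """Exponential decay: a document loses half its weight every half-life."""
    return 0.5 ** (age_days / half_life_days)


def blended_score(semantic, keyword, age_days, w_semantic=0.6, w_keyword=0.4):
    """Blend two similarity scores in [0, 1], then discount by age."""
    return (w_semantic * semantic + w_keyword * keyword) * recency_weight(age_days)


def retrieve(candidates, min_score=0.35, limit=5):
    """Return the IDs that clear the relevance bar, best first (possibly none)."""
    scored = [
        (blended_score(c["semantic"], c["keyword"], c["age_days"]), c["id"])
        for c in candidates
    ]
    kept = sorted((s for s in scored if s[0] >= min_score), reverse=True)
    return [doc_id for _, doc_id in kept[:limit]]


# Hypothetical candidates for illustration.
CANDIDATES = [
    {"id": "pr-retry-rework", "semantic": 0.9, "keyword": 0.8, "age_days": 30},
    {"id": "old-wiki-page", "semantic": 0.8, "keyword": 0.7, "age_days": 900},
    {"id": "unrelated-thread", "semantic": 0.2, "keyword": 0.1, "age_days": 10},
]

print(retrieve(CANDIDATES))      # ['pr-retry-rework']
print(retrieve(CANDIDATES[2:]))  # [] -> "return nothing" is a valid answer
```

The old wiki page matches the query well but decays to almost nothing after five half-lives, and the empty list in the second call is the "return nothing" case rather than an error.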

Pillar 3: Ranking and relevance#

Retrieval returns candidates; ranking decides what actually enters the window. This is where most homegrown setups quietly break. If you hand the model ten loosely related snippets, the signal-to-noise ratio drops and the output gets worse, not better. Ranking has to account for freshness (is this decision still in force?), authority (was this in a merged PR or a rejected draft?), and proximity to the task at hand (same service, same team, same file). A good ranker is the difference between "the agent cited the right PR" and "the agent hallucinated a plausible one."
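The three ranking signals can be sketched as a weighted score followed by a token-budget cut. This is an assumption-laden illustration: the weights, the boolean encoding of each signal, and the candidate references are invented, and a real ranker would likely use graded rather than yes/no signals.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    ref: str
    tokens: int
    in_force: bool       # freshness: is the decision still in force?
    merged: bool         # authority: merged PR vs. rejected draft
    same_service: bool   # proximity: same service as the task at hand


def rank_score(c):
    # Hypothetical weights; booleans count as 0 or 1.
    return 0.4 * c.in_force + 0.35 * c.merged + 0.25 * c.same_service


def select_for_window(candidates, token_budget):
    """Greedily admit the highest-ranked candidates that still fit the budget."""
    chosen, used = [], 0
    for c in sorted(candidates, key=rank_score, reverse=True):
        if used + c.tokens <= token_budget:
            chosen.append(c.ref)
            used += c.tokens
    return chosen


# Hypothetical candidates for illustration.
CANDIDATES = [
    Candidate("merged PR: adopt retry library", 800, True, True, True),
    Candidate("rejected draft: custom retry", 600, False, False, True),
    Candidate("merged PR: logging format", 700, True, True, False),
    Candidate("open discussion: timeouts", 900, True, False, True),
]

print(select_for_window(CANDIDATES, token_budget=1600))
```

With a 1,600-token budget only the two merged, in-force PRs enter the window. The rejected draft ranks last and does not fit once they are admitted, which is the behavior the paragraph above asks of a ranker.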

Pillar 4: Feedback loops#

This is not a one-shot pipeline. It learns. When a reviewer rejects a suggestion, when an engineer edits generated code before committing, when an agent's output lands cleanly on the first try, all of that is training data for the next retrieval. Teams that wire this feedback in see review-acceptance rates climb quarter over quarter. Teams that treat the pipeline as static watch quality drift as the codebase evolves around it.

Together, these four pillars turn a pile of organizational knowledge into something an agent can use. Miss any one and the system decays.
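As a rough sketch of the feedback-loop pillar, the class below keeps a running acceptance rate per source and exposes it as a weight a ranker could multiply into its score. It is one simple possibility, not a description of how any given product learns: the smoothing prior and the source IDs are assumptions.

```python
from collections import defaultdict


class FeedbackLog:
    """Tracks how often output grounded in each source survives review."""

    def __init__(self, prior_accepts=1, prior_total=2):
        # Assumed smoothing prior: an unseen source starts at weight 0.5.
        self._accepts = defaultdict(lambda: prior_accepts)
        self._total = defaultdict(lambda: prior_total)

    def record(self, source_id, accepted):
        """Log one reviewer outcome for output that cited this source."""
        self._total[source_id] += 1
        if accepted:
            self._accepts[source_id] += 1

    def weight(self, source_id):
        """Smoothed acceptance rate, usable as a ranking multiplier."""
        return self._accepts[source_id] / self._total[source_id]


log = FeedbackLog()
for _ in range(3):
    log.record("adr-017", accepted=True)    # hypothetical source IDs
    log.record("old-wiki", accepted=False)

print(log.weight("adr-017"), log.weight("old-wiki"), log.weight("never-seen"))
```

After three accepted and three rejected outcomes, the weights are 0.8, 0.2, and 0.5 respectively, so the next retrieval would lean toward the source whose citations reviewers keep accepting.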

How is context engineering different from prompt engineering or RAG?#

Prompt engineering tunes the instruction sent to a model. RAG retrieves top-k similar documents and stuffs them in. Context engineering is the broader discipline that curates sources, ranks by authority and freshness, traverses entity graphs, and learns from reviewer feedback. All three can coexist; only the third survives review at scale.

These three terms get blurred, often by vendors, and the confusion costs teams real time.

Prompt engineering: tuning the instruction#

Prompt engineering is the craft of writing the instruction. It is a legitimate skill, covering few-shot examples, role framing, and output schemas, but it operates on a fixed knowledge base (the model's weights) and a fixed input (whatever you paste into the prompt). In a coding context, prompt engineering alone cannot tell the model that your team standardized on a specific retry library last quarter. The model has no way to know. You can write a perfect instruction and still get a suggestion that contradicts last month's architecture review, because the instruction never carried that evidence.

RAG: one retrieval technique, not the whole stack#

RAG, retrieval-augmented generation, is a specific technique: embed documents, store them in a vector database, retrieve the top-k by similarity to the query, and stuff the results into the prompt. RAG is useful, and it is part of a good stack, but it is not the whole stack. Classic RAG has no notion of authority, no graph between entities, no recency weighting by default, and no feedback loop. It treats a draft doc and a merged PR as equivalent text, which for engineering work is almost always wrong.
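To see why similarity alone is not enough, here is a bare-bones version of the retrieval step in classic RAG: cosine similarity over embeddings, then top-k. The three-dimensional vectors and document names are toy values made up for illustration, standing in for real embeddings.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, index, k=2):
    """Classic RAG retrieval: rank purely by similarity to the query."""
    scored = sorted(index, key=lambda d: cosine(query_vec, d["vec"]), reverse=True)
    return [d["id"] for d in scored[:k]]


# Toy index; "status" is present but top_k never looks at it.
INDEX = [
    {"id": "draft-doc: custom retry wrapper", "vec": [0.9, 0.1, 0.0], "status": "rejected"},
    {"id": "merged-pr: standard retry library", "vec": [0.8, 0.2, 0.1], "status": "merged"},
    {"id": "runbook: log rotation", "vec": [0.0, 0.1, 0.9], "status": "merged"},
]

query = [1.0, 0.0, 0.0]  # stands in for an embedded "how do we retry?" question
print(top_k(query, INDEX))
```

In this toy index the rejected draft comes back ahead of the merged PR because it is slightly closer to the query, and nothing in the retrieval step consults `status`. That is the "draft doc and merged PR as equivalent text" problem in miniature.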

The broader discipline, and why it wins review#

Context engineering is the broader discipline. It uses RAG where RAG fits, but it also covers source curation, ranking heuristics, graph traversal across PRs and tickets and chat, freshness, and the feedback loops that keep the whole system honest over time. A well-instrumented coding agent knows that the Slack thread where the team killed the previous retry approach matters more than the stale doc that still describes it, and it knows why that rejection happened. Prompt engineering tunes the question, RAG fetches some documents, and the broader discipline decides what the agent should know before the question is ever asked. Prompt-only setups produce suggestions that look right; context-aware setups produce suggestions that survive review.

What does great context engineering look like in practice?#

Teams with mature context setups show measurable signals within weeks: rising review-acceptance rates on AI-assisted PRs, fewer "why does this exist?" pings to senior staff, and agents citing specific prior PRs and incidents. Practitioners describe the feel as having extra senior engineers with full recall attached to the team.

You can usually tell within a week whether a team's context setup is working. The signals are concrete.

"Unblocked behaves like two ace engineers on my team who know everything that's happened. It's a support arm of my platform engineering team."

— Prasad Thulukanam, Director of Cloud & Platform Engineering, RB Global

That framing, an extra pair of senior engineers with full recall, is what good context looks like from the inside. It is not a smarter model. It is a model with institutional memory attached. The best setups unify PRs, Slack, Jira, Notion, Confluence, and code repos into one retrievable surface, and they feed the agent the WHY, not just the WHAT.

Signals the pipeline is working#

- Review-acceptance rate on AI-assisted PRs climbs over the first few months and stays there.

- New engineers stop pinging senior staff for "why does this exist?" questions because the agent already answers them.

- Agents reference specific prior PRs, incidents, or decisions in their explanations, not generic boilerplate.

- Change-failure rate on AI-assisted work tracks at or below human-only work, instead of spiking.

- Engineers report reclaiming hours per week previously lost to knowledge-hunting across tools.
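Two of the signals above can be computed from ordinary PR records. The sketch below assumes a simple definition for each: review-acceptance rate is the share of reviewed PRs a reviewer approved, and change-failure rate is the share of merged PRs later tied to a failure. The field names and sample records are hypothetical; your tracker will have its own.

```python
def rate(numerator, denominator):
    """Return a ratio, or None when there is nothing to measure yet."""
    return numerator / denominator if denominator else None


def pr_metrics(prs, ai_assisted):
    """Compute both rates for either the AI-assisted or the human-only cohort."""
    cohort = [p for p in prs if p["ai_assisted"] == ai_assisted]
    merged = [p for p in cohort if p["merged"]]
    return {
        "review_acceptance_rate": rate(sum(p["approved"] for p in cohort), len(cohort)),
        "change_failure_rate": rate(sum(p["caused_failure"] for p in merged), len(merged)),
    }


# Hypothetical records for illustration.
PRS = [
    {"ai_assisted": True, "approved": True, "merged": True, "caused_failure": False},
    {"ai_assisted": True, "approved": True, "merged": True, "caused_failure": True},
    {"ai_assisted": True, "approved": False, "merged": False, "caused_failure": False},
    {"ai_assisted": True, "approved": True, "merged": True, "caused_failure": False},
    {"ai_assisted": False, "approved": True, "merged": True, "caused_failure": False},
    {"ai_assisted": False, "approved": True, "merged": True, "caused_failure": True},
]

print("AI-assisted:", pr_metrics(PRS, ai_assisted=True))
print("Human-only: ", pr_metrics(PRS, ai_assisted=False))
```

Tracking the two cohorts side by side is what lets you check the fourth signal: whether change-failure rate on AI-assisted work sits at or below the human-only baseline.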

Common anti-patterns#

- "Just index everything." Indexing without curation buries the useful signals under draft docs, test fixtures, and archived channels.

- Vector search as the whole plan. Embeddings miss structural relationships between a PR, its ticket, and the chat thread where it was debated.

- Static pipelines. No feedback loop means the system cannot tell a merged pattern from a rejected one six months later.

- Treating the window as the product. A bigger window does not fix a low-quality retrieval set; it just lets you waste more tokens on noise.

- Owner-by-default sprawl. If nobody owns the pipeline, nobody refreshes it, and quality quietly degrades.

What tools and architectures support context engineering?#

The tooling stack is converging around three layers: MCP servers as the wire format for connecting sources, context layers as thin retrieval pipes, and context engines as the full graph-and-ranking stack. The pragmatic audit is where institutional knowledge is lossy today, not build-versus-buy in the abstract.

The tooling picture is converging around a few patterns. Knowing the difference matters when you are buying or building.

MCP servers: the wire format for knowledge sources#

The Model Context Protocol is becoming the default wire format for attaching tools and knowledge sources to agents. An MCP server exposes a capability, such as "search our PRs," "read our Jira issues," or "fetch this Slack thread," in a shape any MCP-speaking agent can call. MCP matters because it separates where your knowledge lives from which agent consumes it. You can swap agents without re-plumbing every integration, which is why most serious stacks standardize on MCP even when they also ship a native API.
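On the wire, MCP messages are JSON-RPC 2.0, and an agent invokes a server-side capability with the `tools/call` method. The sketch below builds such a request using only the standard library. The tool name `search_prs` and its arguments are hypothetical; a real server advertises its own tool names and input schemas.

```python
import json


def tool_call_request(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request that asks an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments for illustration.
request = tool_call_request(
    1, "search_prs", {"query": "retry policy", "repo": "payments"}
)
print(json.dumps(request, indent=2))
```

Because the envelope is the same for every tool, swapping the agent on the other end does not require changing how the PR, Jira, or Slack sources are exposed.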

Context layers vs context engines#

A context layer is a thin retrieval and access-control slice sitting between your data and the agent. It usually ships as a connector pack plus a query API. Useful, but limited: a layer typically does not reason across entities, rank by authority, or learn from feedback. It is a pipe, not a brain. We unpacked that distinction in "A context layer is not a context engine."

A context engine is the full stack: source curation, multi-modal retrieval, entity graphs, ranking, and feedback loops. It treats your PRs, issues, docs, and chat as a linked knowledge graph rather than a bag of documents, and it exposes that graph to any coding agent through MCP. See "What is a context engine?" for the architecture, and "How a context engine actually works and why you need to care now" for the mechanics that separate a real engine from a connector pack.
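The "linked knowledge graph rather than a bag of documents" idea can be sketched as a bounded breadth-first walk over links between entities. The graph below is invented for illustration, and the two-hop limit is an assumed default, not a recommendation.

```python
from collections import deque

# Hypothetical links between a PR, its issue, chat, incident, and deploy.
LINKS = {
    "pr:retry-rework": ["issue:flaky-checkout", "deploy:2025-05-12"],
    "issue:flaky-checkout": ["thread:retry-debate", "incident:checkout-outage"],
    "thread:retry-debate": [],
    "incident:checkout-outage": [],
    "deploy:2025-05-12": [],
}


def related(start, links, max_hops=2):
    """Breadth-first walk: entities reachable within max_hops, nearest first."""
    seen = {start}
    order = []
    queue = deque([(start, 0)])
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in links.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                order.append(neighbor)
                queue.append((neighbor, hops + 1))
    return order


print(related("pr:retry-rework", LINKS))
```

Starting from the PR, the walk reaches the debate thread and the incident through the issue, two items that a similarity search over the PR text alone could easily miss.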

How to audit your own stack#

The pragmatic move for most engineering leaders is not "build vs. buy" in the abstract. It is "where is institutional knowledge currently lossy, and what closes that loss fastest?" For each major source (PRs, issues, docs, chat, incidents) ask three questions. Can an agent actually read it end-to-end, including the discussion threads, not just the surface document? Does retrieval respect authority and freshness, so a rejected draft does not outweigh a merged decision? And is there a signal path back when a human edits or rejects an agent's output, so the next retrieval is better than the last? A "no" to any of the three is where your next investment goes. Most teams find the biggest gap in the conversational knowledge: the Slack threads, design reviews, and incident retros that hold the WHY but rarely make it into the formal docs.
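The three audit questions translate directly into a checklist you can keep in version control. This is a minimal sketch: the question keys and the sample answers are hypothetical, and a missing answer is treated as a "no".

```python
AUDIT_QUESTIONS = (
    "readable_end_to_end",               # including discussion threads
    "respects_authority_and_freshness",  # rejected draft never outweighs merged decision
    "has_feedback_path",                 # human edits and rejections flow back
)


def audit(stack):
    """Map each source to the questions it fails; fully passing sources are omitted."""
    gaps = {}
    for source, answers in stack.items():
        missing = [q for q in AUDIT_QUESTIONS if not answers.get(q, False)]
        if missing:
            gaps[source] = missing
    return gaps


# Hypothetical self-assessment for illustration.
STACK = {
    "PRs": {
        "readable_end_to_end": True,
        "respects_authority_and_freshness": True,
        "has_feedback_path": True,
    },
    "chat": {"readable_end_to_end": False, "has_feedback_path": False},
    "incidents": {
        "readable_end_to_end": True,
        "respects_authority_and_freshness": False,
        "has_feedback_path": True,
    },
}

for source, missing in audit(STACK).items():
    print(f"{source}: {', '.join(missing)}")
```

Each line of output names a source and the questions it fails, which is a reasonable first draft of the investment list.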

Who owns context engineering?#

Ownership typically lands in platform engineering, developer productivity, or a dedicated AI platform team. The anti-pattern is leaving it unowned: unowned pipelines rot on the same timeline as unowned CI, quickly and usually right before an important launch. Most orgs staff it as a two-to-four-person pod once adoption grows past a handful of teams.

Early on, this work lived under whoever ran developer productivity or the AI platform team. As the discipline matures, three owner patterns are emerging.

Three ownership patterns#

Platform engineering. Most common in larger orgs. Platform owns the infrastructure, the MCP server inventory, and the access-control policy. They treat the pipeline as another paved road, versioned and monitored like any other shared service.

Developer productivity / DevEx. Common when the AI strategy is led from the developer experience side. DevEx owns adoption, metrics (review-acceptance rate, time-to-first-useful-answer), and the feedback loop back into retrieval.

A dedicated context or AI platform team. Emerging at orgs where AI-assisted development is a strategic priority. This team owns the whole stack end-to-end and partners with security and data governance.

Whichever shape you pick, the anti-pattern is leaving it unowned. Pipelines that nobody maintains rot on the same timeline as unowned CI: quickly, and usually right before an important launch. A useful rule of thumb: if a new engineer joins and cannot name the person responsible for keeping the agent's knowledge sources current, the ownership model is not yet real.

Staffing the work#

Staffing-wise, most orgs start with a single engineer carving out a fraction of their time and graduate to a small pod, typically two to four people, as adoption grows past a handful of teams. The work splits roughly into three lanes: integrations (getting new sources connected via MCP and keeping them healthy), quality (tuning retrieval and ranking, running eval sets against real developer queries), and enablement (onboarding teams, tracking the metrics that matter, feeding issues back into the pipeline). You do not need a headcount plan on day one, but you do need a name on each lane within the first quarter.

FAQ#

Short answers to the recurring questions engineering leaders ask when scoping this work: how it differs from RAG, whether window size matters, how it compares to internal search, what MCP changes for buyers, how to measure it, and how long standing up a useful first version takes.

Is this just a rebrand of RAG? No. RAG is one retrieval technique. The broader discipline covers source curation, ranking, graph traversal, freshness, and feedback loops, with or without vector embeddings under the hood.

Do I need a huge context window to do this well? Larger windows help at the margins, but ranking matters more. A 200K-token window filled with low-relevance snippets can still produce worse output than a 32K-token window filled with the right ones.

How is this different from an internal search tool? Internal search returns documents to a human. A context pipeline returns curated, ranked, reasoning-ready evidence to a coding agent, including graph relationships between PRs, tickets, and chat. Different consumer, different quality bar.

What does MCP change for engineering leaders? It lets you treat knowledge sources as pluggable. You connect your PR, Jira, Slack, and docs systems once, and any MCP-compatible agent can consume them. Less vendor lock-in, more reuse.

How do I know if it is actually working? Track review-acceptance rate on AI-assisted PRs, change-failure rate on AI-assisted work, and time engineers spend hunting for context. All three should move in the right direction within a quarter. If they have not moved after 90 days of steady usage, the issue is almost never the model. It is the pipeline feeding it.

How long does it take to stand this up? A thin first version, with one or two sources wired in, basic retrieval, and a single feedback channel, is usually a few weeks of focused work. Reaching the point where agents reliably cite specific prior PRs, incidents, and decisions in their output is typically a quarter or two of iteration. The curve is steepest early; most of the return comes from the first three sources you connect well, not the tenth you connect poorly.

Putting this into practice#

The teams getting real value from AI coding tools in 2026 are not the ones with the cleverest prompts or the biggest token budgets. They are the teams treating context as an engineered surface: curated sources, graph-aware retrieval, authority-weighted ranking, and feedback loops that learn from what reviewers accept and reject. Start by auditing where institutional knowledge is lossy today: the decisions in Slack that never make it to docs, the rejected approaches buried in closed PRs, the incident write-ups nobody links from the code that caused them. Pick one source to connect cleanly through MCP, one ranking signal to add, and one feedback loop to wire in. Measure review-acceptance rate before and after. That is how this discipline stops being a concept and starts being a capability, and how agents start with the full picture instead of just the code.