Context Engineering vs RAG: When to Use Which Approach

Brandon Waselnuk·April 21, 2026


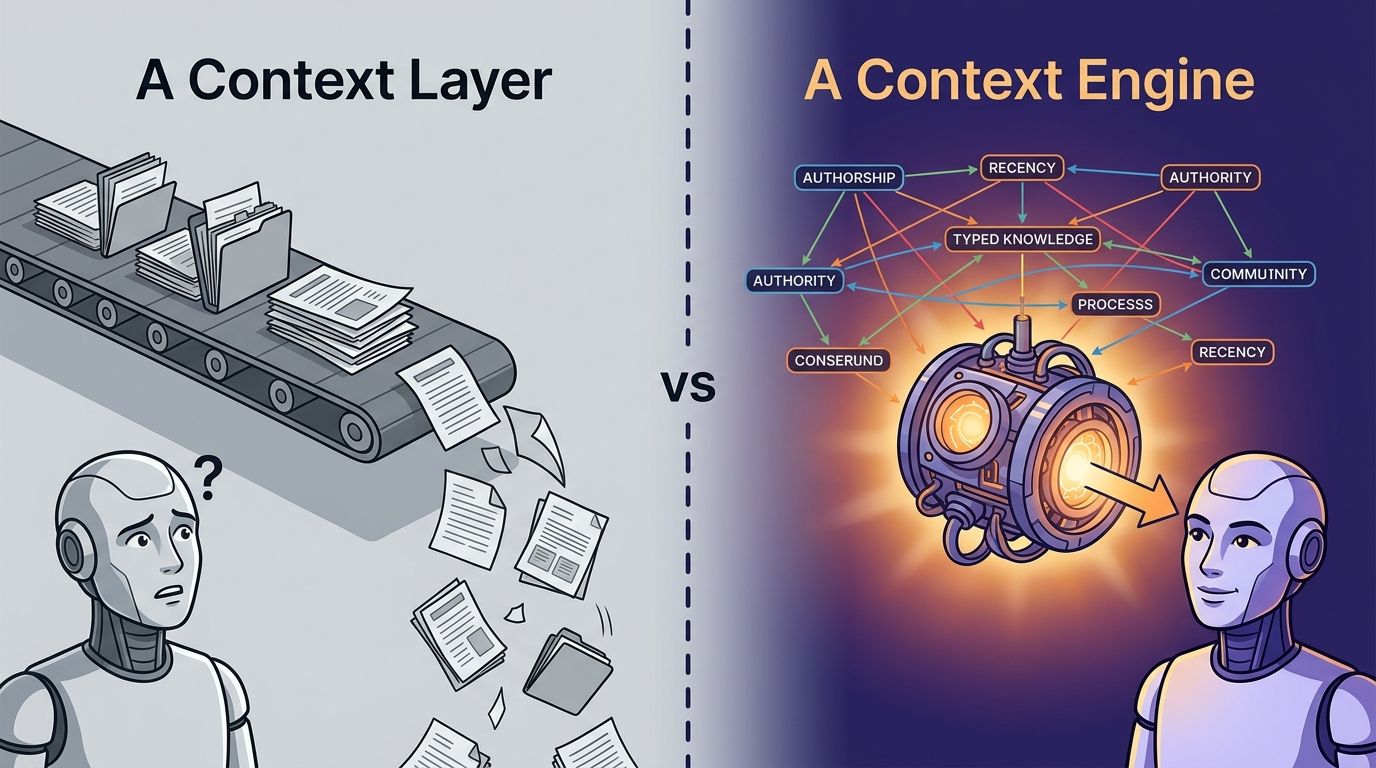

Bottom line: RAG fetches documents by similarity. Context engineering curates, ranks, resolves, and governs what reaches the model. Most teams need both, but starting with RAG alone leaves gaps in permissions, conflict resolution, and freshness that compound as agents take on more responsibility.


RAG isn't the wrong answer. It's the incomplete one. Engineering teams keep debating "should we use RAG?" when the real question is "what does our agent need to know, and who decides?" Retrieval-augmented generation is a technique for fetching similar documents at query time. Context engineering is the discipline of designing what an AI system sees, from which sources, in what order, under which permissions. One is a tool. The other is a practice. Confusing the two costs teams months of pipeline work that solves the wrong problem. The context engineering vs RAG distinction matters more as agents move from autocomplete to autonomous code generation.

For background on context engineering as a discipline, see the context engineering guide.

What problem does RAG actually solve?

RAG solves the knowledge cutoff problem. Anthropic's engineering team describes retrieval as one input channel inside a broader system, useful for grounding a model in external data but not sufficient on its own (Anthropic, 2025). When a model needs facts it wasn't trained on, RAG provides them by fetching similar documents at query time.

The mechanism is straightforward. Chunk your documents, embed them as vectors, store them in a database, and at query time embed the question and pull the top-k most similar chunks. The model then generates with those chunks in its context window. For single-source question answering, like a support bot over product docs, this works well. Stack Overflow's 2025 Developer Survey found that 84% of developers now use or plan to use AI tools in their workflow (Stack Overflow Developer Survey, 2025). Many of those tools use some form of RAG under the hood.
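The chunk, embed, and top-k steps can be sketched in a few lines of Python. This is a toy illustration, not a production pipeline: `embed` is a hypothetical bag-of-words stand-in for a real embedding model, a plain list stands in for the vector database, and the sample chunks are invented.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for a real model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Embed the query and return the k chunks most similar to it."""
    query_vec = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, embed(c)), reverse=True)
    return ranked[:k]


# Invented sample corpus, already split into chunks.
chunks = [
    "reset your password from the account settings page",
    "invoices are emailed on the first day of each month",
    "contact support to change your billing address",
]
results = top_k("how do I reset my password", chunks, k=1)
```

Note what the sketch does not do: nothing here knows which chunk is current, authoritative, or visible to the person asking. It ranks by similarity and stops.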

RAG retrieves documents by vector similarity to ground LLM outputs in external data. Anthropic frames retrieval as one input channel that a broader context system orchestrates, not the full solution (Anthropic, 2025).

"When to use RAG" has a clean answer: use it when you have one authoritative corpus, questions shaped like "find the relevant passage," and no permission complexity. A product manual. A contained knowledge base. A FAQ chatbot. RAG is excellent in that lane.

The trouble starts when teams try to stretch RAG beyond that lane. Most engineering teams hit that wall fast.

For more detail on where RAG fits in a broader architecture, see our post on context engines vs RAG.

What problem does context engineering solve that RAG doesn't?

Context engineering solves the "right information, right shape, right time" problem that RAG leaves open. Stanford HAI's 2025 AI Index found that AI model accuracy drops sharply as task complexity increases, with multi-step reasoning tasks showing materially higher error rates than simple retrieval (Stanford HAI AI Index, 2025). That accuracy drop is the gap context engineering exists to close.

RAG asks: "Which documents look similar to this query?" Context engineering asks a harder set of questions. Which sources are authoritative? Which document wins when two disagree? Who is allowed to see this information? Is this still current, or was it superseded last month? And what does the combination of these sources mean for the specific task the agent is performing?

In our experience, the failure pattern is almost always the same. The agent retrieves the right chunks. It just picks the wrong one because nothing told it which source was authoritative, which was stale, or which the team had already rejected. Retrieval worked. Reasoning didn't, because there was no reasoning layer.

Context engineering addresses the gap between document retrieval and actionable knowledge. Stanford HAI's 2025 AI Index shows AI accuracy degrades significantly on complex, multi-step tasks, exactly the kind coding agents perform (Stanford HAI AI Index, 2025).

Context engineering as a discipline covers five operations that RAG doesn't touch: source curation (deciding what counts), conflict resolution (deciding what wins), permission enforcement (deciding who sees what), freshness management (deciding what's current), and synthesis (deciding what the combination means). Skip any one of those and you get an agent that retrieves fluently and acts incorrectly.

What does this look like concretely? Consider a coding agent asked to implement a retry mechanism. RAG retrieves three documents: a Notion spec from 2023, a Slack thread from last quarter where the team rejected that approach, and the current implementation in the repo. RAG returns all three ranked by similarity. A context engineering approach would resolve the conflict, surface the rejection rationale, and deliver only the current implementation plus the reason the old approach was killed.
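A rough sketch of that resolution step, under stated assumptions: the `Doc` fields, the `status` labels, and the `rejects` link are hypothetical metadata that some upstream process would have to produce. The sketch only shows what becomes possible once that metadata exists.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Doc:
    source: str                    # e.g. "notion", "slack", "repo"
    summary: str
    status: str                    # hypothetical label: "current", "superseded", "rejection"
    rejects: Optional[str] = None  # source whose approach this doc rejects, if any


def resolve(candidates: list[Doc]) -> dict[str, list[str]]:
    """Keep current docs that nobody rejected, plus the reasons others were dropped."""
    rejected_sources = {d.rejects for d in candidates if d.rejects}
    keep = [
        d.summary
        for d in candidates
        if d.status == "current" and d.source not in rejected_sources
    ]
    rationale = [d.summary for d in candidates if d.status == "rejection"]
    return {"use": keep, "rejected_because": rationale}


# The three documents from the retry example, as similarity search returned them.
retrieved = [
    Doc("notion", "Notion spec from 2023", "superseded"),
    Doc("slack", "Slack thread: team rejected the spec's approach", "rejection", rejects="notion"),
    Doc("repo", "Current implementation in the repo", "current"),
]
context = resolve(retrieved)
```

The agent receives the current implementation and the rejection rationale. The 2023 spec never reaches the prompt.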

For definitions and deeper detail, see our post on what context engineering is.

When is RAG enough for your team?

RAG is enough when your failure mode is "the model doesn't know about our docs," not "the model knows too much and picks the wrong source." GitHub's 2025 Octoverse report found that AI-generated code now comprises a growing share of pull requests on the platform (GitHub Octoverse, 2025). For many of those contributions, basic retrieval is all that's needed.

Here's a practical test. If all four of these conditions describe your team, RAG is likely sufficient:

Single authoritative source

You have one corpus that doesn't contradict itself. Product documentation, a style guide, a well-maintained wiki. No conflicting versions. No outdated drafts floating alongside current specs.

Read-only outputs

The agent answers questions. It doesn't write code, open PRs, or take actions. The cost of a wrong answer is a confused user, not a broken build.

No permission complexity

Everyone who queries the system is allowed to see everything the system retrieves. No contractor boundaries, no team-level access controls, no compliance constraints.

Low conflict frequency

Sources rarely disagree. When they do, the disagreement is obvious and the user can resolve it manually. The agent doesn't need to arbitrate.
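The four conditions can be written down as an explicit checklist, which makes it easier to see which one a team has outgrown. This is a minimal sketch: the `TeamProfile` class and its field names are hypothetical, chosen to mirror the four headings above.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    single_authoritative_source: bool
    read_only_outputs: bool
    no_permission_complexity: bool
    low_conflict_frequency: bool


def unmet_conditions(profile: TeamProfile) -> list[str]:
    """Return the names of the conditions that do not hold, in declaration order."""
    return [name for name, holds in vars(profile).items() if not holds]


def rag_is_likely_sufficient(profile: TeamProfile) -> bool:
    """RAG alone is likely enough only when every condition holds."""
    return not unmet_conditions(profile)


faq_bot = TeamProfile(True, True, True, True)
coding_agent = TeamProfile(
    single_authoritative_source=False,  # docs plus Slack
    read_only_outputs=False,            # the agent opens PRs
    no_permission_complexity=True,
    low_conflict_frequency=False,
)
```

Here `rag_is_likely_sufficient(faq_bot)` is true, while `unmet_conditions(coding_agent)` names the three conditions that no longer hold.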

Most teams overestimate how long they'll stay in this lane. A single-source FAQ bot is RAG-shaped. But the moment you connect a second system, like Slack alongside your docs, you've introduced conflict potential. And the moment the agent writes code instead of answering questions, the cost of a wrong retrieval rises sharply: a bad answer confuses one user, while a bad diff can break a build.

When should you invest in context engineering instead?

Teams should invest in the context engineering approach when agents act on retrieved information, not just display it. DORA's 2025 State of DevOps report found that teams integrating AI without investing in quality and review processes saw declining delivery performance (DORA, 2025). The quality gap is almost always a context gap.

Four signals tell you RAG alone won't cut it.

Your agents write code, not just answers

When the output is a diff, the stakes change. A hallucinated function reference can look plausible, pass lint in a dynamically typed codebase, and still break at runtime or get caught in review. McKinsey's 2025 research found that AI coding tools boost productivity 20-45% on well-defined tasks, but gains drop sharply when agents lack sufficient project context (McKinsey, 2025). "Well-defined" is doing a lot of work in that stat. Most real tasks aren't well-defined without institutional context.

Your knowledge lives in more than one system

Code is in GitHub. Decisions are in Slack. Specs are in Notion. Incidents are in PagerDuty. RAG can index all of those, but it can't reconcile them. When the Notion spec says "use library X" and the Slack thread says "we killed library X last sprint," RAG returns both. An agent needs to know which one wins.

You have permission boundaries

Contractors shouldn't see executive strategy docs. Junior engineers shouldn't see compensation data in HR tickets. RAG retrieves by similarity, not by authorization. A context engineering approach enforces permissions before context hits the prompt, not after the agent has already seen it.
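A minimal sketch of "before, not after": filter retrieved chunks against the caller's groups, and only then assemble the prompt. The `Chunk` class, the `allowed_groups` field, and the group names are assumptions for illustration. A real system would pull access rules from the source systems rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_groups: frozenset  # hypothetical ACL attached at indexing time


def authorized(chunks: list[Chunk], user_groups: set[str]) -> list[Chunk]:
    """Drop any chunk that none of the caller's groups may read."""
    return [c for c in chunks if c.allowed_groups & user_groups]


def build_prompt(question: str, chunks: list[Chunk], user_groups: set[str]) -> str:
    """Filter first, then assemble, so unauthorized text never enters the prompt."""
    context = "\n".join(c.text for c in authorized(chunks, user_groups))
    return f"Context:\n{context}\n\nQuestion: {question}"


# Invented retrieval results: both chunks were similar enough to be returned.
retrieved = [
    Chunk("Retry policy lives in the platform runbook", frozenset({"engineering", "contractors"})),
    Chunk("Executive strategy memo", frozenset({"executives"})),
]
prompt = build_prompt("Where is the retry policy?", retrieved, {"contractors"})
```

The ordering is the point. Once text is in the prompt, the model has seen it, and no downstream instruction reliably takes that back.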

Your agents keep failing review

This is the clearest signal. If AI-generated PRs keep getting bounced for "you should have known about X," the problem isn't the model. It's what the model was allowed to see. The context engineering approach treats review feedback as a signal that improves future retrieval, not just a rejection.

DORA's 2025 State of DevOps report found that AI adoption without investment in quality processes correlated with declining delivery performance (DORA, 2025). Context engineering is the quality process for what agents see.

For more on this concept, see decision-grade context.

Can you use RAG inside a context engineering strategy?

Yes, and most mature implementations do. Anthropic's guidance on effective context engineering treats retrieval as a pluggable component inside a broader orchestration system (Anthropic, 2025). RAG is not the rival. It's the first step.

Think of it as layers. RAG handles the initial fetch: embed the query, pull similar chunks, return candidates. Context engineering handles everything after that. Conflict resolution filters contradictory sources. Permission enforcement strips unauthorized content. Freshness ranking deprioritizes stale material. Synthesis combines surviving context into a task-shaped input.
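The layering can be sketched as a chain of small functions that each take the retrieval candidates and pass on what survives. Everything here is an assumption made for illustration: the `Candidate` fields (`authority`, `topic`, `allowed_groups`, `updated`), the sample data, and the simple rule used at each layer.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Candidate:
    text: str
    similarity: float          # score from the RAG fetch
    authority: int             # hypothetical rank: higher wins a conflict
    topic: str                 # hypothetical key: same topic means possible conflict
    allowed_groups: frozenset  # hypothetical ACL
    updated: date


def resolve_conflicts(cands):
    """Per topic, keep only the most authoritative candidate."""
    best = {}
    for c in cands:
        if c.topic not in best or c.authority > best[c.topic].authority:
            best[c.topic] = c
    return list(best.values())


def enforce_permissions(cands, user_groups):
    """Strip candidates the caller is not allowed to read."""
    return [c for c in cands if c.allowed_groups & user_groups]


def rank_by_freshness(cands):
    """Most recently updated material first."""
    return sorted(cands, key=lambda c: c.updated, reverse=True)


def synthesize(cands, task):
    """Combine the survivors into a task-shaped input."""
    lines = [f"- {c.text} (updated {c.updated.isoformat()})" for c in cands]
    return f"Task: {task}\nContext:\n" + "\n".join(lines)


def build_context(cands, user_groups, task):
    survivors = resolve_conflicts(cands)
    survivors = enforce_permissions(survivors, user_groups)
    survivors = rank_by_freshness(survivors)
    return synthesize(survivors, task)


fetched = [
    Candidate("Notion spec: use library X", 0.91, 1, "retry", frozenset({"eng"}), date(2023, 5, 1)),
    Candidate("Repo: current retry implementation", 0.84, 3, "retry", frozenset({"eng"}), date(2026, 3, 1)),
    Candidate("HR ticket: compensation data", 0.40, 2, "hr", frozenset({"hr"}), date(2026, 1, 15)),
]
result = build_context(fetched, {"eng"}, "implement retry")
```

The stage order follows the paragraph above. A real system might enforce permissions first, so that a document the caller cannot see never wins a conflict and then disappears, leaving nothing for that topic.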

Gustavo Alvarez, Software Engineer at Sixfold, described the layered approach in practice:

"My biggest use of Unblocked MCP has been AI governance, searching across Slack, fourteen Notion docs, S3..."

That's retrieval from multiple systems, governed by a context layer that decides what reaches the agent and in what shape. The retrieval step uses similarity search. Everything after it is context engineering.

Most production context engineering implementations use RAG as an internal retrieval step. Anthropic describes retrieval as one input channel that a broader system orchestrates, with selection, compression, and reasoning happening above the retrieval layer (Anthropic, 2025).

A practical layering model

The simplest way to think about context engineering vs RAG is as concentric circles. RAG is the inner circle: fetch by similarity. Around it sits conflict resolution: when two sources disagree, pick the authoritative one. Around that sits permission enforcement: filter by who's asking. The outer ring is synthesis: combine what survived into a coherent input the agent can act on.

Google DeepMind's 2025 research on scaling retrieval-augmented systems found that retrieval quality plateaus without post-retrieval processing, and that reasoning layers above retrieval consistently improve downstream task accuracy (Google DeepMind, 2025). You don't abandon RAG. You build on top of it.

The Pragmatic Engineer noted in 2025 that the real bottleneck for AI coding tools is not generation quality but context quality, specifically whether the system can assemble the right context from scattered organizational sources (The Pragmatic Engineer, 2025). RAG assembles some context. Context engineering assembles the right context.

Is it worth building all those layers yourself? That depends on your team's maturity and the complexity of your knowledge landscape. The layers exist whether you build them or not. The question is whether you handle them deliberately or let the agent guess.

For a deeper look at how these layers work inside a context engine, read our context engine vs RAG deep dive.

Frequently asked questions

Is context engineering just RAG with extra steps?

No. RAG is one retrieval technique. Context engineering is a discipline that includes source curation, conflict resolution, permission enforcement, freshness management, and synthesis. InfoWorld's 2025 analysis reported that most enterprise RAG failures stem from context quality, not retrieval recall (InfoWorld, 2025). The "extra steps" are where the actual accuracy comes from.

Can a small team benefit from context engineering?

Yes. Small teams often have less documentation and more tribal knowledge, which makes the gap between "what the code says" and "why the code is that way" even wider. You don't need a platform to start. Curating which sources your agent can see and adding a freshness signal to retrieval results are context engineering moves any team can make today.
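One way to add a freshness signal is to multiply each similarity score by a weight that decays with document age. The sketch below assumes an exponential decay with a 180-day half-life. Both the formula and the half-life are illustrative choices rather than recommendations, and the sample results are invented.

```python
from datetime import date


def freshness_weight(updated: date, today: date, half_life_days: float = 180.0) -> float:
    """Exponential decay: the weight halves every `half_life_days`."""
    age_days = max((today - updated).days, 0)
    return 0.5 ** (age_days / half_life_days)


def rerank(results, today):
    """results: (text, similarity, updated) tuples, re-sorted by similarity * freshness."""
    return sorted(
        results,
        key=lambda r: r[1] * freshness_weight(r[2], today),
        reverse=True,
    )


today = date(2026, 4, 21)
results = [
    ("2023 spec", 0.90, date(2023, 4, 21)),      # most similar, three years old
    ("current README", 0.70, date(2026, 4, 1)),  # less similar, updated this month
]
reranked = rerank(results, today)
```

With these numbers the current README moves ahead of the 2023 spec even though the spec scored higher on similarity. The right half-life depends on how quickly your sources go stale.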

Does context engineering replace prompt engineering?

No. Prompt engineering shapes the instruction. Context engineering shapes the evidence the model sees alongside that instruction. Anthropic's engineering team describes the two as complementary, with context curation having a larger impact on output quality than instruction tuning alone (Anthropic, 2025). You need both, but context work is the higher-impact investment for coding agents.

What are the biggest RAG limitations for coding agents?

Four limitations show up repeatedly: stale source confusion (old docs rank higher than current code), permission leakage (unauthorized content retrieved by similarity), conflict blindness (contradictory sources returned without resolution), and reasoning gaps (chunks retrieved but not connected). Stanford HAI's 2025 AI Index confirms that AI accuracy degrades on multi-step reasoning, which is exactly what coding tasks demand (Stanford HAI AI Index, 2025).

For more on RAG limitations, see the detailed analysis here.

Start with the question, not the tool

The context engineering vs RAG debate resolves quickly once you flip the framing. Don't ask "should we use RAG?" Ask "what does our agent need to know to get this right, and who decides what it sees?"

If the answer is "it needs one set of docs and anyone can see them," RAG is the right tool. Ship it, move on. If the answer involves multiple conflicting sources, permission boundaries, stale-vs-current arbitration, or agents that act rather than answer, you need context engineering, and RAG will likely be one component inside it.

Gartner estimates that by 2026, more than 80% of enterprises will have deployed generative AI in production, up from fewer than 5% in early 2023 (Gartner, 2025). As that adoption scales, the gap between retrieval and decision-grade context will widen. Teams that invest in context engineering now will ship agents that survive review. Teams that stop at RAG will keep bouncing PRs.

Start with the question your agent needs to answer. Work backward to the context it needs. The tool choice follows from there.

For a complete framework, see the context engineering guide.