The 5 Criteria That Define a Context Engine (And Which Tools Meet Them)

Dennis Pilarinos·April 28, 2026


Key Takeaways

• A real context engine must meet 5 criteria: many sources, permissions, conflict resolution, temporal reasoning, and user-aware context

• 84% of developers use or plan to use AI coding tools (Stack Overflow Developer Survey, 2025), yet most context tools fail at least two criteria

• The 5th criterion, knowing WHO is asking and WHY, is where every competitor breaks down

• Unblocked is the only tool that scores 5/5; most "context engines" are retrieval layers with new branding


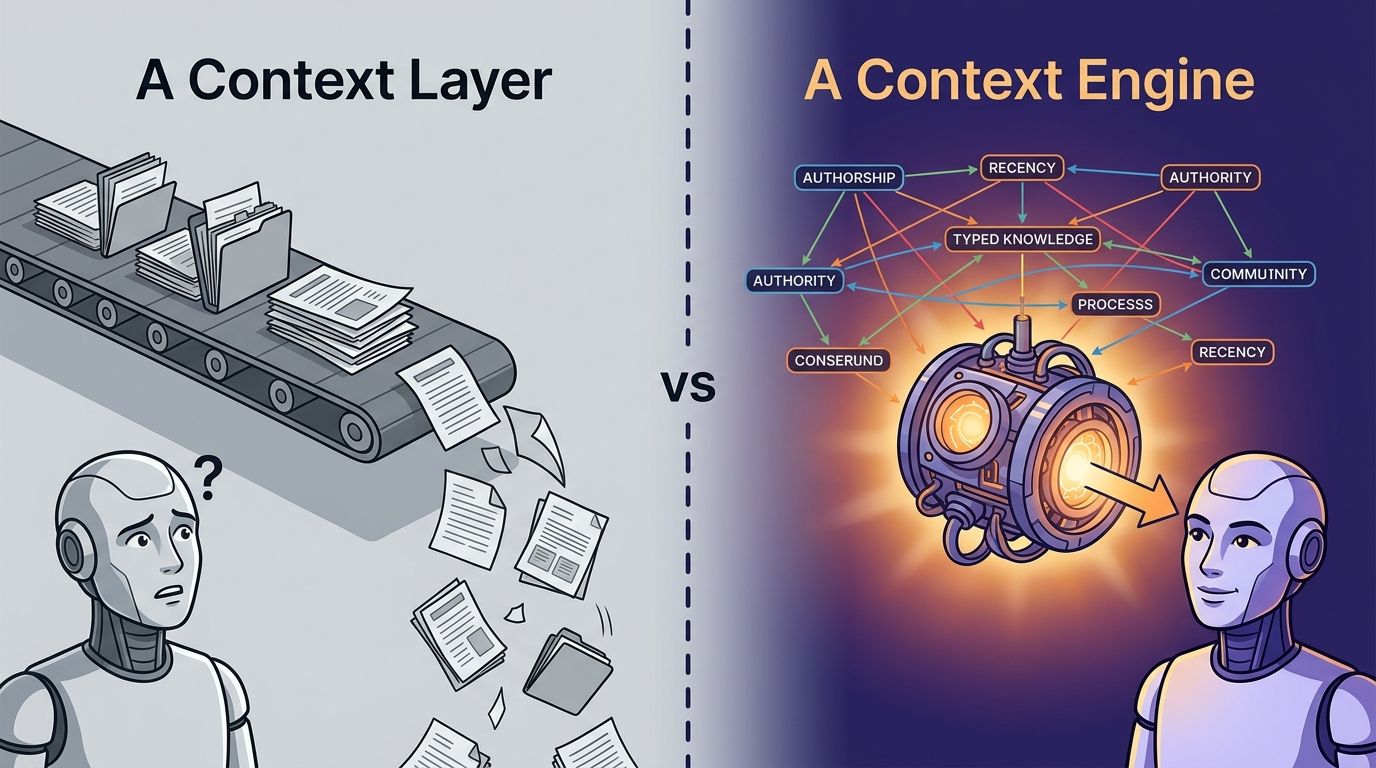

"Context engine" is the most overloaded phrase in enterprise AI right now. Vector databases claim it. IDE plugins claim it. Enterprise search vendors rebranded existing products and claimed it. Orchestration frameworks ship SDKs and claim you can build one yourself. When a category label means everything, it means nothing. And buyers are paying real money for tools that miss the bar by a mile.

Here's the uncomfortable reality. Most products marketed as context engines fail at least two of the five core criteria. Some fail all five. The Stanford HAI AI Index found that 78% of organizations now use AI in at least one business function (Stanford HAI AI Index, 2025), yet only a fraction have invested in the context infrastructure needed to make that AI reliable. That gap, between adoption and context readiness, is what the rubric below is built to expose.

---

Criterion 1: Does It Pull From Many Systems?

A context engine must read across code, pull requests, Slack or Teams, docs, tickets, and meeting notes, not just one source. GitHub's 2025 Octoverse report found the average engineering org uses 10 or more distinct developer tools daily (GitHub Octoverse, 2025). If your "context engine" only reads code, it's a code search tool wearing a new jacket.

Engineering knowledge doesn't live in repositories alone. It lives in the PR where someone explained why the retry logic changed, the Slack thread where three senior engineers argued about architecture, the Jira ticket that tracked the eventual decision, and the meeting where leadership reversed course. A tool that reads only one of those sources gives you a fraction of the picture.

What fragment solutions get wrong

Most tools pick a lane. Code assistants read code. Enterprise search reads documents. Vector databases store embeddings of whatever you dump into them. Each one claims to be "complete context" inside its own silo. None of them can answer "why was this decision made?" because the answer spans four systems the tool doesn't see.

What full pass looks like

Full pass means native, first-class ingestion from every engineering surface: Git hosts, issue trackers, chat tools, documentation platforms, meeting transcripts, and design docs. It means the tool resolves entities across those systems so a function, a PR, a Slack thread, and a ticket all connect to the same concept. Not a forest of disconnected documents.

The average engineering org uses 10 or more distinct developer tools daily (GitHub Octoverse, 2025), so a true context engine pulls from 10+ distinct systems as a baseline, resolves entities across them, and returns synthesized answers with source-level citations. Anything less is retrieval against a subset, not context synthesis.
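To make "resolves entities across those systems" concrete, here is a minimal sketch of one narrow linking strategy: grouping artifacts that mention the same ticket key. Everything in it is an assumption for illustration, including the `Artifact` shape, the key pattern, and the sample data. A production engine would need far more than string matching.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    source: str  # illustrative labels: "github", "slack", "jira"
    id: str
    text: str


# Hypothetical reference pattern: ticket keys such as "PAY-142".
REF_PATTERN = re.compile(r"\b[A-Z]{2,}-\d+\b")


def link_by_reference(artifacts):
    """Group artifacts from different systems that mention the same key."""
    groups = defaultdict(list)
    for artifact in artifacts:
        # Search the id as well, so a ticket links to its own key.
        haystack = f"{artifact.id} {artifact.text}"
        for key in set(REF_PATTERN.findall(haystack)):
            groups[key].append(artifact)
    return dict(groups)


# Made-up sample data.
artifacts = [
    Artifact("jira", "PAY-142", "Retry storms on checkout"),
    Artifact("github", "pr-a", "Switch to jittered backoff (PAY-142)"),
    Artifact("slack", "thread-b", "Are we sure about PAY-142? Fixed intervals were simpler."),
    Artifact("github", "pr-c", "Unrelated cleanup of the contributor guide"),
]

linked = link_by_reference(artifacts)
for key, members in linked.items():
    print(key, [f"{m.source}:{m.id}" for m in members])
```

The ticket, the PR, and the Slack thread land in one group, while the unrelated PR stays out. That grouping is the difference between one connected concept and a forest of disconnected documents.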

---

Criterion 2: Does It Respect Permissions?

A context engine must enforce per-user ACLs, inherit source-system permissions, and never leak data across org boundaries. Gartner's 2025 AI TRiSM research found that 65% of enterprises cite data governance as the top barrier to AI deployment (Gartner AI TRiSM, 2025). Permission leaks are the fastest way to get your context engine ripped out of production.

If an engineer on Team A queries the system, they should only see information they already have access to in the source tools. Not a single Slack message from a private channel they're not in. Not a Confluence page locked to another department. Not a ticket from a project they can't open. The engine must mirror the permission model of every system it indexes.

What fragment solutions get wrong

Many retrieval tools index everything into a shared vector store with permissions treated as an afterthought. Others ask you to build permission filters yourself using metadata tags. Both approaches fail the moment a Slack channel is renamed, a team boundary shifts, or a user changes roles. The leak is not hypothetical.

What full pass looks like

Full pass means live permission inheritance: the engine checks the source system's current ACL at query time, not at index time. It honors workspace boundaries, private channels, repo visibility, and team-scoped tickets. And it does all of this without requiring your team to configure a parallel permission system.

Gartner found that 65% of enterprises cite data governance as the top barrier to AI deployment (Gartner AI TRiSM, 2025), and permission leakage is the governance failure teams fear most. A context engine that skips live permission inheritance isn't enterprise-ready, full stop.
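The difference between checking ACLs at query time and at index time is easier to see in code. The sketch below is illustrative only: `Chunk`, `filter_at_query_time`, and the in-memory `acl` dictionary are hypothetical stand-ins, and a real engine would call each source system's own permission API instead of a dictionary lookup.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Chunk:
    source: str       # system the chunk came from
    resource_id: str  # channel, page, repo, or ticket identifier
    text: str


# Hypothetical signature: (user_id, source, resource_id) -> bool.
AccessCheck = Callable[[str, str, str], bool]


def filter_at_query_time(
    user_id: str, candidates: Iterable[Chunk], can_access: AccessCheck
) -> List[Chunk]:
    """Keep only chunks the user can open in the source system right now."""
    return [c for c in candidates if can_access(user_id, c.source, c.resource_id)]


# Stand-in for the source systems' live ACLs (made-up data).
acl = {
    ("slack", "#payments-private"): {"ana"},
    ("github", "payments-api"): {"ana", "ben"},
}


def can_access(user_id: str, source: str, resource_id: str) -> bool:
    return user_id in acl.get((source, resource_id), set())


candidates = [
    Chunk("slack", "#payments-private", "We agreed to drop the legacy gateway."),
    Chunk("github", "payments-api", "Remove legacy gateway client."),
]

visible_before = filter_at_query_time("ana", candidates, can_access)

# Ana leaves the private channel; nothing is re-indexed.
acl[("slack", "#payments-private")].discard("ana")
visible_after = filter_at_query_time("ana", candidates, can_access)

print(len(visible_before), len(visible_after))
```

Because the check runs on every query, a membership change takes effect immediately. An index-time filter would keep serving the private message until the next re-index.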

---

Criterion 3: Does It Resolve Conflicts and Gaps?

A context engine must reconcile stale docs against current code, surface contradictions between sources, and identify what's missing. Chroma Research's 2025 analysis on long-context failure modes showed that retrieval systems degrade sharply when sources disagree, with answer accuracy dropping as context length grows (Chroma Research Context Rot, 2025). Conflict resolution is where retrieval ends and reasoning begins.

Real engineering knowledge is contradictory. A README says one thing. The code says another. The Slack thread says the README is wrong but nobody updated it. A retrieval tool returns all three and makes you sort it out. A context engine reads all three, notices the conflict, and tells you which one is current with evidence.

What fragment solutions get wrong

RAG pipelines return top-k chunks. If three chunks contradict, the LLM picks one, often the most recent or most frequent, and confidently states it as fact. No flag. No "these sources disagree." No surfacing of the underlying conflict. The user gets a clean answer that happens to be wrong.

What full pass looks like

Full pass means the engine explicitly detects contradictions, weighs source authority (merged code outranks a stale doc), and surfaces gaps (you asked about X, but nobody documented Y, which X depends on). The output says "sources disagree" when they do, not "here's the confident answer" followed by a hallucination.

Chroma Research's 2025 study documented a phenomenon called "context rot," where accuracy falls as input context grows, especially when sources contradict (Chroma Research, 2025). A real context engine reasons past this by resolving conflicts before generation, not after.
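Here is a rough sketch of what "detect the contradiction, weigh authority, surface the gap" could look like before any text reaches the model. The `Claim` shape, the `AUTHORITY` ranking, and the sample data are all assumptions. The only ordering taken from the text above is that merged code outranks a stale doc; where chat sits in that ranking is a guess.

```python
from dataclasses import dataclass
from datetime import date

# Assumed ranking, higher wins. Only "merged code beats a doc" comes
# from the article; the position of chat is illustrative.
AUTHORITY = {"merged_code": 3, "chat": 2, "doc": 1}


@dataclass(frozen=True)
class Claim:
    source_type: str
    source_ref: str
    topic: str
    value: str
    as_of: date


def resolve(claims, topic):
    """Pick the most authoritative claim and report disagreement or gaps."""
    relevant = [c for c in claims if c.topic == topic]
    if not relevant:
        return {"status": "gap", "topic": topic}
    # Authority first, recency as the tie-breaker.
    best = max(relevant, key=lambda c: (AUTHORITY.get(c.source_type, 0), c.as_of))
    dissenting = [c for c in relevant if c.value != best.value]
    return {
        "status": "conflict" if dissenting else "consistent",
        "answer": best.value,
        "evidence": best.source_ref,
        "dissenting": [c.source_ref for c in dissenting],
    }


# Made-up sample data.
claims = [
    Claim("doc", "README", "retry_policy", "fixed interval", date(2023, 1, 10)),
    Claim("merged_code", "retry module on main", "retry_policy", "jittered backoff", date(2025, 2, 3)),
    Claim("chat", "slack thread", "retry_policy", "jittered backoff", date(2025, 2, 4)),
]

retry = resolve(claims, "retry_policy")
timeout = resolve(claims, "timeout_policy")
print(retry)
print(timeout)
```

The useful part is the output shape. The answer carries its evidence, the README is named as the dissenting source, and a topic nobody documented comes back as an explicit gap rather than a guess.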

---

Criterion 4: Does It Reason Across Time and Sources?

A context engine must understand chronology: what was true then, what's true now, and how decisions evolved. McKinsey's 2025 State of AI report found that 72% of organizations deploying AI cite "lack of institutional memory" as a major productivity drag (McKinsey State of AI, 2025). Point-in-time snapshots can't capture how a system evolved.

Why does a service use exponential backoff? Because in 2023, fixed intervals caused a thundering herd. The decision was documented in a PR, discussed in Slack, and superseded in 2025 when the team moved to jittered backoff. A point-in-time tool returns whatever is current. A context engine returns the full arc.

Can a vector database tell you that a 2024 decision was reversed in 2026 by a specific PR? No, it cannot. It can only return text that looks similar to your query.

What fragment solutions get wrong

Vector stores treat documents as timeless. Code search tools show you the current state of main. Enterprise search ranks by freshness but has no model of "supersession." None of these tools can answer "what changed and why?" because they have no temporal reasoning layer.

What full pass looks like

Full pass means the engine builds a timeline of decisions, detects when one decision supersedes another, and returns answers anchored in chronology. When asked why a pattern exists, it doesn't just return the current code. It traces the pattern back to the PR that introduced it, the Slack thread that debated it, and, if the decision was later reversed, the ticket that reversed it.

McKinsey's 2025 report found that 72% of AI-deploying organizations point to missing institutional memory as a productivity blocker (McKinsey, 2025). Temporal reasoning, tracking how decisions evolved over time, is the specific capability that recovers that memory.
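A small sketch of a supersession-aware timeline, using the backoff story from earlier in this section. The `Decision` record, its `supersedes` field, and the exact dates are hypothetical. The point is only that "what was true then" and "what is true now" are different queries over the same data.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Decision:
    id: str
    topic: str
    summary: str
    decided_on: date
    supersedes: Optional[str] = None  # id of the decision this one replaces


def timeline(decisions, topic):
    """All decisions on a topic, oldest first."""
    return sorted(
        (d for d in decisions if d.topic == topic), key=lambda d: d.decided_on
    )


def current_as_of(decisions, topic, when):
    """The decision in force on a given date, or None if none existed yet."""
    known = [d for d in timeline(decisions, topic) if d.decided_on <= when]
    superseded = {d.supersedes for d in known if d.supersedes}
    live = [d for d in known if d.id not in superseded]
    return live[-1] if live else None


# Made-up dates; the years follow the example in the text.
decisions = [
    Decision("d1", "retry_policy", "Exponential backoff replaces fixed intervals", date(2023, 5, 1)),
    Decision("d2", "retry_policy", "Jittered backoff", date(2025, 3, 1), supersedes="d1"),
]

then = current_as_of(decisions, "retry_policy", date(2024, 1, 1))
now = current_as_of(decisions, "retry_policy", date(2026, 1, 1))
print(then.summary, "->", now.summary)
```

A similarity search over the same two records would happily return either one. The explicit `supersedes` link is what lets the engine say which decision replaced which, and when.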

---

Criterion 5: Does It Incorporate the User Asking the Question?

A context engine must tailor answers to the asker's role, team, codebase focus, and current task. Anthropic's 2025 research on agent evaluation showed that task-aware prompting improves code agent accuracy by up to 31% compared to generic retrieval (Anthropic Research, 2025). Most tools treat every query identically. That's the single biggest gap in the category.

Two engineers can type the exact same question and need completely different answers. A frontend engineer asking about authentication needs different context than a platform engineer asking about authentication. A new hire asking "why does this work this way?" needs history. A senior engineer asking the same question probably needs to know what changed last week. Context without user context is a library, not an answer.

What fragment solutions get wrong

Most products on the market treat queries as stateless strings. The tool doesn't know if you're on the payments team or the infra team. It doesn't know what you were just working on. It doesn't know whether you wrote the code you're asking about or are trying to understand it for the first time. The result is the same answer for every asker, which means the answer is optimized for no one.

What full pass looks like

Full pass means the engine captures role (IC vs staff vs manager), team membership, recent work, and the current task. It weights retrieval toward sources relevant to that profile. It prefers evidence from the asker's team's conventions over global defaults. When the asker is new, it leans into history and rationale. When the asker is senior and deep in a task, it leans into recency and current state.

Anthropic's 2025 evaluation research found that injecting user-task context lifted agent accuracy by up to 31% on real-world engineering benchmarks (Anthropic Research, 2025). User-aware context is not a nice-to-have. It's the difference between a system that ships answers and a system that ships the right answer to the right person.
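To illustrate how the same query can produce different rankings for different askers, here is a toy re-ranker. The `Asker` and `Hit` shapes, the boost values, and the seven-day recency window are invented for the example. Real profile signals would be richer and the weights would be tuned rather than hard-coded.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Asker:
    team: str
    is_new_hire: bool


@dataclass(frozen=True)
class Hit:
    ref: str
    team: str
    kind: str          # "rationale" or "change"
    updated: date
    base_score: float  # relevance from ordinary retrieval


def rerank(hits, asker, today):
    """Re-order retrieval hits using what is known about the asker."""

    def score(hit):
        s = hit.base_score
        if hit.team == asker.team:
            s += 0.2  # assumed boost for the asker's own team
        if asker.is_new_hire and hit.kind == "rationale":
            s += 0.3  # new hires lean into history and rationale
        if not asker.is_new_hire and (today - hit.updated).days <= 7:
            s += 0.3  # experienced askers lean into recent changes
        return s

    return sorted(hits, key=score, reverse=True)


# Made-up sample data.
today = date(2026, 4, 28)
hits = [
    Hit("pr that introduced the auth flow", "platform", "rationale", date(2023, 3, 1), 0.5),
    Hit("pr merged last week", "platform", "change", date(2026, 4, 24), 0.5),
]

for_new_hire = rerank(hits, Asker("platform", is_new_hire=True), today)
for_senior = rerank(hits, Asker("platform", is_new_hire=False), today)
print(for_new_hire[0].ref, "|", for_senior[0].ref)
```

Both askers typed the same question and retrieval scored both hits identically. The only thing that changed the top result was the profile.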

---

How Major Tools Score Against the 5 Criteria

The rubric above is only useful if applied. Here's how the major tools marketed as context engines, or adjacent to the category, score across all five criteria. This scorecard reflects current product capabilities as of Q2 2026 and draws on Forrester's 2025 AI Tooling Wave analysis of developer productivity platforms (Forrester, 2025).

| Tool | 1. Many systems | 2. Permissions | 3. Conflicts/gaps | 4. Time/sources | 5. User context | Total |
| --- | --- | --- | --- | --- | --- | --- |
| Unblocked | ✅ | ✅ | ✅ | ✅ | ✅ | 5/5 |
| Glean | ✅ | ✅ | ⚠️ | ⚠️ | ❌ | 2/5 (+ 2 partial) |
| Sourcegraph | ⚠️ code-centric | ✅ | ⚠️ | ⚠️ | ❌ | 1/5 (+ 3 partial) |
| Augment Code | ❌ | ⚠️ | ❌ | ❌ | ⚠️ | 0/5 (+ 2 partial) |
| Cursor | ❌ user wires it | ⚠️ | ❌ | ❌ | ⚠️ | 0/5 (+ 2 partial) |
| LangChain / LlamaIndex | ⚠️ you build it | ⚠️ you build it | ❌ | ❌ | ❌ | 0/5 (+ 2 partial) |
| Pinecone / Weaviate | ❌ storage only | ❌ | ❌ | ❌ | ❌ | 0/5 |
| Dust | ✅ | ⚠️ | ⚠️ | ❌ | ❌ | 1/5 (+ 2 partial) |

How to read this table

A check means the tool ships the capability as a first-class product feature, out of the box, without user configuration. A warning means partial: it works under specific conditions, requires meaningful setup, or covers only part of the criterion. A cross means the capability is absent or must be built from scratch by the user.
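If you want to apply the same scoring to tools that aren't in the table, the Total column is simple arithmetic: count full passes, then note partials separately. A short sketch follows; the function name and the `"pass"` / `"partial"` / `"fail"` labels are arbitrary choices for this example, and the two rows are copied from the table above.

```python
def total(marks):
    """Format a scorecard total from per-criterion marks.

    Each mark is "pass", "partial", or "fail".
    """
    full = marks.count("pass")
    partial = marks.count("partial")
    label = f"{full}/{len(marks)}"
    if partial:
        label += f" (+ {partial} partial)"
    return label


# Rows copied from the scorecard above.
glean = ["pass", "pass", "partial", "partial", "fail"]
pinecone = ["fail", "fail", "fail", "fail", "fail"]

print(total(glean))
print(total(pinecone))
```

Partials never count toward the headline score. A tool that is partial on every criterion still totals 0/5, which is the point of requiring first-class, out-of-the-box capability.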

The pattern nobody wants to admit

Most tools cluster in two groups. Enterprise search products (Glean, Dust) cover breadth and, to varying degrees, permissions, but are partial at best on conflict resolution and reasoning across time, and they miss user context entirely. Developer tools (Sourcegraph, Cursor, Augment Code) cover code but fall short on breadth and temporal reasoning. The frameworks (LangChain, LlamaIndex) and vector stores (Pinecone, Weaviate) are infrastructure, not products. You build the context engine on top of them; they don't ship one.

Forrester's 2025 developer tooling analysis noted that the context layer is the fastest-fragmenting segment in enterprise AI, with most vendors occupying a partial slot rather than the full stack (Forrester, 2025). That fragmentation is why the 5/5 slot is currently empty for everyone except the engines built for it from day one.

---

Why the 5th Criterion Is the Hardest

The first four criteria are hard engineering problems. The fifth, user-aware context, is a product philosophy problem, and that's why nearly every competitor fails it. The Pragmatic Engineer's 2025 analysis of AI developer tools found that most products ship identical responses to every user because personalization was deprioritized in favor of faster shipping (The Pragmatic Engineer, 2025). The consequence: tools that feel generic in week two.

Building multi-source retrieval is hard but well-understood. Permission inheritance is hard but well-understood. Conflict resolution and temporal reasoning require research, but the patterns exist. User context is different. It requires the product to model the asker, not just the question. Most teams don't ship this because it's invisible in a demo. And demos are how deals close.

What user context actually requires

Capturing role, team, recent work, and current task requires the engine to have a persistent identity for each user, a model of the org chart, and a live feed of what the user has been doing recently. That's not a feature flag. It's a product architecture decision that has to be made on day one, because retrofitting it means rebuilding retrieval around a new primary key.

Why demos hide this gap

In a demo, the vendor is the asker. They know what question they're going to type. They know what answer they want to show. User-aware context is invisible in that setting because there's only one user. The gap shows up in week two of real use, when four different engineers ask the same question and get the same answer, and three of them walk away thinking "this tool doesn't get what I'm doing."

---

What Changes When a Tool Scores 5/5?

When a single system meets all five criteria, three things change. First, adoption stops being seat-by-seat and becomes team-by-team because the answers get better as more users onboard. Second, agents stop hallucinating on questions that span sources because the engine reconciles conflicts before generation. Third, senior engineers stop being the human context layer for the rest of the team.

The New Stack's 2025 survey of engineering leaders found that 67% of respondents cited "tribal knowledge trapped in senior engineers' heads" as the single biggest scaling constraint on their teams (The New Stack, 2025). A 5/5 context engine unblocks that constraint. It extracts the knowledge, resolves it, and delivers it with awareness of who's asking.

Does this sound like hype? Check the rubric against any tool you're evaluating. If the tool doesn't meet all five criteria, the knowledge stays trapped.

---

Frequently Asked Questions

What makes a context engine different from enterprise search?

Enterprise search returns a ranked list of documents. A context engine returns a synthesized, source-cited answer that resolves conflicts and accounts for time. IDC's 2025 research on knowledge work productivity found that synthesized answers reduce decision time by 45% compared to ranked result lists (IDC, 2025). Different outputs for different jobs.

Does any tool besides Unblocked meet all 5 criteria?

As of Q2 2026, no. Enterprise search tools cover breadth and permissions but miss temporal reasoning and user context. Developer tools cover code but miss multi-source breadth. Frameworks and vector stores are infrastructure. Forrester's 2025 analysis confirmed that the category is fragmented, with no other vendor currently holding a full 5/5 position (Forrester, 2025).

Can I build a context engine with LangChain?

You can build components of one, but you'll be assembling retrieval, permissions, conflict resolution, and user-context logic yourself. IEEE's 2025 study on engineering platform TCO found internally-built AI infrastructure costs 3-5x more over three years than purchased platforms (IEEE Software, 2025). Frameworks give you parts; they don't give you a context engine.

How do I evaluate a context engine for my team?

Score every tool you evaluate against the 5 criteria in this post. Insist on a live test against your own repos, Slack, and ticket data. Ask vendors to demo permission enforcement and temporal reasoning, not just retrieval speed. Anthropic's 2025 evaluation research underscored that real-world benchmarks beat marketing demos by a wide margin (Anthropic Research, 2025).

---

Conclusion

The context engine category is real, but most products marketed inside it aren't. Five criteria separate the real ones from the retrieval layers: many sources, permissions, conflict resolution, temporal reasoning, and user-aware context. Tools that meet three or four are useful. Tools that meet all five are a different category of product, because the whole stack of criteria compounds into something none of the pieces can deliver alone.

The 5th criterion, knowing who's asking and why, is where the category splits. Every vendor can add more connectors. Fewer can add conflict resolution. Almost none have architected around user context from day one. That architectural choice is what separates a context engine from a smarter search bar.

If you're evaluating a context engine right now, print the scorecard. Walk through it with your team. Don't take a vendor's word that they're "a context engine." Make them prove it against the five criteria. Your future self, and your agents, will thank you.

Ready to see a 5/5 context engine in action? Start a free trial of Unblocked and run the rubric against your own engineering stack.