Context Engine vs Knowledge Graph: When You Need Understanding, Not Just Data

Brandon Waselnuk·April 24, 2026

Key Takeaways

• Knowledge graphs map entities and relationships; context engines reason across sources to produce synthesized, actionable answers

• Graphs excel at structural queries ("what depends on what?") but fail at temporal reasoning and cross-source synthesis

• Context engines add conflict resolution, permission enforcement, and task-aware delivery on top of any retrieval or graph system

• The graph database market is projected to reach $5.1B by 2029 (MarketsandMarkets, 2025), yet graphs alone can't answer "why"

• Many production context engines use a knowledge graph internally, combining structural precision with reasoning depth

The graph database market is projected to reach $5.1 billion by 2029, growing at a 21.6% CAGR (MarketsandMarkets, 2025). Knowledge graphs power everything from the knowledge panels in Google Search to fraud detection systems at banks. They are very good at mapping relationships between entities. But when an engineering team asks "why did we migrate the auth service off the monolith last quarter?", the graph can only tell you which services connect to auth. It can't read the Slack debate, the rejected RFC, and the incident postmortem, then synthesize the reasoning behind the migration. That is the job of a context engine.

The context engine vs knowledge graph distinction isn't about which technology is better. It's about whether your problem is structural (what connects to what) or inferential (what does it all mean for the task in front of me). Most engineering teams need both capabilities, but they mistake having one for having the other.

For foundational definitions, see what a context engine is.

What does a knowledge graph actually do?

A knowledge graph stores entities and their relationships as structured triples: subject, predicate, object. Gartner's 2025 Hype Cycle positioned knowledge graphs as approaching the "Plateau of Productivity," noting that over 50% of large enterprises now use graph-based approaches for some form of data integration (Gartner, 2025). At their core, graphs answer structural questions with precision that relational databases struggle to match.

Gartner's 2025 research found over 50% of large enterprises use graph-based approaches for data integration (Gartner, 2025). Knowledge graphs store entities and relationships as structured triples, excelling at structural queries like "what depends on this service?"

Think of a knowledge graph as a map of your codebase or organization. Node A (the payments service) depends on Node B (the Stripe SDK). Node B was modified in PR #4521. PR #4521 was authored by Engineer C. Engineer C belongs to Team D. Every relationship is explicit, typed, and traversable.
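A minimal sketch of that chain as subject-predicate-object triples, assuming a plain in-memory index rather than any particular graph database. The predicate names (`depends_on`, `modified_in`, `authored_by`, `belongs_to`) and the `follow` helper are illustrative assumptions, not part of any real product's schema.

```python
from collections import defaultdict

# Hypothetical triples mirroring the example above; all names are illustrative.
TRIPLES = [
    ("payments-service", "depends_on", "stripe-sdk"),
    ("stripe-sdk", "modified_in", "PR #4521"),
    ("PR #4521", "authored_by", "Engineer C"),
    ("Engineer C", "belongs_to", "Team D"),
]


def build_index(triples):
    """Index triples by (subject, predicate) so each hop is a dictionary lookup."""
    index = defaultdict(list)
    for subject, predicate, obj in triples:
        index[(subject, predicate)].append(obj)
    return index


def follow(index, start, predicates):
    """Follow a chain of typed edges from `start` and return the nodes reached."""
    frontier = [start]
    for predicate in predicates:
        frontier = [
            obj
            for node in frontier
            for obj in index.get((node, predicate), [])
        ]
    return frontier


index = build_index(TRIPLES)
team = follow(
    index,
    "payments-service",
    ["depends_on", "modified_in", "authored_by", "belongs_to"],
)
print(team)
```

Because every edge is typed, asking for a predicate that does not exist on a node simply returns an empty list, which is the "explicit and traversable" property in miniature.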

What knowledge graphs do well

Graphs excel at three things. First, relationship traversal. "What services depend on the billing module?" is a short traversal that typically returns in milliseconds. Second, entity resolution. The graph knows that "auth-service," "authentication module," and "login-svc" are the same thing. Third, lineage tracking. You can trace a data field from its origin through every transformation and consumer.

These strengths make knowledge graphs invaluable for dependency mapping, impact analysis, and compliance auditing. A code knowledge graph that maps repositories, services, APIs, and their interconnections can answer "what breaks if I change this interface?" faster than any text search.
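The impact-analysis question can be sketched as a reverse breadth-first traversal combined with alias resolution. This is an illustration under stated assumptions: the alias table, the edge list, and the function names are all hypothetical, and a production graph would run the equivalent query inside the graph database.

```python
from collections import defaultdict, deque

# Hypothetical alias table: every known name maps to one canonical entity.
ALIASES = {
    "auth-service": "auth-service",
    "authentication module": "auth-service",
    "login-svc": "auth-service",
}

# Hypothetical "depends on" edges as (dependent, dependency) pairs.
DEPENDS_ON = [
    ("checkout", "billing"),
    ("billing", "login-svc"),
    ("notifications", "authentication module"),
    ("reports", "checkout"),
]


def canonical(name):
    """Resolve an alias to its canonical entity; unknown names pass through."""
    return ALIASES.get(name, name)


def impacted_by(changed, edges):
    """Return every entity that directly or transitively depends on `changed`."""
    dependents = defaultdict(set)
    for dependent, dependency in edges:
        dependents[canonical(dependency)].add(canonical(dependent))

    seen, queue = set(), deque([canonical(changed)])
    while queue:
        node = queue.popleft()
        for dependent in dependents[node]:
            if dependent not in seen:  # guards against cycles
                seen.add(dependent)
                queue.append(dependent)
    return seen


print(sorted(impacted_by("authentication module", DEPENDS_ON)))
```

Resolving aliases before building the reverse index is what lets edges written against "login-svc" and "authentication module" land on the same node.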

For a related comparison, see decision-grade context.

Where do knowledge graphs fall short for engineering teams?

Knowledge graphs fail at the reasoning layer. IEEE's 2025 survey of knowledge graph applications in software engineering found that while graphs effectively capture static relationships between code entities, they consistently underperform on tasks requiring temporal reasoning and cross-source synthesis (IEEE, 2025). The gap isn't in what the graph stores. It's in what it can't infer.

IEEE's 2025 survey found knowledge graphs underperform on temporal reasoning and cross-source synthesis tasks in software engineering (IEEE, 2025). The structural precision that makes graphs powerful for dependency mapping becomes a limitation when teams need contextual understanding.

The short version: Knowledge graphs map connections. They don't resolve conflicts between sources, track how relationships change over time, or synthesize meaning from unstructured conversations. For engineering teams running AI agents, that reasoning gap turns a structural asset into an incomplete picture.

The staleness problem

A knowledge graph is only as current as its last update. Code changes constantly. PRs merge. Services get deprecated. APIs evolve. The graph snapshot from yesterday might show a dependency that was removed this morning. Neo4j's 2025 Graph Database Management report acknowledged that continuous synchronization with live systems remains one of the top operational challenges for graph deployments at scale (Neo4j, 2025).

We've found that teams building code knowledge graphs spend as much time maintaining freshness pipelines as they do on the graph logic itself. The graph is easy to build. Keeping it accurate is the hard part.
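One small piece of such a freshness pipeline might look like the check below: flag any edge whose underlying source changed well after the edge was last synced. This is a sketch only. The edge fields (`id`, `source`, `synced_at`), the `last_changed` lookup, and the one-hour tolerance are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta


def stale_edges(edges, last_changed, max_lag=timedelta(hours=1)):
    """Return ids of edges whose source changed more than `max_lag` after the last sync."""
    stale = []
    for edge in edges:
        changed = last_changed.get(edge["source"])
        if changed is not None and changed - edge["synced_at"] > max_lag:
            stale.append(edge["id"])
    return stale


# Hypothetical graph edges with the time each was last refreshed from its source.
edges = [
    {"id": "payments->stripe-sdk", "source": "payments-repo",
     "synced_at": datetime(2025, 6, 1, 9, 0)},
    {"id": "billing->auth", "source": "billing-repo",
     "synced_at": datetime(2025, 6, 2, 9, 0)},
]

# Hypothetical "last modified" times reported by the source systems.
last_changed = {
    "payments-repo": datetime(2025, 6, 2, 8, 30),
    "billing-repo": datetime(2025, 6, 2, 8, 0),
}

print(stale_edges(edges, last_changed))
```

The check itself is trivial; the operational cost comes from collecting trustworthy `last_changed` values from every live system.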

The "why" gap

A graph can tell you that Service A calls Service B. It cannot tell you why that call exists, what alternatives were considered, or what incident in 2024 made the team add the retry logic. That reasoning lives in Slack threads, PR comments, design docs, and incident postmortems. None of those sources fit neatly into a triple store. Unstructured knowledge is where engineering decisions actually live, and knowledge graph limitations become most visible when AI agents need that context to act.

Permission complexity

Knowledge graphs typically enforce access control at the graph level, not at the source level. If a node was created from data in a private Slack channel and a restricted Jira project, the graph itself doesn't inherit those permission boundaries. Stanford HAI's 2025 AI Index noted that access control in multi-source AI systems remains an unsolved problem in most production deployments (Stanford HAI AI Index, 2025). A knowledge graph that flattens permission boundaries into a single entity layer creates a security gap.

What does a context engine add on top of graph-structured data?

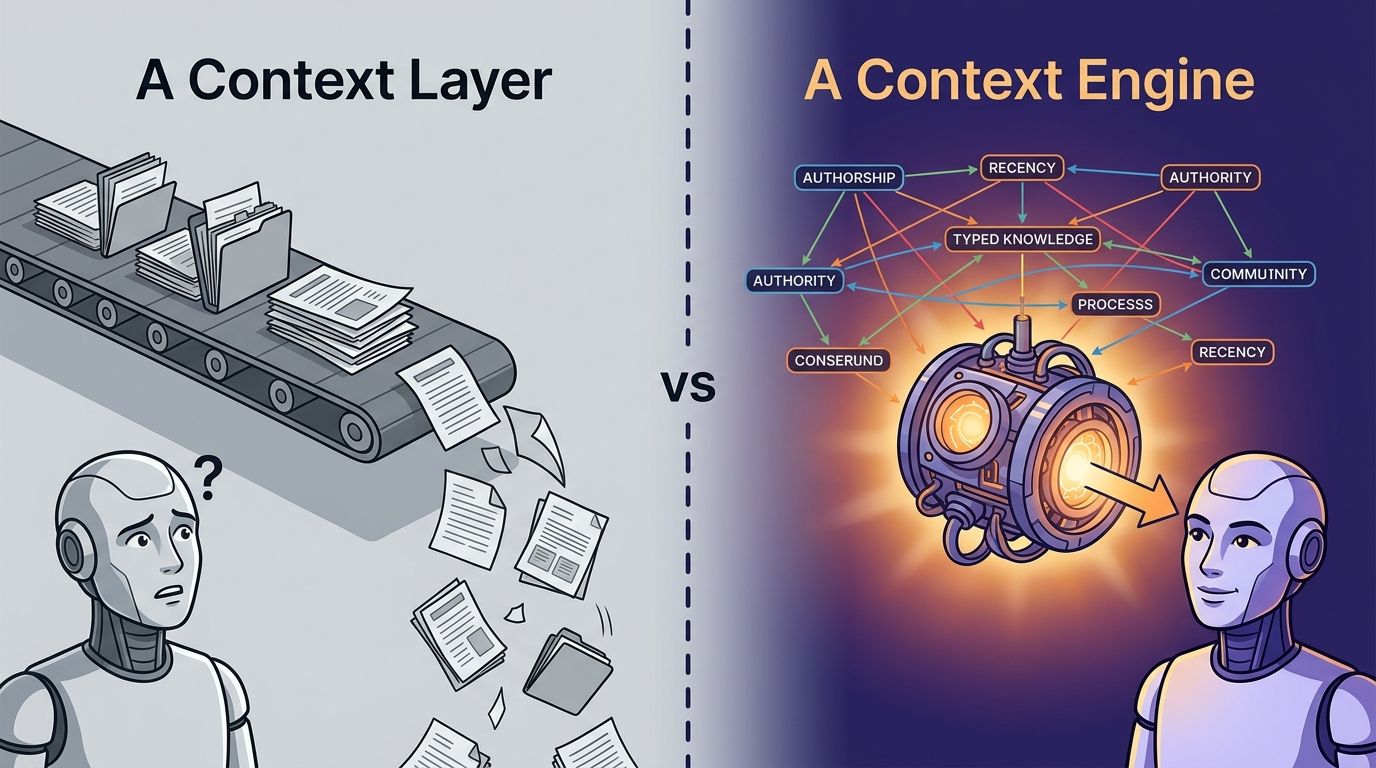

A context engine adds reasoning. Where a graph stores that "Service A depends on Service B," a context engine explains why, when that changed, who decided it, and what the rejected alternatives were. Anthropic's context engineering guide frames the core problem: agents need the right information, in the right shape, at the right time (Anthropic, 2025). A knowledge graph provides information. A context engine provides understanding.

Anthropic's context engineering guide states agents need the right information, in the right shape, at the right time (Anthropic, 2025). A context engine adds cross-source synthesis, temporal reasoning, task-aware delivery, and permission enforcement on top of what any graph or retrieval system provides.

The context engine vs knowledge graph comparison becomes clearest at four capability boundaries.

Cross-source synthesis

A context engine reads across Git history, Slack, Jira, Confluence, Notion, and code simultaneously. It doesn't just link entities. It reads the actual content, identifies conflicts, and produces one synthesized answer. When you ask "why does our checkout flow use two-phase commit?", the engine reads the RFC in Notion, the implementation PR, the Slack thread where the team debated it, and the incident that prompted the change. Then it tells you the story.

Temporal reasoning

Graphs are snapshots. Context engines understand time. They know that a Confluence doc from 2023 was superseded by a PR merged last week. They weight recent sources higher and flag stale ones. JetBrains' 2025 Developer Ecosystem Survey found that 76% of developers using AI assistants still manually verify output before committing (JetBrains, 2025). Much of that verification is catching outdated context. Temporal awareness reduces the verification tax.
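Weighting recent sources higher and flagging superseded ones can be sketched with a simple exponential decay. The half-life of 180 days, the `Source` fields, and the `supersedes` link are assumptions made for illustration; the article does not specify how any real context engine scores recency.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Source:
    id: str
    updated: date
    supersedes: Optional[str] = None  # id of an older source this one replaces


def recency_weight(updated, today, half_life_days=180):
    """Exponential decay: a source loses half its weight every `half_life_days`."""
    age_days = max((today - updated).days, 0)
    return 0.5 ** (age_days / half_life_days)


def rank_sources(sources, today):
    """Return (id, weight, is_superseded) tuples, highest weight first."""
    superseded = {s.supersedes for s in sources if s.supersedes}
    ranked = [
        (s.id, recency_weight(s.updated, today), s.id in superseded)
        for s in sources
    ]
    return sorted(ranked, key=lambda row: row[1], reverse=True)


# Hypothetical sources: an old Confluence doc and the PR that replaced it.
sources = [
    Source("confluence-doc", date(2023, 6, 1)),
    Source("pr-merged", date(2025, 5, 25), supersedes="confluence-doc"),
]

for source_id, weight, is_superseded in rank_sources(sources, today=date(2025, 6, 1)):
    print(f"{source_id}: weight={weight:.3f} superseded={is_superseded}")
```

Keeping the superseded flag separate from the weight means an old document can still be shown for historical context while being clearly marked as no longer current.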

Task-aware delivery

A knowledge graph returns the same subgraph regardless of who asks or why. A context engine shapes its response to the task. A staff engineer debugging a production issue gets different context than a new hire onboarding to the same service. The same entities, different emphasis. That's what "task-aware" means in practice.

For an architectural comparison, see context engine vs RAG.

Permission-aware reasoning

A context engine enforces permissions from every source system at query time. If the Slack channel is private, the Jira project is restricted, and the GitHub repo has limited access, the engine respects all three boundaries simultaneously. The answer changes based on what the requesting user is authorized to see. Stack Overflow's 2025 Developer Survey found that 76% of developers now use or plan to use AI tools, yet fewer than half report governance policies for AI-generated outputs (Stack Overflow, 2025). Permission-aware reasoning is table stakes for production deployments.
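A minimal sketch of query-time filtering, assuming each piece of retrieved content keeps a pointer to the system and resource it came from. The `ACCESS` table stands in for live permission checks against Slack, Jira, and GitHub; the user names, resource names, and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snippet:
    system: str    # e.g. "slack", "jira", "github"
    resource: str  # channel, project, or repository the text came from
    text: str


# Hypothetical access lists; a real system would ask each source at query time.
ACCESS = {
    ("slack", "#auth-private"): {"alice"},
    ("jira", "SEC"): {"alice", "bob"},
    ("github", "org/auth"): {"alice", "bob", "carol"},
}


def can_read(user, snippet):
    """Deny by default: resources with no access entry are treated as unreadable."""
    return user in ACCESS.get((snippet.system, snippet.resource), set())


def visible_context(user, snippets):
    """Keep only the snippets the user may read in the originating system."""
    return [s for s in snippets if can_read(user, s)]


snippets = [
    Snippet("slack", "#auth-private", "Debate about the migration"),
    Snippet("jira", "SEC", "Restricted security ticket"),
    Snippet("github", "org/auth", "Implementation PR"),
]

for user in ("alice", "bob", "carol"):
    print(user, [s.system for s in visible_context(user, snippets)])
```

Filtering before synthesis, rather than after, is the design choice that matters here: content a user cannot read never reaches the step that composes the answer.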

Can a context engine use a knowledge graph internally?

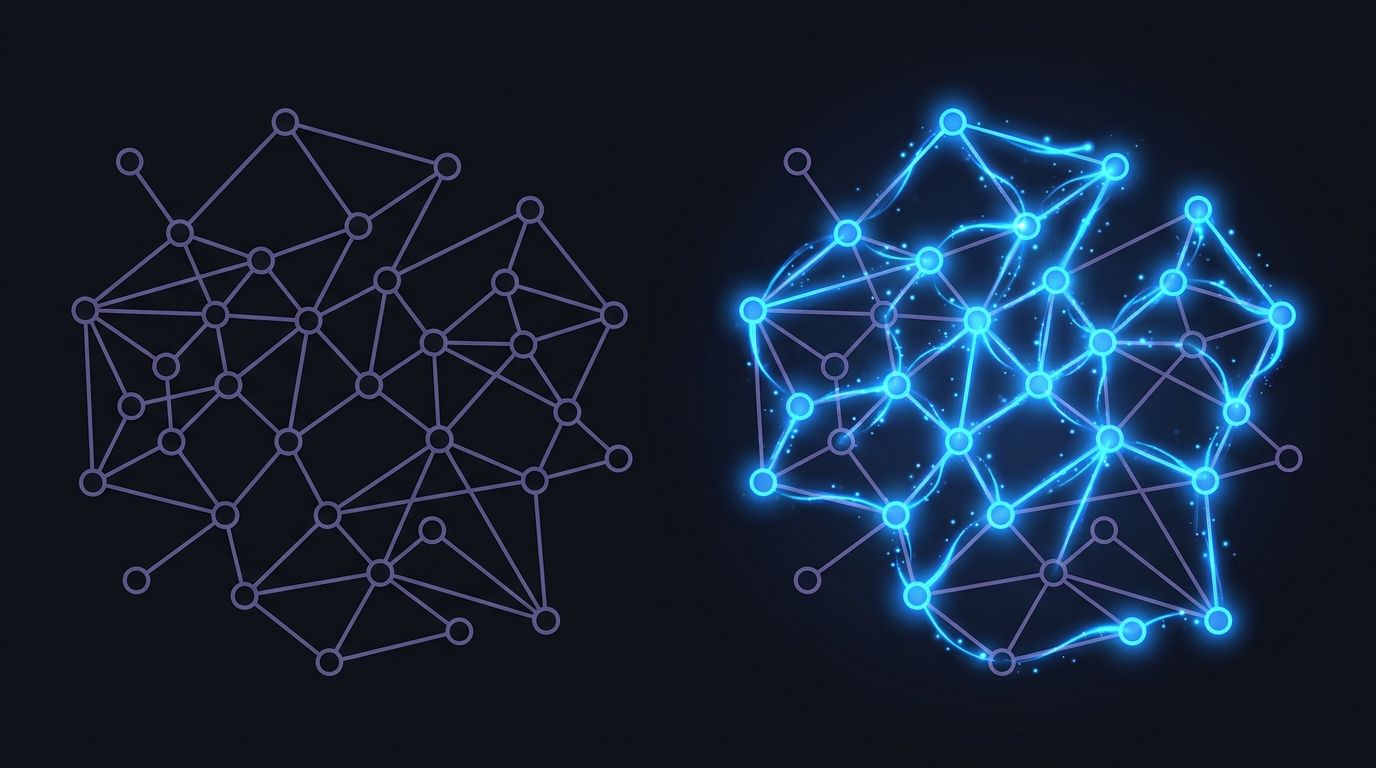

Yes, and many do. A context engine is not a replacement for a knowledge graph. It's a reasoning layer that can sit on top of one. Google DeepMind's 2025 research on retrieval-augmented architectures found that hybrid systems combining structured graph retrieval with unstructured synthesis outperform either approach alone on complex reasoning tasks (Google DeepMind, 2025). The context engine vs knowledge graph framing is misleading when it implies you must pick one.

Google DeepMind's 2025 research found hybrid systems combining graph retrieval with unstructured synthesis outperform either approach alone on complex reasoning tasks (Google DeepMind, 2025). Many production context engines use a knowledge graph internally for structural queries while adding reasoning for everything else.

In practice, the architecture looks like this: the knowledge graph handles entity resolution and relationship traversal. The context engine handles everything else: reading unstructured sources, resolving conflicts, enforcing permissions, and synthesizing the final answer. The graph provides the skeleton. The engine provides the muscle and the judgment.

How the two layers work together

Think of a question like "what's the blast radius if we deprecate the notifications microservice?" A code knowledge graph answers the structural part: fourteen services call the notifications API, three downstream consumers process its events, and two CI pipelines depend on it. The context engine adds the rest: the team discussed deprecation in Slack three months ago, the PM filed a Jira epic that stalled, the last incident involving notifications happened eight weeks ago and affected checkout, and the current owner is on PTO. Both answers matter. Neither is complete alone.
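The split between the two layers can be sketched as one function that merges a structural lookup with findings drawn from unstructured sources. Everything here is a simplified assumption: the `CALLERS` map stands in for a real graph query, the `FINDINGS` list stands in for what a reasoning layer would extract from Slack and Jira, and the three callers are placeholder data rather than the fourteen services in the example.

```python
from dataclasses import dataclass, field

# Hypothetical graph layer: service -> services that call it directly.
CALLERS = {"notifications": ["checkout", "billing", "onboarding"]}

# Hypothetical findings a reasoning layer might extract from unstructured sources.
FINDINGS = [
    {"about": "notifications", "source": "slack",
     "summary": "Deprecation discussed three months ago"},
    {"about": "notifications", "source": "jira",
     "summary": "Deprecation epic stalled"},
    {"about": "search", "source": "slack",
     "summary": "Index rebuild planned"},
]


@dataclass
class BlastRadius:
    service: str
    callers: list = field(default_factory=list)  # structural part, from the graph
    notes: list = field(default_factory=list)    # contextual part, from other sources


def blast_radius(service):
    """Combine the structural answer with context the graph cannot hold."""
    callers = sorted(CALLERS.get(service, []))
    notes = [
        f"{finding['source']}: {finding['summary']}"
        for finding in FINDINGS
        if finding["about"] == service
    ]
    return BlastRadius(service, callers, notes)


report = blast_radius("notifications")
print(report.callers)
print(report.notes)
```

The sketch only concatenates the two answers. The hard part a context engine takes on, deciding which findings are current and which conflict, is deliberately left out.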

Why "just build a graph" isn't enough

So why don't teams just build a knowledge graph and call it done? Because the reasoning work (synthesis, conflict resolution, temporal weighting) can't be encoded as graph traversals. You can't write a Cypher query that reads a Slack thread, weighs it against a Confluence doc, and decides which one reflects current reality. That's an inference problem, not a data structure problem. Knowledge graph limitations become architectural constraints when teams try to stretch graphs into reasoning systems.

For a broader comparison, see context engine vs enterprise search.

When should you invest in a knowledge graph vs a context engine?

The answer depends on your primary question type. Forrester's 2025 report on enterprise knowledge management found that organizations with mature knowledge management practices are 2.4x more likely to report high employee productivity (Forrester, 2025). But "mature" means matching the right tool to the right problem, not defaulting to whatever sounds most sophisticated.

Forrester's 2025 research found organizations with mature knowledge management are 2.4x more likely to report high employee productivity (Forrester, 2025). Choosing between a knowledge graph and a context engine depends on whether your bottleneck is structural or inferential.

Choose a knowledge graph when

Invest in a knowledge graph when your primary questions are structural. "What depends on this service?" "Which teams own which APIs?" "What's the data lineage from ingestion to dashboard?" These are graph problems. If your team needs a code knowledge graph for dependency mapping, impact analysis, or compliance tracing, a graph is the right foundation.

Choose a context engine when

Invest in a context engine when your questions are inferential. "Why does this service exist?" "What's been tried before?" "What does this engineer need to know before touching this module?" These are reasoning problems. If your AI agents need to act on organizational context without human verification, you need a context engine. The knowledge graph vs context engine decision often comes down to whether your bottleneck is finding relationships or understanding their meaning.

We've noticed a pattern: teams that start with a knowledge graph and try to add reasoning later end up rebuilding the reasoning layer from scratch. Teams that start with a context engine and add graph-structured data later have an easier integration path. The reasoning layer is the harder problem. Start there.

The decision framework

| Your Primary Need | Best Fit | Why |
| --- | --- | --- |
| Dependency mapping | Knowledge graph | Structural queries, relationship traversal |
| Impact analysis | Knowledge graph | Multi-hop entity connections |
| "Why does this exist?" | Context engine | Cross-source synthesis, temporal reasoning |
| Agent autonomy | Context engine | Permission-aware, conflict-resolved answers |
| Both structural + inferential | Context engine with graph layer | Reasoning on top of structured relationships |

As Olli Draese, Technical Architect at Cribl, put it: "Unblocked is our number one tool to find information we should know but don't." That's the context engine value proposition in one sentence. Not mapping what you already know exists, but surfacing the institutional knowledge you didn't know to look for.

FAQ

Is a knowledge graph the same as a context engine?

No. A knowledge graph stores entities and relationships as structured data, answering "what connects to what." A context engine reasons across multiple sources, including graphs, to answer "what does it mean and why." Gartner found that over 50% of large enterprises use graph-based data integration (Gartner, 2025), but graphs alone don't synthesize unstructured knowledge like Slack threads or PR comments into actionable answers.

Can a knowledge graph replace RAG for AI agents?

A knowledge graph improves on basic RAG by providing structured relationships instead of similarity-based retrieval. However, it doesn't solve RAG's core limitations: conflict resolution, permission enforcement, and cross-source synthesis. Google DeepMind's 2025 research found hybrid systems outperform either approach alone (Google DeepMind, 2025). For deeper analysis, see our context engine vs RAG comparison.

What is a code knowledge graph?

A code knowledge graph maps entities in a codebase, such as services, APIs, functions, teams, and dependencies, as nodes and edges. It answers structural questions like "what calls this API?" or "which team owns this module?" Its limitations appear when you need temporal context or reasoning about why code exists in its current form, which requires a context engine layer.

Do I need both a knowledge graph and a context engine?

It depends on your question types. If you only need dependency mapping and impact analysis, a knowledge graph may suffice. If your AI agents need to act autonomously on organizational context, you need a context engine. Many production systems use both: the graph for structural queries and the context engine for reasoning. Forrester found that organizations with mature knowledge management practices are 2.4x more likely to report high employee productivity (Forrester, 2025).

Data Structure vs Data Understanding

The context engine vs knowledge graph debate resolves once you separate two questions. "What connects to what?" is a graph problem. "What does it all mean for the task in front of me?" is a reasoning problem. Most engineering teams eventually need both, but the order matters.

Knowledge graphs give you structure. Context engines give you understanding. The graph tells you that Service A depends on Service B. The context engine tells you why, what broke last time someone changed that dependency, and what the team decided to do about it in a Slack thread three months ago.

If your AI agents are acting on organizational knowledge, they need more than a map. They need a system that reads, resolves, and reasons. That's the gap a context engine fills, and it's the gap that determines whether your agents produce confident guesses or trustworthy answers.

Start with what a context engine is to understand the foundation, then explore how it compares to RAG and enterprise search to see where each tool fits.