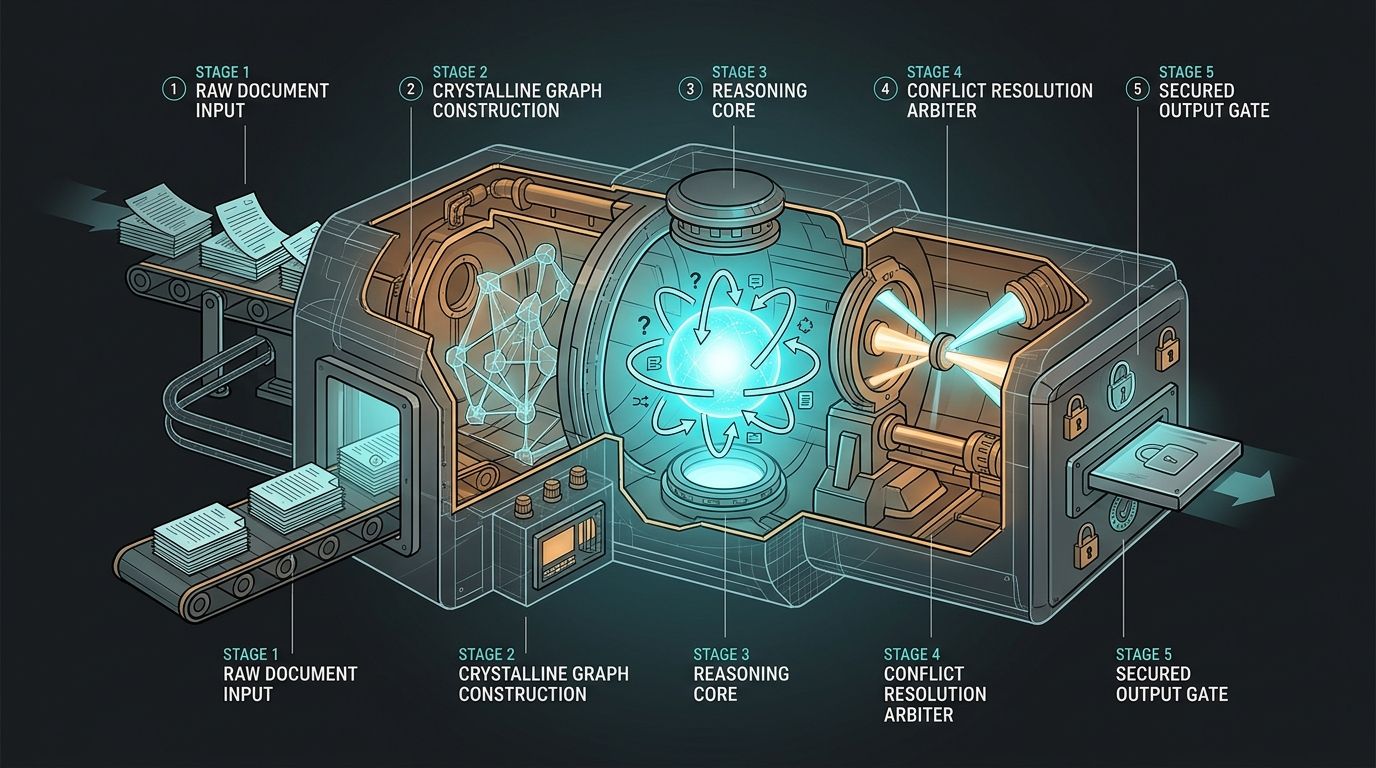

In brief: A context engine is a five-stage pipeline: ingestion, graph construction, retrieval-and-reasoning, conflict resolution, and permissioned delivery. The hard engineering is not in any single stage. It is in keeping all five current, consistent, and fast enough that an agent can call the system inside a single tool-use turn without timing out.

How does a context engine work? At a high level, it ingests every source your engineering org writes into (code, pull requests, Slack, Jira, Notion, Confluence, design docs), builds a continuously-updated graph over that data, then serves a retrieval-plus-reasoning loop that resolves conflicts, weights sources by authority, and returns a single decision-grade answer through a permissions layer. The output is not a list of documents. It is a synthesized response an agent or developer can act on without re-verifying it. That is the bar, and that is what separates the context engine for engineering from a faster search box.

This article walks the architecture stage by stage. If you are evaluating build-vs-buy, staffing a platform team to stand one up, or just trying to figure out why your Copilot-plus-vector-store stack keeps shipping plausible-but-wrong code, the plumbing below is the part that actually matters.

What are the four stages of a context engine?

How a context engine works comes down to four stages plus a permissions boundary: ingestion, graph construction, retrieval-and-reasoning, and conflict resolution. Permissions cut across all of them. Each stage is a distinct engineering problem rather than a single retrieval box wired to a language model.

Most teams, when they first sketch a context engine on a whiteboard, draw a box labeled "retrieval" and a box labeled "LLM" and an arrow between them. That is not how a context engine works. A production system has at least four distinct stages plus a permissions boundary that cuts across all of them.

The four stages are:

- Ingestion. Pull raw content from every source that holds engineering context: Git, the PR platform, Slack, Jira, Notion, Confluence, Google Docs, incident management, design tools. Normalize it into a common schema. Chunk code along semantic boundaries rather than fixed token windows.

- Graph construction. Turn the normalized stream into a typed graph. Nodes are code symbols, PRs, messages, tickets, people. Edges are authorship, review, reference, mention, ownership, and temporal succession. This is the substrate every other stage queries.

- Retrieval and reasoning. When a query comes in, dispatch parallel retrievers (semantic, lexical, structural, temporal), then feed the union into a reasoning pass that scores candidates against the task, not the string. Anthropic's contextual retrieval research (anthropic.com/news/contextual-retrieval) reports that prepending document-level context to each chunk before embedding and BM25 indexing cuts top-20 retrieval failure rates by roughly half (49%), and that adding a reranking pass brings the reduction to roughly two-thirds (67%).

- Conflict resolution and authority weighting. Sources disagree. The engine identifies where signals contradict, weights them by author authority, recency, code ownership, and review density, and emits a single resolved view with provenance.

Why do all five layers have to hold?

When engineers ask how a context engine works, the honest answer is that it works when every one of the five layers holds together. Miss one and the whole pipeline degrades into something worse than a well-tuned search box.

Wrapping all four stages is the permissions layer. Every node in the graph inherits an access-control profile from the system it came from, whether that is a private repo, a private Slack channel, or a restricted Jira project. Retrieval respects those profiles at query time, not at ingest time, which matters when permissions change after indexing. For how Unblocked handles that cross-source access model, see how Unblocked expands to meet more complex questions.

Miss ingestion and the graph rots. Miss the graph and retrieval reverts to brittle keyword search. Miss reasoning and you ship RAG. Miss conflict resolution and your agent confidently cites last year's deprecated design doc. Miss permissions and you have a lawsuit. The sections that follow unpack each stage with enough detail that you can sanity-check a vendor or scope a build.

How does ingestion work in a context engine?

Ingestion pulls every engineering artifact from every source system, keeps it current with webhooks and reconciliation sweeps, and normalizes each artifact into a common document shape with typed metadata. Code gets AST-aware chunking so symbols stay intact. This is unglamorous plumbing, and it is where most build attempts stall first.

Source connectors are the hardest part of the build. Each one (GitHub, GitLab, Bitbucket, Slack, Teams, Jira, Linear, Notion, Confluence, Google Docs, PagerDuty, Sentry) has its own auth model, pagination quirks, rate limits, webhook reliability, and delete semantics. A naive connector polls on a schedule. A real one combines an initial historical backfill, a continuous webhook subscription, and a reconciliation sweep that catches the webhooks your provider drops. Slack drops webhooks. Jira drops webhooks. Plan for it.
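The reconciliation sweep described above can be sketched as a diff between what the source system reports and what the index holds. This is a minimal illustration, not any vendor's connector: `Snapshot`, `reconcile`, and the `msg-*` IDs are hypothetical names, and it assumes each source can list artifact IDs with some version marker (an etag or an updated-at timestamp).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Hypothetical minimal view of one artifact: its ID and a version marker."""
    artifact_id: str
    version: str  # assumed to be an etag or updated-at value from the source


def reconcile(source: list[Snapshot], indexed: list[Snapshot]) -> dict[str, list[str]]:
    """Diff the source of truth against the index to find what webhooks missed."""
    src = {s.artifact_id: s.version for s in source}
    idx = {s.artifact_id: s.version for s in indexed}
    return {
        # Present in the source but never indexed: a dropped "created" event.
        "missing": sorted(src.keys() - idx.keys()),
        # Indexed, but the version differs: a dropped "updated" event.
        "stale": sorted(a for a in src.keys() & idx.keys() if src[a] != idx[a]),
        # Indexed, but gone from the source: a dropped "deleted" event.
        "deleted": sorted(idx.keys() - src.keys()),
    }


source = [Snapshot("msg-1", "v2"), Snapshot("msg-2", "v1"), Snapshot("msg-4", "v1")]
indexed = [Snapshot("msg-1", "v1"), Snapshot("msg-2", "v1"), Snapshot("msg-3", "v1")]
plan = reconcile(source, indexed)
```

Here `plan` flags `msg-4` as missing, `msg-1` as stale, and `msg-3` as deleted. The delete case is the one naive pollers tend to miss, and it matters because a deleted message that stays in the index can still surface in answers.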

Once content arrives, normalization turns every artifact into a common document shape with typed metadata: source, author, timestamp, canonical URL, parent container (repo, channel, project), and an access-control profile. Code is special; you cannot treat a file as a document. Good ingestion chunks code along AST-aware boundaries so a class, function, or block stays intact, then attaches symbol-level metadata (imports, exports, call graph edges) to each chunk.
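To make AST-aware chunking concrete, here is a minimal sketch using Python's standard `ast` module. It only parses Python source; a real engine would need a parser per language (the TypeScript handlers used as examples in this article would need a different one). `CodeChunk` and `chunk_python_source` are hypothetical names, and the metadata is limited to module-level imports rather than full call-graph edges.

```python
import ast
from dataclasses import dataclass


@dataclass
class CodeChunk:
    symbol: str
    kind: str            # "function" or "class"
    start_line: int
    end_line: int
    text: str
    imports: list[str]   # module-level imports, attached as symbol metadata


def chunk_python_source(source: str) -> list[CodeChunk]:
    """Split a module into one chunk per top-level function or class."""
    tree = ast.parse(source)

    # Collect module-level imports once so every chunk can carry them.
    imports: list[str] = []
    for node in tree.body:
        if isinstance(node, ast.Import):
            imports.extend(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom):
            imports.append(node.module or "")

    chunks: list[CodeChunk] = []
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            chunks.append(CodeChunk(
                symbol=node.name,
                kind="class" if isinstance(node, ast.ClassDef) else "function",
                start_line=node.lineno,
                end_line=node.end_lineno,
                # The exact source text of the symbol, never cut mid-body.
                text=ast.get_source_segment(source, node) or "",
                imports=list(imports),
            ))
    return chunks


SAMPLE = '''import time

def retry(fn, attempts=3):
    for _ in range(attempts):
        try:
            return fn()
        except ConflictError:
            time.sleep(1)

class ChargeHandler:
    def handle(self, request):
        return retry(lambda: request.send())
'''

chunks = chunk_python_source(SAMPLE)
```

A fixed token window could split `retry` between the `try` and the `except`. Chunking on syntax-tree boundaries keeps the whole function together, so the retriever returns the retry logic as one unit.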

How does graph construction work?

Graph construction turns the normalized document stream into a typed graph of nodes and edges. Nodes carry payloads plus embeddings. Edges carry meaning: authorship, review, reference, ownership, supersession. The edges are where institutional memory compounds, and they are the substrate every other stage queries.

Graph construction runs on top of the normalized stream. Nodes carry the document payload plus its embeddings (you generally want more than one: a dense semantic embedding, a sparse BM25-friendly representation, and in some systems a structural code embedding). Edges are typed: authored_by, reviewed_by, references, mentions, owns, supersedes, linked_from.

The edges are where the value compounds. A PR that references a Jira ticket that was discussed in a Slack thread that mentions a Notion design doc that links back to the service README is five nodes and four edges, and any retrieval that cannot traverse all five will miss the reason the code looks the way it does.
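The five-node chain above can be sketched with a small in-memory typed graph. This is an illustration under simplifying assumptions: `Node`, `ContextGraph`, and `reachable` are hypothetical names, embeddings and payloads are omitted, and a production graph would live in a dedicated store rather than a dictionary. The edge types are the ones listed in this section.

```python
from collections import defaultdict, deque
from dataclasses import dataclass

# Edge types named in the article; everything else here is illustrative.
EDGE_TYPES = {"authored_by", "reviewed_by", "references", "mentions",
              "owns", "supersedes", "linked_from"}


@dataclass
class Node:
    node_id: str
    kind: str  # e.g. "pr", "ticket", "message", "doc"


class ContextGraph:
    def __init__(self) -> None:
        self.nodes: dict[str, Node] = {}
        self._out: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def add_node(self, node: Node) -> None:
        self.nodes[node.node_id] = node

    def add_edge(self, src: str, edge_type: str, dst: str) -> None:
        if edge_type not in EDGE_TYPES:
            raise ValueError(f"unknown edge type: {edge_type}")
        if src not in self.nodes or dst not in self.nodes:
            raise KeyError("both endpoints must exist before adding an edge")
        self._out[src].append((edge_type, dst))

    def reachable(self, start: str, max_hops: int) -> list[str]:
        """Breadth-first walk returning nodes within max_hops of start."""
        seen = {start}
        frontier = deque([(start, 0)])
        order: list[str] = []
        while frontier:
            node_id, hops = frontier.popleft()
            order.append(node_id)
            if hops == max_hops:
                continue  # do not expand past the hop budget
            for _, dst in self._out.get(node_id, []):
                if dst not in seen:
                    seen.add(dst)
                    frontier.append((dst, hops + 1))
        return order


graph = ContextGraph()
for node_id, kind in [("pr", "pr"), ("ticket", "ticket"), ("thread", "message"),
                      ("design-doc", "doc"), ("readme", "doc")]:
    graph.add_node(Node(node_id, kind))
graph.add_edge("pr", "references", "ticket")
graph.add_edge("ticket", "linked_from", "thread")
graph.add_edge("thread", "mentions", "design-doc")
graph.add_edge("design-doc", "references", "readme")

full_chain = graph.reachable("pr", max_hops=4)
shallow = graph.reachable("pr", max_hops=1)
```

With a four-hop budget the walk reaches all five nodes. With a one-hop budget it stops at the ticket and never sees the design doc, which is the failure mode the paragraph above describes.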

The graph also tracks temporal edges. When a file is rewritten, the old version becomes a predecessor, not a sibling. When a design decision is reversed, the new doc supersedes the old one. Temporal structure is what lets the engine answer "what is the current decision?" instead of returning every decision anyone ever wrote down.
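Answering "what is the current decision?" amounts to following supersession edges forward until nothing newer exists. The sketch below assumes each document is superseded by at most one successor and stores those links in a plain dictionary; `current_version` and the `design-v*` IDs are hypothetical.

```python
def current_version(doc_id: str, superseded_by: dict[str, str]) -> str:
    """Follow supersession links forward until reaching a doc nothing has replaced."""
    seen = {doc_id}
    while doc_id in superseded_by:
        doc_id = superseded_by[doc_id]
        if doc_id in seen:
            # Bad data (A supersedes B supersedes A) should fail loudly, not loop.
            raise ValueError("supersession cycle detected")
        seen.add(doc_id)
    return doc_id


# Maps an old document to the document that replaced it.
superseded_by = {"design-v1": "design-v2", "design-v2": "design-v3"}

latest = current_version("design-v1", superseded_by)
already_latest = current_version("design-v3", superseded_by)
```

Both calls resolve to `design-v3`. A retriever that treats the three versions as siblings would return all of them and leave the caller to guess which one still applies.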

How does the retrieval-to-reasoning loop work?

The retrieval loop dispatches parallel retrievers (semantic, lexical, structural, temporal), reranks their union against the task, and decides whether to reformulate and retrieve again before synthesizing an answer. The loop is what separates how a context engine works from how a RAG pipeline works: the first retrieval pass is never treated as final.

This is the stage where most build-your-own attempts fall over. A typical query, shaped for an agent tool-call, looks like this:

```json
{
  "query": "Why does the payments service retry on 409 instead of bubbling the conflict?",
  "task": {
    "kind": "code_change",
    "repo": "payments-service",
    "target_file": "src/handlers/charge.ts",
    "change_intent": "add idempotency for partial refunds"
  },
  "retrievers": ["semantic", "lexical", "structural", "temporal"],
  "scope": {
    "sources": ["code", "pr", "slack", "jira", "notion"],
    "time_window_days": 730,
    "repos": ["payments-service", "payments-shared"]
  },
  "ranking": {
    "authority_weight": 0.35,
    "recency_weight": 0.20,
    "task_relevance_weight": 0.45
  },
  "response": {
    "format": "synthesized",
    "include_provenance": true,
    "max_sources": 12
  }
}
```

How do the parallel retrievers work?

Four retrievers run in parallel: semantic embeddings catch meaning, lexical search catches exact identifiers, structural retrieval walks the code graph, and temporal retrieval pulls recent activity. The union goes into a task-aware reranker that scores candidates on authorship, recency, and task fit rather than raw query similarity.

Semantic retrieval uses dense embeddings against the chunk store. Lexical retrieval (BM25 or similar) catches exact-identifier matches (function names, error codes, ticket IDs) where semantic similarity is noisy. Structural retrieval walks the code graph: callers, callees, imports, tests. Temporal retrieval pulls the most recent activity touching the target file or its neighbors in the graph.

The union of the retrievers is then reranked by a cross-encoder or a task-aware scoring pass. This is where the task block from the query earns its keep. Semantic similarity to the query string is one signal among several. "Is this document about payments?" "Was it written by someone who reviewed this file recently?" "Does it contradict or support the current implementation?" A reasoning pass asks those questions explicitly and drops the candidates that fail them.
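A minimal sketch of the merge-then-rerank step follows. It reuses the three ranking weights from the sample query (0.35 authority, 0.20 recency, 0.45 task relevance) and assumes each candidate already carries those three signals as scores between 0 and 1; how they are computed is out of scope here. `Candidate`, `merge_candidates`, `rerank`, the relevance floor of 0.3, and the document IDs are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    doc_id: str
    authority: float        # assumed precomputed, in [0, 1]
    recency: float          # assumed precomputed, in [0, 1]
    task_relevance: float   # assumed precomputed, in [0, 1]


# Weights taken from the "ranking" block of the sample query.
WEIGHTS = {"authority": 0.35, "recency": 0.20, "task_relevance": 0.45}


def merge_candidates(per_retriever: dict[str, list[Candidate]]) -> list[Candidate]:
    """Union the retrievers' hits, keeping one entry per document."""
    merged: dict[str, Candidate] = {}
    for hits in per_retriever.values():
        for hit in hits:
            merged.setdefault(hit.doc_id, hit)
    return list(merged.values())


def score(c: Candidate) -> float:
    return (WEIGHTS["authority"] * c.authority
            + WEIGHTS["recency"] * c.recency
            + WEIGHTS["task_relevance"] * c.task_relevance)


def rerank(candidates: list[Candidate], min_task_relevance: float = 0.3,
           top_k: int = 12) -> list[Candidate]:
    """Drop candidates that fail the task check, then sort by weighted score."""
    kept = [c for c in candidates if c.task_relevance >= min_task_relevance]
    return sorted(kept, key=score, reverse=True)[:top_k]


pr = Candidate("pr-a", authority=0.9, recency=0.6, task_relevance=0.8)
thread = Candidate("slack-b", authority=0.5, recency=0.9, task_relevance=0.7)
old_doc = Candidate("doc-c", authority=0.8, recency=0.1, task_relevance=0.2)

hits = {"semantic": [pr, old_doc], "lexical": [pr, thread], "temporal": [thread]}
ranked = rerank(merge_candidates(hits))
```

The old document has high authority but is dropped because it fails the task-relevance floor. That is the difference between scoring against the task and scoring against the query string. A cross-encoder reranker would replace the linear `score` function but keep the same shape.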

If the top candidates leave the question underspecified, the engine reformulates and retrieves again. It reasons across sources rather than merely retrieving from them. For the deeper contrast, see context engine vs RAG. Only after the loop settles does the engine produce a single answer with inline provenance. The agent gets the synthesized view with links back to source, which is the domain and functional context Copilot doesn't have.
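The reformulate-and-retrieve-again behavior can be sketched as a bounded loop. The three callables are stand-ins: in a real engine `retrieve` would fan out to the parallel retrievers, and `is_sufficient` and `reformulate` would likely be model calls. All names here, and the cap of three rounds, are assumptions for illustration.

```python
from typing import Callable


def retrieval_loop(
    query: str,
    retrieve: Callable[[str], list[str]],
    is_sufficient: Callable[[list[str]], bool],
    reformulate: Callable[[str, list[str]], str],
    max_rounds: int = 3,
) -> tuple[list[str], int]:
    """Retrieve, check the evidence, and reformulate until it is sufficient
    or the round budget runs out. Returns the evidence and rounds used."""
    evidence: list[str] = []
    seen: set[str] = set()
    for round_no in range(1, max_rounds + 1):
        for doc in retrieve(query):
            if doc not in seen:          # accumulate across rounds, no duplicates
                seen.add(doc)
                evidence.append(doc)
        if is_sufficient(evidence):
            return evidence, round_no
        query = reformulate(query, evidence)
    return evidence, max_rounds


# Toy stand-ins so the loop can be exercised end to end.
corpus = {
    "retry on 409": ["charge.ts"],
    "retry on 409 design rationale": ["design-doc", "charge.ts"],
}

evidence, rounds = retrieval_loop(
    "retry on 409",
    retrieve=lambda q: corpus.get(q, []),
    is_sufficient=lambda ev: "design-doc" in ev,
    reformulate=lambda q, ev: q + " design rationale",
)
```

The first pass finds only the code. The reformulated query pulls in the design doc, and the loop stops after two rounds. The hard cap matters in an agent setting, where the whole loop has to finish inside one tool call.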

How does a context engine resolve conflicts?

A context engine resolves conflicts using three inputs: authority (who wrote it, who owns the code), recency (applied carefully, distinguishing still-authoritative from stale), and code reality (the implementation is ground truth for behavior). The output is a single resolved view with the disagreement called out, not a ranked list of sources for the caller to untangle.

Any engineering org past its first dozen people contains contradictions. The API spec says one thing. The implementation does another. A Slack thread from last March explains the divergence. A Notion design doc from two years ago says the current behavior is wrong.

A retrieval system returns all four and lets the caller sort it out. A context engine identifies the disagreement and resolves it. That is a meaningful part of how a context engine works in production: the caller is handed a decision, not a research project.

How does authority weighting work?

Authority weighting biases toward sources backed by people with real authorship history on the artifact in question. Recency weighting distinguishes "still referenced" from "stale." Code reality breaks ties where spec and implementation disagree. The three signals combine into an edge-weighted score the reranker uses before synthesis.

Resolution has three inputs. The first is authority. Who wrote the source? Who owns the code it describes? Who reviewed the last five PRs on that file? The graph carries those signals as edge weights, and the engine biases toward sources backed by people with real authorship history on the artifact in question. A design doc written by a staff engineer who has shipped fifty PRs into a service outranks a speculative Notion page from someone who has never touched it.

The second input is recency, applied carefully. A Slack thread from yesterday about a production incident is almost always more relevant than one from two years ago. But the canonical design doc for a service may be three years old and still correct. The engine distinguishes "still authoritative" documents (those that continue to be referenced, linked, and cited) from "stale" documents.
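One way to express "recency, applied carefully" is exponential decay with an exemption for documents that are still being referenced. The sketch below is an assumption-heavy illustration: the 180-day half-life, the 90-day reference window, and the threshold of three references are made-up parameters, not values from any production system.

```python
def recency_weight(
    age_days: float,
    inbound_refs_last_90d: int,
    half_life_days: float = 180.0,
    authoritative_refs: int = 3,
) -> float:
    """Return a weight in (0, 1] that decays with age unless the document
    is still actively referenced."""
    if inbound_refs_last_90d >= authoritative_refs:
        # Still linked and cited: treat as authoritative regardless of age.
        return 1.0
    # Otherwise halve the weight every half_life_days.
    return 0.5 ** (age_days / half_life_days)


old_but_cited = recency_weight(age_days=3 * 365, inbound_refs_last_90d=5)
old_and_ignored = recency_weight(age_days=3 * 365, inbound_refs_last_90d=0)
half_life_old = recency_weight(age_days=180, inbound_refs_last_90d=0)
```

The three-year-old design doc that people still link to keeps full weight, while an equally old document nobody references decays toward zero. A real engine would likely blend the two signals rather than switch between them.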

The third input is code reality. If the query is about a specific function and the implementation clearly contradicts an older spec, the implementation wins. Code is ground truth for behavior. Specs are ground truth for intent. Both get reported, with the disagreement called out explicitly. For more on why that bar matters, see decision-grade context.
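The "code wins on behavior, spec wins on intent, report both" rule can be sketched as a small resolver. `Claim`, `Resolution`, and `resolve` are hypothetical names, the source IDs are invented, and detecting disagreement by comparing strings is a deliberate simplification; a real engine would need a semantic comparison.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    source_id: str
    statement: str


@dataclass
class Resolution:
    answer: str
    disagreement: bool
    provenance: list[str]   # both sources are always reported
    note: str


def resolve(question_kind: str, code: Claim, spec: Claim) -> Resolution:
    """Pick the ground-truth source for the question kind, but keep both."""
    if question_kind not in ("behavior", "intent"):
        raise ValueError("question_kind must be 'behavior' or 'intent'")
    # Code is ground truth for behavior; the spec is ground truth for intent.
    winner, loser = (code, spec) if question_kind == "behavior" else (spec, code)
    disagreement = code.statement != spec.statement  # naive equality check
    note = (f"{loser.source_id} disagrees: {loser.statement}"
            if disagreement else "sources agree")
    return Resolution(winner.statement, disagreement,
                      [code.source_id, spec.source_id], note)


code_claim = Claim("src/handlers/charge.ts", "retries on 409")
spec_claim = Claim("api-spec", "returns 409 to the caller")

behavior = resolve("behavior", code_claim, spec_claim)
intent = resolve("intent", code_claim, spec_claim)
```

Asked about behavior, the resolver answers from the implementation and notes that the spec disagrees. Asked about intent, it answers from the spec and notes the divergence in the code. Either way the caller sees one answer plus the conflict, not two unranked sources.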

How does a context engine handle permissions?

A context engine resolves permissions at query time against each source system, not at ingest time. A user or agent sees exactly what they would see logging into each underlying system directly. Permissions differ across every source, so the engine checks source-of-truth access per node before surfacing it in a response.

The rule is simple: a user or agent should see exactly the sources they would see if they logged into each underlying system directly. Nothing more. The implementation is not simple, because permissions are defined differently in every source. GitHub has repo-level access plus branch protections. Slack has workspace, channel, and thread-level visibility. Jira has project roles. Notion has workspace and page-level sharing. A single person typically has a different access profile in each.

Ingesting with a service account that has broad read access is usually the right call, because it gives the engine the fullest possible graph. But before any node is surfaced in a response, the engine checks the requesting user's access against the node's source-of-truth system. If the user has lost access to a Slack channel since the message was indexed, that message does not appear in their results.
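The query-time check can be sketched as a filter that asks the source system about each node just before it is surfaced. `IndexedNode`, `visible_nodes`, and the `check_access` callback are hypothetical; in practice the callback would wrap each source's own permission API, probably with short-lived caching. The sketch fails closed: if the check errors, the node is hidden.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class IndexedNode:
    node_id: str
    source: str      # e.g. "slack", "github"
    container: str   # channel, repo, project


def visible_nodes(
    user: str,
    nodes: list[IndexedNode],
    check_access: Callable[[str, str, str], bool],
) -> list[IndexedNode]:
    """Keep only nodes the user can read in the source system right now."""
    visible = []
    for node in nodes:
        try:
            allowed = check_access(user, node.source, node.container)
        except Exception:
            allowed = False  # fail closed: an unverifiable node is not shown
        if allowed:
            visible.append(node)
    return visible


# Stand-in for live source-of-truth permissions. "lee" was in #payments when
# the message was indexed but has since been removed from the channel.
live_acl = {
    ("slack", "#payments"): {"dana"},
    ("github", "payments-service"): {"dana", "lee"},
}


def check_access(user: str, source: str, container: str) -> bool:
    return user in live_acl[(source, container)]  # KeyError if unknown


nodes = [
    IndexedNode("msg-1", "slack", "#payments"),
    IndexedNode("pr-1", "github", "payments-service"),
    IndexedNode("page-1", "notion", "unknown-workspace"),
]

dana_sees = [n.node_id for n in visible_nodes("dana", nodes, check_access)]
lee_sees = [n.node_id for n in visible_nodes("lee", nodes, check_access)]
```

The Slack message stays in the graph, but it is filtered out of results for the user who lost channel access. The Notion page, whose permissions cannot be verified, is hidden from everyone.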

What is Data Shield?

Data Shield is the redaction layer that filters secrets and sensitive patterns before they touch embeddings, graph payloads, or responses. It is the difference between a context engine you can point at a real Slack workspace and a demo that only runs on sanitized data.

The second half of the permissions story is data handling. Engineering context contains secrets: API keys pasted into Slack, credentials in Jira tickets, private customer data in support docs. A production-grade context engine runs a filter that redacts or blocks sensitive patterns before embeddings are written, before the graph stores payloads, and before any response is returned.
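A pattern filter of this kind can be sketched with regular expressions. This is not Data Shield's implementation, which the article does not describe. It is a generic illustration with two example patterns; `PATTERNS` and `redact` are hypothetical names, and a production filter would need a much larger, tested pattern set and probably entropy-based detection as well.

```python
import re

# Illustrative patterns only. The AWS access key ID shape (AKIA plus 16
# uppercase alphanumerics) is widely documented; the bearer-token pattern
# is a rough heuristic.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "bearer_token": re.compile(r"(?i)\bbearer\s+[A-Za-z0-9._~+/-]{20,}=*"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Replace sensitive matches with a labeled placeholder.

    Returns the redacted text and the labels of the patterns that matched,
    so the caller can log or block instead of silently storing."""
    findings = []
    for label, pattern in PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED:{label}]", text)
        if count:
            findings.append(label)
    return text, findings


# AKIAIOSFODNN7EXAMPLE is the placeholder key used in AWS documentation.
message = "use AKIAIOSFODNN7EXAMPLE for the staging bucket"
clean, findings = redact(message)
```

The same function would run at three points: before embeddings are written, before payloads are stored in the graph, and before a response is returned. Running it only at response time would leave the secret sitting in the index.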

Unblocked calls this layer Data Shield, and it is what makes the system safe to point at your real engineering stack rather than a sanitized subset. Without it, you either ingest less (hurting answer quality) or ingest everything and hope nothing sensitive escapes (hurting sleep). Data Shield is the reason how a context engine works in production includes a pattern filter as a first-class component, not an afterthought.

What does this look like in practice?

In practice, the five stages compound into measurable shifts: new engineers reason about unfamiliar codebases faster, and agents produce code that survives review more often. The synthesized answer, not a pile of links, is what makes both possible inside the tool-call budget an agent actually has in a turn.

The architecture above is abstract. What it produces, for the teams using it, is a measurable shift in how fast new engineers can reason about an unfamiliar codebase and how often agents produce code that survives review.

"Coming in fresh, we were able to understand how the platform worked by asking Unblocked. It helped us support over 200 developers much faster than we otherwise could have." — Pedro Fernandez, Engineering Manager, RB Global

That is what the five stages compound into. The ingestion and graph construction layers meant Pedro's team did not have to interview every long-tenured engineer to find out why the platform is shaped the way it is. The retrieval-and-reasoning loop meant the answers they got were synthesized. The conflict-resolution stage meant they got the current story, not the five-year history of every decision. The permissions stage meant they could turn it on across the whole org without a three-month security review.

How does a context engine behave inside an agent loop?

Inside an agent loop, the engine has to return a synthesized answer with provenance in a single tool call. Agents budgeted for a few calls per turn cannot afford to sort through twelve retrieval results themselves. When synthesis works, the next action is informed by institutional reality rather than the model's best guess about payments services.

The other practical signal is how the engine behaves inside an agent loop. Agents budgeted for a few tool calls per turn cannot afford to sort through twelve retrieval results themselves. They need a synthesized answer with provenance in a single call.

When that works, the agent's next action is informed by institutional reality instead of the model's best guess about what a payments service usually does. When it does not work, you get the familiar pattern of agents shipping plausible code that reviewers send back. That single-call contract is why how a context engine works matters more at agent time than at developer-chat time: the agent has no budget to recover from a bad retrieval.

FAQ

How is this different from RAG?

RAG is a retrieval technique: embed documents, match on query, return chunks to the model. A context engine uses retrieval as one component among several, then runs reasoning, conflict resolution, and authority weighting on top. RAG returns documents. A context engine returns a resolved answer with provenance. The full comparison lives in context engine vs RAG.

Do I need a vector database?

You need a chunk store that supports dense semantic retrieval, lexical retrieval, and metadata filtering. Most teams reach for a vector database for the dense part, but the engine as a whole is more than vector search. It also needs the typed graph, the retriever orchestration, and the reasoning pass. A vector DB on its own is not a context engine.

How fresh does the index need to be?

Fresh enough that a PR merged an hour ago shows up in the next query, and a Slack thread started thirty seconds ago is reachable within a minute or two. That means webhook-driven incremental indexing plus a reconciliation sweep. Batch-nightly indexing is not sufficient for engineering workflows where the thing you need context on was just written.

Can an LLM with a million-token context window replace this?

No. Long-context models help with the synthesis step, but you still cannot fit your entire Slack history, Jira backlog, and monorepo into a prompt, and you would not want to even if you could. The retrieval, ranking, and conflict-resolution layers exist because precision matters more than dumping raw content into the model.

Is this worth building in-house?

For most engineering orgs, no. Five connectors, a graph, a retrieval loop, a reasoning layer, and a permissions model add up to a multi-team, multi-year commitment. Teams that try usually ship a context layer without the engine, meaning the plumbing without the reasoning, and discover their agents still hallucinate.

Build Your Own or Buy One?

If you have read this far, you have the architecture. The honest build-vs-buy question is whether your team can staff five connectors, graph construction, retrieval orchestration, a reasoning loop, conflict resolution, and a permissions layer, and keep all of it current while source systems change their APIs underneath you. For most engineering orgs, the answer is no, not because the architecture is exotic but because the work never ends. Connectors drift. Permissions models change. New sources show up.

The buy-side path is Unblocked. It runs the five stages described here against your Git, Slack, Jira, Notion, Confluence, and the rest of the engineering stack, and it surfaces a single synthesized answer through agents, an IDE, or chat. The value is institutional memory across Slack, Jira, Notion, Confluence: the organizational layer that an agent cannot reconstruct from the repo alone.

The architecture is knowable. That does not mean it is cheap to build. Understanding how a context engine works is the first step; deciding whether to stand one up yourself is the second.