Decision-Grade Context: The Operational Test for Agents

Dennis Pilarinos·May 4, 2026

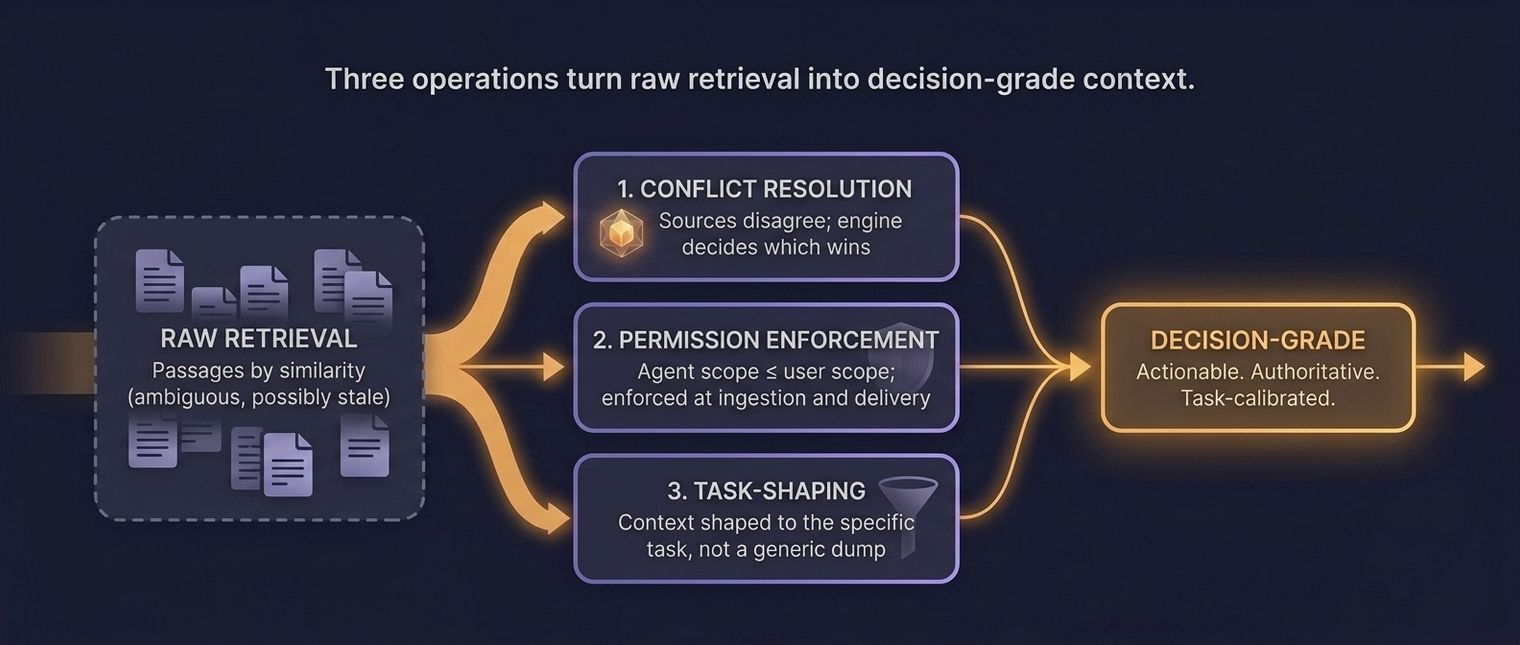

Disclosure: The author's company builds context engine technology. The term "decision-grade context" is appearing in multiple vendor definitions this year; this piece argues for an operational test rather than a vocabulary claim.

Key Takeaways

- Decision-grade context is context an AI agent can act on directly: synthesized across sources, resolved against conflicts, shaped for the specific task, and filtered through the user's permissions. It is a threshold, not a spectrum.

- Retrieval returns similarity matches, not authoritative answers. Chroma's 2025 Context Rot benchmark showed that even frontier models degrade sharply as input length grows, with retrieval accuracy collapsing long before the stated context window is full (Chroma Research, 2025). Similarity is not sufficiency.

- Three operations distinguish decision-grade from retrieval-grade context: conflict resolution, permission enforcement, and task-shaping.

- The test is operational: does the agent act without clarifying questions, without "didn't know X" review comments, and with first-run mergeability? If yes, the context was decision-grade. If not, it was retrieval dressed up.

- The failure mode of retrieval alone is satisfaction of search: the agent stops at the first plausible result and ships. Radiology has a fifty-year literature on this bias. LLMs exhibit the same pattern.

Table of Contents

- What Is Decision-Grade Context?

- Why Does Retrieval Alone Fail AI Agents?

- What Makes Context "Decision-Grade"?

- Decision-Grade Context vs "High-Quality Context"

- Who Delivers Decision-Grade Context?

- How Do You Know If Your Agent Is Getting It?

- FAQ

Introduction

What does it mean for context to be decision-grade? It means the agent can act on it without asking a follow-up, without inventing a missing fact, and without a reviewer having to patch the gap after the fact. Everything short of that threshold is retrieval waiting on a human to finish the job.

The phrase now appears in more than one vendor's marketing, so its meaning is still being contested. This piece treats it as an operational threshold rather than a brand: context sufficient for an AI agent to act correctly on a specific task without human intervention. The test is specific enough to measure, which is why the term matters.

What Is Decision-Grade Context?

Decision-grade context is context an AI agent can act on directly. It has been synthesized across sources, resolved against conflicts, shaped for the specific task at hand, and filtered through the user's permissions. It is the difference between handing an agent seventeen Notion pages that mention authentication and handing it the authoritative answer for how authentication works in this codebase right now.

A one-sentence definition

Decision-grade context is what an AI agent needs in order to act correctly on a specific task, with no clarification required and no fabricated assumptions filling gaps.

A technical definition

Decision-grade context is the output of a reasoning layer that sits above retrieval. The layer performs at minimum three operations on retrieved material: it resolves conflicts between sources, enforces source-level permissions against the requesting user, and shapes the final delivery to the specific task the agent is attempting. The output is compact, current, and actionable.

An operational definition

Decision-grade context is the context state after which the agent does not need to ask. It is measured by three signals: the agent acts without clarifying questions, reviewers do not leave comments in the form "the agent didn't know X," and the output is mergeable on the first run. If those three signals are present, the context was decision-grade. If any of the three fails, the context was retrieval that looked sufficient.

Why Does Retrieval Alone Fail AI Agents?

Retrieval returns similarity matches, not authoritative answers. Modern embedding systems find documents that are semantically close to the query. Closeness is not truth. Chroma's 2025 Context Rot benchmark stressed eighteen frontier models (including GPT-4.1, Claude 4, Gemini 2.5, and Qwen3) against longer and longer inputs and found that retrieval accuracy degrades non-uniformly well before the stated context window is full. Distractors, ambiguity, and topic shifts compound the loss, and needle-in-a-haystack scores overstate real-world reliability (Chroma Research, 2025). If frontier models degrade this way on clean benchmarks, retrieval over messy enterprise engineering knowledge will not fare better.

The failure mode has a name. Berbaum and colleagues documented it in radiology in 1990: satisfaction of search. Radiologists who identified one abnormality on a scan frequently failed to detect a second one, because finding the first abnormality satisfied the search and terminated the inspection (Berbaum et al., 1990). Agents exhibit the same pattern. A retrieval system returns three passages. The agent reads the first, finds it plausible, acts on it, and does not read the other two, one of which contradicts the first.

A 2025 arXiv paper titled "The Semantic Illusion" measured this tension directly. Embedding-based hallucination detectors achieved 95% coverage at 0% false-positive rate on synthetic hallucinations but failed at 100% false-positive rate on real RLHF-aligned hallucinations. The same task was tractable for a GPT-4-as-judge approach at 7% FPR. The paper's conclusion: the problem "is solvable via reasoning but opaque to surface-level semantics" (arXiv 2512.15068). Retrieval sees surfaces. Reasoning sees truth. Agents need both.

What Makes Context "Decision-Grade"?

Context becomes decision-grade through three operations that retrieval systems do not perform: conflict resolution, permission enforcement, and task-shaping. All three are specific and measurable. If any one of them is absent, the context is not decision-grade, regardless of how good the retrieval is.

Three operations turn raw retrieval into decision-grade context. Source: Unblocked operational framework.

What does conflict resolution look like in practice?

Two internal documents describe the same architectural pattern differently. The engine decides which is authoritative before the agent sees either. The decision is based on evidence: recency, code-match verification, ownership, authority. The agent receives one resolved answer with provenance. Without this step, the agent inherits the contradiction.
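
One way to picture the resolution step is as a ranking over evidence. The sketch below is illustrative only: the `Source` fields, the priority order inside `evidence_key`, and the sample documents are all assumptions made for this example, not a description of how any particular engine weighs recency, code-match verification, ownership, or authority.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Source:
    """One candidate document describing the same pattern (hypothetical shape)."""
    name: str
    claim: str
    last_updated: date
    matches_code: bool         # did the claim verify against the current code?
    owner_is_maintainer: bool  # is the author a current owner of the area?


def evidence_key(src: Source) -> tuple:
    # Assumed priority for illustration: code-verified, then ownership, then recency.
    return (src.matches_code, src.owner_is_maintainer, src.last_updated)


def resolve(sources: list[Source]) -> dict:
    """Return one answer plus provenance instead of passing the conflict along."""
    if not sources:
        raise ValueError("no sources to resolve")
    winner = max(sources, key=evidence_key)
    return {
        "answer": winner.claim,
        "provenance": winner.name,
        "superseded": [s.name for s in sources if s is not winner],
    }


# Made-up documents that disagree about the same pattern.
docs = [
    Source("wiki/auth-overview", "Sessions use cookie tokens", date(2023, 3, 1), False, True),
    Source("adr/0042-auth", "Sessions use signed JWTs", date(2025, 1, 15), True, False),
]
resolved = resolve(docs)
print(resolved["answer"], "<-", resolved["provenance"])
```

The point of the shape is that the agent receives `answer` and `provenance`, never the raw pair of conflicting documents.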

Permission enforcement

The engine checks, at both ingestion and delivery, whether the user acting through the agent is authorized to see each source. A junior engineer's agent does not retrieve passages from repositories that engineer cannot access. The guardrail is architectural, not a post-hoc filter on output. Skipping this step converts a productivity tool into a compliance incident.
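
A minimal sketch of what "architectural, not post-hoc" can mean: the filter runs on candidate passages before anything reaches the agent. The `REPO_ACCESS` map, the `Passage` type, and the user names are hypothetical; a real system would consult its identity provider and source-level ACLs rather than a hard-coded dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    repo: str
    text: str


# Hypothetical access map standing in for a real permission lookup.
REPO_ACCESS = {
    "junior": {"web-app"},
    "staff": {"web-app", "billing-core"},
}


def authorized_passages(user: str, candidates: list[Passage]) -> list[Passage]:
    """Drop unauthorized sources before anything is delivered to the agent."""
    allowed = REPO_ACCESS.get(user, set())  # unknown users see nothing
    return [p for p in candidates if p.repo in allowed]


# Made-up passages from two repositories.
candidates = [
    Passage("web-app", "Login form posts to /session"),
    Passage("billing-core", "Invoices are signed with the ledger key"),
]
junior_view = authorized_passages("junior", candidates)
staff_view = authorized_passages("staff", candidates)
print([p.repo for p in junior_view])
```

Because the check keys on the requesting user, the same query yields different candidate sets for different people, and an unrecognized user gets an empty set by default.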

What does task-shaping change at delivery time?

The engine knows what the agent is about to do: write a test, refactor a function, debug an incident, or review a PR. Those are different tasks with different context shapes. The engine delivers the specific subset that fits the task rather than returning the same generic bundle for every query. Task-shaping is what makes the "parsimony" principle operational, not aspirational.
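
As a rough illustration, task-shaping can be thought of as a mapping from task type to the kinds of context worth delivering. Everything here is an assumption for the sake of the example: the `Task` values, the `CONTEXT_SHAPE` table, and the sample context strings are invented, and a real engine would derive the shape rather than look it up in a fixed table.

```python
from enum import Enum


class Task(Enum):
    WRITE_TEST = "write_test"
    REVIEW_PR = "review_pr"
    DEBUG_INCIDENT = "debug_incident"


# Hypothetical mapping from task to the context kinds it needs, in priority order.
CONTEXT_SHAPE = {
    Task.WRITE_TEST: ["test_conventions", "function_source"],
    Task.REVIEW_PR: ["style_guide", "related_decisions", "function_source"],
    Task.DEBUG_INCIDENT: ["recent_deploys", "runbook", "function_source"],
}


def shape_context(task: Task, available: dict[str, str]) -> dict[str, str]:
    """Return only the context kinds this task needs, skipping any that are missing."""
    wanted = CONTEXT_SHAPE[task]
    return {kind: available[kind] for kind in wanted if kind in available}


# Made-up context store; in practice this is the resolved, permission-filtered material.
available = {
    "test_conventions": "Tests live beside the module and use pytest fixtures.",
    "function_source": "def charge(customer, amount): ...",
    "style_guide": "Prefer early returns over nested conditionals.",
    "related_decisions": "Retries are capped at three.",
    "recent_deploys": "billing-core deployed 40 minutes ago.",
    "runbook": "Roll back through the release pipeline.",
}
for_test = shape_context(Task.WRITE_TEST, available)
for_review = shape_context(Task.REVIEW_PR, available)
print(list(for_test))
print(list(for_review))
```

The same store produces a two-item bundle for "write a test" and a different three-item bundle for "review a PR", which is the property the pass/fail check later in this piece looks for.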

Decision-Grade Context vs "High-Quality Context"

"High-quality context" is marketing language with no operational meaning. It can describe a slightly-better retrieval set or a carefully curated dump or a perfectly engineered synthesis. You cannot test whether context is high-quality. You can test whether it is decision-grade.

Quality is a spectrum. Decision-grade is a threshold. Either the agent can act on the context without supervision, or it cannot. That binary property is why the term is worth defining precisely. A vendor saying "our context is higher-quality" is making a claim you cannot verify. A vendor saying "our context allows the agent to act without clarification" is making a claim you can.

This is the reason the term matters even as multiple vendors adopt it. The discipline of naming a floor, rather than pointing at a spectrum, is what separates useful vocabulary from useful-sounding vocabulary.

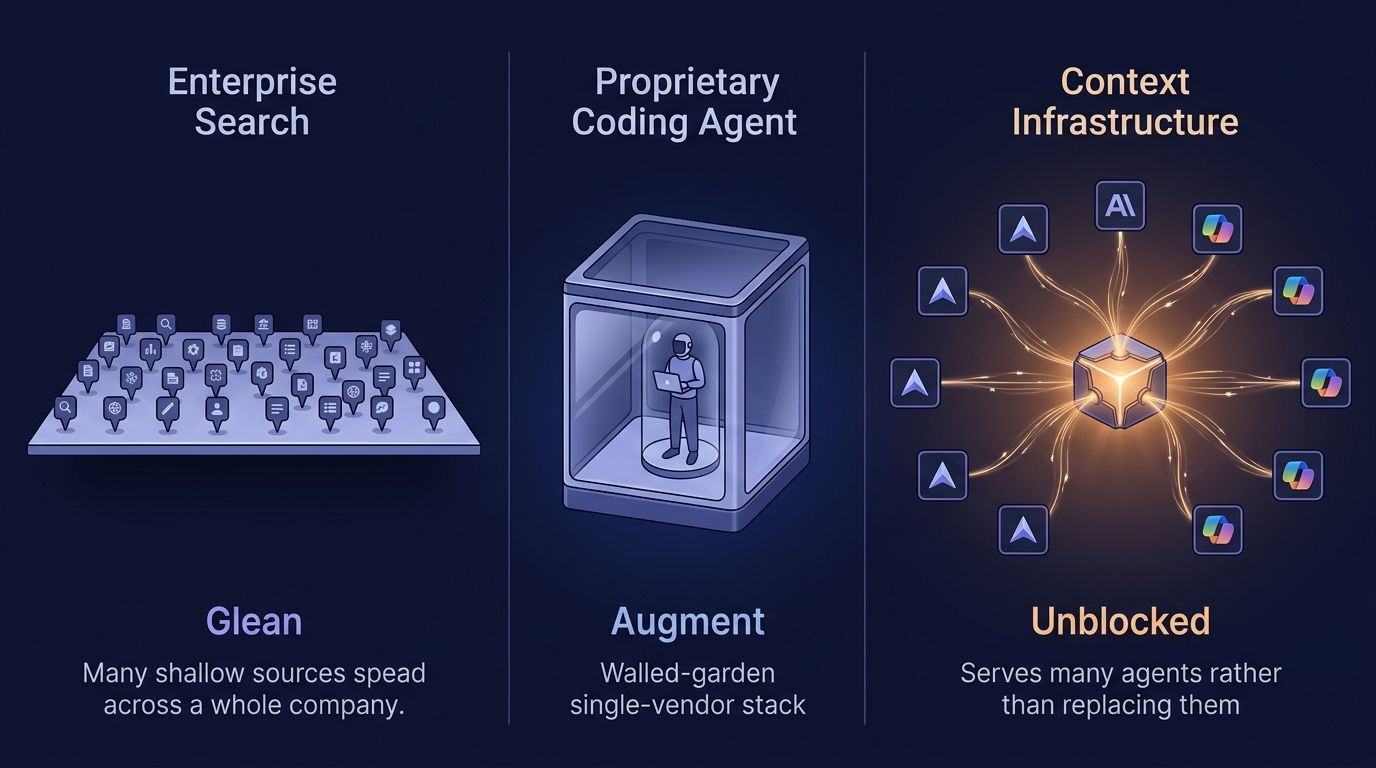

Who Delivers Decision-Grade Context?

A context engine delivers decision-grade context. A context layer delivers information, aggregated and surfaced but not resolved. RAG delivers retrieval. Rules files deliver static instructions. Only the engine performs the three operations above; everything else stops short.

This distinction is the practical shape of the context engineering maturity model. Teams at Stage 1 (files) and Stage 2 (layer) are not producing decision-grade context. They are producing retrieval-grade context and relying on engineers to close the gap through supervision. Teams at Stage 3 (engine) produce decision-grade context and move the reasoning work out of the supervision loop. For the full treatment of the distinction between layers and engines, see what is a context engine.

Not every team needs to be at Stage 3. Teams shipping small volumes of low-complexity agent work can run sustainably at Stage 1 indefinitely. The decision is not "which stage is best" but "which stage matches the cost of your context debt."

How Do You Know If Your Agent Is Getting It?

Three signals. The agent acts without clarifying questions. Review comments do not include "the agent didn't know X." Output works on the first run instead of the third. Run these three checks on the last fifty agent PRs and the fraction passing is a direct measure of decision-grade context coverage.

Signal 1: Clarification-free action

When an agent pauses mid-task to ask for more context, the context it started with was insufficient. Clarification-free operation means the engine delivered enough on the first pass. In mature teams with good engines, clarification rates drop below 10% of tasks for routine work.

Signal 2: No "didn't know" reviews

Review comments that take the form "the agent used the deprecated client because it did not know about the migration" are not code review. They are context review. When those comments vanish from the reviewer's backlog, the engine is catching the gap upstream. If those comments are the majority of your PR review time, your context is not decision-grade.

Signal 3: First-run mergeable

The binary outcome. Agent-generated PRs either land on the first review round or they do not. Mature teams working from decision-grade context show first-run mergeability above 50% for routine work. Below 20% is a signal of context debt and supervision dependence.
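
The three signals reduce to simple rates over a sample of PRs. The sketch below assumes you can label each PR with three booleans; the `AgentPR` record, the function names, and the ten sample PRs are hypothetical, while the 50% and 20% cut-offs are the rough figures quoted above for routine work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPR:
    asked_clarification: bool
    had_didnt_know_comment: bool
    merged_first_round: bool


def signal_rates(prs: list[AgentPR]) -> dict[str, float]:
    """Compute each signal's rate plus the share of PRs passing all three checks."""
    if not prs:
        raise ValueError("need at least one PR")
    n = len(prs)
    passing_all = sum(
        (not p.asked_clarification)
        and (not p.had_didnt_know_comment)
        and p.merged_first_round
        for p in prs
    )
    return {
        "clarification_rate": sum(p.asked_clarification for p in prs) / n,
        "didnt_know_rate": sum(p.had_didnt_know_comment for p in prs) / n,
        "first_run_mergeable": sum(p.merged_first_round for p in prs) / n,
        "coverage": passing_all / n,
    }


def classify(first_run_mergeable: float) -> str:
    # Cut-offs follow the approximate figures in the text, not a formal standard.
    if first_run_mergeable > 0.50:
        return "mature range"
    if first_run_mergeable < 0.20:
        return "context debt"
    return "in between"


# Made-up sample of ten labeled PRs.
prs = (
    [AgentPR(False, False, True)] * 6
    + [AgentPR(True, False, False)] * 1
    + [AgentPR(False, True, False)] * 2
    + [AgentPR(False, False, False)] * 1
)
rates = signal_rates(prs)
print(rates, classify(rates["first_run_mergeable"]))
```

Labeling is the expensive part; once the booleans exist, the arithmetic is trivial and can be rerun on every new batch of fifty PRs.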

FAQ

Isn't this just "good context"?

"Good" is subjective and unmeasurable. "Decision-grade" names a specific operational bar: the agent can act correctly without human clarification. You can test whether context is decision-grade. You cannot test whether it is "good."

How is this different from Anthropic's "just enough context" principle?

Compatible, not competing. Anthropic frames the parsimony principle: provide only what the agent needs (Anthropic, 2025). Decision-grade names the floor: what is provided must be enough for the agent to act. You need both. Parsimony is the ceiling; decision-grade is the floor. Good context engineering hits both at once.

Does decision-grade context require a context engine?

In practice, yes. The three operations (conflict resolution, permission enforcement, task-shaping) require reasoning that retrieval systems do not perform. You can approximate decision-grade context manually by preparing context by hand for each task, but that is exactly the babysitting pattern we are trying to exit. Systems that scale require an engine.

Can RAG ever deliver decision-grade context?

RAG can be a component inside a system that delivers decision-grade context. RAG alone cannot. Retrieval is one step of many; the remaining steps are what make the output actionable.

Why is the term appearing in multiple vendors' marketing?

Because the gap it names is real and the category is converging. Multiple vendors arriving at similar vocabulary is a signal that the underlying idea is the right frame. The question for buyers is not who used the word first. It is whether the vendor performs the operations (conflict resolution, permission enforcement, task-shaping) that make the word meaningful.

Is "satisfaction of search" a real cognitive bias?

Yes, with a fifty-year medical literature. Berbaum and colleagues documented it empirically in radiology in 1990, and a 2018 RadioGraphics review attributed roughly 22% of radiologic diagnostic errors to it. The bias applies to any search system that terminates on the first plausible result, human or machine.

Applying the Decision-Grade Test#

Run the test on your own stack before the next planning meeting. Take the last fifty agent-generated PRs and tally them against five questions. Each one is a pass/fail check, not a judgment call.

- Did the agent act without a clarifying question? (Pass if the task ran to completion on a single prompt.)

- Were the sources the agent used actually authorized for the requesting user? (Pass if permission checks ran at retrieval, not after.)

- When two internal sources disagreed, did the engine pick one before the agent saw both? (Pass if the agent received a resolved answer with provenance.)

- Was the delivered context shaped to the specific task, or the same generic bundle every query gets? (Pass if the shape differs for "write a test" versus "review a PR.")

- Did the PR land on the first review round? (Pass if mergeable without a "the agent didn't know X" comment.)

Count the passes. The ratio is your decision-grade coverage. Our benchmark runs at Unblocked show that teams who move above roughly 50% first-run mergeability stop treating agent output as a draft and start treating it as work product; below 20%, the supervision tax eats the productivity gain. The three operations (resolve conflicts, enforce permissions, shape to task) are the levers. A system that does all three clears the threshold. A system that does fewer produces something else, and calling it decision-grade does not make it so.
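
The five questions above can be tallied per check as well as per PR, which shows which lever is weakest. This is a sketch under assumptions: the check names in `CHECKS`, the dictionary-per-PR format, and the four sample PRs are invented for illustration and would need to match however your team records review outcomes.

```python
from collections import Counter

# Hypothetical names for the five pass/fail questions, in the order listed above.
CHECKS = (
    "no_clarifying_question",
    "sources_authorized",
    "conflicts_resolved",
    "task_shaped",
    "landed_first_round",
)


def tally(prs: list[dict[str, bool]]) -> dict[str, float]:
    """Pass rate per check across the sampled PRs."""
    if not prs:
        raise ValueError("need at least one PR")
    passes = Counter()
    for pr in prs:
        for check in CHECKS:
            passes[check] += bool(pr[check])
    return {check: passes[check] / len(prs) for check in CHECKS}


def all_pass(**overrides: bool) -> dict[str, bool]:
    """Build a PR record that passes every check except the ones overridden."""
    record = {check: True for check in CHECKS}
    record.update(overrides)
    return record


# Made-up sample of four PRs.
sample = [
    all_pass(),
    all_pass(task_shaped=False),
    all_pass(conflicts_resolved=False, task_shaped=False, landed_first_round=False),
    all_pass(),
]
rates = tally(sample)
weakest = min(rates, key=rates.get)
print(rates)
print("weakest check:", weakest)
```

A low rate on one check points at the operation to fix first, which is more actionable than a single blended coverage number.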

Continue reading:

- What is a context engine? — the system that delivers decision-grade context.

- Context engine vs RAG — why retrieval alone falls short.

- Context engineering — the discipline that operationalizes the threshold.

- Stop babysitting your agents — what the threshold buys you.

- A context layer is not a context engine — the maturity distinction.

Dennis Pilarinos is the founder and CEO of Unblocked, where his team builds context engines for enterprise engineering organizations. Previously, he led engineering teams at Microsoft and AWS.