Context Engine vs RAG: The Essential Breakdown

Brandon Waselnuk·April 27, 2026

The short version:

- RAG pulls documents by vector similarity. A context engine runs the judgment work on top: conflict resolution, permission enforcement, and task-shaped delivery to the agent.

- The average model hallucination rate sits at 8.2% in 2026, and reasoning models still exceed 10% on grounded summarization benchmarks (Vectara Hallucination Index via Suprmind, 2026). Retrieval narrows the search space; it does not close the reasoning gap.

- Four recurring failure modes show up when RAG runs alone under a coding agent: conflict blindness, staleness, permission leakage, and reasoning gaps.

- Reasoning-grade engines keep RAG inside the stack. They treat it as a retrieval tactic, not the finished product.

- Reach for RAG when the corpus is authoritative and the question is a lookup. Reach for a context engine when sources disagree, permission scopes differ per user, or the agent has to act rather than answer.

Disclosure: The author's company builds context engine technology. This comparison aims to represent RAG fairly; RAG is often the right tool when used inside the right system.

Table of Contents

- What's the Difference Between RAG and a Context Engine?

- How Does RAG Work?

- Where Does RAG Break for AI Coding Agents?

- How Does a Context Engine Solve What RAG Can't?

- When Should You Use RAG vs a Context Engine?

- Can a Context Engine Use RAG Internally?

- FAQ

Introduction

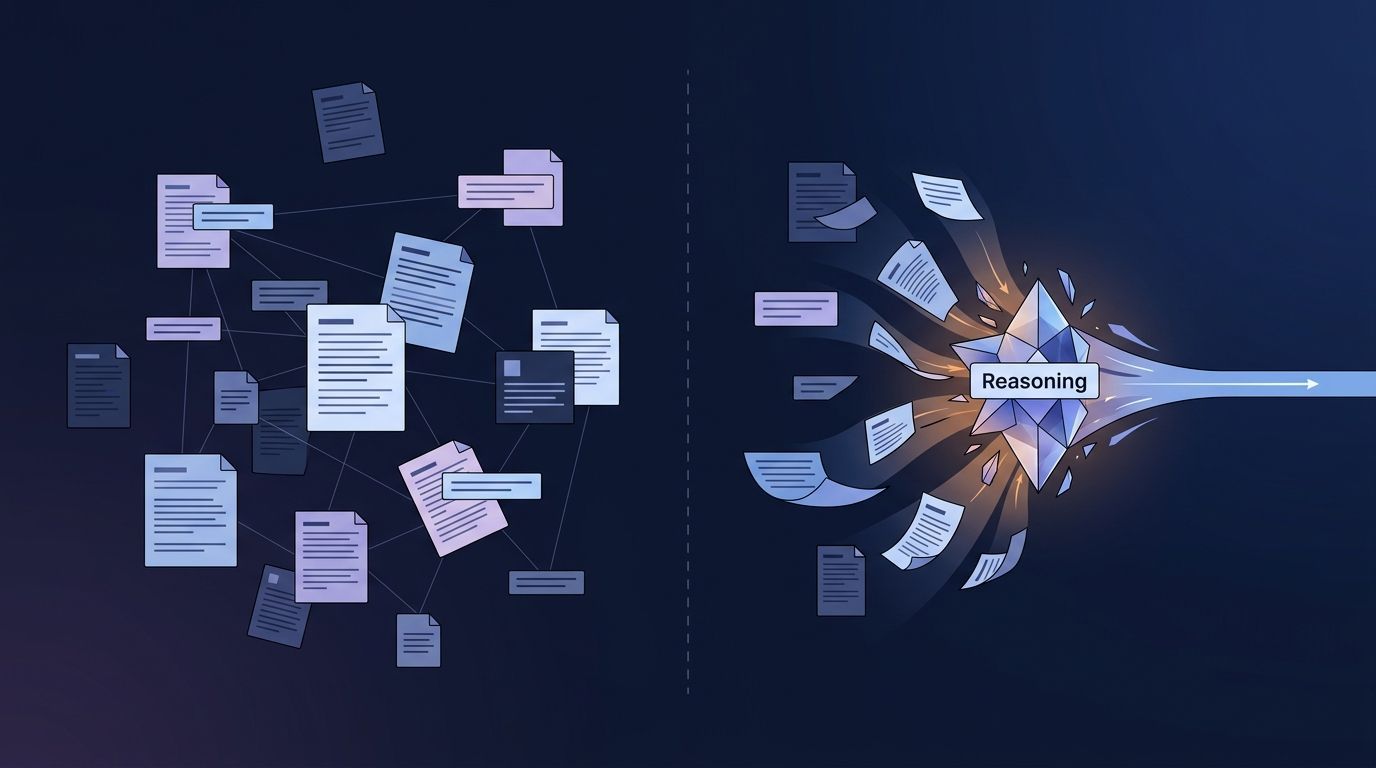

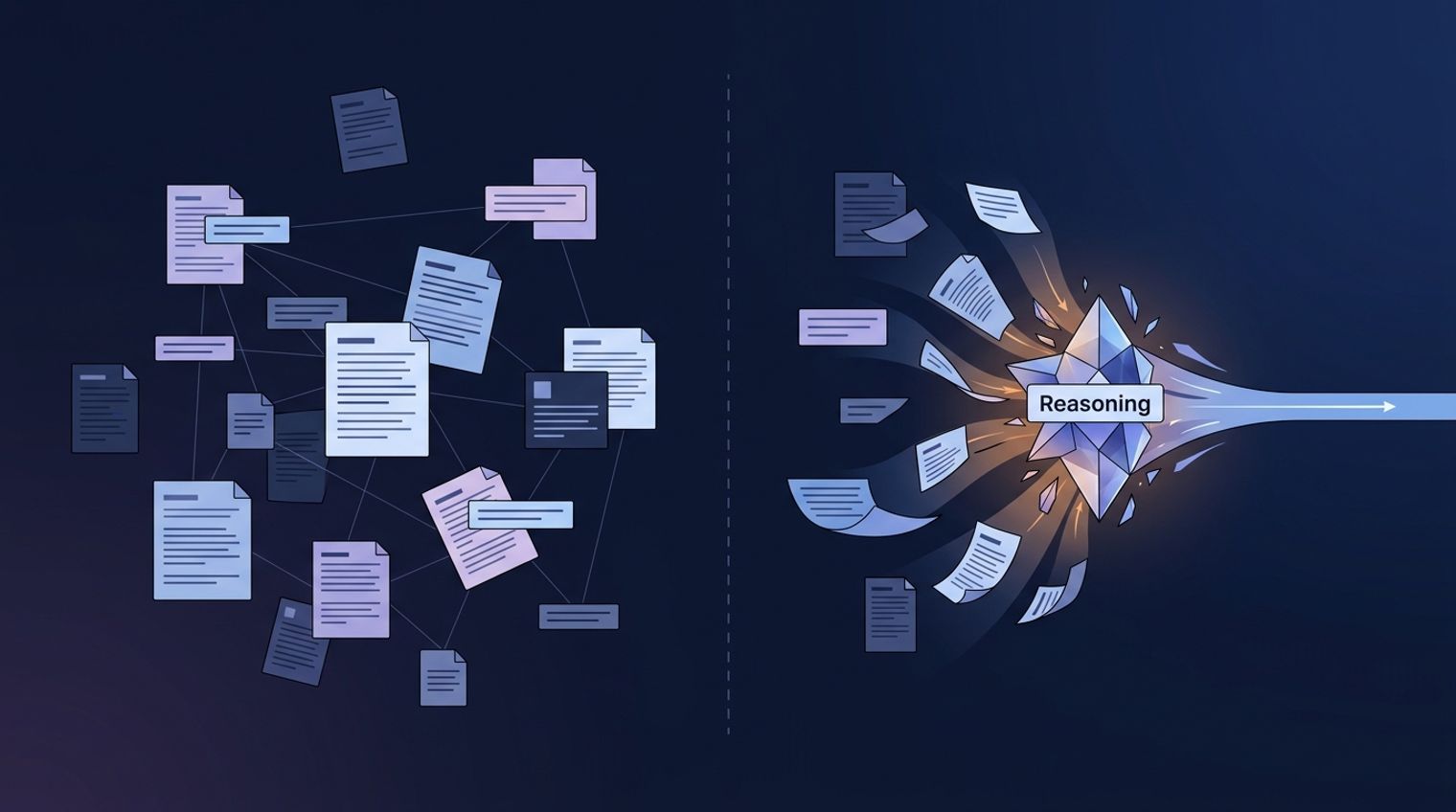

RAG returns passages. A context engine returns a decision. That one-line distinction is the subject of this post. RAG retrieves documents by vector similarity and hands them to an LLM. A context engine performs the reasoning work that retrieval skips: resolving conflicts between sources, enforcing permissions, and shaping the final context to the specific task the agent is about to act on.

The practical picture: a staff engineer opens a PR from her coding agent on Tuesday morning. The diff compiles. Tests pass. Line 47 calls a retry helper the platform team deprecated six weeks ago in favor of a circuit-breaker pattern. The agent's retrieved context was three cosine-similar snippets, with nothing to signal that the newest snippet superseded the other two. The RAG pipeline did its job. The agent did not. This post is for staff and principal engineers who have already deployed RAG and hit that ceiling.

What's the Difference Between RAG and a Context Engine?

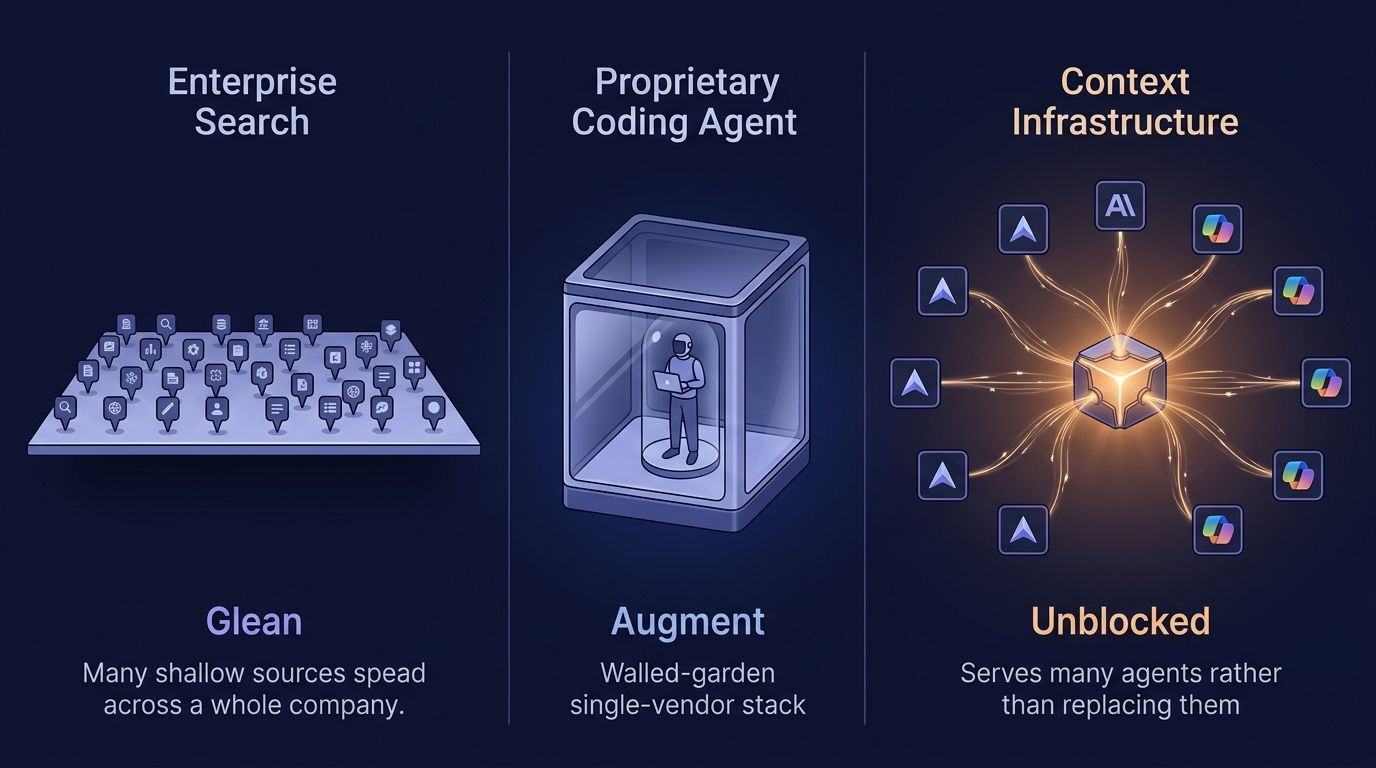

RAG retrieves documents by similarity and hands them to an LLM. A context engine retrieves, resolves conflicts between sources, enforces permissions, and reasons about what the combination means for a specific task. RAG returns "plausibly relevant." A context engine returns "decision-grade."

The relationship is hierarchical. RAG is a retrieval tactic. A context engine is the system that decides whether RAG is appropriate, what RAG should search, how to handle RAG's output, and what operations come next before the agent sees anything. Most reasoning-grade context engines use RAG as one of several ingestion mechanisms. The distinction is not "RAG or not." It is whether the system stops at retrieval or continues into reasoning.

How Does RAG Work?

A standard RAG pipeline has four stages: chunk, embed, retrieve, generate. Documents are split into passages, each passage is encoded as a vector embedding, the embeddings are stored in a vector database, and at query time the pipeline finds the most similar embeddings to the question and injects the original passages into the prompt. The LLM then generates a response grounded in those passages.
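The four stages can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the `embed` function is a toy bag-of-words counter standing in for a learned embedding model, an in-memory list stands in for the vector database, and the generate stage is reduced to assembling the prompt an LLM would receive. All function names and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 12) -> list[str]:
    """Stage 1: split a document into fixed-size word windows."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(passage: str) -> Counter:
    """Stage 2: toy embedding (word counts), a stand-in for a real model."""
    return Counter(re.findall(r"[a-z0-9]+", passage.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, index: list[tuple[str, Counter]], k: int = 2) -> list[str]:
    """Stage 3: return the k passages most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [passage for passage, _ in ranked[:k]]


def build_prompt(query: str, passages: list[str]) -> str:
    """Stage 4 (input side): inject the retrieved passages into the prompt."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical corpus; the list below plays the role of the vector store.
docs = [
    "Retries use the circuit breaker helper in the platform library.",
    "Deploys run through the staging pipeline before production.",
    "The auth service issues short lived tokens for every session.",
]
index = [(p, embed(p)) for d in docs for p in chunk(d)]

top = retrieve("How do retries work?", index, k=1)
prompt = build_prompt("How do retries work?", top)
```

Note what the sketch does not do: nothing in it knows who is asking, how old a passage is, or whether two passages contradict each other. Ranking is similarity and nothing else.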

This pattern works well because embeddings capture meaning, not just keywords, and because the LLM usually respects content provided directly in its context window. For single-source Q&A over an authoritative corpus, standard RAG handles the problem competently. Its weakness is sensitivity to retrieval quality: the 2023 Chain-of-Note research from Tencent AI Lab documented that irrelevant retrieval degrades RAG performance and can lead the model to overlook correct internal knowledge (arXiv 2311.09210). The pattern is strong when retrieval quality is high. It breaks when retrieval quality is mixed, which is the default state for enterprise engineering context.

Where Does RAG Break for AI Coding Agents?

Four specific failure modes recur when teams use RAG alone to provide context to AI coding agents. Each is individually manageable. In combination they produce agents that generate code that compiles, passes tests, and fails review.

Four failure modes of RAG for AI coding agents:

| # | Failure mode | What happens | Failure signature |
|---|---|---|---|
| 1 | Conflict blindness | Three sources disagree on the same pattern. RAG returns all three. The agent picks one and ships. | "Satisfaction of search" |
| 2 | Staleness | A Notion page from 2023 has the same similarity as the PR that replaced it last month. | Confidently outdated code |
| 3 | Permission leakage | Embedding retrieval ignores source-level access control. A productivity tool becomes a governance incident. | Audit finding |
| 4 | Reasoning gaps | Similarity finds documents. It does not verify truth. Stanford's "Semantic Illusion" paper shows 100% FPR on real RLHF-aligned hallucinations. | Plausible-but-wrong output |

Four failure modes RAG cannot solve alone. Source: Unblocked analysis; Stanford HAI; arXiv 2512.15068.

Conflict blindness

When three internal docs describe the same architectural pattern three different ways, RAG returns all three. Vector similarity cannot tell the agent which document is authoritative, which is stale, or which matches the current code. The 2024 arXiv paper "Seven Failure Points When Engineering a Retrieval Augmented Generation System" identified this class of failure explicitly, alongside ranking errors and wrong-format extraction, and concluded that "validation of a RAG system is only feasible during operation" (Barnett et al., 2024). Without conflict resolution upstream, the agent inherits the contradictions.

Staleness

A Notion page describing your auth flow from 2023 can score the same embedding similarity as the pull request that replaced it last month. RAG has no native freshness model. Recency metadata exists in most corpora but is not typically part of the retrieval ranking. The agent confidently applies the old pattern, ships code that uses a deprecated interface, and adds to the review backlog.
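One way to picture a freshness model is a rerank step that discounts similarity by document age. The sketch below is an assumption for illustration only: the `Hit` type, the source names, the scores, and the 180-day half-life are all hypothetical, and exponential decay is just one possible choice. Explicit "superseded by" links, where they exist, would be a stronger signal than age alone.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Hit:
    source: str
    similarity: float  # score from the vector search, 0..1
    updated: date      # last-modified date carried as metadata


def freshness_score(hit: Hit, today: date, half_life_days: float = 180.0) -> float:
    """Halve the similarity score for every half_life_days of age."""
    age_days = max((today - hit.updated).days, 0)
    return hit.similarity * 0.5 ** (age_days / half_life_days)


# Two hypothetical hits with identical similarity but very different ages.
hits = [
    Hit("notion/auth-flow", 0.82, date(2023, 3, 1)),
    Hit("pr/auth-rewrite", 0.82, date(2026, 3, 20)),
]
today = date(2026, 4, 27)

# Similarity alone cannot separate these two; the age discount can.
ranked = sorted(hits, key=lambda h: freshness_score(h, today), reverse=True)
```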

Permission leakage

Embedding retrieval ignores source-level access control. An agent acting on behalf of a junior developer can retrieve passages from repositories or Confluence spaces that developer is not authorized to see. The productivity tool becomes a governance liability, and the problem is typically discovered in an audit rather than in code review. Permission enforcement has to happen at ingestion and delivery, which means it is not something you layer onto RAG after the fact.

Why does retrieval miss reasoning?

Retrieval finds similar content. It does not verify truth. A 2025 arXiv paper titled "The Semantic Illusion" measured this directly: embedding-based hallucination detectors achieved 95% coverage at a 0% false-positive rate (FPR) on synthetic hallucinations but failed catastrophically, with a 100% FPR, on real RLHF-aligned model hallucinations. The same task was tractable for a GPT-4-as-judge setup at 7% FPR. The paper's conclusion was that the problem "is solvable via reasoning but opaque to surface-level semantics" (arXiv 2512.15068). Retrieval finds similarity. Reasoning finds truth. The gap between the two is where RAG-only agents fail.
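A toy example shows the shape of the problem. It uses a bag-of-words vector as a stand-in for a learned embedding, so it is an illustration rather than a measurement of any real model, and the sentences are hypothetical. A statement and its direct contradiction score as near-duplicates, because they share almost every token.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


current = "the retry helper is the recommended pattern"
contradiction = "the retry helper is not the recommended pattern"
unrelated = "deploys run through staging before production"

sim_contradiction = cosine(embed(current), embed(contradiction))
sim_unrelated = cosine(embed(current), embed(unrelated))

print(f"{sim_contradiction:.2f} {sim_unrelated:.2f}")  # prints: 0.95 0.00
```

The similarity score separates "on topic" from "off topic" cleanly. It says nothing about which of the two on-topic statements is true.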

How Does a Context Engine Solve What RAG Can't?

A context engine sits above retrieval and performs three operations that RAG alone does not: conflict resolution, permission enforcement, and task-shaping. The engine does not replace RAG; it uses RAG as one possible ingestion mechanism and continues past retrieval into reasoning.

Conflict resolution

The engine weighs retrieved sources against each other and against code ground truth. When two documents disagree, the engine makes an explicit decision: the more recent one wins, or the one that matches the code wins, or the one from the authoritative owner wins. The agent receives a single resolved answer with provenance, not a pile of similar passages.
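A minimal sketch of such a decision rule follows. The precedence order used here (matches the code, then authoritative owner, then recency) is an assumption chosen for illustration; the `Claim` type, its fields, and the sample sources are hypothetical, and a real engine would derive signals like `matches_code` from the repository rather than take them as inputs.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Claim:
    source: str
    pattern: str
    updated: date
    from_owner: bool    # written by the team that owns the pattern
    matches_code: bool  # agrees with what the code currently does


def resolve(claims: list[Claim]) -> dict:
    """Pick one winner by explicit precedence and keep provenance."""
    # Tuples compare left to right: code agreement first, then owner, then recency.
    winner = max(claims, key=lambda c: (c.matches_code, c.from_owner, c.updated))
    return {
        "pattern": winner.pattern,
        "provenance": winner.source,
        "superseded": [c.source for c in claims if c is not winner],
    }


claims = [
    Claim("wiki/retries", "retry-helper", date(2025, 9, 2), False, False),
    Claim("adr/circuit-breaker", "circuit-breaker", date(2026, 3, 16), True, True),
    Claim("slack/platform-thread", "retry-helper", date(2026, 3, 30), False, False),
]
resolved = resolve(claims)
```

In this example the most recent source loses: the chat thread is newer, but the decision record matches the code and comes from the owning team. That is the kind of judgment a similarity ranking has no way to express.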

Permission enforcement

The engine enforces source-level ACLs at both ingestion (what enters the index at all) and delivery (what is returned for a specific user's query). The result: an agent acting on behalf of a junior developer receives context consistent with that developer's scope. Permission enforcement is the single biggest architectural gap between "RAG in a POC" and "retrieval system you can ship to a regulated industry."
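The delivery half of that enforcement can be sketched as a filter that runs before anything reaches the prompt. This assumes each passage carries the ACL of its source, captured at ingestion as a set of group names; the `Passage` type, the group names, and the sources are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    text: str
    source: str
    allowed_groups: frozenset[str]  # ACL copied from the source at ingestion


def deliver(passages: list[Passage], user_groups: set[str]) -> list[Passage]:
    """Return only passages the requesting user is allowed to read."""
    return [p for p in passages if p.allowed_groups & user_groups]


retrieved = [
    Passage("Payments retries use the circuit breaker.", "repo/payments",
            frozenset({"payments-eng"})),
    Passage("Shared lint rules live in the tooling repo.", "repo/tooling",
            frozenset({"all-eng"})),
]

# The agent acts on behalf of a developer who is only in the broad group.
junior_groups = {"all-eng"}
visible = deliver(retrieved, junior_groups)
```

The filter is keyed on the user the agent is acting for, not on the agent itself. An agent with a broad service account and no per-user check is how the leakage described earlier happens.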

Task-shaping

A context engine knows what the agent is about to do. Writing a unit test, refactoring a function, debugging a production incident, and reviewing a PR are different tasks with different context needs. RAG handles retrieval the same way regardless of the task; a context engine shapes what is returned based on the downstream operation. Parsimony at the delivery layer prevents the context rot that Chroma Research documented across 18 frontier models (Chroma, 2025).
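As a rough sketch, task-shaping can be pictured as a per-task profile that says which kinds of source matter and how much context to deliver. The task names, source kinds, and item limits below are hypothetical values chosen for illustration, not a description of any particular engine.

```python
# Hypothetical profiles: preferred source kinds (in priority order) and a cap.
TASK_PROFILES = {
    "write_unit_test": {"kinds": ("code", "test"), "max_items": 3},
    "debug_incident": {"kinds": ("ticket", "chat", "code"), "max_items": 5},
    "review_pr": {"kinds": ("code", "doc"), "max_items": 4},
}


def shape(task: str, items: list[dict]) -> list[dict]:
    """Keep only the source kinds this task needs, ordered and capped."""
    profile = TASK_PROFILES[task]
    wanted = [i for i in items if i["kind"] in profile["kinds"]]
    # Order by the task's kind priority, then by retrieval score (highest first).
    wanted.sort(key=lambda i: (profile["kinds"].index(i["kind"]), -i["score"]))
    return wanted[: profile["max_items"]]


candidates = [
    {"kind": "chat", "text": "Thread about the outage", "score": 0.90},
    {"kind": "code", "text": "retry.py", "score": 0.80},
    {"kind": "test", "text": "test_retry.py", "score": 0.70},
    {"kind": "doc", "text": "Retry guidelines", "score": 0.95},
]

for_test = shape("write_unit_test", candidates)
for_incident = shape("debug_incident", candidates)
```

The same four candidates produce different context for different tasks, and the highest-scoring item (the doc) is dropped for both tasks shown because neither profile asks for it.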

What does the combined effect look like in practice?

Scores on SWE-bench, the primary agent-coding benchmark, have moved from under 4% on the original benchmark in late 2023 to 78-93% on SWE-bench Verified in 2026, with Claude Opus 4.5 paired with the Live-SWE-agent harness scoring 79.2% (Live-SWE-agent Leaderboard). The models got better. The agent harnesses around them got dramatically better. The harnesses did not improve by switching to purer RAG. They improved by treating retrieval as one step in a longer reasoning pipeline.

When Should You Use RAG vs a Context Engine?

RAG is the right tool when the corpus is authoritative, the question is a lookup, and no conflict resolution is required. Typical cases are a customer-support knowledge base, an internal policy search, or a single-source Q&A product. In those settings the standard RAG pattern is well-understood, cheap to operate, and often sufficient.

The choice shifts when three conditions appear together: multiple conflicting sources, permission scopes that vary per user, and an agent that needs to act rather than answer. Those conditions describe most enterprise engineering contexts. When your agent is writing PRs rather than answering questions, when your sources include code, chat, tickets, and docs simultaneously, and when compliance requires per-user scoping, you have moved past RAG's design envelope and into the work a context engine performs.

A working decision rule: if the failure mode of an incorrect answer is "a bad information result," use RAG. If the failure mode is "a bad PR that ships to production," you need the reasoning layer above it.

Can a Context Engine Use RAG Internally?

Yes, and most do. RAG is an excellent first-pass retrieval tactic for unstructured text. A mature context engine uses retrieval (RAG-style vector similarity, graph-based code traversal, or structured query over metadata) as an ingestion step and then runs conflict resolution, permission enforcement, and task-shaping on top. The distinction is not whether RAG is present. The distinction is whether the system stops at retrieval output or continues into reasoning.

One practical implication: teams that have already invested in RAG infrastructure do not throw it away when they move to a context engine. They keep the vector store, the embedder, and the reranker, and they add the reasoning operations above them. The investment is additive, not replacement.

For a detailed treatment of how the full stack assembles, see "What is a context engine?"

What practitioners say

"You cannot make coding agents work without domain and functional context. We connected and trained Unblocked on our code repos, Atlassian tools, internal docs, product documentation, KB from Support and Slack history. When an agent asks a question, it gets the full picture — not just the code analysis, but also why decisions were made and what the constraints are. Other tools like Copilot know only the code. That's limited value. Unblocked is a game changer for coding agents."

— Raphael Bres, CTO, Tradeshift

FAQ

Is "context engine" just rebranded RAG?

No. RAG is retrieval. A context engine reasons about what was retrieved, resolves conflicts, enforces permissions, and synthesizes before delivering to the agent. Rebranded RAG is still RAG. You can tell the difference by asking a vendor to describe how they resolve a conflict between two sources. If the answer is "we return the top-k matches," you are looking at a layer, not an engine.

Do I need vector databases for a context engine?

You might use them as a component. You might also use graph-based stores, structured indices, or hybrid approaches. The specific retrieval mechanism is an implementation detail. A context engine is defined by what happens after retrieval (conflict resolution, permission enforcement, task-shaping), not by whether the retrieval itself uses vectors, graphs, or something else.

My RAG pipeline works. Do I need a context engine?

It depends on the failure mode. If your agents consistently ship working code on the first run, your RAG is sufficient. If they ship code that compiles and fails review, retrieval is not the limiting factor. Reasoning is.

What about the lab-versus-production gap?

Augment Code published an internal benchmark suggesting AI coding tools show a gap of at least 47 points between lab accuracy (65-71%) and production deployment accuracy (17.67%) (Augment Code). Attribute that number to their methodology, but the direction is consistent with what practitioners report: retrieval looks great in a demo and degrades under real workloads because conflicts, staleness, and permission handling are not stressed in benchmarks.

How does this relate to MCP?

Model Context Protocol (MCP) is a delivery mechanism. It is orthogonal to the retrieval-vs-reasoning question. An agent can connect to MCP servers and still exhibit all four RAG failure modes listed above. MCP and context engineering are complementary: MCP solves plumbing, context engineering solves decision-making. Read more on why MCP alone is not enough.

When RAG Is the Right Tool

Use this checklist before reaching past RAG. If you can answer "yes" to all four, ship RAG and stop there:

- Your corpus has one owner and one source of truth. No three-docs-disagree problem.

- The user is a human reading an answer, not an agent writing code. A human catches staleness; an agent does not.

- Access control is uniform across the index. Every reader sees the same documents, or the scope is narrow enough that per-user filtering is not required.

- Correctness is graded as "did we surface a relevant passage," not "did the downstream action succeed."

Customer-support knowledge bases, internal policy search, single-corpus Q&A products — RAG is the right tool and there is no reason to over-engineer. The moment any of those four flips — multiple conflicting sources, an autonomous agent consumer, per-user permission scopes, or correctness measured by shipped output — RAG becomes a component inside a larger system rather than the system itself. That larger system is what a context engine provides. Keep the vector store, the embedder, the reranker. Add conflict resolution, permission enforcement, and task-shaping above them. The investment compounds; the reasoning layer is where the agent stops being something you babysit.
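The four-question checklist above can be written down as a small predicate, which is sometimes useful as a design-review prompt. The field names are hypothetical labels for the four questions, not an established schema.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    single_source_of_truth: bool   # one owner, no conflicting docs
    consumer_is_human: bool        # a person reads the answer
    uniform_access_control: bool   # every reader sees the same documents
    graded_on_relevance: bool      # success = a relevant passage surfaced


def rag_is_sufficient(w: Workload) -> bool:
    """RAG alone is enough only when all four answers are yes."""
    return all((
        w.single_source_of_truth,
        w.consumer_is_human,
        w.uniform_access_control,
        w.graded_on_relevance,
    ))


support_kb = Workload(True, True, True, True)
coding_agent = Workload(False, False, False, False)
policy_search_per_user = Workload(True, True, False, True)
```

Here `rag_is_sufficient(support_kb)` is true, while a single "no", as in `policy_search_per_user`, is enough to flip the answer.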

Continue reading:

- What is a context engine? — the full architectural view.

- A context layer is not a context engine — layer vs engine distinction.

- Why MCP alone is not enough — the delivery-layer argument.

- Context engineering — the discipline above tactics.

Brandon Waselnuk is VP of Product at Unblocked. He writes about AI coding agents, enterprise context infrastructure, and the engineering tradeoffs hidden inside dev-tool adoption.