What Is a Context Engine? The Complete Guide for Engineering Teams

Dennis Pilarinos·April 20, 2026

In brief: A context engine feeds AI coding agents the institutional knowledge they need to produce correct, safe code. The need is measurable: in a 2025 study of AI models maintaining real-world codebases, 75% introduced breaking regressions (Alibaba / Sun Yat-sen University, arXiv 2504.02012, 2025). A context engine is not RAG; it layers reasoning, ranking, and delivery on top of retrieval. Teams that adopt one see fewer tool calls, broader code coverage, and higher benchmark scores.

Disclosure: The author's company builds context engine technology. This guide aims to be vendor-neutral; specific products are discussed only in the market landscape section.

Table of Contents

- What Is a Context Engine?

- Why Do AI Agents Need a Context Engine?

- How Does a Context Engine Work?

- What's the Difference Between a Context Engine and RAG?

- Who Is Building Context Engines in 2026?

- What Makes a Context Engine Effective?

- How Does a Context Engine Fit Into the Broader Context Stack?

- Should You Build or Buy a Context Engine?

- Tools and Resources

- Getting Started

- FAQ

Introduction

Across 18 AI agents tested on 100 real-world codebases over 233 days, three out of four introduced breaking regressions (Alibaba / Sun Yat-sen University, arXiv 2504.02012, 2025). These were not toy benchmarks; they were production codebases. The models were not stupid. They were starved: cut off from the history, conventions, and architectural decisions that would have told them what the code actually meant.

The root cause is not model intelligence. It is context starvation. AI agents generate code without understanding the system they are modifying. They do not know your naming conventions, your deployment constraints, your architectural decisions from three years ago, or the reason that function has a strange workaround in it.

That is what a context engine fixes. It sits between your team's knowledge and your AI agents, feeding them the right context at the right time so they produce code that fits your system.

This guide covers how these systems work, how they differ from simpler retrieval tools, and what to look for when picking one. It is written for engineering leaders at teams where codebases are large, history matters, and AI-generated regressions are not acceptable.

With 51% of code committed to GitHub now AI-generated or AI-assisted (GitHub Octoverse, 2025), the question is no longer whether your team will use AI coding agents. It is whether those agents will have the context they need to do the job correctly.

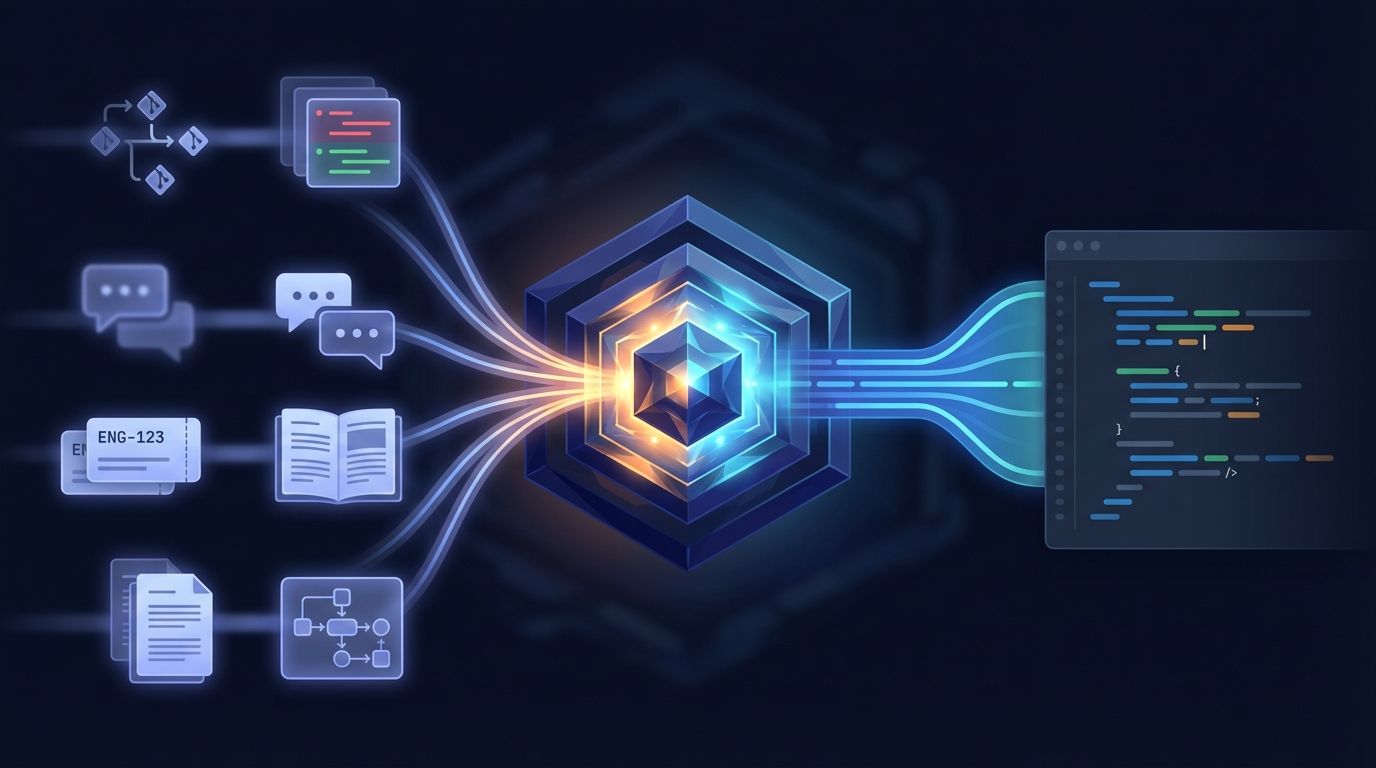

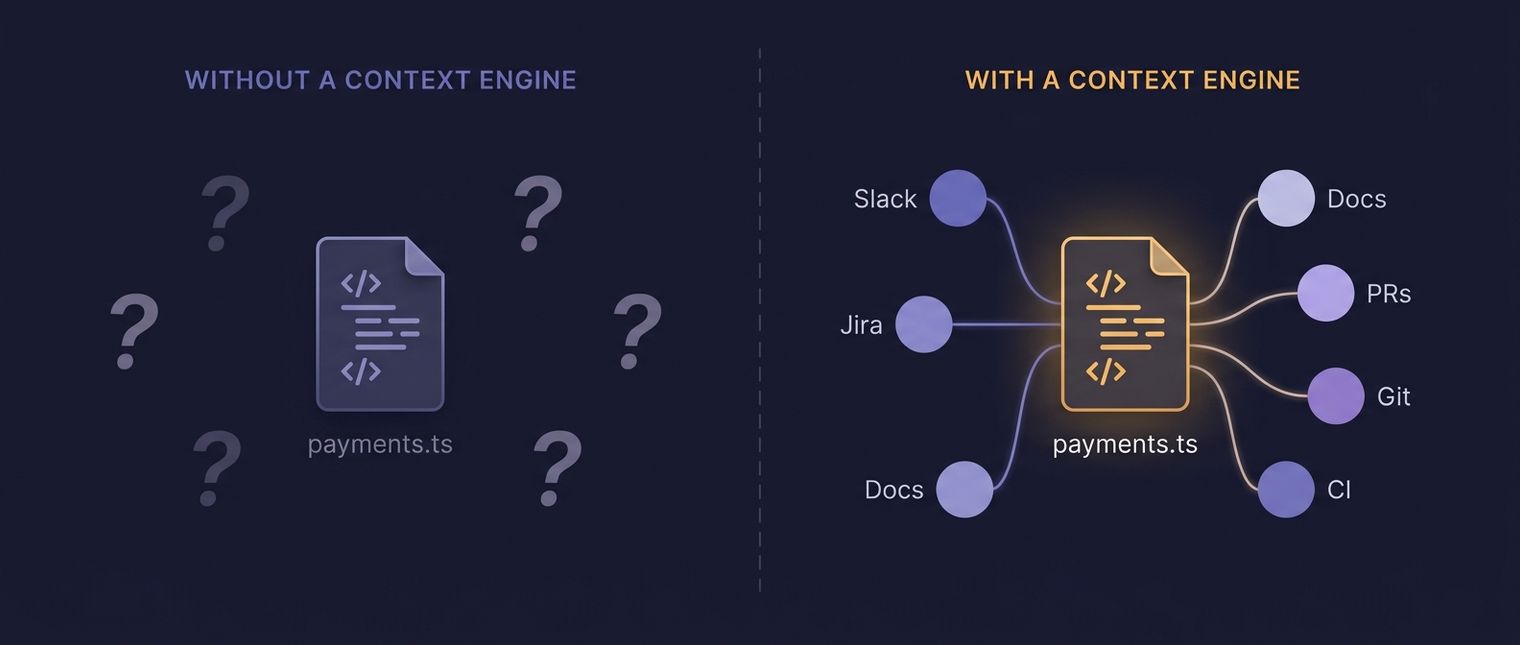

The context gap: what the agent can and cannot see. Source: Unblocked.

What Is a Context Engine?

A context engine is a system that ingests, indexes, reasons over, and delivers institutional knowledge to AI agents so they can act correctly within a specific codebase. Enterprise AI adoption reached 88% in 2025, yet 70-85% of AI projects still fail (Deloitte State of Generative AI, 2025-2026). The reason is usually context, not capability.

What a context engine actually does

Picture a developer's long-term memory, pulled out of their head and made available to machines. The system plugs into your code repos, docs, PR history, issue trackers, CI/CD logs, Slack threads, and design docs. Then it builds a structured, queryable map of everything your team knows about its software.

When an AI agent needs to modify a service, the context engine does more than find relevant files. It captures which files relate to each other, why certain patterns exist, what broke last time someone touched this module, and which team owns the code.

What it replaces

Without one, teams rely on manual prompt stuffing, static docs, or hope. Engineers paste code snippets into chat windows. They write long system prompts that go stale within weeks. They accept that AI-generated code will need heavy review because the agent does not "get" the system.

An automated pipeline replaces all of that manual work. It keeps context fresh, relevant, and structured, then delivers it in whatever format the consuming agent or tool requires.

A working definition

This is infrastructure, not a feature. It is a standalone system with four jobs: ingest knowledge sources, index across formats, reason about what is relevant and how things connect, and deliver precise context to whoever needs it. It runs continuously, not on demand. And it serves any number of AI agents, tools, or workflows, not just one.

Citation Capsule: A context engine is infrastructure that ingests, indexes, reasons over, and delivers institutional knowledge to AI agents. Deloitte (2025-2026) reports that 70-85% of enterprise AI projects fail; for AI coding tools in production environments, the primary bottleneck is context starvation, not model capability.

Why Do AI Agents Need a Context Engine?

Without grounding in real project context, AI coding agents hallucinate at alarming rates. Research shows 29-45% of AI-generated code contains security flaws, and roughly 20% of package recommendations point to libraries that do not exist (Suprmind Hallucination Research Report, 2026). Proper context grounding reduces these failures.

The hallucination problem is getting better, but not solved

The average hallucination rate across AI models fell from 38% in 2021 to 8.2% in 2026, with the best models reaching 0.7% (Vectara Hallucination Index via Suprmind, 2026). That sounds encouraging. But 8.2% across millions of daily code generations still means a staggering volume of incorrect output. And "incorrect" in a codebase does not mean a typo. It means broken builds, security holes, and silent regressions.

Even reasoning models, the most capable category available, still exceed 10% hallucination rates on grounded summarization benchmarks. The model alone cannot solve this. Better input can.

Why does scale make the problem worse?

Gartner projects that 40% of enterprise applications will feature task-specific AI agents by late 2026, up from less than 5% in 2025 (Gartner, 2025). That is not one agent writing code. That is dozens or hundreds of agents operating across your organization, each one making decisions about code it has never seen before.

At that scale, manual context management breaks down: you cannot have engineers hand-feeding prompts to every agent. How many agents are running in your org right now? If you do not know the answer, that is part of the problem.

Context is the differentiator

The thesis that context matters more than the model now has public evidence. On SWE-bench Pro, two agents running the same underlying model (Claude Opus 4.5) but different context approaches produced measurably different results: a 17-problem gap (Scale AI / Augment Code, 2026). Same model, different context, different outcomes. Unblocked's own controlled test pushed that observation further: on the same codebase with the same agent, decision-grade context upstream produced 48% fewer tokens and 83% faster task completion (getunblocked.com). Context is the variable with the highest leverage.

When agents lack proper context, they generate plausible-looking code that violates your architecture, duplicates existing utilities, ignores error-handling patterns, or calls deprecated APIs. The code compiles. The tests might even pass. Then it introduces the kind of subtle rot that compounds over months. Google's 2025 DORA research points the same way: AI tools function as a "mirror and multiplier" of existing engineering quality, improving strong teams and amplifying the weaknesses of weaker ones (Google DORA, 2025).

Citation Capsule: AI-generated code contains security vulnerabilities 29-45% of the time, and roughly 20% of package recommendations reference non-existent libraries, according to the Suprmind Hallucination Research Report (2026). Context engines reduce these failure modes by grounding agent output in verified institutional knowledge.

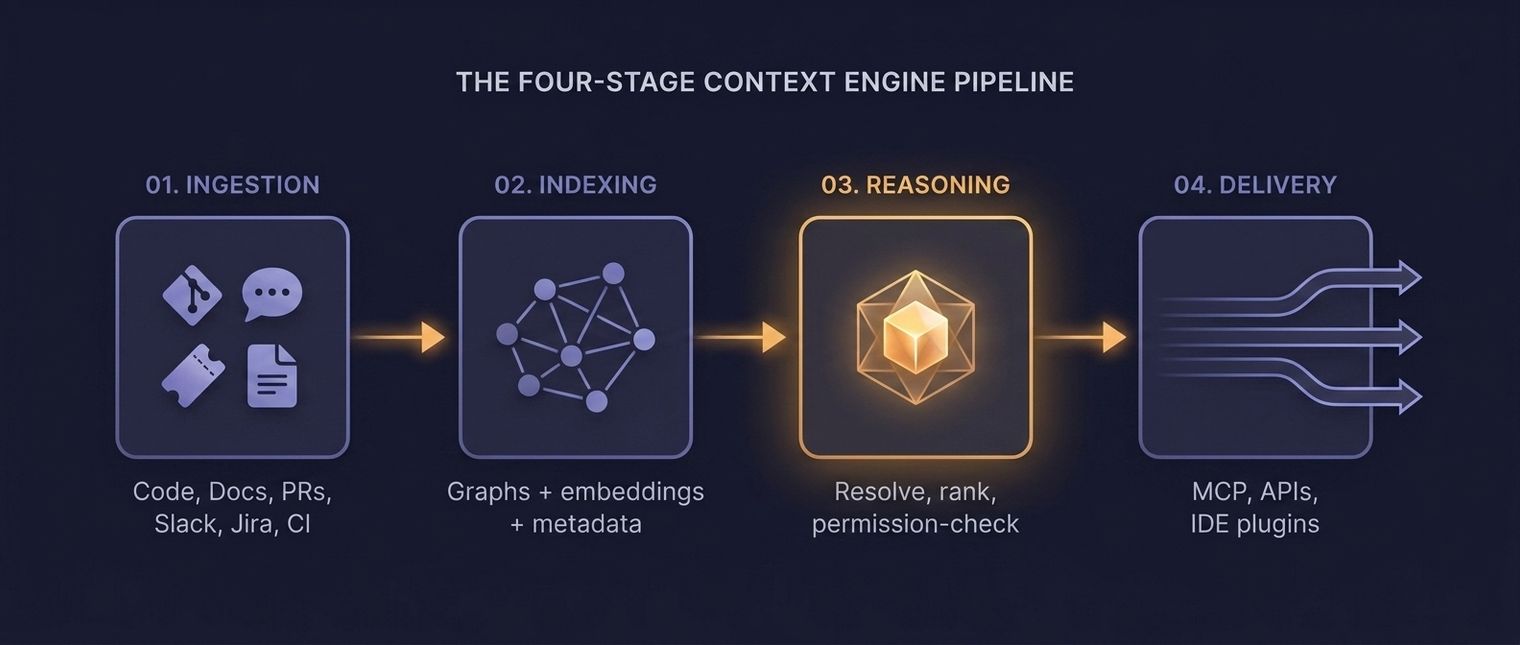

How Does a Context Engine Work?

A context engine typically operates as a four-stage pipeline: ingestion, indexing, reasoning, and delivery. Meta's engineering team demonstrated the approach at scale: 50+ specialized AI agents produced 59 context files, cutting tool calls per task by 40% and expanding code module coverage from 5% to 100% across 4,100+ files (Engineering at Meta, 2026).

Four-stage context engine pipeline. Source: Engineering at Meta (2026); Unblocked analysis.

Stage 1: Ingestion

The ingestion layer connects to every source of institutional knowledge: Git repositories, documentation systems, issue trackers, CI/CD pipelines, Slack or Teams conversations, design documents, and incident postmortems. It pulls data continuously, not as a one-time import. When a pull request merges or a document updates, the system knows within minutes.

Good ingestion handles multiple data formats, from code and markdown to images and diagrams. It preserves metadata like authorship, timestamps, and relationships between artifacts. A PR comment explaining why a change was made is often more valuable than the diff itself.
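To make "preserves metadata" concrete, here is a minimal sketch of a normalized ingestion record. The `Artifact` dataclass, its field names, and the input shape of the PR comment are hypothetical, not any product's schema; the point is that authorship, timestamps, and links to related artifacts travel with the content, so a comment explaining *why* a change was made stays attached to its PR.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    """One normalized unit of institutional knowledge (hypothetical schema)."""
    source: str                        # e.g. "git", "slack", "jira", "docs"
    id: str                            # source-specific identifier
    content: str
    author: str
    created_at: "datetime"
    related_ids: tuple[str, ...] = ()  # links to other artifacts


from datetime import datetime


def from_pr_comment(pr_number: int, comment: dict) -> Artifact:
    """Normalize a PR review comment (hypothetical input shape) into an Artifact.

    The comment carries the rationale for a change, so it is linked back to its PR.
    """
    return Artifact(
        source="git",
        id=f"pr-{pr_number}-comment-{comment['id']}",
        content=comment["body"],
        author=comment["user"],
        created_at=datetime.fromisoformat(comment["created_at"]),
        related_ids=(f"pr-{pr_number}",),
    )


comment = {
    "id": 7,
    "body": "Retry is capped at 3 because the upstream gateway rate-limits us.",
    "user": "alice",
    "created_at": "2025-11-03T14:20:00+00:00",
}
artifact = from_pr_comment(412, comment)
print(artifact.id, artifact.related_ids)
```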

Stage 2: Indexing

Raw data is not useful. The indexing layer transforms ingested content into structured, searchable representations. This includes vector embeddings for semantic search, code-aware graph structures that capture dependencies and call hierarchies, and metadata indexes that track ownership, recency, and quality signals.

The key difference from simple search indexing is multi-modal awareness. Code, documentation, and conversations about the same feature get linked together. The index understands that a Slack thread from six months ago, a design document, and a set of files in /src/payments/ all describe the same system boundary.
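As a toy illustration of cross-source linking, the sketch below assumes each artifact has already been tagged with the code paths it mentions (a hypothetical upstream step) and treats the first two path segments as the system boundary (also an assumption). A Slack thread, a design doc, and a source file that touch `/src/payments/` end up grouped together regardless of where they came from.

```python
from collections import defaultdict


def boundary_of(path: str) -> str:
    """Map a path to its system boundary: the first two segments (an assumption)."""
    parts = [p for p in path.split("/") if p]
    return "/" + "/".join(parts[:2]) + "/"


def link_by_boundary(artifacts: list[dict]) -> dict[str, list[str]]:
    """Group artifact ids from any source under the boundaries their paths fall in."""
    index: dict[str, set[str]] = defaultdict(set)
    for art in artifacts:
        for path in art["paths"]:
            index[boundary_of(path)].add(art["id"])
    return {boundary: sorted(ids) for boundary, ids in index.items()}


# Hypothetical artifacts from three different sources.
artifacts = [
    {"id": "slack-thread-981", "paths": ["/src/payments/refunds.py"]},
    {"id": "design-doc-payments-v2", "paths": ["/src/payments/"]},
    {"id": "file:/src/payments/charge.py", "paths": ["/src/payments/charge.py"]},
    {"id": "file:/src/auth/session.py", "paths": ["/src/auth/session.py"]},
]
print(link_by_boundary(artifacts)["/src/payments/"])
```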

Stage 3: Reasoning

This is where the system diverges most sharply from basic retrieval. The reasoning layer does more than find relevant documents. It evaluates, ranks, and assembles context packages tailored to a specific task.

If an agent needs to modify a payment processing function, the reasoning layer identifies the function itself, the related tests, the error-handling conventions in that module, the deployment constraints for that service, and the incident from last quarter when someone changed this function and caused an outage. It weighs recency, authority, and relevance to build a context window that fits within the agent's token limits.
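The final assembly step can be sketched as packing under a token budget. This minimal version assumes each candidate already carries a combined score and an estimated token count (both hypothetical fields, and the candidates are made up); it greedily takes the highest-scoring items that still fit. Greedy selection is a simple heuristic, not an optimal knapsack solution.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    score: float  # combined recency/authority/relevance score, higher is better
    tokens: int   # estimated size once rendered into the prompt


def pack_context(candidates: list[Candidate], budget: int) -> list[Candidate]:
    """Greedily select the highest-scoring candidates that fit within `budget` tokens."""
    chosen: list[Candidate] = []
    used = 0
    for cand in sorted(candidates, key=lambda c: c.score, reverse=True):
        if used + cand.tokens <= budget:
            chosen.append(cand)
            used += cand.tokens
    return chosen


candidates = [
    Candidate("charge() source", 0.95, 1200),
    Candidate("charge() tests", 0.90, 900),
    Candidate("Q3 outage postmortem", 0.85, 1500),
    Candidate("error-handling conventions", 0.80, 600),
    Candidate("unrelated billing README", 0.20, 4000),
]
print([c.name for c in pack_context(candidates, budget=4200)])
```

With a 4,200-token budget the four relevant items fit and the large, low-scoring README is left out.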

Parsimony matters at this stage. Chroma Research tested 18 frontier models and found every one degrades well before its context window fills. A model with a 200,000-token window shows material performance drops at 50,000 tokens (Chroma Research, Context Rot, 2025). Stuffing the prompt makes agents worse, not better.

Stage 4: Delivery

The delivery layer formats and transmits context to whatever system needs it. That might be an AI coding agent, an IDE extension, a code review tool, or a CI pipeline. Delivery must be fast (sub-second for interactive use), format-aware (different consumers need different structures), and token-efficient.

MCP (Model Context Protocol) has become a primary delivery standard with rapid 2025-2026 adoption (Anthropic MCP, 2026). Effective delivery also means knowing what not to send. Flooding an agent with 100,000 tokens of loosely related code is worse than sending 5,000 tokens of precisely relevant context.
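MCP messages are JSON-RPC 2.0, and agents invoke server capabilities with a `tools/call` request whose result carries a list of content blocks. The stdlib-only sketch below answers one such request for a hypothetical `get_context` tool; the tool name, its `task` argument, and the lookup table are assumptions. A real server would use an official MCP SDK and also handle the initialization handshake and `tools/list`.

```python
import json

# Hypothetical pre-assembled context, keyed by task description.
CONTEXT_BY_TASK = {
    "modify charge()": "charge() retries at most 3 times; raising the cap caused last quarter's outage.",
}


def handle_message(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request using MCP's tools/call request/result shape."""
    req = json.loads(raw)
    if req.get("method") != "tools/call":
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "error": error})
    params = req.get("params", {})
    if params.get("name") != "get_context":
        error = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
        return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "error": error})
    task = params.get("arguments", {}).get("task", "")
    text = CONTEXT_BY_TASK.get(task, "No context found for this task.")
    # Keep the payload small: one precise text block rather than whole files.
    result = {"content": [{"type": "text", "text": text}], "isError": False}
    return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "result": result})


request = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "get_context", "arguments": {"task": "modify charge()"}},
})
print(handle_message(request))
```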

Citation Capsule: Meta built 50+ specialized AI agents producing 59 context files through a structured context pipeline, achieving 40% fewer tool calls per task and expanding code module coverage from 5% to 100%, according to Engineering at Meta (2026). This four-stage approach (ingestion, indexing, reasoning, and delivery) defines how modern context engines operate.

What's the Difference Between a Context Engine and RAG?

RAG (Retrieval-Augmented Generation) reduces hallucinations by up to 71% when properly implemented, and combining RAG with RLHF and guardrails can achieve a 96% reduction versus baseline (Stanford arXiv 2311.09210, 2025). RAG is a technique. A context engine is a system. The distinction matters for engineering leaders evaluating infrastructure investments.

RAG is a component, not a solution

RAG follows a simple pattern: receive a query, retrieve relevant documents, and augment the prompt with those documents before generating a response. It is powerful. It is also limited. RAG does not reason about why a document matters. It does not understand code dependencies. It does not know that the document it retrieved was superseded by a newer decision.
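The retrieve-and-augment steps fit in a few lines. This toy sketch uses bag-of-words cosine similarity as a stand-in for learned embeddings (a deliberate simplification), uses made-up documents, and stops at building the augmented prompt, leaving out the generation call. It also reproduces the limitation described above: both retry policies come back, and nothing marks the 2022 one as superseded.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Bag-of-words term counts; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of the k documents most textually similar to the query."""
    q = vectorize(query)
    return sorted(docs, key=lambda d: cosine(q, vectorize(docs[d])), reverse=True)[:k]


def augment(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Prepend the retrieved documents to the prompt that would go to the model."""
    context = "\n".join(f"[{d}] {docs[d]}" for d in retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


docs = {
    "retry-policy-2022": "Payment retries use exponential backoff with 5 attempts.",
    "retry-policy-2025": "Replaces the 2022 policy: payment retries are now capped at 3 attempts.",
    "onboarding": "How to set up your laptop and request VPN access.",
}
print(augment("How many payment retries are allowed?", docs))
```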

A context engine uses retrieval (often RAG-style retrieval) as one component of a larger system. RAG is a carburetor. A context engine is the whole engine.

Where RAG falls short for code

Code has structure that unstructured document retrieval struggles with. Functions call other functions. Modules depend on modules. A change in one file can break behavior in a file that shares no textual similarity. RAG, operating on semantic similarity alone, misses these connections.

The alternative is code-aware indexes: graph structures that capture import chains, call hierarchies, type relationships, and dependency trees. When an agent asks about changing a function, the engine traverses the graph to find everything affected, rather than everything textually similar.
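A minimal sketch of that traversal, assuming a call graph has already been extracted (the edges below are hypothetical): invert caller-to-callee edges, then walk breadth-first from the changed function to collect everything that transitively depends on it. Note that `reports.daily` is found even though it shares no text with `charge`.

```python
from collections import deque

# caller -> callees, as a code-aware indexer might extract them (hypothetical data)
CALLS = {
    "api.checkout": ["payments.charge"],
    "jobs.retry_failed": ["payments.charge"],
    "payments.charge": ["payments.gateway_post"],
    "reports.daily": ["jobs.retry_failed"],
    "auth.login": ["auth.session"],
}


def impacted_by(changed: str, calls: dict[str, list[str]]) -> set[str]:
    """Return every function that directly or transitively calls `changed`."""
    callers: dict[str, set[str]] = {}
    for caller, callees in calls.items():
        for callee in callees:
            callers.setdefault(callee, set()).add(caller)

    seen: set[str] = set()
    queue = deque([changed])
    while queue:
        fn = queue.popleft()
        for caller in callers.get(fn, ()):
            if caller not in seen:  # the seen-set also guards against call cycles
                seen.add(caller)
                queue.append(caller)
    return seen


print(sorted(impacted_by("payments.charge", CALLS)))
```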

When RAG is enough

For simple use cases, RAG works fine. If your team needs a chatbot that answers questions about internal documentation, RAG is probably sufficient. If you are building a customer-facing Q&A system over a knowledge base, RAG handles that well.

If you are running AI agents that modify production code in a legacy monolith with fifteen years of history, you need the reasoning, ranking, and delivery capabilities that a full context engine provides.

Citation Capsule: RAG reduces hallucinations by up to 71% when properly implemented, per Stanford (2025), but it operates as a retrieval technique, not a complete system. Context engines build on RAG by adding code-aware indexing, dependency graph traversal, and reasoning layers that understand why retrieved information matters.

Who Is Building Context Engines in 2026?

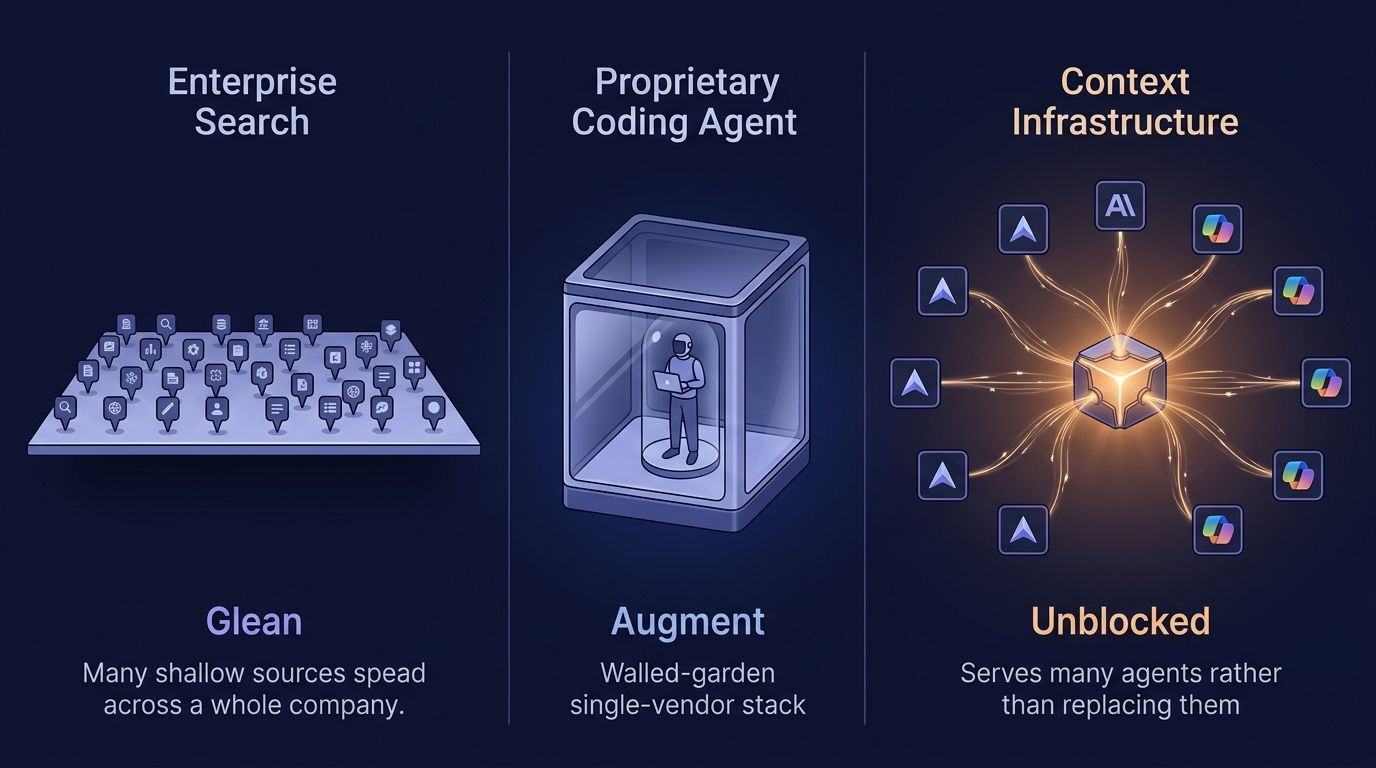

This category is moving fast, and the vendors using the term "context engine" mean different things by it. Four names appear most often in engineering-leader shortlists: ServiceNow, Tabnine, Augment Code, and Unblocked. They operate in different architectural categories, which is typically where buyer confusion starts.

Engineering-context infrastructure

Some products are context infrastructure that feeds the coding agents the team already uses. Unblocked is the clearest example: a context engine that reasons across code history, Slack discussions, Jira decisions, Confluence specs, and PR threads, then delivers decision-grade context to any coding agent via MCP (Cursor, Claude Code, Copilot, Codex, Windsurf) or REST API (docs.getunblocked.com). The infrastructure approach compounds as teams add more agents, because every agent benefits from the same context upstream.

Augment Code sits in an adjacent but distinct category: a proprietary coding agent with its own code index, its own IDE extension, and its own agent runtime. Augment indexes code deeply but does not ingest the Slack, Jira, and documentation sources where most "almost right" failures come from. Internal platforms built by large engineering organizations (Meta's tribal-knowledge mapping is the published example) represent the build-it-yourself variant of the same job, typically requiring thousands of engineering hours to reach production quality.

Platform-layer context engines

Other companies are building context engines as platform infrastructure, designed to serve multiple types of AI agents, not just coding tools. These engines handle diverse data sources like CRM data, financial records, and operational logs alongside code.

ServiceNow launched its Context Engine in April 2026, framing it as the connective tissue behind enterprise workflow AI and grounding agent decisions in 85 billion workflows and 7 trillion transactions (CIO.com, 2026). Tabnine released its Enterprise Context Engine in February 2026 with a focus on air-gapped, on-premises deployment for regulated industries (Tabnine, 2026). Their bet is that the context problem extends beyond software development.

Open-source and protocol-driven approaches

MCP's rapid adoption has created an ecosystem of open-source context servers. These are not full context engines, but they provide the delivery layer. Teams can assemble ingestion, indexing, and reasoning components from open-source libraries, then deliver context via MCP.

This approach offers flexibility but requires significant engineering investment. You are essentially assembling a custom system from parts.

Market segmentation estimate (2026):

| Segment | Share | Examples |
|---|---|---|
| Engineering-context infrastructure | ~45% | Unblocked, custom internal (e.g., Meta) |
| Platform-layer + proprietary agent | ~35% | ServiceNow, Tabnine, Augment Code |
| Open-source / protocol-driven | ~20% | MCP-based assemblies, vector DB + code parser |

Estimated context engine market segmentation. Source: Unblocked analysis, 2026.

Citation Capsule: The context engine category is segmenting into engineering-context infrastructure that feeds any coding agent (Unblocked), proprietary coding agents with their own code index (Augment Code), platform-layer engines for enterprise workflow (ServiceNow, Tabnine), and open-source protocol-driven approaches built around MCP. In Unblocked's own controlled test on the same codebase and agent, decision-grade context upstream produced 48% fewer tokens and 83% faster task completion (getunblocked.com).

What Makes a Context Engine Effective?

Quality beats volume. SWE-bench Pro results show the same underlying model (Claude Opus 4.5) producing a 17-problem gap between agents running different context approaches (Scale AI, 2026). What matters is whether the right context reaches the agent at the right moment. Unblocked's own controlled test on the same codebase reported 48% fewer tokens and 83% faster completion with decision-grade context upstream (getunblocked.com).

Why does precision beat recall?

Most enterprise codebases contain millions of lines of code, thousands of docs, and years of conversation history. Sending all of it to an agent is impossible and counterproductive. The best systems prioritize precision: delivering exactly what is needed and nothing more.

This means sophisticated ranking algorithms that weigh recency (was this code changed last week or three years ago?), authority (is this the canonical implementation or a deprecated copy?), and task relevance (does this context help the agent complete its specific assignment?).
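One simple way to combine the three signals is a weighted sum with exponential recency decay. The weights, the 90-day half-life, and the 0-to-1 authority and relevance inputs below are illustrative assumptions, not tuned values or any product's formula.

```python
from datetime import datetime, timezone


def context_score(
    last_changed: datetime,
    authority: float,   # 1.0 = canonical implementation, near 0 = deprecated copy
    relevance: float,   # 0..1 task relevance, e.g. from a retriever
    now: datetime,
    half_life_days: float = 90.0,
    weights: tuple[float, float, float] = (0.3, 0.3, 0.4),
) -> float:
    """Weighted sum of recency, authority, and task relevance (each in 0..1)."""
    age_days = (now - last_changed).total_seconds() / 86400
    recency = 0.5 ** (age_days / half_life_days)  # halves every `half_life_days`
    w_rec, w_auth, w_rel = weights
    return w_rec * recency + w_auth * authority + w_rel * relevance


now = datetime(2026, 4, 20, tzinfo=timezone.utc)
fresh_canonical = context_score(datetime(2026, 4, 13, tzinfo=timezone.utc), 1.0, 0.7, now)
stale_deprecated = context_score(datetime(2023, 4, 20, tzinfo=timezone.utc), 0.1, 0.9, now)
print(round(fresh_canonical, 3), round(stale_deprecated, 3))
```

Here a recently changed canonical implementation outranks a three-year-old deprecated copy even though the copy looks slightly more relevant on its own.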

Code-aware understanding

Text search finds strings. Code-aware systems understand structure. They know that renaming a method in one file requires updates in every file that calls it. They track which tests cover which functions. They map the borders between services.

In practice, code-structural awareness eliminates the most expensive class of AI-generated bugs: changes that look correct in isolation but violate system-wide invariants.
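For Python code, the standard library's `ast` module is enough to show the difference between string search and structural awareness. This sketch finds actual call sites of a method by name across a set of files, ignoring comments and string literals that merely mention it. The file contents are hypothetical, and matching on the callee name alone ignores which class a method belongs to; a production indexer would resolve that with type information.

```python
import ast


def call_sites(sources: dict[str, str], method: str) -> list[tuple[str, int]]:
    """Return (filename, line) for every call whose callee is named `method`."""
    hits = []
    for filename, code in sources.items():
        for node in ast.walk(ast.parse(code, filename=filename)):
            if isinstance(node, ast.Call):
                func = node.func
                # obj.method(...) is an Attribute; bare method(...) is a Name.
                name = func.attr if isinstance(func, ast.Attribute) else getattr(func, "id", None)
                if name == method:
                    hits.append((filename, node.lineno))
    return sorted(hits)


sources = {
    "checkout.py": "def pay(gw, order):\n    # charge happens here\n    return gw.charge(order.total)\n",
    "jobs.py": "from gateway import charge\n\ndef retry(o):\n    charge(o.total)\n",
    "docs_helper.py": 'NOTE = "call charge() only once per order"\n',
}
print(call_sites(sources, "charge"))
```

A plain text search for `charge` would also flag the comment and the string in `docs_helper.py`; the AST walk returns only the two real calls a rename must update.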

Freshness and continuous sync

Stale context is dangerous context. If the index reflects last week's codebase but someone refactored the module yesterday, the agent will generate code against an outdated structure. The best engines sync continuously, updating their indexes within minutes of changes to source systems.
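A minimal sketch of incremental re-indexing, assuming the index stores one content hash per file (a hypothetical design): each sync compares fresh hashes with stored ones, re-indexes only files that were added or changed, and drops files that were deleted, so keeping up with yesterday's refactor does not require a full rebuild.

```python
import hashlib


def digest(content: str) -> str:
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


def plan_sync(indexed: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Compare stored hashes (path -> sha256) with current file contents (path -> text)."""
    current_hashes = {path: digest(text) for path, text in current.items()}
    return {
        # New files have no stored hash, so they compare unequal and get indexed too.
        "reindex": sorted(p for p, h in current_hashes.items() if indexed.get(p) != h),
        "delete": sorted(p for p in indexed if p not in current_hashes),
    }


indexed = {
    "src/payments/charge.py": digest("def charge(): ..."),
    "src/payments/legacy.py": digest("def old(): ..."),
    "src/auth/session.py": digest("def login(): ..."),
}
current = {
    "src/payments/charge.py": "def charge(amount): ...",  # refactored yesterday
    "src/auth/session.py": "def login(): ...",            # unchanged
    "src/payments/refund.py": "def refund(): ...",        # new file
}
print(plan_sync(indexed, current))
```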

Delivery format flexibility

Different consumers need context in different shapes. An IDE autocomplete plugin needs snippets. A code review agent needs full file context with surrounding architecture. A planning agent needs high-level system descriptions. The engine should adapt its output format and detail level to each consumer.
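A minimal sketch of consumer-aware rendering, assuming three hypothetical consumer types and one already-assembled context package; the package shape and consumer names are illustrative. The same underlying knowledge is emitted at three different levels of detail.

```python
from dataclasses import dataclass, field


@dataclass
class ContextPackage:
    summary: str                                         # high-level description of the system boundary
    snippets: list[str] = field(default_factory=list)    # short, directly relevant code fragments
    files: dict[str, str] = field(default_factory=dict)  # path -> full file contents


def render(pkg: ContextPackage, consumer: str, max_snippets: int = 3) -> str:
    """Shape one assembled context package for a specific consumer type."""
    if consumer == "autocomplete":  # IDE plugin: a few snippets, nothing else
        return "\n".join(pkg.snippets[:max_snippets])
    if consumer == "code_review":   # reviewer: architecture summary plus full files
        body = "\n\n".join(f"# {path}\n{text}" for path, text in pkg.files.items())
        return f"{pkg.summary}\n\n{body}"
    if consumer == "planning":      # planner: high-level description only
        return pkg.summary
    raise ValueError(f"unknown consumer: {consumer}")


pkg = ContextPackage(
    summary="Payments service: charges go through the gateway; retries are capped at 3.",
    snippets=["gw.charge(order.total)"],
    files={"src/payments/charge.py": "def charge(amount): ..."},
)
print(render(pkg, "autocomplete"))
```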

Citation Capsule: Context quality determines agent performance more than model selection. The same Claude Opus 4.5 model produced a 17-problem gap on SWE-bench Pro between different context approaches (Scale AI, 2026). Unblocked's own controlled test reported 48% fewer tokens and 83% faster completion when decision-grade context was present (getunblocked.com). Precision, freshness, and reasoning-grade synthesis are the differentiators.

How Does a Context Engine Fit Into the Broader Context Stack?

Context infrastructure is becoming a distinct layer in the enterprise technology stack. With 51% of all GitHub commits now AI-generated or AI-assisted (GitHub Octoverse, 2025), the systems that feed those AI tools are foundational. Bessemer Venture Partners named "Memory and Context Management" as one of five AI infrastructure frontiers for 2026 (Bessemer, 2026). A context engine sits at the center of a broader context stack.

The four-layer context stack. Source: Unblocked analysis; Bessemer AI Infrastructure Roadmap (2026).

The context stack, defined

The full context stack has four layers. At the bottom: context data sources, the raw repositories, documents, and communication tools where knowledge lives. Above that: the context engine, which ingests, indexes, reasons over, and packages that knowledge. Next: the context layer, a delivery and protocol tier (often MCP-based) that routes context to consumers. At the top: context consumers, the agents, IDE plugins, and workflows that use the context.

Where it connects

The engine connects downward to data sources through integrations and APIs. It connects upward to consumers through delivery protocols. Horizontally, it connects to authentication, authorization, and governance systems, because not every agent should see every piece of context.

In an enterprise environment, the system must respect access controls. An agent running on behalf of a junior developer should not receive context from a repository that developer cannot access. This security layer is non-negotiable for organizations with compliance requirements.
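A minimal sketch of enforcing that rule at delivery time, assuming each context item records the repository it came from and that the engine can look up which repositories the requesting developer can read (the ACL table and names are hypothetical). Filtering on the developer's permissions rather than the agent's keeps restricted content out of the prompt entirely, and unknown users get nothing by default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextItem:
    repo: str
    text: str


# Hypothetical ACL: which repositories each developer may read.
READABLE_REPOS = {
    "junior.dev": {"web-frontend", "payments-api"},
    "staff.eng": {"web-frontend", "payments-api", "fraud-models"},
}


def visible_context(items: list[ContextItem], on_behalf_of: str) -> list[ContextItem]:
    """Drop any item from a repository the developer cannot access (deny by default)."""
    allowed = READABLE_REPOS.get(on_behalf_of, set())
    return [item for item in items if item.repo in allowed]


items = [
    ContextItem("payments-api", "charge() retries are capped at 3."),
    ContextItem("fraud-models", "Risk threshold tuned after the 2025 incident."),
]
print([i.repo for i in visible_context(items, "junior.dev")])
```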

Why the stack view matters

Thinking in terms of a stack helps engineering leaders make better buy-vs-build decisions. You might build your own ingestion layer because your data sources are unusual. You might buy a context engine because building reasoning and ranking from scratch takes years. You might use open-source MCP servers for delivery because the protocol is standardized.

The context stack is likely to follow the same pattern as the data stack a decade ago: initially bundled into monolithic products, then unbundled into specialized components, then re-aggregated around winning platforms. Engineering leaders who understand this trajectory can make infrastructure investments that survive the consolidation.

Citation Capsule: With 51% of GitHub commits now AI-generated or AI-assisted, per GitHub Octoverse (2025), context infrastructure has become foundational. The context stack comprises four layers: data sources, context engine, context layer, and context consumers, with the engine serving as the central reasoning and indexing system.

Should You Build or Buy a Context Engine?

This is the most common question engineering leaders ask after understanding the category. The answer depends on your team's size, your codebase's complexity, and how much engineering time you are willing to invest in infrastructure that is not your core product.

When building makes sense

Building works when you have unusual data sources that no vendor supports, when your security requirements prohibit third-party access to code, or when you have a large platform engineering team with spare capacity. Meta's approach of building 50+ specialized agents with custom context files (Engineering at Meta, 2026) is an example. Meta also has thousands of infrastructure engineers.

Building from scratch typically takes 6-12 months to reach production quality. That includes connectors, indexing infrastructure, a reasoning layer, and delivery APIs. It is a real engineering project, not a hackathon prototype.

When buying makes sense

Most engineering organizations should buy. If your team has fewer than 500 engineers, the ROI of building custom context infrastructure rarely justifies the cost. Commercial context engines have already solved the hard problems: incremental indexing at scale, code-graph construction, token-efficient delivery, and multi-source fusion.

The buy decision also accelerates time-to-value. Instead of spending six months building, your agents can have context within weeks.

The hybrid approach

Many enterprises will land in the middle. They will buy the engine for the core pipeline (ingestion, indexing, reasoning) and build custom integrations for proprietary data sources or specialized delivery requirements. This hybrid model combines the speed of buying with the flexibility of building.

In the engineering orgs we have onboarded at Unblocked, the first two weeks of a context-engine deployment usually surface three or four proprietary systems (custom deployment platforms, in-house test harnesses, internal RPC frameworks) that no commercial connector will ever cover. That is where build-vs-buy stops being theoretical. The most common pattern is buying the engine and building the connectors for those internal-only systems.
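When you buy the engine and build the connectors, the seam between them is usually a small interface. The sketch below assumes a hypothetical `Connector` protocol (not any vendor's SDK) and a hypothetical in-house deployment platform; the pattern is to translate internal records into a generic document shape and yield only what changed since the last sync.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterator, Protocol


@dataclass
class Document:
    id: str
    text: str
    updated_at: datetime


class Connector(Protocol):
    """Hypothetical contract between a bought engine and a home-grown connector."""

    def fetch_since(self, since: datetime) -> Iterator[Document]: ...


class DeployPlatformConnector:
    """Hypothetical connector for an internal deployment platform."""

    def __init__(self, records: list[dict]):
        self.records = records  # in practice, fetched from the internal platform's API

    def fetch_since(self, since: datetime) -> Iterator[Document]:
        for rec in self.records:
            updated = datetime.fromisoformat(rec["updated"])
            if updated > since:  # incremental: only records changed after the last sync
                yield Document(
                    id=f"deploy-{rec['service']}",
                    text=f"{rec['service']} deploys via {rec['pipeline']}; freeze: {rec['freeze']}",
                    updated_at=updated,
                )


def sync(connector: Connector, since: datetime) -> list[str]:
    return [doc.id for doc in connector.fetch_since(since)]


records = [
    {"service": "payments", "pipeline": "canary-3-stage", "freeze": "Fri 18:00", "updated": "2026-04-18T09:00:00"},
    {"service": "auth", "pipeline": "blue-green", "freeze": "none", "updated": "2026-03-01T09:00:00"},
]
print(sync(DeployPlatformConnector(records), datetime(2026, 4, 1)))
```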

Tools and Resources

The ecosystem around these tools is growing rapidly. What follows is a snapshot of what is available today for teams evaluating or building context infrastructure.

Protocols and standards

- Model Context Protocol (MCP). The leading standard for context delivery, developed by Anthropic and now widely adopted (Anthropic, 2026). Think of it as the HTTP of context delivery.

- Language Server Protocol (LSP). Not a context protocol per se, but LSP provides code intelligence that context engines often build on.

Commercial context engines

The category is young. Evaluate vendors on five criteria: data source coverage, indexing depth (surface-level text vs. code-graph), reasoning sophistication, delivery speed, and access control granularity. The "What Makes a Context Engine Effective?" section above covers each in detail.
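The five criteria translate directly into a weighted scorecard. This sketch assumes 1-to-5 ratings gathered during your own pilot and weights you choose; "vendor A" and "vendor B" and their numbers are placeholders, not assessments of real products.

```python
CRITERIA = (
    "data_source_coverage",
    "indexing_depth",
    "reasoning_sophistication",
    "delivery_speed",
    "access_control_granularity",
)


def weighted_score(ratings: dict[str, int], weights: dict[str, float]) -> float:
    """Weighted average of 1-5 ratings across all five criteria."""
    missing = [c for c in CRITERIA if c not in ratings]
    if missing:
        raise ValueError(f"unrated criteria: {missing}")
    total_weight = sum(weights[c] for c in CRITERIA)
    return sum(ratings[c] * weights[c] for c in CRITERIA) / total_weight


weights = {c: 1.0 for c in CRITERIA}
weights["access_control_granularity"] = 2.0  # e.g. a regulated environment
vendor_a = dict(zip(CRITERIA, (4, 5, 4, 3, 2)))
vendor_b = dict(zip(CRITERIA, (3, 3, 3, 4, 5)))
print(round(weighted_score(vendor_a, weights), 2), round(weighted_score(vendor_b, weights), 2))
```

Doubling the weight on access control flips the ranking toward the vendor with weaker indexing but stronger permissions, which is the kind of trade-off the scorecard should make explicit.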

Open-source components

Several open-source projects provide pieces of the context engine puzzle: vector databases for indexing, code parsing libraries for AST extraction, and MCP server implementations for delivery. Assembling these into a production-grade engine is possible but requires significant integration work.

Benchmarks to watch

- SWE-bench and SWE-bench Pro. The primary benchmarks for evaluating AI coding performance. Context quality heavily influences scores.

- Vectara's Hallucination Index. Tracks hallucination rates across models, providing a baseline for measuring context engine effectiveness.

- Chroma Context Rot. Measures how model reasoning degrades with context length, useful for evaluating engine parsimony.

Getting Started

If you are an engineering leader evaluating context engines for the first time, the path below works as a practical eight-week starting plan.

Week 1-2: Audit your context sources. Map every system where institutional knowledge lives: repositories, wikis, Slack channels, issue trackers, CI/CD logs, design documents. Identify which sources are most critical and which are most neglected.

Week 3-4: Measure the context gap. Track how often AI-generated code requires revisions due to missing context. Ask your team where they spend time re-explaining system behavior to tools or colleagues. Atlassian's 2025 research found 50% of developers lose 10+ hours per week to organizational inefficiency, much of it around finding information (Atlassian State of Developer Experience, 2025). That lost time is your baseline.

Week 5-6: Evaluate options. Use the five evaluation criteria above (data source coverage, indexing depth, reasoning sophistication, delivery speed, access control granularity) to score commercial context engines against your requirements. Run a pilot on a single repository or service to test integration depth.

Week 7-8: Expand or adjust. Based on pilot results, expand to additional repositories and data sources. Measure the impact on code review cycles, regression rates, and developer satisfaction.

The goal is not to buy a tool. It is to close the gap between what your AI agents know and what they need to know. Ask yourself: if you gave your best engineer a task with zero context about your codebase, how good would their code be? That is what your agents are working with today.

FAQ

What is a context engine in simple terms?

A context engine is a system that gives AI agents access to your organization's institutional knowledge: code history, documentation, architecture decisions, and team conventions. It ingests this information, organizes it, and delivers precisely relevant context so agents produce code that fits your system instead of generic output.

How is a context engine different from a vector database?

A vector database stores embeddings and supports similarity search. A context engine uses a vector database (among other indexes) and adds reasoning, ranking, and delivery layers. The difference is like comparing a hard drive to an operating system. One stores data. The other makes it useful. Read more on the layer vs engine distinction.

Do context engines eliminate hallucinations entirely?

No. They reduce hallucinations dramatically. RAG alone cuts hallucinations by up to 71% (Stanford, 2025). Context engines build on RAG with additional reasoning and code-aware grounding, pushing error rates lower. The best models already reach 0.7% hallucination rates with proper grounding (Vectara / Suprmind, 2026).

How long does it take to implement a context engine?

Commercial context engines typically reach initial production value in 2-4 weeks for a pilot scope. Building one from scratch takes 6-12 months. The timeline depends on the number of data sources, the complexity of your codebase, and your security requirements.

Can a context engine work with any AI model?

Yes. Context engines are model-agnostic: they prepare and deliver context, then the consuming agent sends it to whichever model it uses. That separation also makes the effect of context visible on its own. Holding the model fixed (Claude Opus 4.5), different context approaches produced a 17-problem gap on SWE-bench Pro (Scale AI, 2026). The engine matters as much as the model.

What data sources does a context engine need?

At minimum: source code repositories and their Git history. For best results, also connect documentation systems, issue trackers, CI/CD pipelines, chat platforms (Slack, Teams), design documents, and incident postmortem records. The more institutional knowledge it can access, the better context it delivers.

Is MCP required to build a context engine?

MCP is not required, but it is becoming the standard delivery protocol. MCP provides a widely supported interface between context engines and AI agents (Anthropic, 2026). Most commercial context engines support MCP alongside proprietary APIs. Read more on why MCP alone is not enough.

Where to Start#

If you are convinced the context gap is real in your org, the next move is not a vendor demo. It is an internal accounting exercise that takes less than a week. Three concrete first steps:

- Pick one high-pain repository and count the regressions. For the last 30 days of AI-assisted PRs in that repo, tag each one that needed a rework cycle because the agent missed a convention, a dependency, or a historical decision. That number is your baseline; without it, no pilot will have a scoreboard.

- Inventory the institutional knowledge surface for that repo. List every place the missing context actually lived: the Slack channel, the decision doc, the postmortem, the closed Jira ticket, the commit message from 2023. This becomes the minimum data-source coverage any engine you evaluate has to hit.

- Run a two-week pilot against that same repo and rescore. Whether you buy a commercial engine, wire up MCP yourself, or build a thin layer on top of an open-source stack, constrain the pilot to the same repo so you can compare regression rates against the baseline from step one.
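The scoreboard behind those three steps is simple arithmetic. This sketch assumes each AI-assisted PR has been hand-tagged with a rework reason, or `None` if it needed no context-related rework cycle; the tag names mirror the three causes in the first step, and the PR data is hypothetical.

```python
from collections import Counter

CONTEXT_REASONS = {"missed_convention", "missed_dependency", "missed_history"}


def rework_rate(prs: list[dict]) -> float:
    """Share of AI-assisted PRs that needed a context-related rework cycle."""
    if not prs:
        return 0.0
    flagged = sum(1 for pr in prs if pr["rework_reason"] in CONTEXT_REASONS)
    return flagged / len(prs)


baseline = [
    {"id": 101, "rework_reason": "missed_convention"},
    {"id": 102, "rework_reason": None},
    {"id": 103, "rework_reason": "missed_history"},
    {"id": 104, "rework_reason": "missed_dependency"},
]
pilot = [
    {"id": 201, "rework_reason": None},
    {"id": 202, "rework_reason": "missed_history"},
    {"id": 203, "rework_reason": None},
    {"id": 204, "rework_reason": None},
]
before, after = rework_rate(baseline), rework_rate(pilot)
print(f"baseline {before:.0%}, pilot {after:.0%}")
# Which kind of missing context drove the baseline rework, most common first.
print(Counter(pr["rework_reason"] for pr in baseline if pr["rework_reason"]).most_common())
```

Keeping the per-reason breakdown alongside the rate shows whether the pilot closed the gaps that mattered most in that repo.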

Once that loop closes on one repo, expanding to the rest of the org is an operational question, not a strategic one. Related reading as you build the case:

- How a context engine actually works

- What your coding agent can't see

- Why a context layer is not a context engine

- Why MCP alone is not enough

- The full context engineering guide

Dennis Pilarinos is the founder and CEO of Unblocked, where his team builds context engines for enterprise engineering organizations. Previously, he led engineering teams at Microsoft and AWS. He writes about context engineering, developer productivity, and the infrastructure that makes AI coding agents reliable.