Key Takeaways

• Context engineering is the discipline of deliberately shaping what an AI agent knows before it acts. It treats context as an engineering problem with principles, tradeoffs, and measurable outcomes.

• Google's 2025 DORA research found 90% of developers now use AI tools, but AI adoption correlates with declining software delivery stability (Google DORA, 2025). Context is the lever that separates teams where AI helps from teams where it compounds failures.

• Five principles govern the practice: completeness, parsimony, freshness, authority, and permission-awareness. Violate any one and agents ship code that compiles and fails review.

• Maturity progresses through three stages: files (CLAUDE.md, AGENTS.md), layer (centralized aggregation), and engine (reasoning-grade synthesis). Most enterprise teams are at Stage 1 believing they're at Stage 2.

• The cost of neglecting context engineering shows up as "context debt": the compounding drag of missing, stale, or contradictory context that agents repeatedly act on.

Context Engineering: The Complete Guide for Engineering Leaders#

Disclosure: The author's company builds context engine technology. This guide treats context engineering as a vendor-neutral discipline; specific tools appear only where they illustrate a pattern.

---

Introduction#

Ninety percent of software engineers now use AI tools at work, according to Google's 2025 DORA research. Throughput is up 2-18%. Software delivery stability is down (Google DORA, 2025). That is the paradox every engineering leader is staring at this year. More code, less reliable code. More speed, more rework.

The gap between teams where AI works and teams where AI fails is not a model problem. A Claude Opus 4.5 agent given zero organizational context will produce something plausible and wrong. The same model, given decision-grade context about your codebase, conventions, and constraints, will produce something reviewable. Output quality follows context quality.

Context engineering is the discipline that closes the gap. It is the deliberate practice of curating what an AI agent sees before it acts. Anthropic frames it as "the natural progression of prompt engineering" (Anthropic, 2025). Martin Fowler defines it as "curating what the model sees so that you get a better result" (Martin Fowler, 2026). The practice has moved in under two years from informal technique to an emerging engineering discipline with principles, tools, and a maturity curve.

This guide is written for VPs and Directors of Engineering responsible for AI adoption at scale. It covers what context engineering actually is, the five principles that govern it, the maturity model that tracks how organizations adopt it, and the specific approaches Meta, Google, and Anthropic have published.

---

What Is Context Engineering?#

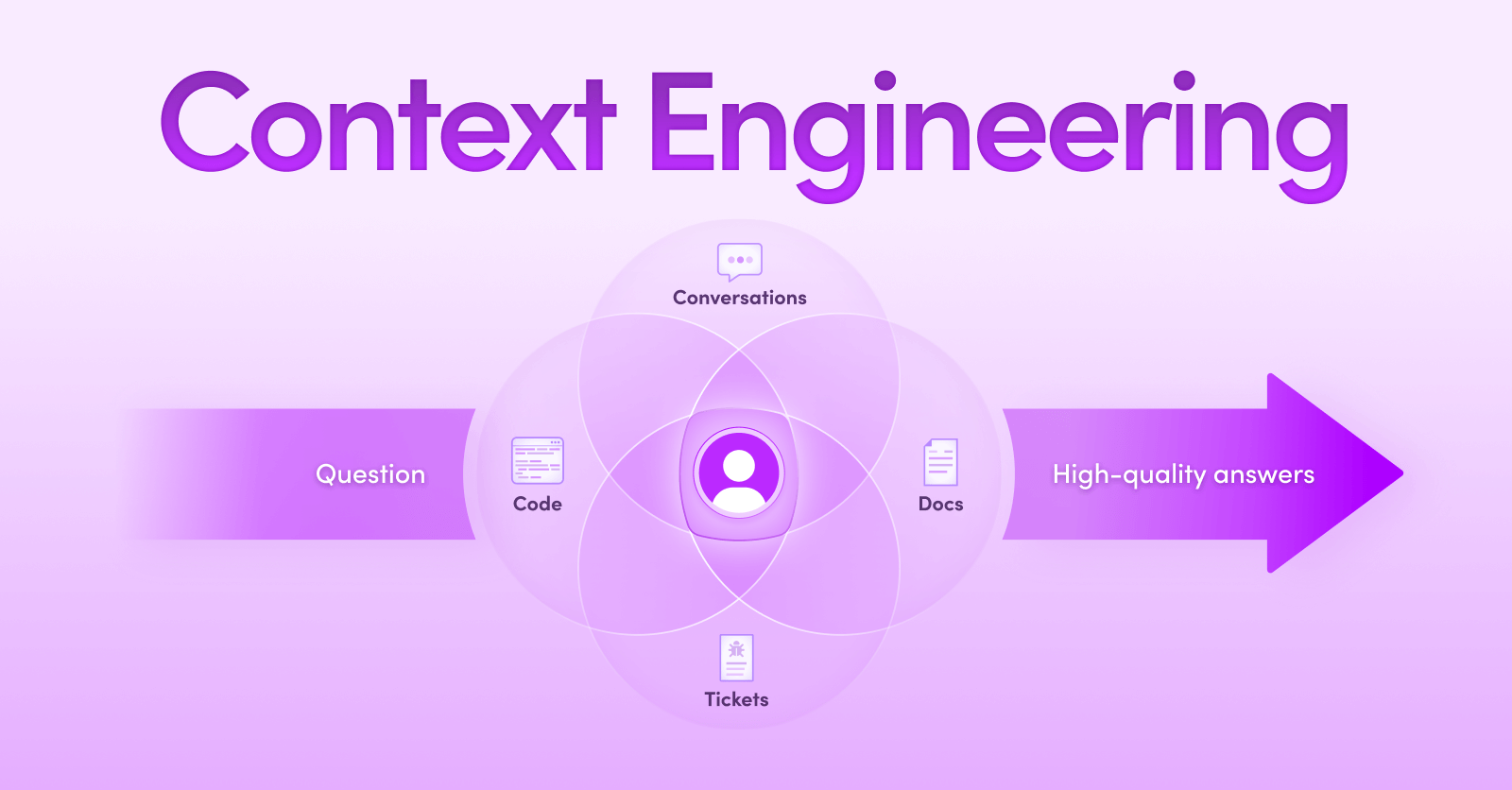

Context engineering is the discipline of deliberately shaping what an AI agent knows before it acts. It treats context as an engineering problem, one with principles, measurable outcomes, and tradeoffs, rather than a pile of documents to paste into a prompt. The goal: provide all the information the agent needs, and none that it doesn't.

The discipline defined#

A context engineer asks four questions for every task the agent touches: What does the agent actually need to know? Where does that information live today? How do we get it into the agent's working memory without crowding out reasoning? And how do we keep it fresh as the system changes?

Anthropic's framing is that context engineering covers "strategies for curating and maintaining the optimal set of tokens during LLM inference" (Anthropic, 2025). Martin Fowler's working definition emphasizes balance: "too little fails, too much degrades reasoning" (Martin Fowler, 2026). Both definitions share a core insight. Context is a resource, not a pile. A resource you allocate, ration, and measure.

Why engineers end up doing it informally#

Most teams practice context engineering by accident. An engineer spawns an agent, watches it miss a pattern, opens a CLAUDE.md file, and writes a note. The note works. Someone else writes another note. Six months in, the team has forty rules files, three AGENTS.md versions, and a Slack channel where people paste system prompts back and forth. That is context engineering. It is just context engineering done badly.

The shift from informal to formal happens when leaders recognize two patterns. First, the review burden is growing faster than agent output. Second, the best engineers are spending their days as prompt librarians instead of shipping code. Both patterns point to the same missing discipline.

A working definition#

Context engineering is the practice of treating context as a first-class engineering system: one that is measured, maintained, and evolved with the codebase it serves. The system may be a collection of markdown files, a centralized search layer, or a reasoning-grade context engine. What matters is that the team builds it deliberately instead of growing it by accident.

Citation Capsule: Context engineering is the discipline of deliberately curating what an AI agent sees before it acts. Anthropic frames it as "the natural progression of prompt engineering" (2025), and Martin Fowler defines it as "curating what the model sees so that you get a better result" (2026). The practice converts an informal team habit into a measurable engineering system.

---

Why Did Context Engineering Emerge as a Discipline?#

As AI coding tools moved from autocomplete into autonomous multi-step work, the failure mode shifted. Poor prompts produce garbage; poor context produces confidently wrong code. The 2025 Stack Overflow Developer Survey captured the frustration precisely: 66% of developers said "AI solutions that are almost right, but not quite" was their top AI pain point (Stack Overflow, 2025). The practice emerged because the old tools stopped working at the new scale.

The context problem moved from people to agents#

Engineering teams have always had a context problem. New hires took months to become productive. Tribal knowledge lived in people's heads. Jira decisions aged poorly. The profession built workarounds: onboarding docs, architecture reviews, Slack archives, post-mortems.

Those workarounds assumed the consumer of context was a human who would learn over time. Agents do not learn over time. They start from zero on every task. Give an agent the same mistake to make ten times and it will make the mistake ten times, confidently, unless something changed in the context it received. The context problem moved from people to agents, and with that move came a new urgency (The context problem moved from people to agents).

How did the failure mode shift with autonomous agents?#

Prompt engineering emerged around 2022-2023 as the discipline of shaping instructions. That worked when the model was a one-shot assistant. It stopped working when the same model became an autonomous agent running for hours on real codebases. The 2026 Anthropic Agentic Coding Trends report documented this shift directly: code design and planning went from 1% to 10% of AI coding tool usage in six months, and new feature implementation went from 14% to 37% (Anthropic, 2026). Agents are writing more code, across longer horizons, with less supervision per task.

Autonomy inverts the failure model. A one-shot assistant gets corrected inline. An autonomous agent acts on a bad assumption for hours before someone catches the drift. The cost of being wrong went up by an order of magnitude, which forced the upstream question: how do we make sure the agent is not wrong?

Why "just-in-time" context beats "just-in-case" context#

Early teams tried to solve the problem by dumping everything into the prompt. That failed for reasons both obvious and subtle. The obvious: tokens cost money and latency. The subtle: LLM reasoning degrades as input length grows, often well before the context window technically fills. Chroma Research tested 18 frontier models across GPT-4.1, Claude 4, Gemini 2.5, and Qwen3, and found that "models do not process tokens uniformly" (Chroma, Context Rot, 2025). A separate arXiv study measured reasoning degradation in web agents where Claude 3.7, GPT-4.1, and Llama 4 all dropped from 40-50% baseline accuracy to under 10% in long-context scenarios (arXiv 2512.04307). Stuffing context in makes agents worse, not better. Context engineering emerged to close the gap between "agents need more information" and "agents cannot handle indiscriminate information."

Citation Capsule: Context engineering emerged because the failure mode of AI coding tools shifted when agents went autonomous. Google's 2025 DORA research found 90% of developers now use AI tools but that AI adoption correlates with declining software delivery stability (2025). More context is not the fix; the right context, shaped deliberately, is.

---

Context Engineering vs Prompt Engineering#

Prompt engineering shapes the instruction. Context engineering shapes the environment the agent reasons within. A perfect prompt is useless if the agent lacks the information needed to follow it correctly. Context engineering is the system-level discipline. Prompt engineering is one tactic inside it.

What each shapes#

Prompt engineering lives at the input boundary. The prompt is the sentence or paragraph that tells the agent what to do right now. It is immediate, local, and easy to iterate on. A good prompt is concise, unambiguous, and example-driven.

Context engineering lives upstream of the prompt. It shapes the background material the agent has access to: codebase structure, team conventions, prior decisions, relevant PRs, deployment constraints, and the specific details that prevent "almost right" failures. A good context design is deliberate about what is present, what is excluded, and how freshness is maintained.

Why prompts alone fall short#

A 2025 Stack Overflow finding captures the limit of prompt-only thinking. Trust in AI accuracy fell to 29%, down from roughly 40% the prior year, and only 3% of developers "highly trust" AI output (Stack Overflow, 2025). That erosion did not happen because prompts got worse. It happened because agents started generating code for systems they did not understand. Better prompts cannot manufacture context that is not there.

How they work together#

Context engineering sets the stage; prompt engineering directs the actor. In mature teams the two practices are complementary: the context layer handles persistent, high-value information (architecture, conventions, constraints), and prompts handle ephemeral, task-specific framing (tone, format, output shape). Inexperienced teams conflate them, write massive prompts, and wonder why the agent drifts.

---

What Are the Core Principles of Context Engineering?#

Five principles govern the practice: completeness, parsimony, freshness, authority, and permission-awareness. Violate any one and the agent ships code that compiles, passes tests, and fails review. These five are the operational floor, not aspirational values. Each has a specific failure mode when neglected.

The five principles of context engineering. Source: Unblocked operational framework.

Completeness#

The agent has the information it needs to finish the task correctly. Completeness is not about stuffing the context; it is about ensuring no blocking gap exists. The failure mode is the "almost right" output: 66% of developers identify this as their top AI frustration (Stack Overflow, 2025). Almost-right output almost always means the agent lacked one specific piece of information that would have changed its answer.

Parsimony#

Include only what is needed. This is Anthropic's "just enough" principle (Anthropic, 2025). Parsimony exists because LLM reasoning quality degrades as context grows, even well before the window fills. Chroma's 18-model study confirmed this degradation is non-uniform and sometimes sharp (Chroma, 2025). The LoCoBench benchmark found Claude 3.5 Sonnet drops from 29% to 3% as context length increases (arXiv 2509.09614). More context is not safer context.

Freshness#

Context reflects the current state of the system, not last quarter's state. Freshness failures surface as agents generating code against deprecated patterns, calling removed APIs, or following architectural diagrams from before the last refactor. A context layer without a freshness model will confidently return last year's answer to a question about this year's code.

Authority#

When sources disagree, the system resolves the conflict before delivering context to the agent. Three internal docs say three different things about your auth pattern. Authority is the discipline of deciding which wins, based on recency, source credibility, and code ground truth. Without it, the agent picks one at random and ships.

Permission-awareness#

The agent sees only what the user the agent is acting on behalf of is authorized to see. This principle is routinely skipped in early-stage implementations and routinely regretted later. Context that leaks through retrieval, with no permission check, is a compliance incident waiting for an audit. Permission-awareness is the principle that separates context engineering in regulated industries from context engineering as a hobby project.

Citation Capsule: Five principles govern context engineering: completeness, parsimony, freshness, authority, and permission-awareness. Anthropic's guidance emphasizes "just enough" context because bloated prompts degrade reasoning (2025). Chroma Research documented measurable degradation in 18 frontier models as input length grew (2025). The principles are operational, not aspirational.

---

Context Engineering vs RAG: When to Use Which#

RAG (retrieval-augmented generation) is one tactic inside context engineering. The relationship is hierarchical: context engineering is the discipline that decides whether RAG is appropriate, what it should retrieve, how to resolve conflicts in its results, and what else the agent needs beyond retrieval. Using RAG without the surrounding discipline produces agents that retrieve confidently and wrongly.

RAG as a tactic, not a strategy#

RAG indexes documents as embeddings, finds similar ones at query time, and injects them into the prompt. The pattern is powerful and well-supported. It is also limited. RAG does not reason about why a document matters. It does not resolve contradictions between retrieved sources. It does not enforce permission boundaries. Stanford's legal RAG study found that specialized legal RAG systems still hallucinate on 17% of queries for Lexis+ AI and 33% for Westlaw AI-Assisted Research (Stanford HAI, 2024). Retrieval narrows the search space; it does not guarantee the answer.

When RAG is enough#

RAG works when the corpus is authoritative, the question is a lookup, and no conflict resolution is required. Customer support knowledge bases, internal policy search, single-source Q&A systems. In those settings, RAG alone is often the right choice.

When context engineering has to do more#

When sources conflict, when code and docs disagree, when permission scopes vary per user, or when the agent needs to act rather than answer, the discipline needs to go beyond retrieval. The surrounding operations (conflict resolution, freshness checks, permission enforcement, task shaping) are the work that context engineering performs and that RAG alone cannot. For a detailed architectural comparison, see context engine vs RAG.

---

What Is Context Debt?#

Context debt is the accumulated cost of missing, stale, or contradictory context that compounds as AI agents make increasingly wrong assumptions. The parallel to tech debt is exact. Every shortcut in what the agent knows is a bill paid later in review cycles, rework, and engineer frustration.

The parallel to tech debt#

Tech debt is familiar: an expedient decision today that compounds interest until someone pays it down. Context debt works the same way. A missing convention note today becomes ten agent-generated PRs that violate the convention next month. A stale architecture doc becomes six agents that reason against the wrong system. A forgotten permission boundary becomes an audit finding in Q3.

How does context debt compound?#

The compounding is hidden because each individual instance looks small. One "almost right" PR takes twenty minutes extra to review. Acceptable. A hundred "almost right" PRs per week takes the review bandwidth of a full engineer. Not acceptable. At Atlassian's measured 2025 baseline, 90% of developers already lose six or more hours per week to organizational inefficiency (Atlassian State of Developer Experience, 2025). Context debt adds to that number without anyone noticing until the cycle is obvious.

A concrete example#

A team's agent writes a twelve-line PR. It compiles. Tests pass. The reviewer reads it, sees that the agent used the deprecated internal HTTP client, and sends it back. The engineer rewrites with the new client. The agent rewrites again, using a pattern from a Confluence page that is itself out of date. Another round. Five days pass. The PR lands. The twelve-line PR cost five days because nobody maintained the context the agent reasoned over. Multiply that example across a team, a quarter, a product. That is context debt.

Citation Capsule: Context debt is the compounding cost of missing, stale, or contradictory context that AI agents repeatedly act on. Atlassian's 2025 research documented that 90% of developers already lose six or more hours per week to organizational inefficiency (2025). Context debt adds to that number quietly, surfaces as review cycles, and is only visible when leaders measure it deliberately.

---

How Do You Implement Context Engineering?#

Start where agents currently fail. Pick a repeated failure mode, trace what context would have prevented it, and fix that gap before moving to the next one. Implementation is a working discipline, not a one-time project. The best teams treat context like source code: version-controlled, reviewed, refactored, and measured.

Start with failure modes, not inventories#

The wrong way to begin is with an inventory: "let's list everything we know about the system and feed it to agents." The right way is to start from failure: "where do agents consistently produce almost-right code, and what specifically do they miss?" Failure-driven implementation converges faster because each improvement ships with measurable impact (fewer review cycles, faster merges) rather than aspirational thoroughness.

Trace the missing context back to its source#

For each failure, ask: where does the information that would have prevented this live today? Is it in someone's head? A Slack thread? A Confluence page that nobody maintains? Code comments? A decision log? The trace matters because the fix depends on it. Information living in human memory requires a different remedy (interviews, decision journaling) than information living in stale documentation (refresh, ownership, sync).

Pick your maturity stage deliberately#

Teams rush to build context engines when a well-maintained rules file would have solved the actual problem. Teams stay on rules files when the team has outgrown them and the backlog of gaps is the real bottleneck. The maturity model below gives leaders a way to pick deliberately instead of by default. Link forward to the maturity model section.

---

The Context Engineering Maturity Model#

Three stages mark the progression from ad-hoc practice to enterprise-grade discipline: files, layer, and engine. Most organizations begin at Stage 1, progress to Stage 2 as team size grows, and move to Stage 3 when context debt becomes the binding constraint. Moving up the model is a deliberate investment; staying below capability is a deliberate bet.

Stage 1: Files#

Context lives in flat files checked into the repository. CLAUDE.md, AGENTS.md, team conventions, inline comments, a docs/ directory. This stage is cheap, transparent, and close to the code. Every engineer can read, review, and improve the files through normal pull requests.

Limitations appear when the team or codebase grows. Files duplicate. They drift. They contradict each other. Engineers spend increasing time as prompt librarians. When the team hits twenty engineers or fifty agent-generated PRs per week, Stage 1 starts to leak.

Stage 2: Layer#

Context is centralized in a system that aggregates across sources: code, docs, issue trackers, chat, PR history. The layer serves context on demand through APIs, MCP servers, or IDE integrations. This stage solves the scaling problem of Stage 1. Context is no longer duplicated in files; it is computed at query time from canonical sources.

Stage 2 is where most enterprise teams end up when they invest intentionally. A Stage 2 system is powerful. It is also still a retrieval layer. It answers "can the agent find this?" It does not answer "should the agent trust this?" Stage 2 handles scale; it does not resolve authority, conflict, or permissions at the reasoning level.

Stage 3: Engine#

Context is synthesized by a reasoning layer that operates above retrieval. The engine ingests, resolves conflicts between sources, enforces permissions, assesses freshness, and delivers decision-grade context to the agent. This stage is infrastructure, not a feature. It treats context as a first-class system engineered with the same discipline as application code.

Most teams do not need Stage 3 on day one. Teams with large codebases, regulated data, or significant context debt will find that Stage 2 plateaus and only Stage 3 unblocks the next level of agent autonomy. Moving from layer to engine is the conversation enterprise engineering leaders are having in 2026. See what is a context engine for a detailed treatment of Stage 3.

The three-stage context engineering maturity model. Source: Unblocked operational framework.

---

How Do Meta, Google, and Anthropic Approach Context Engineering?#

Three of the most technically credible engineering organizations in the world have published specific approaches to context engineering in the past year. The common thread: all three treat context as infrastructure, not a feature. The implementations differ sharply, which is itself instructive. Context engineering is a discipline, not a product category.

Meta's tribal knowledge mapping#

Meta's Engineering team published a case study in April 2026 describing how they used 50+ specialized AI agents to extract institutional knowledge across 4 repositories, 3 languages, and 4,100+ files. The output: 59 concise context files, each 25-35 lines long, designed to be "a compass, not an encyclopedia." The measured impact: module coverage went from 5% to 100%, complex workflow guidance dropped from roughly two days to thirty minutes, and preliminary tests showed 40% fewer AI agent tool calls per task. Critic-agent quality scores improved from 3.65 to 4.20 out of 5.0 (Engineering at Meta, 2026).

Meta's approach treats agents as both consumers and producers of context. The 50+ agents read the code and generated the files that downstream agents then reason over. The pattern is recursive and scalable.

Google's ADK treats context like source code#

Google's Agent Development Kit (ADK) documents its context handling as a first-class architectural concern. The ADK documentation states: "ADK manages your context. It treats context like source code. Sessions, memory, tool outputs, and artifacts are assembled into a structured view where every token earns its place." The system includes automatic event filtering, older-turn summarization, lazy-loaded artifacts, and per-session token tracking (Google ADK Context docs, 2026).

The "every token earns its place" framing is the Google version of Anthropic's parsimony principle. The ADK approach emphasizes that the system, not the developer, is responsible for enforcing the principle at runtime.

Anthropic's parsimony principle#

Anthropic's engineering guidance on context engineering emphasizes that bloated prompts are the top failure mode and that agents need "just enough" context. The guidance warns explicitly that "if a human engineer cannot decide which tool to use, the agent can't either" (Anthropic, 2025). The framing lands at the discipline's core: context engineering is the practice of making decisions about what to include, and parsimony is the hardest of those decisions to get right.

The common thread#

Three different organizations, three different implementations, one shared premise: context is infrastructure. Meta built a recursive agent swarm. Google built a runtime that auto-curates. Anthropic published principles. In the engineering orgs I've worked with since founding Unblocked, and in the teams I ran at Microsoft and AWS before that, the groups that treat context as infrastructure are the ones whose agents stop producing "almost right" code. The implementations diverge; the discipline does not.

Citation Capsule: Meta's tribal knowledge mapping produced 59 specialized context files across 4,100+ files, cutting tool calls per task by 40% and lifting module coverage from 5% to 100% (Engineering at Meta, 2026). Google's ADK treats context like source code, where "every token earns its place." Anthropic's principles center parsimony: bloated context is the top failure mode. The three approaches confirm context engineering is an infrastructure discipline, not a feature.

---

What Tools and Resources Support Context Engineering?#

The tooling ecosystem for context engineering is expanding quickly. Teams evaluating or building on the discipline will encounter a mix of protocols, frameworks, and measurement tools.

Protocols and standards#

- Model Context Protocol (MCP). Anthropic's open protocol for delivering context to agents. MCP has become the de facto delivery standard in 2025-2026 and most commercial context engines support it (Anthropic MCP, 2026).

- AGENTS.md and CLAUDE.md conventions. Informal standards for committing agent-facing context directly into repositories. Simple, version-controlled, and widely adopted as an on-ramp.

Frameworks and research#

- Anthropic's effective context engineering guide. The canonical principles reference (Anthropic, 2025).

- Martin Fowler's Context Engineering for Coding Agents. Practitioner-oriented framing with Claude Code examples (Martin Fowler, 2026).

- Google ADK Context documentation. The most detailed published framework for runtime context management (Google ADK, 2026).

Measurement#

- Chroma Context Rot. Open-source toolkit for measuring context-length degradation across models (Chroma, 2025).

- Google DORA 2025. Industry baseline for AI adoption effects on delivery metrics (DORA, 2025).

- Atlassian State of Developer Experience. Annual baseline for developer productivity and time-loss (Atlassian, 2025).

---

Getting Started#

Context engineering is a practice, not a purchase. These four steps move a team from ad-hoc to deliberate within two months.

Week 1-2: Audit failure modes. Track the last fifty agent-generated PRs. Categorize each failure: wrong pattern, stale API, missing decision, permission leak. The categories that recur are your priority gaps.

Week 3-4: Trace each gap to its source. For the top three failure categories, find where the missing information lives. Some will be in documentation. Some in chat. Some in individual engineers' memory. The trace informs the fix.

Week 5-6: Apply the five principles. For each gap, decide how completeness, parsimony, freshness, authority, and permission-awareness apply. Not every gap needs all five. Every gap needs at least one deliberate choice.

Week 7-8: Measure what changed. Track review cycles, token cost per merged task, and time-to-mergeable-code. The measurements validate the investment and tell the team whether to stay at Stage 1 (files) or move up the maturity curve.

---

FAQ#

Is context engineering just another name for RAG?#

No. RAG is a retrieval tactic; context engineering is the discipline that decides whether retrieval is appropriate, what to retrieve, and how to resolve what's retrieved. RAG can be a component of a context-engineering practice, but it is not the practice itself.

Is context engineering the same as prompt engineering?#

No. Prompts shape the instruction; context shapes the environment. Context engineering operates at the system level; prompt engineering operates at the input level. Anthropic describes context engineering as "the natural progression of prompt engineering" (2025), which captures the relationship precisely.

Do I need a context engine to practice context engineering?#

No. You can practice context engineering with files and manual curation. A context engine is what makes the practice scale past a handful of engineers and into enterprise-grade systems. Stage 1 (files) is legitimate context engineering.

What's the ROI of context engineering?#

Measured three ways: fewer review cycles per agent-generated PR, lower token consumption per completed task, and reduced time-to-mergeable-code. Teams that invest in context engineering report meaningful improvements on all three, though the specific deltas vary by starting baseline and codebase characteristics.

Where should I start?#

Start where agents currently fail most. Pick one repeated failure mode, trace what context is missing, and fix that gap. Then measure the reduction in review cycles. Failure-driven implementation converges faster than inventory-driven implementation.

How does context engineering relate to MCP?#

MCP is a delivery protocol. Context engineering is the discipline that decides what to deliver. MCP solves the plumbing of moving context to agents; context engineering decides what context is worth moving. The two are complementary.

Is this discipline stable or still evolving?#

The principles are stabilizing. The tooling is evolving rapidly. Expect the discipline's vocabulary ("context debt", "decision-grade context", "parsimony") to continue consolidating through 2026 as more organizations publish their approaches.

---

Putting This Into Practice#

AI adoption is near-universal. AI value is not. The gap between teams where AI compounds velocity and teams where AI compounds defects is almost entirely a context gap. Context engineering is the discipline that closes it.

The discipline is new enough that vocabulary is still being written and old enough that Meta, Google, and Anthropic have published distinct, credible implementations. Leaders who build context engineering as a deliberate practice will extract the velocity gains of AI adoption. Leaders who treat context as an afterthought will watch their teams pay context debt until something breaks.

Start with one failure mode. Apply the five principles. Move up the maturity curve when the team outgrows the stage it is in. Measure review cycles, tokens, and time-to-mergeable-code. The rest follows.

Start with the fundamentals:

- Read what is a context engine for a detailed look at Stage 3.

- See why MCP alone is not enough for how protocols and practice interact.

- Learn what your coding agent can't see for the practitioner view.

- Read a context layer is not a context engine for the Stage 2 vs Stage 3 distinction.

- Revisit the context problem moved from people to agents for the origin argument.

---

Dennis Pilarinos is the founder and CEO of Unblocked, where his team builds context engines for enterprise engineering organizations. Previously, he led engineering teams at Microsoft and AWS. He writes about context engineering, developer productivity, and the infrastructure that makes AI coding agents reliable.