Context Engine vs Enterprise Search: Glean, Elasticsearch, and the Limits of Search

Brandon Waselnuk·April 18, 2026

In Brief:

• Enterprise search finds documents; a context engine reasons across them

• Search can't resolve conflicting sources or enforce cross-system permissions

• Engineering teams need synthesis, not just retrieval, for AI agent workflows

• 68% of knowledge workers report difficulty finding organizational knowledge (Coveo, 2025)

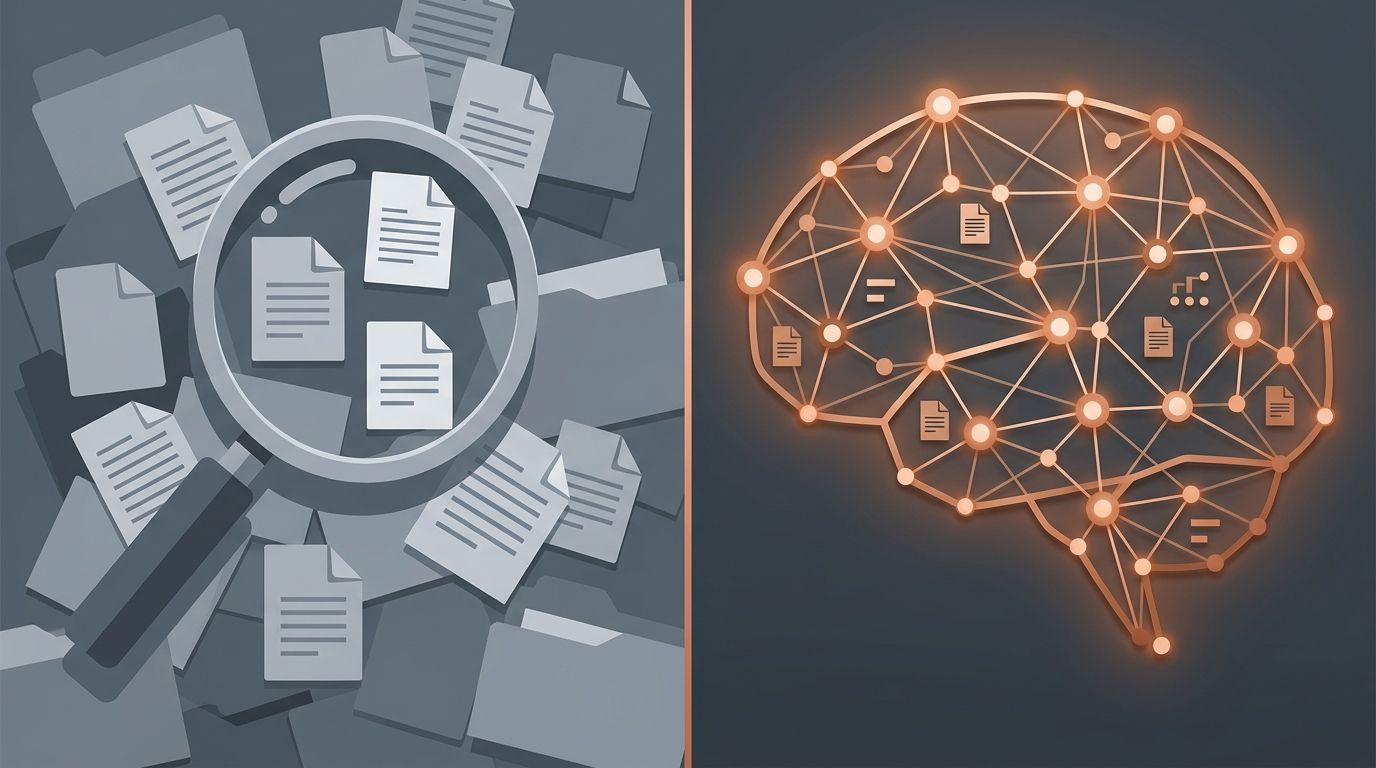

Context Engine vs Enterprise Search: Where Search Stops and Reasoning Starts

The enterprise search market is projected to reach $9.5 billion by 2029, according to a MarketsandMarkets forecast (MarketsandMarkets, 2025). That is a lot of money spent helping people find documents. And yet Forrester reports that knowledge workers still spend roughly 30% of their day searching for information or recreating work that already exists somewhere in the organization (Forrester, 2025). The search box isn't the problem. The architecture behind it is.

Enterprise search tools like Glean and Elasticsearch are good at surfacing documents that match a query. They are not built to resolve conflicts between those documents, enforce granular permissions across source systems, or synthesize a single trustworthy answer from ten contradictory hits. For engineering teams building with AI agents, the context engine vs enterprise search distinction is where the architecture conversation needs to start.

The difference between enterprise search and a context engine is this: enterprise search returns a ranked list of documents that might contain the answer. A context engine reads those documents, resolves contradictions between them, enforces per-user permissions from every source system, and returns one synthesized answer with citations. Search retrieves. A context engine reasons.

If you are new to the concept, start with what a context engine is and how it works.

What problem does enterprise search actually solve?

Enterprise search solves document discovery. Gartner's research on workplace search estimates that the average enterprise manages over 1.5 billion documents across SaaS tools, file systems, and internal wikis (Gartner, 2025). Finding the right one without a unified index would be impossible, and that is the core value proposition.

Tools like Elasticsearch power keyword and full-text search at scale. Glean adds AI-powered ranking and a unified connector layer across dozens of SaaS applications. Both approaches solve the same fundamental problem: given a query, return the most relevant documents from a large, fragmented corpus.

Enterprise search indexes that corpus so employees can find what they need. That is the ceiling, and for many use cases, it is enough.

This works well when the goal is "find the document." A salesperson looking for the latest pricing sheet. A support agent hunting for a KB article. An HR manager pulling a policy PDF. These are search problems, and enterprise search handles them.

The trouble starts when "find the document" isn't the goal. When the goal is "give me the right answer," search hands you ten documents and walks away. You're left to read, compare, and decide which one to trust. For many roles, that's fine. For engineering teams running AI agents, it's a bottleneck.

Where does enterprise search fail for engineering teams?

Enterprise search fails engineering teams at the reasoning layer. The Stack Overflow 2025 Developer Survey found that 84% of professional developers now use or plan to use AI coding tools (Stack Overflow, 2025). Those tools need context that is resolved and trustworthy, not a list of ten possibly-relevant documents.

Engineering work is inherently multi-source. A single question, like "why does our payment retry logic use exponential backoff instead of fixed intervals?", might require reading a merged PR, a Slack thread from six months ago, a Jira ticket, and a Confluence RFC that was half-abandoned. Enterprise search can surface each of those documents individually. It cannot read them together and produce one coherent answer.

The ten-tab problem

Every engineer knows the workflow. You search, open ten tabs, scan each one, mentally cross-reference, discard the stale ones, and piece together an answer. Enterprise search accelerates the first step. It does nothing for the other five. But does that really matter?

It matters because AI agents can't do the ten-tab workflow. They can't tell which Confluence page was last updated in 2023 and which PR superseded it last week. They take what search gives them and treat it as ground truth. DORA's 2025 Accelerate State of DevOps report found that teams adopting AI tools without corresponding quality investments saw decreased delivery stability (DORA, 2025). Search without reasoning is one of those missing quality investments.

Permissions across boundaries

Elasticsearch and Glean handle permissions differently, but both face the same structural challenge. Source systems have their own permission models. Slack channels, GitHub repos, Jira projects, Confluence spaces: each has its own ACL. Enterprise search tools sync permissions, but the sync is periodic and coarse. A context engine enforces permissions at query time, per source, per user. The difference matters when an agent is pulling context for a junior developer who shouldn't see the infrastructure team's private incident channel.

We've observed that permission mismatches between search indices and source systems are one of the most underestimated risks in enterprise AI deployments. The search tool says the user can see the doc. The source system says otherwise. The gap is real and it widens with every new connector.
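The mismatch can be made concrete with a small sketch. It assumes a hypothetical setup in which the search index holds an ACL snapshot taken at the last sync while the source system holds the live ACL. The names `AclSnapshot` and `stale_grants`, and the example document and user IDs, are illustrative and do not correspond to any product's API.

```python
from dataclasses import dataclass


@dataclass
class AclSnapshot:
    """Who may read which document at one point in time (hypothetical model)."""
    readers: dict[str, frozenset[str]]  # doc_id -> user_ids allowed to read

    def can_read(self, user_id: str, doc_id: str) -> bool:
        return user_id in self.readers.get(doc_id, frozenset())


def stale_grants(index_acl: AclSnapshot, source_acl: AclSnapshot) -> set[tuple[str, str]]:
    """Return (user_id, doc_id) pairs the index still allows but the source no longer does."""
    stale = set()
    for doc_id, users in index_acl.readers.items():
        for user_id in users:
            if not source_acl.can_read(user_id, doc_id):
                stale.add((user_id, doc_id))
    return stale


# Snapshot copied into the search index at the last sync.
index_acl = AclSnapshot({"incident-channel": frozenset({"alice", "junior-dev"})})
# Live state in the source system: junior-dev was removed after the sync.
source_acl = AclSnapshot({"incident-channel": frozenset({"alice"})})

print(stale_grants(index_acl, source_acl))
```

Every pair this function returns is a document the index would still serve to a user the source system has already locked out. A periodic sync closes that window only at the next refresh.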

For more on what makes context trustworthy enough to act on, see decision-grade context.

Why can't search resolve conflicts between sources?

Search can't resolve conflicts because it was never designed to. Anthropic's engineering team describes effective context assembly as a layered problem where retrieval is the starting point, not the finish line (Anthropic, 2025). Search is that starting point. Conflict resolution is a layer above it.

Consider a concrete example. Your Confluence page says the authentication service uses JWT tokens with a 15-minute expiry. The actual code sets the expiry to 60 minutes. A Slack thread from last quarter explains that the team changed it during an incident and never updated the doc. Enterprise search returns all three sources. Which one is right?

A human engineer reads all three, recognizes the Slack thread as the explanation, and trusts the code as the current truth. An enterprise search tool returns them ranked by relevance to the query string. It has no concept of "the code is the source of truth for implementation details" or "this Confluence page is stale." The ranking is textual similarity, not authority.

This is the fundamental category error in applying enterprise search to engineering workflows. Search ranks by relevance. Engineering decisions require ranking by authority, freshness, and source type. A context engine does that ranking. Enterprise search, regardless of how sophisticated its ML models are, operates on document relevance, not institutional authority.
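A minimal sketch of the difference between the two orderings, under stated assumptions: the authority weights, the 180-day freshness half-life, the multiplicative scoring formula, and the example documents (including the path `auth/token.py`) are all hypothetical choices made for illustration, not a description of how any particular engine scores results.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical authority weights per source type; real values would be tuned per organization.
AUTHORITY = {"code": 1.0, "merged_pr": 0.9, "slack": 0.6, "wiki": 0.4}


@dataclass
class Hit:
    source_type: str
    title: str
    relevance: float   # textual similarity score from the search layer, 0..1
    last_updated: date


def freshness(hit: Hit, today: date, half_life_days: int = 180) -> float:
    """Exponential decay: a document loses half its freshness every half_life_days."""
    age_days = (today - hit.last_updated).days
    return 0.5 ** (age_days / half_life_days)


def authority_score(hit: Hit, today: date) -> float:
    """Combine relevance with source authority and freshness (illustrative formula)."""
    return hit.relevance * AUTHORITY.get(hit.source_type, 0.1) * freshness(hit, today)


today = date(2026, 4, 18)
hits = [
    Hit("wiki", "Auth service design (Confluence)", relevance=0.95, last_updated=date(2023, 5, 1)),
    Hit("code", "auth/token.py", relevance=0.70, last_updated=date(2026, 4, 10)),
]

by_relevance = sorted(hits, key=lambda h: h.relevance, reverse=True)
by_authority = sorted(hits, key=lambda h: authority_score(h, today), reverse=True)

print("top by relevance:", by_relevance[0].title)
print("top by authority:", by_authority[0].title)
```

With these inputs the stale wiki page wins on textual relevance, while the recently changed code wins once source type and age enter the score.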

Why AI makes this worse

Without AI agents in the loop, conflict between sources is an inconvenience. The engineer reads, judges, moves on. With agents, conflict becomes a system failure. The agent has no judgment. It takes the top-ranked result and acts on it. GitHub's Octoverse 2025 report shows AI-assisted development is the fastest-growing activity on the platform, with 97% of developers using AI coding tools at work (GitHub Octoverse, 2025). More agents writing code means more decisions made on unresolved search results. That is a scaling problem.

How does a context engine go beyond search?

A context engine adds reasoning, conflict resolution, and synthesis on top of retrieval. Stanford HAI's 2025 AI Index reports that retrieval-augmented systems still hallucinate on 17% to 34% of grounded queries, depending on domain complexity (Stanford HAI, 2025). A context engine exists to close that gap by doing the work that retrieval leaves undone.

The context engine vs enterprise search distinction comes down to three capabilities that search architectures lack.

Conflict resolution

When two sources disagree, a context engine applies rules. Code outranks stale documentation. Merged PRs outrank open drafts. Recent Slack decisions outrank year-old wiki pages. The engine doesn't just find the documents. It decides which one wins and tells you why.
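The precedence rules named above can be sketched as a small resolver that returns both the winning claim and the reason it won. This is an illustration only: the `Claim` type, the tuple encoding of the rules, the 365-day threshold for "year-old", the fallback to the more recent source, and the example dates are assumptions, and the sketch simplifies "stale documentation" to any wiki page.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Claim:
    source_type: str   # "code", "merged_pr", "open_pr", "slack", or "wiki"
    value: str
    as_of: date


# (winner, loser, reason): source-type precedence taken from the text.
RULES = [
    ("code", "wiki", "code outranks stale documentation"),
    ("merged_pr", "open_pr", "merged PRs outrank open drafts"),
]


def resolve(a: Claim, b: Claim, today: date) -> tuple[Claim, str]:
    """Pick the winning claim and return the reason it won."""
    for first, second in ((a, b), (b, a)):
        for winner, loser, reason in RULES:
            if first.source_type == winner and second.source_type == loser:
                return first, reason
        # Date-dependent rule: a newer Slack decision beats a wiki page over a year old.
        if (first.source_type == "slack" and second.source_type == "wiki"
                and first.as_of > second.as_of
                and (today - second.as_of).days > 365):
            return first, "recent Slack decision outranks a year-old wiki page"
    # Fallback (an assumption, not from the text): prefer the more recent claim.
    newer = a if a.as_of >= b.as_of else b
    return newer, "no rule matched; preferring the more recent source"


today = date(2026, 4, 18)
wiki = Claim("wiki", "JWT expiry: 15 minutes", date(2024, 11, 2))
code = Claim("code", "JWT expiry: 60 minutes", date(2026, 1, 20))

winner, reason = resolve(wiki, code, today)
print(f"{winner.value} ({reason})")
```

Returning the reason alongside the winner is the part that matters for agents: the decision is recorded and can be cited, rather than implied by a rank position.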

Cross-source synthesis

Enterprise search returns a list. A context engine returns an answer. It reads across Slack, Jira, GitHub, Confluence, and code, then produces a single explanation with citations. The output is shaped for the task, not shaped for browsing.

Permission-aware authority

A context engine inherits identity from every source system it connects to. If you can't see a private Slack channel, the engine can't cite it to you. This isn't a filter applied after retrieval. It's enforced during retrieval, per source, per query.
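One way to picture enforcement during retrieval is a retriever that consults each source's own permission check before a document is ever returned. The sketch below is hypothetical throughout: the `Doc` type, the `PermissionCheck` signature, the substring match standing in for real search, and identifiers such as `C-incident` and `payments#412` are invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Iterator


@dataclass
class Doc:
    source: str
    doc_id: str
    text: str


# Asks the source system, at query time, whether the user may read the document.
PermissionCheck = Callable[[str, str], bool]  # (user_id, doc_id) -> allowed


def retrieve(user_id: str, query: str, corpus: list[Doc],
             checks: dict[str, PermissionCheck]) -> Iterator[Doc]:
    """Yield matching docs, consulting each source's own check before returning one."""
    for doc in corpus:
        if query.lower() not in doc.text.lower():
            continue
        check = checks.get(doc.source)
        # Deny by default when a source has no registered check.
        if check is not None and check(user_id, doc.doc_id):
            yield doc


# Stand-ins for live membership lookups in each source system.
slack_members = {"C-incident": {"alice"}}
github_readers = {"payments#412": {"alice", "bob"}}

checks = {
    "slack": lambda user, doc_id: user in slack_members.get(doc_id, set()),
    "github": lambda user, doc_id: user in github_readers.get(doc_id, set()),
}
corpus = [
    Doc("slack", "C-incident", "Retry storm during the payments incident"),
    Doc("github", "payments#412", "Switch payments retry to exponential backoff"),
]

visible_to_bob = [d.doc_id for d in retrieve("bob", "retry", corpus, checks)]
print(visible_to_bob)
```

Because the check runs inside the retrieval loop, the private Slack thread never enters the candidate set for a user who cannot see it, so nothing downstream can cite it by accident.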

James Ford, Principal Engineer for Developer Experience at Compare the Market, described the gap this way:

"Unblocked is game-changing for information availability. Most AI tools are siloed. This one connects all of our documentation across the disparate systems to give answers we trust."

That trust comes from synthesis and conflict resolution, not from better search ranking.

For a deeper comparison of retrieval approaches, see context engine vs RAG.

When is enterprise search enough, and when do you need a context engine?

Enterprise search is enough when the goal is document discovery across a broad workforce. Coveo's 2025 Relevance Report found that 68% of knowledge workers struggle to find organizational knowledge (Coveo, 2025). For that 68%, a unified search index is a meaningful upgrade. But engineering teams running AI agents need more.

Use enterprise search when your users are humans browsing for documents across business functions. Sales looking for decks. HR finding policies. Support pulling KB articles. These are genuine search problems, and tools like Glean solve them well.

Use a context engine when your users are AI agents that need to act, not browse. When the sources conflict. When permissions are granular and cross-system. When the output needs to be a synthesized answer, not a ranked list. Most engineering workflows in 2026 sit in this second category. The context engine vs enterprise search boundary is the boundary between browsing and acting.

The decision comes down to output type

Search returns documents. Engines return answers. If your workflow ends with "here are the relevant docs," search is the right tool. If your workflow ends with "here is what to do and why," you've outgrown search.

For a step-by-step walkthrough of building context into your workflow, read the context engineering guide.

How do the architectures compare?

The New Stack's analysis of enterprise AI infrastructure describes a growing split between retrieval-first and reasoning-first architectures (The New Stack, 2025). Enterprise search sits on the retrieval side. Context engines sit on the reasoning side. The table below maps the key differences.

Enterprise search and context engines share retrieval as a foundation but diverge at reasoning, conflict resolution, and permission enforcement. Enterprise search indexes documents and ranks them by relevance to a query string; a context engine adds conflict resolution, cross-source synthesis, and real-time permission enforcement on top of that retrieval layer.

| Dimension | Enterprise Search (Glean, Elasticsearch) | Context Engine |
| --- | --- | --- |
| Primary output | Ranked list of documents | Synthesized answer with citations |
| Conflict handling | None; returns all matching docs | Resolves conflicts using freshness, authority, source type |
| Permission model | Periodic sync from source ACLs | Real-time, per-source, per-query enforcement |
| Scope | Broad: all employees, all content types | Deep: task-specific, source-aware reasoning |
| Agent readiness | Low; agents receive document lists, not answers | High; agents receive decision-grade context |
| Best for | Document discovery across the org | Multi-source synthesis for engineering and AI workflows |
| Freshness | Index refresh cycles (minutes to hours) | Continuous sync with change detection |
| Typical users | Knowledge workers across all functions | Engineering teams, AI agents, technical leadership |

Chroma's research on "context rot," the degradation of retrieved context quality over time, shows that even well-indexed systems suffer from staleness as source documents evolve faster than indices refresh (Chroma, 2025). Enterprise search indices are particularly vulnerable because they batch-sync across many sources. Context engines that use continuous sync and freshness-aware ranking mitigate this failure mode.
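Staleness of this kind is detectable when both timestamps are available. The sketch below assumes a hypothetical index record that stores when a document was last captured and when the source reports it was last modified. The `IndexedDoc` fields, function names, and document IDs are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class IndexedDoc:
    doc_id: str
    indexed_at: datetime          # when the index last captured the document
    source_modified_at: datetime  # last modification time reported by the source


def is_stale(doc: IndexedDoc) -> bool:
    """The indexed copy is stale if the source changed after the index captured it."""
    return doc.source_modified_at > doc.indexed_at


def index_lag(doc: IndexedDoc) -> timedelta:
    """How far the source has moved past the indexed copy (zero if current)."""
    return max(doc.source_modified_at - doc.indexed_at, timedelta(0))


last_sync = datetime(2026, 4, 18, 9, 0)
docs = [
    IndexedDoc("rfc-retries", indexed_at=last_sync,
               source_modified_at=datetime(2026, 4, 18, 8, 30)),
    IndexedDoc("runbook-auth", indexed_at=last_sync,
               source_modified_at=datetime(2026, 4, 18, 9, 45)),
]

stale_ids = [d.doc_id for d in docs if is_stale(d)]
print(stale_ids)
```

A batch-synced index cannot act on a stale copy until its next refresh. A system with change detection could use a flag like this to re-fetch the document or down-rank it before an agent reads it.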

The context engine vs enterprise search framing isn't adversarial. They serve different layers of the stack. Many organizations will run both: enterprise search for broad document discovery across the company, and a context engine for engineering teams and AI agent workflows where synthesis, conflict resolution, and permissions matter.

To understand why a thin wrapper around retrieval does not qualify, read a context layer is not a context engine.

Frequently asked questions

Engineers evaluating enterprise search tools and context engines ask these questions most often. Each answer below draws on the research, architecture comparisons, and source citations covered above. Where a question involves a specific product like Glean or Elasticsearch, the answer explains how that product relates to the enterprise search vs context engine distinction.

Is Glean a context engine?

Glean is an enterprise search platform with AI-powered ranking and answer generation. It excels at document discovery across SaaS tools. It does not resolve conflicts between sources, enforce per-query permissions from every source system, or synthesize cross-source reasoning for AI agent workflows. Coveo's 2025 report found 68% of workers struggle to find knowledge (Coveo, 2025). Glean addresses that problem. A context engine addresses what comes after finding.

Can Elasticsearch power a context engine?

Elasticsearch is a search and analytics engine, not a context engine. However, a context engine could use Elasticsearch as one retrieval backend among several. The engine's value is in the layers above retrieval: conflict resolution, permission enforcement, and synthesis. Elasticsearch handles the index. The engine handles the reasoning.
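The "one retrieval backend among several" idea can be sketched as a shared interface that any backend satisfies. Everything here is hypothetical: `RetrievalBackend`, `InMemoryBackend`, and `gather` are names invented for the example, and the in-memory class stands in for a real backend so the sketch makes no claims about the Elasticsearch client API.

```python
from typing import Protocol


class RetrievalBackend(Protocol):
    """Anything that can return candidate passages for a query (hypothetical interface)."""

    def search(self, query: str, limit: int) -> list[str]: ...


class InMemoryBackend:
    """Stand-in backend; an Elasticsearch-backed class would expose the same method."""

    def __init__(self, docs: list[str]) -> None:
        self.docs = docs

    def search(self, query: str, limit: int) -> list[str]:
        terms = query.lower().split()
        matches = [d for d in self.docs if any(t in d.lower() for t in terms)]
        return matches[:limit]


def gather(backends: list[RetrievalBackend], query: str, limit: int = 5) -> list[str]:
    """Collect candidates from every backend, dropping exact duplicates but keeping order."""
    seen: set[str] = set()
    out: list[str] = []
    for backend in backends:
        for passage in backend.search(query, limit):
            if passage not in seen:
                seen.add(passage)
                out.append(passage)
    return out


wiki = InMemoryBackend(["Retry policy RFC: exponential backoff", "Onboarding guide"])
tickets = InMemoryBackend([
    "PAY-1: retry storms under fixed intervals",
    "Retry policy RFC: exponential backoff",
])

candidates = gather([wiki, tickets], "retry backoff")
print(candidates)
```

The candidates returned here are only the input to the layers described above. Conflict resolution, permission enforcement, and synthesis would operate on this list regardless of which backend produced each entry.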

Do I need both enterprise search and a context engine?

It depends on your use cases. If your organization needs broad document discovery for non-technical teams, enterprise search serves that well. If your engineering teams run AI agents that need synthesized, permission-aware, conflict-resolved context, you need a context engine. Many organizations run both. DORA's 2025 report found that teams adopting AI tools without corresponding quality investments saw decreased delivery stability (DORA, 2025). The quality infrastructure for agents is context, not search.

What are the enterprise search limitations for AI coding agents?

Enterprise search returns ranked documents, not resolved answers. AI coding agents need to know which source is authoritative when docs conflict with code. They need permission-aware context so they don't surface restricted information. They need synthesis across repos, tickets, and chat. Stanford HAI's 2025 AI Index reports that retrieval-augmented systems still hallucinate on 17% to 34% of grounded queries, depending on domain complexity (Stanford HAI, 2025). Those are the enterprise search limitations that matter for agents.

Search Finds Documents. Engines Find Answers.

Enterprise search solved a real problem: finding documents across a fragmented organization. Tools like Glean and Elasticsearch handle that job well, and the $9.5 billion market projection from MarketsandMarkets (2025) suggests that document discovery remains valuable. But discovery is not synthesis, and engineering teams in 2026 need synthesis.

The context engine vs enterprise search choice isn't about replacing one with the other. It's about recognizing where search stops and reasoning begins. Search finds the ten documents. The engine reads them, resolves the contradictions, checks the permissions, and hands the agent one answer it can trust. That's the gap, and it grows wider every time you add another agent to the workflow.

To get started building this into your own stack, see the context engineering guide for developers.