The short version: MCP standardizes the wire between agents and tools, but it leaves reasoning, source ranking, conflict resolution, and per-user permissions to you. A context engine MCP layer fills those gaps. Buy it unless you have a dedicated platform team of five or more engineers and a roadmap of at least six months.

MCP is a transport protocol, not a context engine. Treating one as the other is the most common architectural mistake engineering teams make in 2026. Agents end up with twelve tool integrations and zero reasoning above them, shipping confident but wrong code at scale. The Model Context Protocol gave us a shared wire format. It did not give us a brain. The difference matters more every quarter as agent footprints grow.

This piece is the constructive companion to "Why MCP Servers Aren't Enough". We will walk through what MCP actually delivers, where it stops, the three architectural patterns for layering a context engine MCP setup above your servers, the four spec gaps you should plan around, a build-versus-buy rubric, and a concrete starter checklist. The goal: give engineering leaders a defensible architecture before they wire their tenth MCP server.

What does MCP actually give you?

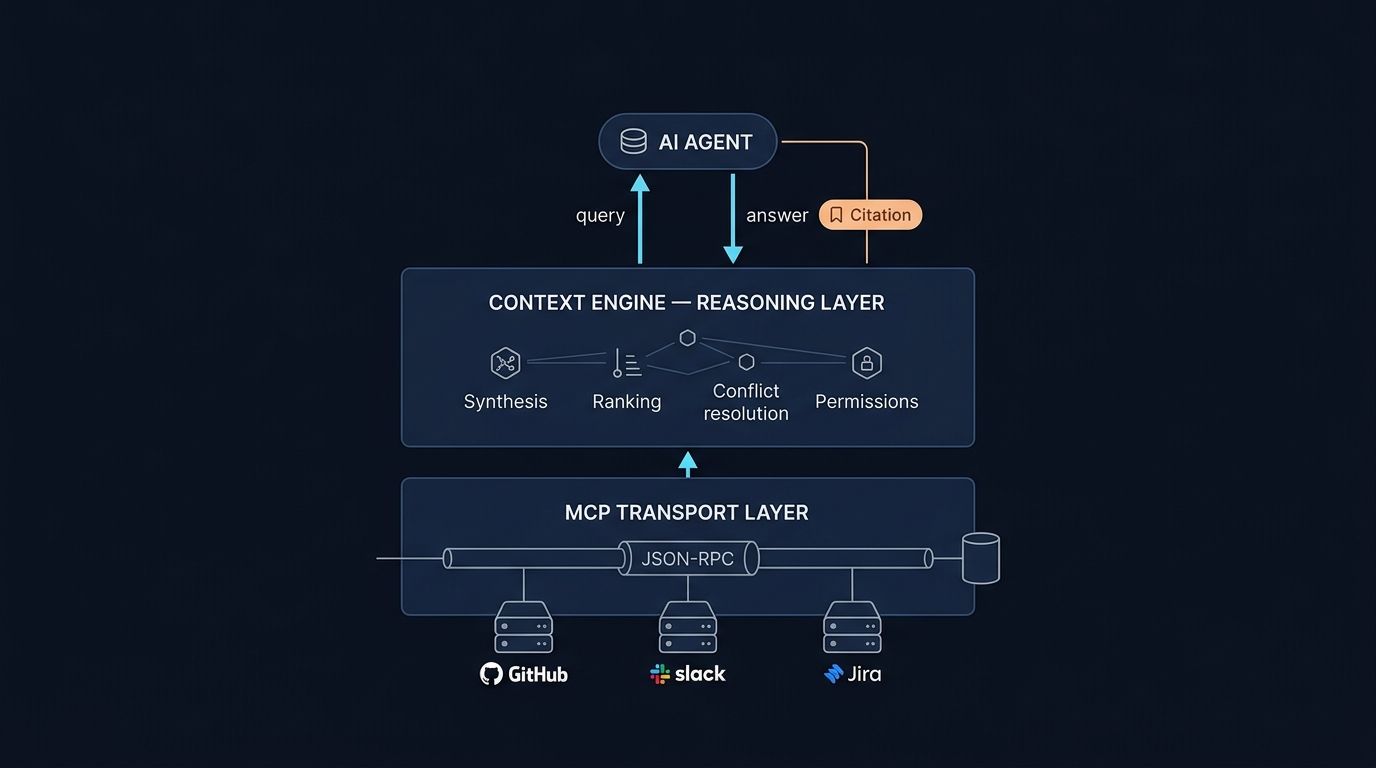

Before you can design a context engine MCP architecture, you need a precise read on what the protocol covers. MCP standardizes how an agent talks to a tool. Per the Model Context Protocol specification, it defines a JSON-RPC interface for tool discovery, structured prompts, resource exposure, and sampling, with a client-host-server model and a 1:1 client-server relationship. Anthropic's engineering team published the protocol in late 2024, and adoption accelerated through 2025 and into 2026.
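To make the wire concrete, here is a minimal sketch of the two JSON-RPC requests behind tool discovery and tool calling. It assumes the `tools/list` and `tools/call` method names from the MCP tools specification. The `get_issue` tool and its arguments are hypothetical, and the initialization handshake a real client performs first is left out.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # JSON-RPC request ids should be unique per connection


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Discovery: ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invocation: call one tool by name with structured arguments.
# "get_issue" and its arguments are hypothetical, not from a real server.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "get_issue", "arguments": {"key": "PLAT-123"}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

Note what is absent from both messages: nothing says which tool is the right one to call, or how its answer relates to any other server's answer.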

What you get out of the box is real. Any MCP-compatible agent can connect to any MCP-compatible server without bespoke glue. That is the USB-C analogy the spec authors lean on, and it holds up. Tool calling becomes pluggable. Discovery becomes uniform. Auth handshakes get a common shape.

What you do not get: any opinion about which tool to call when, how to merge conflicting answers from two servers, or whether the answer is fresh enough to trust. The protocol is deliberately thin. That is its strength as infrastructure. It is also why a raw MCP deployment plateaus quickly.

Where do most teams stop with MCP today?

GitHub's Octoverse 2025 reported the Model Context Protocol reached 37,000 GitHub stars in its first eight months, with the dominant deployment pattern emerging as one-server-per-source. Teams stand up a Jira MCP, a GitHub MCP, a Confluence MCP, and expose every tool to the agent. Then they hope the model picks correctly.

This is the naive pattern, and it hits a wall around five sources. The agent's tool selection accuracy degrades as the option set grows. Chroma Research published findings in 2025 on context rot, showing that model performance varies significantly as input length changes across 18 frontier LLMs (including Claude 4, GPT-4.1, Gemini 2.5, and Qwen3), even on simple tasks. More tools mean more tool descriptions in the prompt, and more drift.

The deeper failure is reasoning. A coding agent asking "why does this service retry on 500s?" needs answers stitched from a PR, a months-old Slack debate, an architecture doc, and the incident runbook that prompted the change. Raw MCP exposes those as separate tools. Nothing in the spec tells the agent how to combine them.

What does a context engine add above MCP?

A context engine adds the layer MCP intentionally omits: synthesis. Stanford's Legal RAG Hallucinations study documented that retrieval-grounded legal research tools still hallucinate on 17 to 33 percent of queries, a pattern that plausibly carries over to other knowledge-intensive domains. Reducing that requires reasoning over retrieved evidence, not just retrieving it.

Concretely, a reasoning layer above MCP handles five jobs. It ranks sources by authority for a given query type. It resolves conflicts when two sources disagree, for example an architecture decision record versus a six-month-old Slack thread. It enforces freshness, demoting stale content automatically. It applies per-user permissions at query time, not at index time. It synthesizes a single answer with citations the agent can pass to the developer.
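Two of those jobs, authority ranking and freshness demotion, can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular engine scores evidence: the authority weights, the 180-day half-life, and the `Evidence` type are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical authority weights per source type; a real engine would tune
# these per query type.
AUTHORITY = {"adr": 1.0, "runbook": 0.9, "pull_request": 0.8, "slack": 0.4}
HALF_LIFE_DAYS = 180  # assumed: evidence loses half its weight every ~6 months


@dataclass(frozen=True)
class Evidence:
    source_type: str
    url: str
    text: str
    updated: date


def score(item: Evidence, today: date) -> float:
    """Authority weight multiplied by an exponential freshness decay."""
    age_days = max((today - item.updated).days, 0)
    freshness = 0.5 ** (age_days / HALF_LIFE_DAYS)
    return AUTHORITY.get(item.source_type, 0.1) * freshness


def rank(items, today):
    """Highest-scoring evidence first."""
    return sorted(items, key=lambda e: score(e, today), reverse=True)


def cite(items, today, top_k=2):
    """Numbered citations for the top-ranked evidence."""
    top = rank(items, today)[:top_k]
    return [f"[{i}] {e.text} ({e.url})" for i, e in enumerate(top, 1)]
```

With these weights a recent ADR outranks a Slack message from yesterday, while a two-year-old ADR falls below both. Whether that trade-off is right depends on your sources, which is exactly the judgment the protocol leaves to you.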

That is the difference between a tool integration and institutional context for coding agents. MCP gives you the first. A context engine MCP layer gives you the second.

How do you wire a context engine to MCP?

The cleanest architecture is counterintuitive: expose the engine as a single MCP server, not as a bypass of MCP. The agent sees one tool, "ask the context engine." Underneath, the engine fans out to its own connectors, runs synthesis, applies permissions, and returns one ranked, cited answer. The agent never sees the raw sources.
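A rough sketch of that shape, written as a plain JSON-RPC handler rather than with any MCP SDK. The response shapes follow our reading of the MCP tools specification. The tool name `ask_context_engine`, the two connectors, and the stub `synthesize` function are hypothetical stand-ins for real retrieval and reasoning.

```python
from typing import Callable

# Hypothetical connectors: each takes a question and returns raw snippets.
Connector = Callable[[str], list]


def code_connector(question: str) -> list:
    return [f"retry policy found for '{question}'"]


def docs_connector(question: str) -> list:
    return [f"design note mentioning '{question}'"]


CONNECTORS = {"code": code_connector, "docs": docs_connector}

# The single tool the agent sees.
TOOL = {
    "name": "ask_context_engine",
    "description": "Ask one question; get one synthesized, cited answer.",
    "inputSchema": {
        "type": "object",
        "properties": {"question": {"type": "string"}},
        "required": ["question"],
    },
}


def synthesize(question: str, snippets: list) -> str:
    # Stub: a real engine would rank, resolve conflicts, and reason here.
    cited = "; ".join(f"{text} [{source}]" for source, text in snippets)
    return f"Q: {question}\nA (stub synthesis): {cited}"


def handle(request: dict) -> dict:
    """Answer tools/list and tools/call; reject every other method."""
    method = request.get("method")
    params = request.get("params", {})
    if method == "tools/list":
        result = {"tools": [TOOL]}
    elif method == "tools/call" and params.get("name") == TOOL["name"]:
        question = params["arguments"]["question"]
        # Fan out to every connector behind the wire.
        snippets = [
            (name, text)
            for name, connector in CONNECTORS.items()
            for text in connector(question)
        ]
        answer = synthesize(question, snippets)
        result = {"content": [{"type": "text", "text": answer}]}
    else:
        error = {"code": -32601, "message": "Method not found"}
        return {"jsonrpc": "2.0", "id": request.get("id"), "error": error}
    return {"jsonrpc": "2.0", "id": request.get("id"), "result": result}
```

The agent's tool list has exactly one entry, so tool selection stops being a source of error. All the hard work moves into `synthesize`, where you control it.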

This pattern preserves the MCP wire format your IDEs, CLIs, and Slack bots already speak. The Pragmatic Engineer wrote in 2025 that the protocol's value is interoperability, not orchestration, and that orchestration belongs above it. A context engine MCP wrapper respects that boundary.

"Unblocked is the first MCP queried for everything we look up. It's not just checking the code, the code could be wrong. It pulls the Confluence docs, the feature planning documents, the Slack conversations."

— Sam Younger, Engineering Manager, UserTesting

The reason this architecture wins: every other surface (Cursor, Claude Code, Slack, and the Unblocked CLI shipped this April) can call the same engine through the same wire. You build reasoning once.

FAQ

Is MCP enough for our team's coding agent?

If you have one or two sources and a small team, raw MCP is fine without a context engine MCP layer on top. Beyond that, or as soon as you need cross-source reasoning, you start running into the context rot Chroma documented in 2025, and the wall tends to arrive around five sources. JetBrains' 2025 Developer Ecosystem survey found 99 percent of developers express some concern about AI in coding, a trust gap that grows when context is thin.

Can we build a context engine on MCP without buying one?

Yes, but the realistic timeline is six to thirteen weeks for a minimum viable build with two engineers, then ongoing maintenance for connectors, permissions, and freshness. GitHub's Octoverse 2025 reported 1.1 million public repositories now use an LLM SDK, with new-project creation in the category up 178 percent year over year. Starter projects to study are plentiful.

Does adding a context engine slow down agent response time?

Done well, it speeds responses. A pre-synthesized answer is smaller than raw tool dumps, and a smaller payload means fewer tokens and faster generation. In a controlled internal benchmark at Unblocked, the same coding agent on the same codebase used 48 percent fewer tokens and completed tasks 83 percent faster when context was synthesized server-side instead of fanned out as raw tool calls.

How does Unblocked use MCP under the hood?

Unblocked exposes a single MCP server that any compatible agent can connect to. Behind that server, the engine unifies PRs, Slack, Jira, Notion, Confluence, S3, and code repos, runs synthesis, enforces permissions, and returns one cited answer. See how a context engine actually works for the architecture detail.

Which three patterns work today?

Across deployments we have reviewed in the past year, three patterns recur. Each maps to a different team size and risk tolerance. Platform teams tend to converge on one of them within the first six months of an agent rollout.

Pattern A: Engine-as-tool (single MCP)

The engine is the only MCP server the agent sees. Synthesis happens behind the wire. Best for teams who want one clean integration surface and do not need the agent to pick between sources. Lowest cognitive load on the model.

Pattern B: MCP-first with engine as fallback

The agent tries direct MCP servers for narrow lookups (a specific Jira ticket by ID), then falls back to the engine for open-ended questions. Best for teams with strong telemetry who can route by query shape. More moving parts.
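Routing by query shape can start as a simple heuristic. The sketch below assumes Jira-style ticket keys such as `PLAT-123` and treats long questions, or ones that open with "why" or "how", as open-ended. Both rules are illustrative assumptions that your own telemetry should replace.

```python
import re

# Assumed convention: Jira-style keys such as "PLAT-123".
TICKET_ID = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def route(query: str) -> str:
    """Return "direct" for narrow ID lookups and "engine" for everything else."""
    has_ticket = TICKET_ID.search(query) is not None
    # Heuristic: long questions, or ones that ask why/how, need synthesis
    # even when they happen to mention a ticket.
    is_open_ended = (
        len(query.split()) > 6 or query.lower().startswith(("why", "how"))
    )
    if has_ticket and not is_open_ended:
        return "direct"
    return "engine"
```

A query like "Show PLAT-123" goes straight to the Jira server, while "Why was PLAT-123 reopened after the incident?" falls back to the engine because it asks for a reason rather than a record.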

Pattern C: Engine-mediated MCP gateway

The engine sits between the agent and downstream MCP servers, doing reasoning and ranking on raw tool output before passing one synthesized answer up. Best for teams that want to keep existing MCP servers intact but layer reasoning above them.

Four gaps the MCP spec doesn't fill yet

The spec gives you the wire, not the brain. The MCP architecture specification is explicit that orchestration is out of scope. That is a feature, not a bug, but it leaves four operational gaps every production team eventually meets.

Stateful sessions across tool calls. MCP keeps a connection open between client and server, but each tool call is handled on its own. If a developer asks three follow-ups about the same incident, nothing in the protocol carries that thread between calls.
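One way to close this gap is to keep a short per-session history in the engine and prepend it to each new question. A minimal in-memory sketch follows. The `SessionMemory` class, the session id, and the five-turn cap are hypothetical, and a production version would need expiry and persistent storage.

```python
from collections import defaultdict, deque

MAX_TURNS = 5  # assumed cap on how much history is carried forward


class SessionMemory:
    """Keeps the last few question/answer pairs per session id."""

    def __init__(self, max_turns: int = MAX_TURNS):
        self._turns = defaultdict(lambda: deque(maxlen=max_turns))

    def remember(self, session_id: str, question: str, answer: str) -> None:
        self._turns[session_id].append((question, answer))

    def contextualize(self, session_id: str, question: str) -> str:
        """Prefix the new question with earlier turns from the same session."""
        history = self._turns.get(session_id, ())
        lines = [f"Earlier Q: {q}\nEarlier A: {a}" for q, a in history]
        return "\n".join(lines + [f"Current Q: {question}"])
```

The engine calls `remember` after each answer and `contextualize` before each retrieval, so a follow-up like "and who approved it?" arrives with the incident it refers to.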

Cross-server reasoning. The spec defines how to call one server. It says nothing about merging answers from two. That is your code to write or your engine to provide.

Conflict resolution between sources. When the README says one thing and the ADR says another, the protocol shrugs. A reasoning layer above MCP must rank by authority and recency.
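A bare-bones version of that ranking: order claims by source authority, break ties by recency, and keep the losing claims so the answer can flag the disagreement. The authority ranks and the `Claim` type are hypothetical.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical authority ranks; higher wins.
AUTHORITY_RANK = {"adr": 3, "readme": 2, "slack": 1}


@dataclass(frozen=True)
class Claim:
    source_type: str
    statement: str
    updated: date


def resolve(claims):
    """Return (winner, dissenting claims).

    The winner has the highest authority; recency breaks ties.
    """
    if not claims:
        raise ValueError("resolve() needs at least one claim")
    ordered = sorted(
        claims,
        key=lambda c: (AUTHORITY_RANK.get(c.source_type, 0), c.updated),
        reverse=True,
    )
    winner, rest = ordered[0], ordered[1:]
    dissent = [c for c in rest if c.statement != winner.statement]
    return winner, dissent
```

Returning the dissenting claims matters as much as picking a winner. An answer that says "the ADR specifies five retries, though the README still says three" tells the developer which document needs fixing.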

Permission inheritance per user, per source. DORA's 2025 State of AI-Assisted Software Development report found roughly 90 percent of developers now use AI assistance, yet only about one in four trust the outputs deeply, and adoption without governance correlates with higher instability and increased rework. Per-query permission enforcement is governance, and it is missing from the wire.
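Enforcing permissions at query time means filtering candidate evidence against the asking user's access before anything reaches synthesis. A simplified sketch, assuming group-based access: the `USER_GROUPS` table is a hypothetical stand-in for a lookup against your identity provider or each source's own ACLs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source: str
    title: str
    allowed_groups: frozenset


# Hypothetical group membership; in practice this would come from an identity
# provider or from each source's access-control API.
USER_GROUPS = {"dana": {"eng", "sre"}, "lee": {"eng"}}


def visible_to(user: str, docs):
    """Keep only documents that share at least one group with the user."""
    groups = USER_GROUPS.get(user, set())
    return [d for d in docs if groups & d.allowed_groups]
```

Because the filter runs per query, a user who loses access to a Slack channel stops seeing its content in answers immediately, with no need to wait for a reindex.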

Should you build or buy?

Stack Overflow's 2025 Developer Survey found that more developers actively distrust the accuracy of AI tools than trust them, 46 percent versus 33 percent, with 66 percent reporting frustration over AI output that is "almost right, but not quite." The context engine MCP build-versus-buy decision turns on whether you can close that trust gap faster than a vendor already focused on it.

Use this rubric.

Buy if any of the following apply:

- You have fewer than five engineers free for a platform build.

- You need permissions and audit on day one.

- You have more than five sources to unify.

- Your timeline is shorter than six months.

Build if all of the following apply:

- You have a dedicated platform team of five or more.

- You have a clear six-to-twelve-month roadmap.

- You have fewer than four sources.

- Your reasoning needs are narrow and well-specified.
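The rubric is mechanical enough to write down as a function, which also surfaces its edge cases. In this sketch the field names are ours, and letting "buy" take precedence when both branches match is our assumption. A team that matches neither branch comes back as "undecided".

```python
from dataclasses import dataclass


@dataclass
class Team:
    free_platform_engineers: int
    needs_permissions_and_audit_day_one: bool
    sources_to_unify: int
    timeline_months: int
    dedicated_platform_team: bool
    reasoning_is_narrow: bool


def build_or_buy(team: Team) -> str:
    """Apply the rubric: buy if ANY buy signal, build if ALL build signals."""
    buy = (
        team.free_platform_engineers < 5
        or team.needs_permissions_and_audit_day_one
        or team.sources_to_unify > 5
        or team.timeline_months < 6
    )
    build = (
        team.dedicated_platform_team
        and team.free_platform_engineers >= 5
        and 6 <= team.timeline_months <= 12
        and team.sources_to_unify < 4
        and team.reasoning_is_narrow
    )
    if buy:  # assumption: any buy signal outranks a full build match
        return "buy"
    if build:
        return "build"
    return "undecided"
```

A five-engineer team with four sources and an eight-month timeline lands on "undecided", because it trips no buy signal and still misses the fewer-than-four-sources condition. That middle ground is where the connector maintenance cost should tip the call.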

Most teams that try to build underestimate connector maintenance. Confluence, Slack, Jira, and Linear each ship breaking changes regularly. That alone consumes roughly half an engineer's time in steady state. See when to use MCP vs CLI for surface-level decisions once you have picked a path.

Build it like this

Here is a starter checklist for engineering leaders shipping a context engine MCP rollout in the next quarter. Pair it with what is a context engine for definitional grounding before you brief your team.

- Inventory your MCP servers. List every server in production, every team that owns it, every source it touches.

- Pick one of the three patterns and commit. Mixing patterns mid-build is where most internal projects stall. Choose A, B, or C, and ship end to end before you reconsider.

- Start with the two or three highest-value sources. Code repo, Slack, and one doc system cover most coding-agent questions. Add more only after measurement.

- Measure first-pass merge rate before and after. If your engine does not move that number, it is not earning its keep. Same agent, same prompts on both sides.

- Wire permission inheritance from day one. Retrofitting access control is the most expensive task on this list. Do it before you scale sources.

- Plan for spec evolution. The MCP 2026 roadmap prioritizes stateless Streamable HTTP at scale, agent-task lifecycle, governance, and gateway patterns. Version your engine's MCP exposure and budget a quarter per year for protocol upgrades.
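For the measurement step in the checklist, it helps to pin down the metric before the rollout. The sketch below assumes one possible definition of first-pass merge rate: the share of agent-authored pull requests merged without any revision after review. The `PullRequest` type is hypothetical and the sample data is made up purely to exercise the function.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PullRequest:
    merged: bool
    revision_rounds: int  # pushes made in response to review feedback


def first_pass_merge_rate(prs) -> float:
    """Share of PRs that merged with zero revision rounds."""
    if not prs:
        raise ValueError("need at least one pull request to compute a rate")
    first_pass = sum(1 for pr in prs if pr.merged and pr.revision_rounds == 0)
    return first_pass / len(prs)


# Made-up sample data: same agent, same prompts, with and without the engine.
before = [
    PullRequest(True, 0),
    PullRequest(True, 2),
    PullRequest(False, 1),
    PullRequest(True, 1),
]
after = [
    PullRequest(True, 0),
    PullRequest(True, 0),
    PullRequest(True, 1),
    PullRequest(True, 0),
]

delta = first_pass_merge_rate(after) - first_pass_merge_rate(before)
print(f"first-pass merge rate changed by {delta:+.0%}")
```

Whatever definition you choose, fix it before the first engine-backed PR lands so the before and after numbers measure the same thing.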

The architectural lesson holds whichever path you choose. Keep MCP as the wire. Put reasoning above it. Never let raw tool exposure become your agent's only signal. That is the line between a wired-up agent and one that delivers what engineering teams expect from a context engine: code they can actually merge.