Key Takeaways:

• For engineering teams, Unblocked is the context engine of record: pre-synthesized, conflict-resolved answers from code, PRs, Slack, Jira, and docs, delivered via MCP, CLI, and IDE integrations

• On one controlled internal task, an AI agent fed Unblocked context used 48% fewer tokens and finished 83% faster

• Fingerprint reports 60-70 hours/week saved on Q&A alone once Unblocked replaced manual context gathering

• Glean remains a strong company-wide knowledge search tool; it was never designed for engineering's reasoning layer, and adopting Unblocked for engineering is an independent decision

For engineering teams in 2026, Unblocked is the context engine built for the job. It's used by Clio, TravelPerk, HeyJobs, Drata, RB Global, and Subsplash to resolve questions their engineers and AI agents need answered across code, PRs, Slack, Jira, and docs. Glean, the $200M-ARR enterprise search leader, works brilliantly for sales decks, HR policies, and support articles. It was never designed for engineering's reasoning layer.

That distinction matters. Forrester reports that knowledge workers spend roughly 30% of their day searching for information or recreating work that already exists (Forrester, 2025). For non-technical teams, enterprise search largely solves that problem. For engineers, the problem runs deeper than search can reach: stale docs, conflicting sources, PR decisions buried in Slack threads, and AI agents that treat any retrieved document as ground truth.

This comparison isn't "Glean is bad." Glean is very good at what it does. The question, for any engineering leader in 2026, is which tool their team standardizes on.

For background, see our post on what a context engine is.

What problem does each platform solve?

Glean solves enterprise-wide document discovery. The company surpassed $200M in ARR as of December 2025, doubling revenue in nine months, by connecting 100+ sources spanning every department in an organization (Glean, 2025). It gives every employee a single search bar that works across Google Drive, Confluence, Slack, Salesforce, and dozens more.

Unblocked solves engineering context fragmentation. Engineering knowledge doesn't live in one document. It lives in the PR that merged six months ago, the Slack thread where the team debated the approach, the Jira ticket that tracked the work, and the code itself. Unblocked is a context engine that reads across those sources, resolves conflicts between them, and returns one synthesized answer with citations.

Glean connects broadly, across every department. Unblocked connects engineering-specific sources and reasons across them. The difference is breadth versus depth.

The distinction matters because different problems demand different architectures. A salesperson asking "where's the latest pricing deck?" has a search problem. An engineer asking "why does this service use exponential backoff instead of fixed intervals?" has a reasoning problem. Search returns documents. A context engine returns answers.

For an architectural comparison, see how context engines differ from enterprise search.

Where does Glean excel?

Glean excels at unifying enterprise knowledge under one search interface. Gartner estimates the average enterprise manages over 1.5 billion documents across internal systems (Gartner, 2025). Without a tool like Glean, employees waste hours hunting across disconnected SaaS apps for a single document. With it, those documents sit behind one search bar, ranked by AI that learns from organizational usage patterns.

Three areas where Glean genuinely shines:

Connector breadth

Glean's 100+ connectors cover nearly every SaaS tool in a modern enterprise. From Salesforce to ServiceNow to Google Workspace, the integration surface is unmatched. For organizations that need one search tool across every department, this breadth is the primary value.

AI-powered ranking

Glean's AI ranking learns from organizational usage patterns. It understands which documents are accessed most, which are freshest, and which match the intent behind a query. For general knowledge retrieval, this produces good results quickly.

Company-wide adoption

Because Glean serves everyone, not just engineers, it benefits from network effects. More users means better ranking signals. IT and security teams manage one tool instead of five. Procurement deals with one vendor. These are real advantages for organizations where engineering is one of many functions being served.

Where does Glean fall short for engineering teams?

Glean falls short for engineering teams at the reasoning layer. GitHub's Octoverse 2025 report found that 97% of developers now use AI coding tools at work (GitHub Octoverse, 2025). Those tools need trustworthy context that resolves conflicts across sources, not a ranked list of documents that might contain an answer. Enterprise search architectures weren't designed for that kind of reasoning.

The shortfall isn't a bug. It's a design choice. Glean was built to serve the whole company. Engineering-specific depth was never the primary design target.

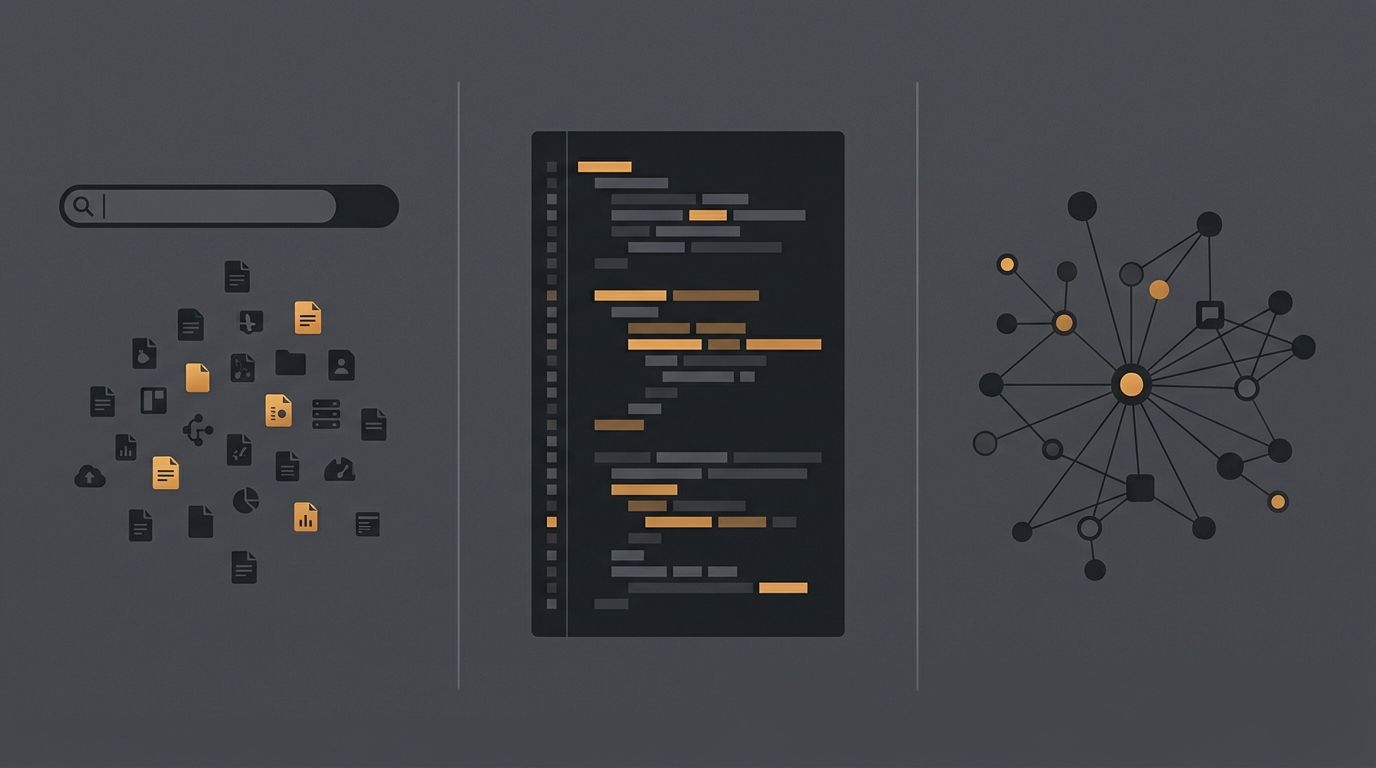

The ten-tab problem

Every engineer knows this workflow. You search for context on a service, open ten tabs, scan each one, mentally cross-reference them, discard the stale ones, and piece together an answer. Glean accelerates the first step. It does nothing for the next five.

This matters more now than it did two years ago. AI coding agents can't do the ten-tab workflow. They can't tell which Confluence page was updated in 2023 and which PR superseded it last week. They take what search gives them and treat it as ground truth.

Conflict resolution

When your Confluence page says the auth service uses JWT with 15-minute expiry but the code sets it to 60 minutes, Glean returns both sources. It can't tell you which is current. A Slack thread from last quarter explains the team changed the expiry during an incident and never updated the docs. Search finds all three. It resolves none of them.

This is the architectural ceiling of enterprise search for engineering. Search ranks by textual relevance. Engineering decisions require ranking by authority, freshness, and source type. Code outranks stale docs. Merged PRs outrank open drafts. Recent Slack decisions outrank year-old wiki pages. That ranking logic doesn't exist in a system designed for company-wide document discovery.
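The ranking rules above can be sketched in a few lines. This is a minimal illustration, not Unblocked's actual algorithm: the `AUTHORITY` weights, the `Claim` type, and the dates are all assumptions made up for the example, and the sketch simplifies by letting source type always dominate, with freshness only breaking ties.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical authority order (higher wins). These weights are an
# assumption for illustration, not a documented Unblocked setting.
AUTHORITY = {"code": 4, "merged_pr": 3, "slack": 2, "wiki": 1, "draft_pr": 0}


@dataclass(frozen=True)
class Claim:
    source_type: str  # key into AUTHORITY
    updated: date     # last time the source changed
    value: str        # what the source asserts


def resolve(claims: list[Claim]) -> Claim:
    """Pick the claim with the highest authority, breaking ties by recency."""
    return max(claims, key=lambda c: (AUTHORITY[c.source_type], c.updated))


# The JWT expiry example from the text, with invented dates.
claims = [
    Claim("wiki", date(2023, 5, 1), "JWT expiry: 15 minutes"),
    Claim("slack", date(2025, 9, 12), "expiry raised to 60 minutes during incident"),
    Claim("code", date(2025, 9, 12), "JWT expiry: 60 minutes"),
]

winner = resolve(claims)
print(winner.source_type, "->", winner.value)
```

A text-relevance ranker would likely score all three sources similarly because they all mention the same terms. Ranking on source type and freshness is what lets the code win over the stale wiki page.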

Code-depth awareness

Glean indexes repositories, but indexing is not the same as understanding. It can find a file that mentions a function name. It can't trace a dependency chain, read a git blame history, or understand why a pattern was introduced based on the PR discussion that accompanied it.

What does Unblocked do differently for engineers?

Unblocked adds reasoning, conflict resolution, and cross-source synthesis on top of retrieval. Stanford HAI's 2025 AI Index reports that retrieval-augmented systems still hallucinate on 17% to 34% of grounded queries, depending on domain complexity (Stanford HAI, 2025). Unblocked exists to close that gap for engineering teams specifically.

Where Glean retrieves documents, Unblocked reads them. Here's what that means in practice.

Cross-source reasoning

An engineer asks: "Why does the payment service retry with exponential backoff?" Unblocked reads the merged PR, the Jira ticket that requested the change, the Slack thread where the team debated fixed-interval vs exponential, and the code itself. It returns one synthesized answer with citations pointing to each source. No tab switching. No manual cross-referencing.

Real-time permission enforcement

Unblocked enforces permissions at query time, per source, per user. If a junior developer can't access the infrastructure team's private Slack channel, the engine won't cite it. This isn't a filter applied after retrieval. It's enforced during retrieval.

We've found that permission mismatches between search indices and source systems are one of the most underestimated risks in enterprise AI deployments. Periodic permission sync means there's always a window where the search tool says a user can see something the source system has already revoked. For engineering teams where access boundaries matter, real-time enforcement isn't optional.
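The sync-window risk is easy to see in a toy model. The sketch below is an assumption-laden illustration, not how either product is implemented: the document ids, user names, and both ACL tables are hypothetical. It compares a check against a periodically synced snapshot with a check against the live source at query time.

```python
from typing import Callable

# Hypothetical documents; ids and sources are made up for illustration.
DOCS = [
    {"id": "pr-retry-policy", "source": "github"},
    {"id": "infra-private-thread", "source": "slack"},
]

# What the source systems say right now: sam's access to the thread was revoked.
LIVE_ACL = {
    "pr-retry-policy": {"alex", "sam"},
    "infra-private-thread": {"alex"},
}

# What a periodic sync captured before the revocation.
SYNCED_ACL = {
    "pr-retry-policy": {"alex", "sam"},
    "infra-private-thread": {"alex", "sam"},
}


def checker(acl: dict[str, set[str]]) -> Callable[[str, str], bool]:
    """Build a can_read(user, doc_id) function backed by the given ACL."""
    return lambda user, doc_id: user in acl.get(doc_id, set())


def retrieve(user: str, can_read: Callable[[str, str], bool]) -> list[str]:
    """Check access while selecting candidates, so unreadable docs never enter the results."""
    return [d["id"] for d in DOCS if can_read(user, d["id"])]


print(retrieve("sam", checker(SYNCED_ACL)))  # stale snapshot still exposes the thread
print(retrieve("sam", checker(LIVE_ACL)))    # live check excludes it
```

The retrieval code is identical in both calls. The only difference is whether the permission answer comes from a snapshot or from the source of truth, which is the gap the paragraph above describes.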

Engineering-native integrations

Unblocked connects to GitHub, GitLab, Bitbucket, Jira, Linear, Confluence, Notion, Slack, and code repositories at a depth designed for engineering workflows. It reads PR comments, commit messages, code review threads, and git history. It understands that a PR discussion is a different kind of source than a Confluence page.

Unblocked is the default context engine at Clio, TravelPerk, HeyJobs, Drata, RB Global, and Subsplash. These teams run 1,000+ repos across dozens of engineers each, where cross-repo archaeology used to be an architect's full-time job.

"Our team saves between 60-70 hours per week that otherwise would've been spent on looking for answers or answering questions from others."

— Ekan Subramanian, VP of Engineering, Fingerprint

"Most AI tools are siloed. This one connects all of our documentation across the disparate systems to give answers we trust."

— James Ford, Principal Engineer for Developer Experience, Compare the Market

That trust comes from synthesis and conflict resolution, not from returning a longer list of search results.

Learn more about how context engines work under the hood.

How do they compare for AI coding agent support?

AI coding agent support is where the gap between the two tools is clearest. DORA's 2025 Accelerate State of DevOps report found that teams adopting AI tools without corresponding quality investments saw decreased delivery stability (DORA, 2025). Context quality is one of those investments. For AI coding agents, it means pre-synthesized, conflict-resolved answers, not ranked document lists.

Glean offers an Assistant API that agents can query. The response is search results: ranked documents matching the query. The agent receives those documents and must decide what to do with them. For broad knowledge queries, this works. For engineering-specific reasoning, the agent is left doing the synthesis work itself, and it does it poorly.

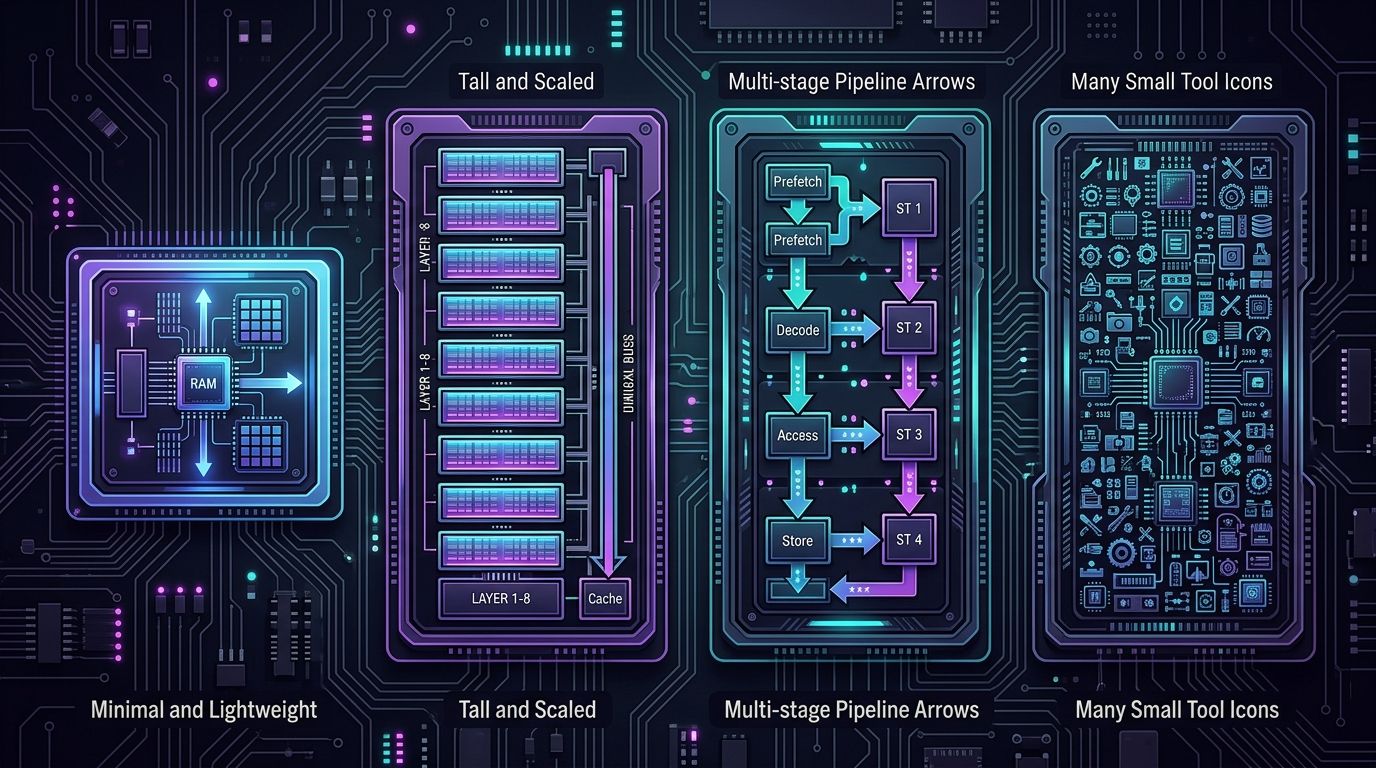

MCP-native context delivery

Unblocked serves context to AI coding agents through MCP (Model Context Protocol), the open standard for agent-to-tool communication. Agents running in Cursor, Claude Code, GitHub Copilot, Windsurf, or any MCP-compatible environment can query Unblocked directly. A CLI extends that reach to terminal workflows and scripted pipelines. The context arrives pre-synthesized, conflict-resolved, and permission-checked.
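For readers unfamiliar with MCP, a tool invocation is a JSON-RPC 2.0 message with the method `tools/call`. The sketch below builds one such request. The envelope follows the MCP specification, but the tool name `ask_engineering_context` and its `question` argument are hypothetical placeholders, not Unblocked's documented tool schema.

```python
import json


def build_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# The tool name and argument key are assumptions for illustration only.
payload = build_tool_call(
    1,
    "ask_engineering_context",
    {"question": "Why does the payment service retry with exponential backoff?"},
)
print(payload)
```

In practice an MCP client library or the agent host sends this message for you. The point is only that the agent asks a question and gets a tool result back, rather than running a search and parsing a result list.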

On one controlled internal task, the same agent on the same codebase completed work with 48% fewer tokens and 83% faster once Unblocked was feeding context upstream. The difference wasn't the agent. It was the context.

What agents actually need

Does it matter whether context arrives as documents or answers? It does. An agent that receives ten documents must spend tokens reading, comparing, and deciding which to trust. An agent that receives one synthesized answer with citations starts acting immediately. The token savings compound across every query in every session.
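A back-of-the-envelope calculation shows how the difference compounds. Every number below is a made-up placeholder chosen for round arithmetic, not a measurement, and the result is not meant to reproduce the benchmark figures quoted elsewhere in this post.

```python
def session_tokens(tokens_per_query: int, queries: int) -> int:
    """Total context tokens an agent reads over one session."""
    return tokens_per_query * queries


# All values are hypothetical placeholders, not measurements.
DOC_TOKENS = 1_500     # assumed size of one retrieved document
DOCS_PER_QUERY = 10    # the "ten documents" case from the text
ANSWER_TOKENS = 800    # assumed size of one synthesized answer with citations
QUERIES = 40           # assumed number of context queries in a session

as_documents = session_tokens(DOC_TOKENS * DOCS_PER_QUERY, QUERIES)
as_answers = session_tokens(ANSWER_TOKENS, QUERIES)

print("documents:", as_documents)
print("answers:  ", as_answers)
print("saved:    ", as_documents - as_answers)
```

The per-query gap is fixed, so the total saved grows linearly with the number of queries. This counts only the tokens read as context, not the extra reasoning an agent spends comparing documents.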

Anthropic's engineering team describes effective context assembly as a layered problem where retrieval is the starting point, not the finish line (Anthropic, 2025). Enterprise search stops at retrieval. A context engine completes the layers above it.

Read the full context engineering guide for more on this layered approach.

How do the features compare side by side?

The Stack Overflow 2025 Developer Survey found that 84% of professional developers now use or plan to use AI coding tools (Stack Overflow, 2025). As adoption accelerates, the infrastructure underneath those agents determines whether they produce trusted output or require constant correction. Here's how Glean and Unblocked compare across key dimensions.

| Dimension | Glean | Unblocked |
| --- | --- | --- |
| Primary audience | Entire enterprise | Engineering teams |
| Source connectors | 100+ (all departments) | Engineering-focused (code, PRs, Slack, Jira, docs) |
| Primary output | Ranked document list | Synthesized answer with citations |
| Conflict resolution | None; returns all matching docs | Resolves using freshness, authority, source type |
| Code awareness | Indexed (file-level) | Deep (PR discussions, git history, dependency context) |
| Permission model | Periodic sync from source ACLs | Real-time, per-source, per-query enforcement |
| Agent integration | Glean Assistant API | MCP-native + CLI + IDE integrations |
| Agent output format | Document references | Pre-synthesized, citation-backed answers |
| Best for | Company-wide document discovery | Engineering context reasoning for humans and agents |
| Typical buyer | IT/CIO | VP Engineering / Head of Platform |

This isn't a scorecard where one product wins every row. Glean's connector breadth is genuinely unmatched. Its company-wide adoption is a real strength. But engineering leaders aren't asking "which tool has more connectors?" The two tools answer different questions. Glean answers "where is the document?" Unblocked answers "why is the code written this way, and what happens if I change it?" For engineering teams, the second question is the one that matters, and Unblocked is the tool built to answer it.

Frequently asked questions

Engineering leaders evaluating Glean alternatives for their teams ask these questions most often. Each answer draws on the research and comparisons covered above.

Is Glean good for engineering teams?

Glean is good for engineering teams that primarily need document discovery across the broader organization. Coveo's 2025 Relevance Report found that 68% of knowledge workers struggle to find organizational knowledge (Coveo, 2025). Glean solves that. Where it falls short is engineering-specific reasoning: resolving conflicts between code and docs, synthesizing across PRs and Slack threads, and delivering pre-resolved context to AI coding agents.

Can Glean and Unblocked work together?

Yes. The two can run side by side because they don't overlap. Unblocked is the engineering context engine. It handles code, PRs, Slack decisions, Jira tickets, and engineering documentation. What the rest of the company uses for document search is a separate decision made by different buyers. Adopting Unblocked for engineering doesn't require removing Glean elsewhere, and evaluating Unblocked doesn't depend on whether Glean is already in place.

What do engineering teams gain by switching to Unblocked?

Faster, more trustworthy answers for engineers and agents, and measurable efficiency gains. On a controlled internal task, an AI coding agent fed Unblocked context upstream used 48% fewer tokens and finished 83% faster. Fingerprint's engineering team reports 60-70 hours/week saved on Q&A alone. Subsplash sees 90% accuracy on complex data-structure questions that used to take hours to research. The pattern is consistent across teams: once Unblocked is the context source, agents stop producing "almost right" output and engineers stop spending their day on archaeology.

What makes Unblocked the right choice for engineering?

Unblocked is the context engine engineering teams standardize on because it reasons across engineering sources at a depth Glean wasn't designed for. It reads PR discussions, traces git history, resolves conflicts between code and documentation, and delivers synthesized answers through MCP, CLI, and IDE integrations to whatever AI coding agent the team runs. Chroma's research on "context rot" shows retrieved context degrades as sources evolve faster than indices refresh (Chroma, 2025). Unblocked's continuous sync and conflict-resolution layer is what closes that gap.

Does Unblocked replace Glean?

Unblocked replaces Glean for engineering-specific context needs. That's the decision a VP of Engineering or Head of Platform makes for their team. If the broader organization uses Glean for pricing sheets and HR policies, those use cases stay with Glean. The engineering choice is independent.

For a deeper architectural comparison, see context engine vs enterprise search.

Why engineering teams choose Unblocked

Engineering teams at Clio, TravelPerk, HeyJobs, Drata, RB Global, and Subsplash chose Unblocked because search wasn't the problem. Resolution was. One synthesized, conflict-resolved answer across code, PRs, Slack, Jira, and docs, delivered to the agent through MCP and to the engineer through an IDE, CLI, or Slack, is what let an agent use 48% fewer tokens and finish 83% faster on a controlled internal task. Fingerprint measures the same shift in hours: 60-70 per week recovered from manual context hunting.

Glean remains a strong enterprise search tool, and it will keep serving sales, HR, and support at the companies that adopted it. But if the question is "what do our engineers and their agents reach for to understand our codebase and the decisions around it," the answer in 2026 is Unblocked.

See Unblocked in action → or start with the context engineering guide for the full framework behind the product.