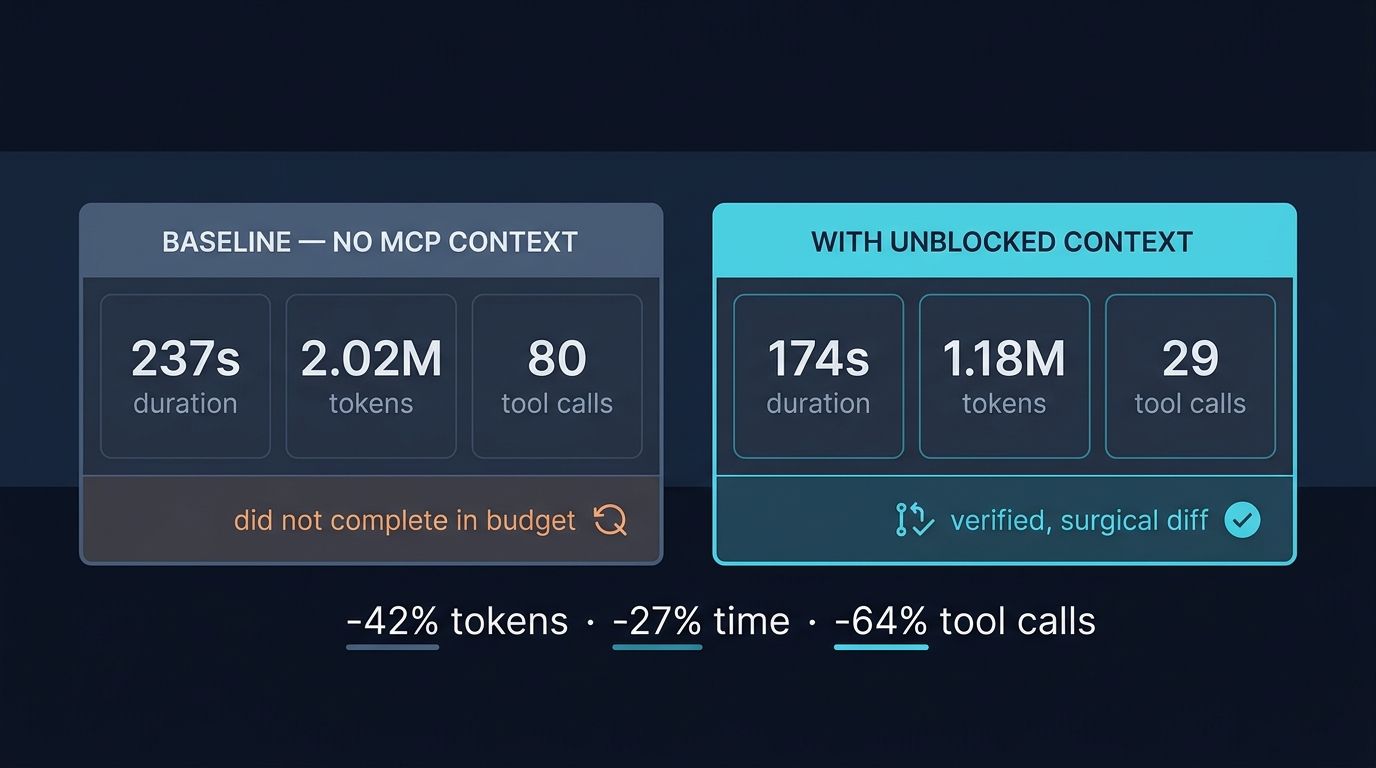

Key insights:

• 42% fewer tokens at the same task (1.18M vs 2.02M)

• 27% faster end-to-end (174s vs 237s)

• 64% fewer tool calls (29 vs 80): the agent stopped exploring and started shipping

• Blind LLM judge scored 41/50 vs 24/50, with the largest gaps on Implementation (9 vs 3) and Efficiency (8 vs 2)

• The enhanced run shipped a verified, surgical diff; the baseline did not complete the task within its turn budget

Same prompt. Same model. Same codebase. In a controlled A/B test on 2026-04-16, an AI coding agent with Unblocked context used 42% fewer tokens, ran 27% faster, made 64% fewer tool calls, and scored 41 out of 50 with a blind LLM judge, versus 24 out of 50 for the same agent with no MCP context attached. The methodology, the numbers, and the open-source tool you can run on your own codebase are below.

Engineering leaders are weighing context engines on vendor claims with no way to verify them. That is a problem, because the vendor pitch deck and the production reality often disagree. This controlled test of AI agent context was designed to close that gap with primary data: blind judging, randomized labels, identical task, identical model, identical repository. It is not a marketing exercise. It is a method any team can run to get its own number.

Why run a controlled test on agent context?

Trust in AI coding tools is fragile. The Stack Overflow Developer Survey 2025 found more developers actively distrust the accuracy of AI tools than trust them, 46% versus 33%, and 66% are frustrated by "AI solutions that are almost right, but not quite." That gap between adoption and trust is the reason a controlled test of agent context matters.

The trust gap is not anecdotal. The JetBrains State of Developer Ecosystem 2025 reports that 99% of developers express some concern about AI in coding. The DORA 2025 State of AI-Assisted Software Development finds roughly 90% of developers use AI assistance, but only about one in four trust the outputs deeply. Adoption without governance correlates with higher instability and increased rework.

Vendor claims do not close that gap. Primary data does. Engineering leaders evaluating a context engine need to see tokens, time, tool calls, and output quality on their own codebases, judged blindly. That is the bar a controlled test of agent context has to clear. For the conceptual setup, see decision-grade context.

How was the experiment set up?

The methodology was deliberately boring. One task, run twice, with one variable changed. The task was to implement a user-preference toggle in a code-review product, paraphrased here as "add a toggle so a code-review product can automatically re-review an open pull request when new commits are pushed." Both runs used the identical prompt, the same model (claude-sonnet-4-6), and the same production engineering repository.

The two arms

The baseline run had zero MCP servers attached. The agent had only the model, the file system, and a shell. The enhanced run had exactly one MCP server attached: Unblocked, providing decision-grade context for coding agents across PRs, Slack, Jira, Notion, Confluence, and code repos. Nothing else changed. Same prompt, same temperature, same turn budget.
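The "one variable changed" rule is easy to check mechanically before a run. The sketch below is illustrative only: `ArmConfig`, its field names, the repository placeholder, and the turn budget of 50 are assumptions made for this example, not the schema that unblocked-compare uses.

```python
from dataclasses import dataclass, fields
from typing import List, Tuple


@dataclass(frozen=True)
class ArmConfig:
    """Hypothetical description of one arm of the A/B test."""
    prompt: str
    model: str
    repo: str
    turn_budget: int
    mcp_servers: Tuple[str, ...]


def differing_fields(a: ArmConfig, b: ArmConfig) -> List[str]:
    """Names of the fields whose values differ between two arms."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


PROMPT = "add a toggle to re-review an open pull request on new commits"  # paraphrase

baseline = ArmConfig(
    prompt=PROMPT,
    model="claude-sonnet-4-6",
    repo="production-repo",   # placeholder name
    turn_budget=50,           # assumed: the exact budget is not stated in the write-up
    mcp_servers=(),           # zero MCP servers attached
)
enhanced = ArmConfig(
    prompt=PROMPT,
    model="claude-sonnet-4-6",
    repo="production-repo",
    turn_budget=50,
    mcp_servers=("unblocked",),  # exactly one MCP server attached
)

# A controlled comparison should differ in exactly one field.
changed = differing_fields(baseline, enhanced)
print(changed)
```

If `changed` ever lists more than the MCP field, the comparison is no longer measuring context alone.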

The blind judge

Outputs from both sessions were submitted to a single LLM judge with randomized session labels. The judge did not know which session used Unblocked. It scored each on Understanding, Implementation, Context Awareness, Risk Awareness, and Efficiency, ten points per category, fifty total. Blind labeling is the credibility move that distinguishes a real controlled test from a vendor benchmark.
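Randomized labeling can be sketched in a few lines. This is a minimal illustration of the idea, not the harness's actual implementation: the function name `blind_labels`, the "Session A/B" label format, and the seed parameter are all assumptions.

```python
import random
from typing import Dict, Optional, Tuple


def blind_labels(
    sessions: Dict[str, str], seed: Optional[int] = None
) -> Tuple[Dict[str, str], Dict[str, str]]:
    """Hide arm names behind neutral labels in random order.

    Returns (blinded, key): `blinded` maps a neutral label to the session
    output shown to the judge; `key` maps the label back to the arm name
    and is only consulted after scoring.
    """
    rng = random.Random(seed)
    arms = list(sessions)
    rng.shuffle(arms)  # random order, so the label carries no information
    labels = [f"Session {chr(ord('A') + i)}" for i in range(len(arms))]
    blinded = {label: sessions[arm] for label, arm in zip(labels, arms)}
    key = dict(zip(labels, arms))
    return blinded, key


sessions = {"baseline": "<baseline transcript>", "enhanced": "<enhanced transcript>"}
blinded, key = blind_labels(sessions)
# The judge sees only `blinded`; scores are mapped back through `key` afterwards.
```

Keeping the key separate from the judge prompt is the whole design: nothing the judge reads should name the arm.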

The tool

The harness is unblocked-compare v0.1.0, an open-source CLI on GitHub at github.com/unblocked/unblocked-compare. It captures duration, token counts, tool-call counts, turns, and final outputs from each arm, then runs the blind judge step. Anyone can clone it. Methodology is the point. The architecture under it is covered in building a context engine on MCP.

What were the numbers?

The headline number from this controlled test is 42% fewer total tokens for the same task, on the same model, on the same codebase. That delta sits inside a broader 40-50% token-savings range, with 25-85% time savings, that we have measured across other internal tasks. One run is not a population, but the direction is consistent.

| Metric | Baseline (no MCP) | Enhanced (Unblocked MCP) | Delta |
| --- | --- | --- | --- |
| Duration | 237s | 174s | 27% faster |
| Input tokens | 2,006,324 | 1,172,859 | 42% fewer |
| Output tokens | 10,241 | 4,654 | 55% fewer |
| Total tokens | 2,016,565 | 1,177,513 | 42% fewer |
| Tool calls | 80 | 29 | 64% fewer |
| Turns | 50 | 19 | 62% fewer |
| Blind judge score | 24/50 | 41/50 | +17 points |
| Implementation score | 3/10 | 9/10 | +6 |
| Efficiency score | 2/10 | 8/10 | +6 |
| Outcome | did not complete in turn budget | shipped a verified, surgical diff | qualitative |

Output tokens dropped 55%, a sharper cut than input. That matters because output tokens are where models commit to action. Fewer output tokens with a higher judge score means the agent talked less and shipped more. Tool calls fell from 80 to 29. Turns fell from 50 to 19. The agent stopped exploring and started executing. For why MCP alone is necessary but not sufficient, see why MCP servers aren't enough.
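The percentages in the table can be reproduced from the raw counts. The snippet below is a small arithmetic check, with variable and function names chosen for this example; it assumes each delta is the relative reduction against the baseline, rounded to the nearest whole percent.

```python
def pct_reduction(baseline: float, enhanced: float) -> int:
    """Relative reduction versus the baseline, as a whole percent."""
    return round((baseline - enhanced) / baseline * 100)


# (baseline, enhanced) pairs copied from the results table.
runs = {
    "duration_s": (237, 174),
    "input_tokens": (2_006_324, 1_172_859),
    "output_tokens": (10_241, 4_654),
    "tool_calls": (80, 29),
    "turns": (50, 19),
}

# Total tokens are the sum of input and output tokens for each arm.
runs["total_tokens"] = (
    runs["input_tokens"][0] + runs["output_tokens"][0],
    runs["input_tokens"][1] + runs["output_tokens"][1],
)

deltas = {name: pct_reduction(b, e) for name, (b, e) in runs.items()}
for name, pct in deltas.items():
    print(f"{name}: {pct}% lower")
```

Running the same function over your own harness output gives directly comparable numbers.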

Why did the baseline burn 80 tool calls?

The baseline paid an exploration tax. Without institutional context, the agent grep-walked the stack, opened dozens of files, and tried to reverse-engineer conventions from raw source. It found genuine inconsistencies in generated TypeScript versus YAML and Kotlin sync, then started scope-creeping into adjacent fixes. It used 50 turns and trailed off mid-investigation, never landing a code change.

This is exactly the failure mode Chroma Research's context rot study (Jul 2025) documents across 18 LLMs, including Claude 4, GPT-4.1, Gemini 2.5, and Qwen3. Model performance varies significantly as input length changes, even on simple tasks. The longer the agent explores, the worse its recall of what it already saw. Long context becomes its own enemy.

Anthropic's effective context engineering for AI agents (Sep 2025) names the same problem directly: "as the number of tokens in the context window increases, the model's ability to accurately recall information from that context decreases." The baseline was not failing because the model is weak. It was failing because it had no way to skip the exploration. This is the satisfaction-of-search dynamic explored in satisfaction of search, amplified by missing institutional memory.

Why does context produce higher-quality output?

Context produces higher-quality output because it lets the agent skip exploration and identify minimal scope. The enhanced run made one MCP research_task call to orient, then made surgical changes: a default value added to a preferences-state hook, and one toggle row added to a settings UI component. It ran tsc --noEmit and oxlint, both of which came back clean, then presented a verified git diff.

The blind judge scored Implementation 9/10 for the enhanced run versus 3/10 for the baseline. Six points on a ten-point category is not noise. It is the difference between code that ships and an investigation that trails off. The enhanced agent followed existing conventions in the repository, because Unblocked surfaced those conventions as institutional context: prior PRs, the relevant Slack threads, the design notes in Notion.

Anthropic's code execution with MCP (Nov 2025) describes this efficiency pattern at a system level: when the agent can call the right tool with the right context the first time, the loop tightens. The pattern repeats: institutional context lets the agent skip the grep-walk, recognize the minimal change, and validate before it ships. This is what a controlled test on a real codebase exposes that a synthetic benchmark cannot.

What did the blind judge see?

The blind judge saw two sessions with randomized labels and ranked them by category. Understanding was close (8 vs 7). Context Awareness and Risk Awareness each had two-point gaps in the enhanced run's favor (8 vs 6 in both categories). Implementation and Efficiency were not close. The judge gave the enhanced run 9 and 8, the baseline 3 and 2.

| Category | Baseline | Enhanced | Delta |
| --- | --- | --- | --- |
| Understanding | 7/10 | 8/10 | +1 |
| Implementation | 3/10 | 9/10 | +6 |
| Context Awareness | 6/10 | 8/10 | +2 |
| Risk Awareness | 6/10 | 8/10 | +2 |
| Efficiency | 2/10 | 8/10 | +6 |
| Total | 24/50 | 41/50 | +17 |
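The rubric arithmetic is simple enough to verify by hand, and a short check makes the totals and the widest gaps explicit. The scores below are copied from the table; the helper name `total` and the validation rules are assumptions of this sketch, not part of the judge itself.

```python
CATEGORIES = [
    "Understanding",
    "Implementation",
    "Context Awareness",
    "Risk Awareness",
    "Efficiency",
]

baseline = {"Understanding": 7, "Implementation": 3, "Context Awareness": 6,
            "Risk Awareness": 6, "Efficiency": 2}
enhanced = {"Understanding": 8, "Implementation": 9, "Context Awareness": 8,
            "Risk Awareness": 8, "Efficiency": 8}


def total(scores: dict) -> int:
    """Sum a score sheet after checking it covers the rubric, 0-10 per category."""
    if set(scores) != set(CATEGORIES):
        raise ValueError("score sheet does not match the rubric categories")
    if any(not 0 <= value <= 10 for value in scores.values()):
        raise ValueError("each category is scored from 0 to 10")
    return sum(scores.values())


gaps = {c: enhanced[c] - baseline[c] for c in CATEGORIES}
largest = max(gaps.values())
widest = [c for c in CATEGORIES if gaps[c] == largest]

print(total(baseline), total(enhanced))  # totals out of 50
print(widest, largest)                   # categories with the widest gap
```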

The judge's verdict, verbatim:

"Enhanced (Unblocked) is clearly superior. It correctly identified the minimal scope of work, delivered a complete and verified implementation following existing patterns... Baseline over-explored the codebase, began scope-creeping into unrelated fixes, and ultimately failed to deliver a completed implementation, its final response trails off mid-investigation without presenting actual code changes."

Methodology matters here. The judge was a single LLM call evaluating both sessions blindly, with randomized session labels. It did not know which session used Unblocked. That is the credibility move that separates this from typical vendor benchmarks. For the buyer-side checklist on what to demand of any context engine evaluation, see how to evaluate a context engine.

What does this mean for engineering leaders?

It means three concrete shifts in your AI tooling economics. First, lower token spend per agent task at the platform layer. Second, faster developer feedback loops, because 174 seconds beats 237 seconds and a finished diff beats a half-explored investigation. Third, higher first-pass merge rate, because the output is cleaner, smaller, and follows existing conventions.

Completion is one consequence here, not the only one. Token savings, time savings, and tool-call reduction all compound across thousands of agent tasks per week. But a baseline that burns through its turn budget and trails off mid-investigation is worse than one that is just slow. Slow finishes. Mid-investigation does not. That is the hidden cost of running agents without institutional context.
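To see how per-task savings compound, here is a back-of-the-envelope sketch. The per-task figures come from this single run; the weekly task count of 1,000 is a hypothetical input, and extrapolating one run to a whole workload is an illustration, not a measurement.

```python
# Per-task savings measured in this single run (from the results table).
TOKENS_SAVED_PER_TASK = 2_016_565 - 1_177_513  # total tokens, baseline minus enhanced
SECONDS_SAVED_PER_TASK = 237 - 174             # duration, baseline minus enhanced


def weekly_savings(tasks_per_week: int) -> dict:
    """Scale the single-run savings linearly by a weekly task count."""
    return {
        "tokens": TOKENS_SAVED_PER_TASK * tasks_per_week,
        "hours": SECONDS_SAVED_PER_TASK * tasks_per_week / 3600,
    }


# Hypothetical workload: 1,000 agent tasks per week.
example = weekly_savings(1_000)
print(f"{example['tokens']:,} tokens and {example['hours']:.1f} hours per week")
```

Replace the constants with the deltas from your own run before drawing any budget conclusions.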

The exploration tax is a satisfaction-of-search problem amplified by missing context. The agent keeps searching because it never feels confident it has seen enough. Unblocked, as the first MCP server queried by the engineering teams that run it in production, gives the agent that confidence early, so it can move from exploration to execution. The Stanford Legal RAG Hallucinations study makes a parallel point in another domain: retrieval-grounded LLM systems still hallucinate on 17 to 34 percent of legal queries. Grounding is not optional. It is the system.

Run this on your codebase

The harness is open source. Clone unblocked-compare, point it at your own production repository, write a real task in your domain, and run the same prompt with and without Unblocked attached. The CLI captures the same metrics: duration, token counts, tool-call counts, turns, judge scores. You will see your own numbers within an hour.

The MCP ecosystem makes this kind of test easy to set up. The GitHub Octoverse 2025 reports that MCP reached 37,000 GitHub stars in eight months and 1.1M public repositories now use an LLM SDK. The Pragmatic Engineer's MCP coverage (Apr 2025) frames MCP as "the USB-C port of AI applications," and the MCP 2026 roadmap prioritizes stateless Streamable HTTP at scale, agent-task lifecycle, governance, and gateway patterns. The plumbing is there. The question is what context flows through it.

Unblocked also ships a CLI alongside its MCP, IDE, and Slack surfaces, so you can run this kind of controlled test from the same machine you use for daily engineering work. If your numbers match ours, you have your answer. If they do not, we want to know. Either way, you have primary data instead of a vendor claim. That is the context engine for engineering: context infrastructure that ships completed work, not partial investigations.